Qwen-7B 🤖 | 🤗 | Qwen-7B-Chat 🤖 | 🤗 | Demo | Report

We open-source Qwen-7B and Qwen-7B-Chat on both 🤖 ModelScope and 🤗 Hugging Face (click the logos at the top to visit the repos with code and checkpoints). This repo includes a brief introduction to Qwen-7B, usage guidance, and a link to a technical memo that provides more information.

Qwen-7B is the 7B-parameter version of Qwen (abbr. Tongyi Qianwen), the large language model series proposed by Alibaba Cloud. Qwen-7B is a Transformer-based large language model pretrained on a large volume of data, including web text, books, and code. Based on the pretrained Qwen-7B, we also release Qwen-7B-Chat, a large-model-based AI assistant trained with alignment techniques. The features of the Qwen-7B series include:

- Trained with high-quality pretraining data. We pretrained Qwen-7B on a self-constructed, large-scale, high-quality dataset of over 2.2 trillion tokens. The dataset includes plain text and code, and covers a wide range of domains, including both general-domain and professional-domain data.

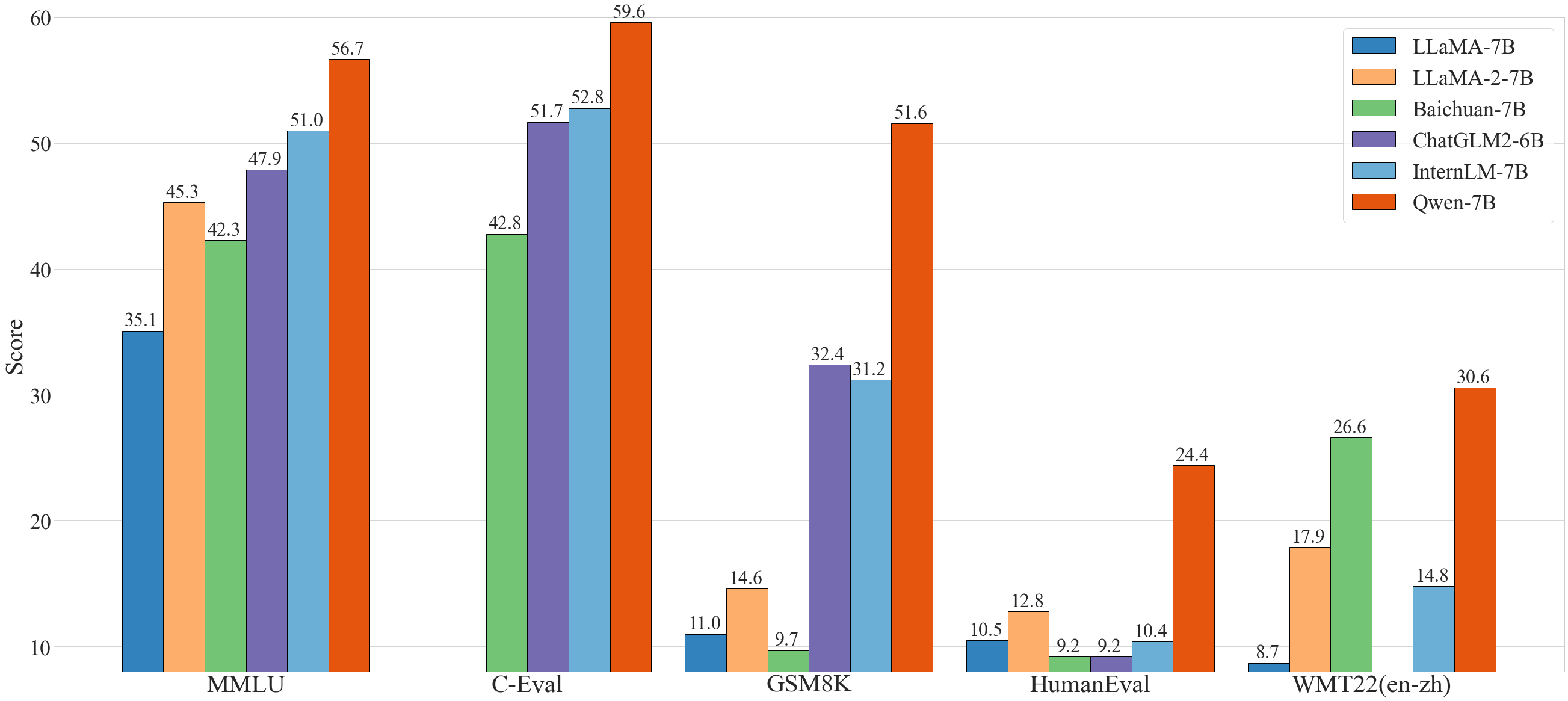

- Strong performance. Compared with models of similar size, Qwen-7B outperforms its competitors on a series of benchmark datasets that evaluate natural language understanding, mathematics, coding, etc.

- Better support of languages. Our tokenizer, built on a large vocabulary of over 150K tokens, is more efficient than other tokenizers. It is friendly to many languages and makes it easier for users to further finetune Qwen-7B to extend its understanding of a particular language.

- Support of 8K context length. Both Qwen-7B and Qwen-7B-Chat support a context length of 8K, which allows inputs with long contexts.

- Support of plugins. Qwen-7B-Chat is trained with plugin-related alignment data, so it is capable of using tools, including APIs, models, and databases, and of acting as an agent.

The following sections include information that you might find helpful. In particular, we advise you to read the FAQ section before you open an issue.

- 2023.8.3 We release both Qwen-7B and Qwen-7B-Chat on ModelScope and Hugging Face. We also provide a technical memo for more details about the model, including training details and model performance.

In general, Qwen-7B outperforms baseline models of similar size, and even outperforms larger models of around 13B parameters, on a series of benchmark datasets, e.g., MMLU, C-Eval, GSM8K, HumanEval, and WMT22, which evaluate the models' capabilities in natural language understanding, mathematical problem solving, coding, etc. See the results below.

| Model | MMLU | C-Eval | GSM8K | HumanEval | WMT22 (en-zh) |
|---|---|---|---|---|---|
| LLaMA-7B | 35.1 | - | 11.0 | 10.5 | 8.7 |
| LLaMA 2-7B | 45.3 | - | 14.6 | 12.8 | 17.9 |
| Baichuan-7B | 42.3 | 42.8 | 9.7 | 9.2 | 26.6 |
| ChatGLM2-6B | 47.9 | 51.7 | 32.4 | 9.2 | - |
| InternLM-7B | 51.0 | 52.8 | 31.2 | 10.4 | 14.8 |
| Baichuan-13B | 51.6 | 53.6 | 26.6 | 12.8 | 30.0 |
| LLaMA-13B | 46.9 | 35.5 | 17.8 | 15.8 | 12.0 |
| LLaMA 2-13B | 54.8 | - | 28.7 | 18.3 | 24.2 |
| ChatGLM2-12B | 56.2 | 61.6 | 40.9 | - | - |
| Qwen-7B | 56.7 | 59.6 | 51.6 | 24.4 | 30.6 |

Additionally, according to the third-party evaluation of large language models conducted by OpenCompass, Qwen-7B and Qwen-7B-Chat are the top 7B-parameter models. This evaluation consists of a large number of public benchmarks covering language understanding and generation, coding, mathematics, reasoning, etc.

For more experimental results (detailed model performance on more benchmark datasets) and details, please refer to our technical memo.

- Python 3.8 or above

- PyTorch 1.12 or above (2.0 or above is recommended)

- CUDA 11.4 or above is recommended (this is for GPU users, flash-attention users, etc.)
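A quick way to confirm an environment against the list above is to compare version strings programmatically. The sketch below is only an illustration: the helper names `parse_version`, `meets_minimum`, and `check_environment` are hypothetical, the minimums are copied from the list, and `torch` is imported lazily so the check still runs where PyTorch is not yet installed.

```python
import re
import sys

# Minimum versions copied from the requirements list above.
MIN_PYTHON = (3, 8)
MIN_TORCH = (1, 12)


def parse_version(text):
    """Turn '2.0.1+cu118' into (2, 0, 1); non-numeric suffixes are ignored."""
    parts = []
    for piece in text.split("+")[0].split("."):
        match = re.match(r"\d+", piece)
        if match is None:
            break
        parts.append(int(match.group()))
    return tuple(parts)


def meets_minimum(version_text, minimum):
    """True if the dotted version string is at least `minimum` (a tuple)."""
    return parse_version(version_text) >= minimum


def check_environment():
    """Return a dict of boolean check results (hypothetical helper)."""
    results = {"python": sys.version_info[:2] >= MIN_PYTHON}
    try:
        import torch  # only inspected if it is installed

        results["torch"] = meets_minimum(torch.__version__, MIN_TORCH)
        results["cuda_available"] = torch.cuda.is_available()
    except ImportError:
        results["torch"] = False
    return results


if __name__ == "__main__":
    for name, ok in check_environment().items():
        print(f"{name}: {ok}")
```

The CUDA version itself is not checked here; `torch.cuda.is_available()` only reports whether PyTorch can see a usable GPU.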

Below, we provide simple examples to show how to use Qwen-7B with 🤖 ModelScope and 🤗 Transformers.

Before running the code, make sure you meet the above requirements and have set up the environment, and then install the dependent libraries.

```bash
pip install -r requirements.txt
```

If your device supports fp16 or bf16, we recommend installing flash-attention for higher efficiency and lower memory usage. (flash-attention is optional, and the project runs normally without it.)

```bash
git clone -b v1.0.8 https://github.com/Dao-AILab/flash-attention
cd flash-attention && pip install .
# Below are optional. Installing them might be slow.
# pip install csrc/layer_norm
# pip install csrc/rotary
```

Now you can start with ModelScope or Transformers.

To use Qwen-7B-Chat for inference, all you need is a few lines of code, as demonstrated below. Please make sure that you are using the latest code.

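```python
from transformers import AutoModelForCausalLM, AutoTokenizer
from transformers.generation import GenerationConfig

# Note: The default behavior now has injection attack prevention off.
tokenizer = AutoTokenizer.from_pretrained("Qwen/Qwen-7B-Chat", trust_remote_code=True)

# use bf16
# model = AutoModelForCausalLM.from_pretrained("Qwen/Qwen-7B-Chat", device_map="auto", trust_remote_code=True, bf16=True).eval()
# use fp16
# model = AutoModelForCausalLM.from_pretrained("Qwen/Qwen-7B-Chat", device_map="auto", trust_remote_code=True, fp16=True).eval()
# use cpu only
# model = AutoModelForCausalLM.from_pretrained("Qwen/Qwen-7B-Chat", device_map="cpu", trust_remote_code=True).eval()
# use auto mode, automatically select precision based on the device.
model = AutoModelForCausalLM.from_pretrained("Qwen/Qwen-7B-Chat", device_map="auto", trust_remote_code=True).eval()

# Specify hyperparameters for generation
model.generation_config = GenerationConfig.from_pretrained("Qwen/Qwen-7B-Chat", trust_remote_code=True)

# The prompts below are in Chinese; the replies are shown in English translation.

# 1st dialogue turn (prompt: "Hello")
response, history = model.chat(tokenizer, "你好", history=None)
print(response)
# Hello! I'm glad to help you.

# 2nd dialogue turn (prompt: "Tell me a story about a young person who works hard
# to start a business and eventually succeeds.")
response, history = model.chat(tokenizer, "给我讲一个年轻人奋斗创业最终取得成功的故事。", history=history)
print(response)
# This is a story about a young person who worked hard to start a business and eventually succeeded.
# The protagonist is Li Ming. He comes from an ordinary family, and both of his parents are ordinary workers. From a young age, Li Ming set himself a goal: to become a successful entrepreneur.
# To achieve this goal, Li Ming studied hard and was admitted to university. During university, he actively took part in various entrepreneurship competitions and won quite a few awards. He also used his spare time for internships and gained valuable experience.
# After graduating, Li Ming decided to start his own business. He began looking for investment, but was rejected many times. However, he did not give up. He kept working hard, continually improved his business plan, and looked for new investment opportunities.
# Eventually, Li Ming secured an investment and started his business. He founded a technology company focused on developing new types of software. Under his leadership, the company grew rapidly and became a successful technology enterprise.
# Li Ming's success was no accident. He was diligent, resilient, and willing to take risks, and he kept learning and improving himself. His success also shows that anyone can succeed as long as they work hard.

# 3rd dialogue turn (prompt: "Give this story a title")
response, history = model.chat(tokenizer, "给这个故事起一个标题", history=history)
print(response)
# "Striving to Start a Business: A Young Person's Road to Success"
```

Running the Qwen-7B pretrained base model is also simple.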

Running Qwen-7B

```python
from transformers import AutoModelForCausalLM, AutoTokenizer
from transformers.generation import GenerationConfig

tokenizer = AutoTokenizer.from_pretrained("Qwen/Qwen-7B", trust_remote_code=True)

# use bf16
# model = AutoModelForCausalLM.from_pretrained("Qwen/Qwen-7B", device_map="auto", trust_remote_code=True, bf16=True).eval()
# use fp16
# model = AutoModelForCausalLM.from_pretrained("Qwen/Qwen-7B", device_map="auto", trust_remote_code=True, fp16=True).eval()
# use cpu only
# model = AutoModelForCausalLM.from_pretrained("Qwen/Qwen-7B", device_map="cpu", trust_remote_code=True).eval()
# use auto mode, automatically select precision based on the device.
model = AutoModelForCausalLM.from_pretrained("Qwen/Qwen-7B", device_map="auto", trust_remote_code=True).eval()

# Specify hyperparameters for generation
model.generation_config = GenerationConfig.from_pretrained("Qwen/Qwen-7B", trust_remote_code=True)

# Few-shot completion prompt in Chinese: "The capital of Mongolia is Ulaanbaatar /
# The capital of Iceland is Reykjavik / The capital of Ethiopia is"
inputs = tokenizer('蒙古国的首都是乌兰巴托(Ulaanbaatar)\n冰岛的首都是雷克雅未克(Reykjavik)\n埃塞俄比亚的首都是', return_tensors='pt')
inputs = inputs.to(model.device)
pred = model.generate(**inputs)
print(tokenizer.decode(pred.cpu()[0], skip_special_tokens=True))
# 蒙古国的首都是乌兰巴托(Ulaanbaatar)\n冰岛的首都是雷克雅未克(Reykjavik)\n埃塞俄比亚的首都是亚的斯亚贝巴(Addis Ababa)...
# (the model continues the prompt with "Addis Ababa")
```

ModelScope is an open-source platform for Model-as-a-Service (MaaS), which provides flexible and cost-effective model services to AI developers. Similarly, you can run the models with ModelScope as shown below:

```python
from modelscope.pipelines import pipeline
from modelscope.utils.constant import Tasks
from modelscope import snapshot_download

model_id = 'QWen/qwen-7b-chat'
revision = 'v1.0.0'

# Download the checkpoint and build a chat pipeline on top of it
model_dir = snapshot_download(model_id, revision)
pipe = pipeline(
    task=Tasks.chat, model=model_dir, device_map='auto')

history = None

# 1st turn (prompt: "Where is the capital of Zhejiang province?")
text = '浙江的省会在哪里?'
results = pipe(text, history=history)
response, history = results['response'], results['history']
print(f'Response: {response}')

# 2nd turn (prompt: "What fun places does it have?")
text = '它有什么好玩的地方呢?'
results = pipe(text, history=history)
response, history = results['response'], results['history']
print(f'Response: {response}')
```

Our tokenizer, based on tiktoken, differs from other tokenizers such as the sentencepiece tokenizer. You need to pay attention to special tokens, especially when finetuning. For more detailed information on the tokenizer and its use in fine-tuning, please refer to the documentation.

We provide examples to show how to load models in NF4 and Int8. To start, make sure you have installed bitsandbytes. Note that the requirements for bitsandbytes are:

**Requirements** Python >=3.8. Linux distribution (Ubuntu, MacOS, etc.) + CUDA > 10.0.

Then run the following command to install bitsandbytes:

```bash
pip install bitsandbytes
```

Windows users should find another option, which might be bitsandbytes-windows-webui.

Then you only need to add your quantization configuration to AutoModelForCausalLM.from_pretrained. See the example below:

```python
import torch
from transformers import AutoModelForCausalLM, BitsAndBytesConfig

# Two alternative configurations follow. As written, the later assignment wins,
# so keep only the one you need.

# quantization configuration for NF4 (4 bits)
quantization_config = BitsAndBytesConfig(
    load_in_4bit=True,
    bnb_4bit_quant_type='nf4',
    bnb_4bit_compute_dtype=torch.bfloat16
)

# quantization configuration for Int8 (8 bits)
quantization_config = BitsAndBytesConfig(load_in_8bit=True)

# args.checkpoint_path and max_memory are expected to be defined by the calling script
model = AutoModelForCausalLM.from_pretrained(
    args.checkpoint_path,
    device_map="cuda:0",
    quantization_config=quantization_config,
    max_memory=max_memory,
    trust_remote_code=True,
).eval()
```

With this method, you can load Qwen-7B in NF4 or Int8, which reduces memory usage. We provide related statistics of model performance below. We find that quantization slightly degrades effectiveness but significantly reduces memory costs.

| Precision | MMLU | GPU Memory for Loading Model |
|---|---|---|
| BF16 | 56.7 | 16.38G |
| Int8 | 52.8 | 10.44G |
| NF4 | 48.9 | 7.79G |

Note: The GPU memory usage profiling in the above table is performed on a single A100-SXM4-80G GPU, with PyTorch 2.0.1 and CUDA 11.8, with flash attention used.
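To make the trade-off in the table easier to read, the short sketch below expresses each precision relative to BF16. The numbers are copied from the table; the dictionary `RESULTS` and the helper `relative_to_bf16` are hypothetical names used only for this illustration.

```python
# (MMLU score, GPU memory in GB for loading the model), copied from the table above.
RESULTS = {
    "BF16": (56.7, 16.38),
    "Int8": (52.8, 10.44),
    "NF4": (48.9, 7.79),
}


def relative_to_bf16(precision):
    """Return (MMLU points lost, fraction of load memory saved) versus BF16."""
    base_mmlu, base_mem = RESULTS["BF16"]
    mmlu, mem = RESULTS[precision]
    return base_mmlu - mmlu, 1.0 - mem / base_mem


for name in RESULTS:
    lost, saved = relative_to_bf16(name)
    print(f"{name}: {lost:.1f} MMLU points lost, {saved:.1%} less load memory")
```

By these figures, Int8 gives up 3.9 MMLU points for roughly 36% less load memory, and NF4 gives up 7.8 points for roughly 52% less.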

We measured the average inference speed of generating 2K tokens under BF16 precision and Int8 or NF4 quantization levels, respectively.

| Quantization Level | Inference Speed with flash_attn (tokens/s) | Inference Speed w/o flash_attn (tokens/s) |
|---|---|---|
| BF16 (no quantization) | 30.06 | 27.55 |
| Int8 (bnb) | 7.94 | 7.86 |
| NF4 (bnb) | 21.43 | 20.37 |

In detail, the profiling setting is generating 2048 new tokens with 1 context token. The profiling runs on a single A100-SXM4-80G GPU with PyTorch 2.0.1 and CUDA 11.8. The inference speed is averaged over the generated 2048 tokens.
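Throughput figures are easier to reason about as wall-clock time. The sketch below converts the measured tokens/s into the approximate time needed for the 2048-token generation described above; the names `SPEED` and `seconds_to_generate` are hypothetical, and the result only restates the table under the same hardware assumptions.

```python
# (tokens/s with flash_attn, tokens/s without flash_attn), copied from the table above.
SPEED = {
    "BF16": (30.06, 27.55),
    "Int8": (7.94, 7.86),
    "NF4": (21.43, 20.37),
}


def seconds_to_generate(num_tokens, tokens_per_second):
    """Approximate generation time, assuming the average speed holds throughout."""
    return num_tokens / tokens_per_second


for name, (with_flash, without_flash) in SPEED.items():
    t_flash = seconds_to_generate(2048, with_flash)
    t_plain = seconds_to_generate(2048, without_flash)
    print(f"{name}: {t_flash:.1f}s with flash_attn, {t_plain:.1f}s without")
```

On these measurements, BF16 needs a little over a minute for 2048 tokens, while Int8 takes more than four minutes, so Int8 trades speed as well as accuracy for its memory savings.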

We also profile the peak GPU memory usage for encoding 2048 tokens as context (and generating a single token) and for generating 8192 tokens (with a single token as context) under BF16 and Int8/NF4 quantization levels. The results are shown below.

When using flash attention, the memory usage is:

| Quantization Level | Peak Usage for Encoding 2048 Tokens | Peak Usage for Generating 8192 Tokens |
|---|---|---|
| BF16 | 18.11GB | 23.52GB |
| Int8 | 12.17GB | 17.60GB |
| NF4 | 9.52GB | 14.93GB |

When not using flash attention, the memory usage is:

| Quantization Level | Peak Usage for Encoding 2048 Tokens | Peak Usage for Generating 8192 Tokens |
|---|---|---|
| BF16 | 18.11GB | 24.40GB |
| Int8 | 12.18GB | 18.47GB |
| NF4 | 9.52GB | 15.81GB |

The above speed and memory profiling is conducted using this script.
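One practical use of the peak-memory tables is choosing a precision for a given GPU. The sketch below picks the highest-precision setting whose measured peak (with flash attention) fits a memory budget. It is a rough guide only: the helper `pick_precision` is hypothetical, the figures come from the A100 measurements above, and it leaves no headroom for other processes or different hardware.

```python
# Peak GPU memory in GB with flash attention, copied from the table above:
# (name, encoding 2048 tokens, generating 8192 tokens), highest precision first.
PEAK_GB = [
    ("BF16", 18.11, 23.52),
    ("Int8", 12.17, 17.60),
    ("NF4", 9.52, 14.93),
]


def pick_precision(budget_gb, long_generation=False):
    """Return the highest-precision setting whose measured peak fits, or None."""
    for name, encode_gb, generate_gb in PEAK_GB:
        peak = generate_gb if long_generation else encode_gb
        if peak <= budget_gb:
            return name
    return None


print(pick_precision(24, long_generation=True))
print(pick_precision(16, long_generation=True))
print(pick_precision(8))
```

With these numbers, a 24GB budget fits BF16 even for 8192-token generation, a 16GB budget only fits NF4 for that workload, and an 8GB budget fits none of the measured settings.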

We provide a CLI demo example in cli_demo.py, which supports streaming output for the generation. Users can interact with Qwen-7B-Chat by inputting prompts, and the model returns model outputs in the streaming mode. Run the command below:

```bash
python cli_demo.py
```

We provide code for users to build a web UI demo (thanks to @wysaid). Before you start, make sure you install the following packages:

```bash
pip install -r requirements_web_demo.txt
```

Then run the command below and click on the generated link:

```bash
python web_demo.py
```

We provide methods to deploy a local API based on the OpenAI API (thanks to @hanpenggit). Before you start, install the required packages:

```bash
pip install fastapi uvicorn openai pydantic sse_starlette
```

Then run the command to deploy your API:

```bash
python openai_api.py
```

You can change your arguments, e.g., -c for checkpoint name or path, --cpu-only for CPU deployment, etc. If you run into problems launching your API deployment, updating the packages to the latest version will probably solve them.
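Because the server mimics the OpenAI API, a client ultimately sends an ordinary JSON POST. The sketch below builds such a request with the standard library without sending it. The `/chat/completions` path under the base URL and the payload fields follow the OpenAI chat format and are assumptions about what `openai_api.py` accepts; `build_chat_request` is a hypothetical helper.

```python
import json
import urllib.request

# Base URL used by the client example in this README.
API_BASE = "http://localhost:8000/v1"


def build_chat_request(prompt, model="Qwen-7B", stream=False):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": stream,
    }
    return urllib.request.Request(
        url=f"{API_BASE}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_chat_request("Hello")
print(request.full_url)
print(request.data.decode("utf-8"))

# To actually send it (requires the server to be running):
# with urllib.request.urlopen(request) as reply:
#     print(json.load(reply))
```

Inspecting the request this way can help when debugging a deployment, since it separates problems in the payload from problems in the server.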

Using the API is also simple. See the example below:

```python
import openai

openai.api_base = "http://localhost:8000/v1"
openai.api_key = "none"

# Stream the reply chunk by chunk (the prompt "你好" means "Hello")
for chunk in openai.ChatCompletion.create(
    model="Qwen-7B",
    messages=[
        {"role": "user", "content": "你好"}
    ],
    stream=True
):
    # Not every streamed chunk carries text, so check before printing
    if hasattr(chunk.choices[0].delta, "content"):
        print(chunk.choices[0].delta.content, end="", flush=True)
```

Qwen-7B-Chat is specifically optimized for tool usage, including APIs, databases, models, etc., so that users can build their own Qwen-7B-based LangChain, Agent, and Code Interpreter. In our evaluation benchmark for assessing tool usage capabilities, we find that Qwen-7B reaches stable performance.

| Model | Tool Selection (Acc.↑) | Tool Input (Rouge-L↑) | False Positive Error↓ |
|---|---|---|---|
| GPT-4 | 95% | 0.90 | 15% |
| GPT-3.5 | 85% | 0.88 | 75% |
| Qwen-7B | 99% | 0.89 | 9.7% |

For how to write and use prompts for ReAct Prompting, please refer to the ReAct examples. The use of tools can enable the model to better perform tasks.
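For readers new to ReAct, the sketch below shows the general shape of such a prompt and how a tool call can be parsed out of the model's reply. The template wording, the tool schema, and the helpers `build_react_prompt` and `parse_action` are illustrative assumptions and are not taken from the Qwen ReAct examples, which remain the reference for the exact format the model was trained on.

```python
# Generic ReAct-style template (illustrative wording, not Qwen's own template).
REACT_TEMPLATE = """Answer the following question as best you can. You have access to these tools:

{tool_descriptions}

Use the following format:

Question: the input question
Thought: reason about what to do next
Action: the tool to use, one of [{tool_names}]
Action Input: the input to the tool
Observation: the result of the tool
... (Thought/Action/Action Input/Observation can repeat)
Thought: I now know the final answer
Final Answer: the answer to the original question

Question: {question}
Thought:"""


def build_react_prompt(question, tools):
    """`tools` maps a tool name to a one-line description (hypothetical schema)."""
    descriptions = "\n".join(f"{name}: {desc}" for name, desc in tools.items())
    return REACT_TEMPLATE.format(
        tool_descriptions=descriptions,
        tool_names=", ".join(tools),
        question=question,
    )


def parse_action(model_output):
    """Extract (tool, tool_input) from the last Action / Action Input pair, or None."""
    action_idx = model_output.rfind("\nAction:")
    input_idx = model_output.rfind("\nAction Input:")
    if action_idx == -1 or input_idx < action_idx:
        return None
    tool = model_output[action_idx + len("\nAction:"):input_idx].strip()
    rest = model_output[input_idx + len("\nAction Input:"):]
    tool_input = rest.split("\nObservation:")[0].strip()
    return tool, tool_input


prompt = build_react_prompt("What is 2+2?", {"calculator": "evaluates arithmetic"})
print(prompt)
```

In a full loop, the caller would run the parsed tool, append its result after `Observation:`, and ask the model to continue until it emits `Final Answer:`.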

Additionally, we provide experimental results to show its ability to act as an agent. See Hugging Face Agent for more information. Its performance on the run-mode benchmark provided by Hugging Face is as follows:

| Model | Tool Selection↑ | Tool Used↑ | Code↑ |
|---|---|---|---|
| GPT-4 | 100 | 100 | 97.41 |
| GPT-3.5 | 95.37 | 96.30 | 87.04 |
| StarCoder-15.5B | 87.04 | 87.96 | 68.89 |
| Qwen-7B | 90.74 | 92.59 | 74.07 |

To break the bottleneck of training sequence length, we introduce several techniques, including NTK-aware interpolation, window attention, and LogN attention scaling, that extend the context length to over 8K tokens. We conduct language modeling experiments on the arXiv dataset with the PPL evaluation and find that Qwen-7B reaches outstanding performance in long-context scenarios. Results are shown below:

| Model (PPL by sequence length) | 1024 | 2048 | 4096 | 8192 | 16384 |
|---|---|---|---|---|---|
| Qwen-7B | 4.23 | 3.78 | 39.35 | 469.81 | 2645.09 |
| + dynamic_ntk | 4.23 | 3.78 | 3.59 | 3.66 | 5.71 |
| + dynamic_ntk + logn | 4.23 | 3.78 | 3.58 | 3.56 | 4.62 |
| + dynamic_ntk + logn + window_attn | 4.23 | 3.78 | 3.58 | 3.49 | 4.32 |
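To make two of the techniques named above concrete, the sketch below shows one common formulation of NTK-aware RoPE base scaling and of LogN attention scaling. It is a simplified illustration, not the Qwen-7B implementation: the training length of 2048, the head dimension of 128, the RoPE base of 10000, and the choice `alpha = seq_len / train_len` are all assumptions made for the example.

```python
import math


def ntk_scaled_base(seq_len, train_len=2048, head_dim=128, base=10000.0):
    """NTK-aware interpolation: enlarge the RoPE base once seq_len exceeds train_len."""
    alpha = max(1.0, seq_len / train_len)
    return base * alpha ** (head_dim / (head_dim - 2))


def rope_inverse_frequencies(rope_base, head_dim=128):
    """Standard RoPE inverse frequencies, one per pair of dimensions."""
    return [rope_base ** (-2 * i / head_dim) for i in range(head_dim // 2)]


def logn_scale(position, train_len=2048):
    """LogN scaling: factor applied to the query at a 1-based position.

    Positions within the training length are left unscaled; beyond it the
    factor grows as log(position) / log(train_len).
    """
    if position <= train_len:
        return 1.0
    return math.log(position) / math.log(train_len)


for seq_len in (1024, 2048, 4096, 8192, 16384):
    scaled = ntk_scaled_base(seq_len)
    print(f"seq_len={seq_len}: rope_base={scaled:.0f}, logn={logn_scale(seq_len):.4f}")
```

With the exponent `head_dim / (head_dim - 2)`, the lowest rotary frequency shrinks by exactly `alpha` while the highest one is unchanged, which is what lets long sequences reuse the positional range seen in training without blurring nearby positions.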

We provide scripts for reproducing the model performance on benchmark datasets. Check eval/EVALUATION.md for more information. Note that reproduction may lead to slight differences from our reported results.

If you run into problems, please check the FAQ and the existing issues for a solution before you open a new issue.

Researchers and developers are free to use the code and model weights of both Qwen-7B and Qwen-7B-Chat. We also allow their commercial use. Check our license at LICENSE for more details. If you have requirements for commercial use, please fill out the form to apply.

If you would like to leave a message for either our research team or product team, feel free to send an email to qianwen_opensource@alibabacloud.com.