This is an end-to-end machine learning project for sentiment analysis of movie reviews.

This project requires Python and the following Python libraries installed:

Alternatively, download the requirements.txt file from this repository and run pip install -r requirements.txt from the command line or your IDE's terminal.

You will also need software that can run a Jupyter Notebook, or any other IDE of your choice.

If you do not have Python installed yet, it is highly recommended that you install the Anaconda distribution of Python, which already has the above packages and more included.

All the code is in the Movie_review_Sentiment_analysis.ipynb notebook file.

Ok so first we do the Imports

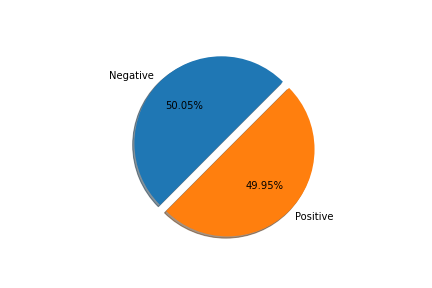

and look at the data.The data was collected from Kaggle.

Hmm,the data looks balanced...

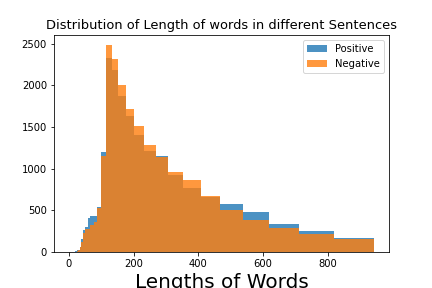

It's good that we don't have to worry about class imbalance (balanced data is rare in real-world scenarios). Let's see whether the length of the reviews, in words, differs between positive and negative sentiment.

The review lengths of both classes have almost the same, overlapping distribution, so this feature is not useful for telling the classes apart.
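The length comparison can be sketched as follows. This is a minimal illustration on made-up data, not the notebook's code: the `reviews` list and the `length_stats` helper are hypothetical, and the real project works on the Kaggle dataset.

```python
from statistics import mean, median

# Hypothetical toy data: (review text, sentiment label) pairs.
reviews = [
    ("I loved this movie, great acting", "positive"),
    ("A wonderful and moving story", "positive"),
    ("I did not like this movie at all", "negative"),
    ("Boring plot and terrible acting", "negative"),
]


def length_stats(rows):
    """Return {label: (mean, median)} of whitespace-separated word counts."""
    lengths = {}
    for text, label in rows:
        lengths.setdefault(label, []).append(len(text.split()))
    return {label: (mean(v), median(v)) for label, v in lengths.items()}


stats = length_stats(reviews)
for label, (avg, med) in stats.items():
    print(f"{label}: mean={avg:.1f} words, median={med} words")
```

If the two classes show similar statistics, as they do in the plots here, review length carries little signal about sentiment.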

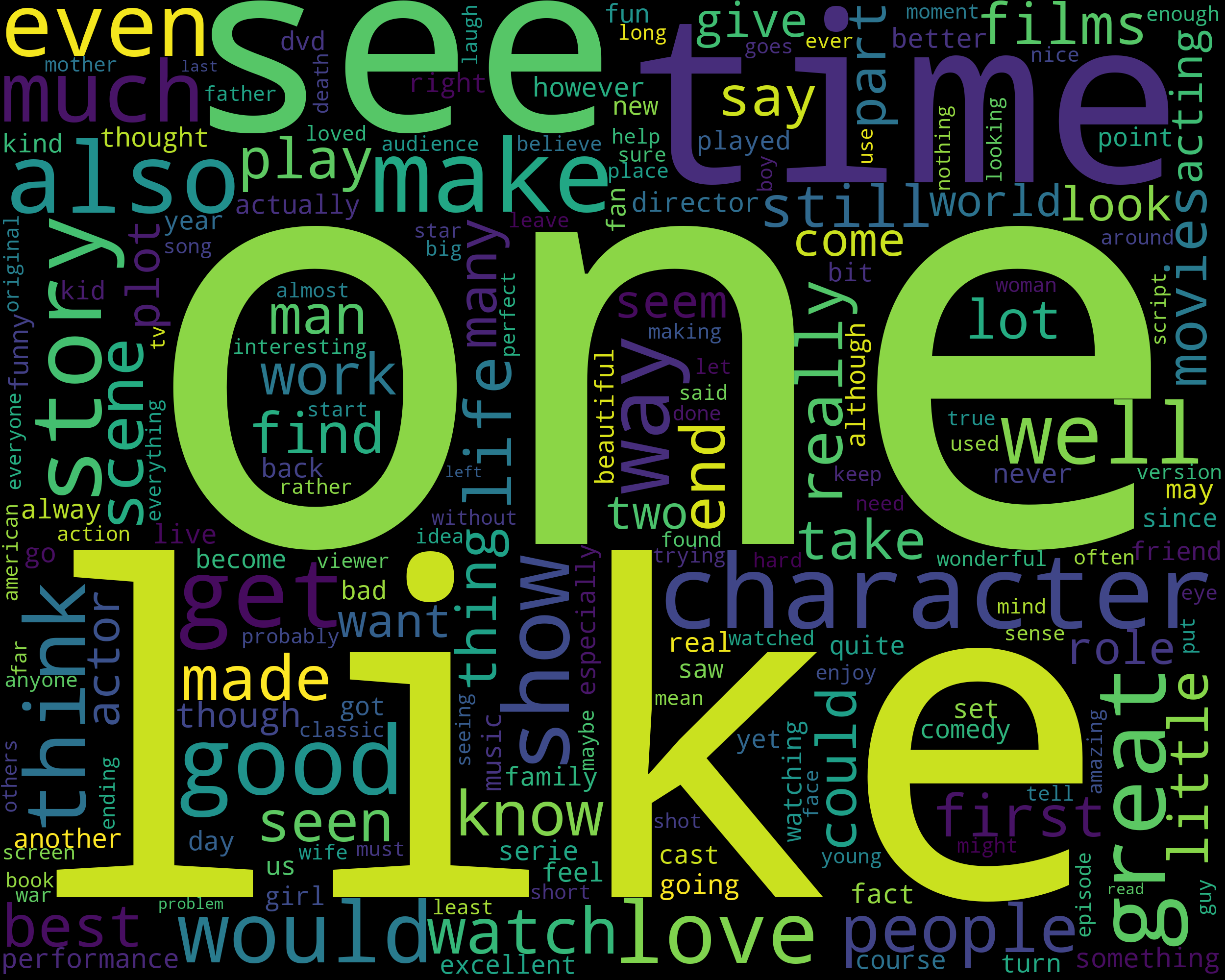

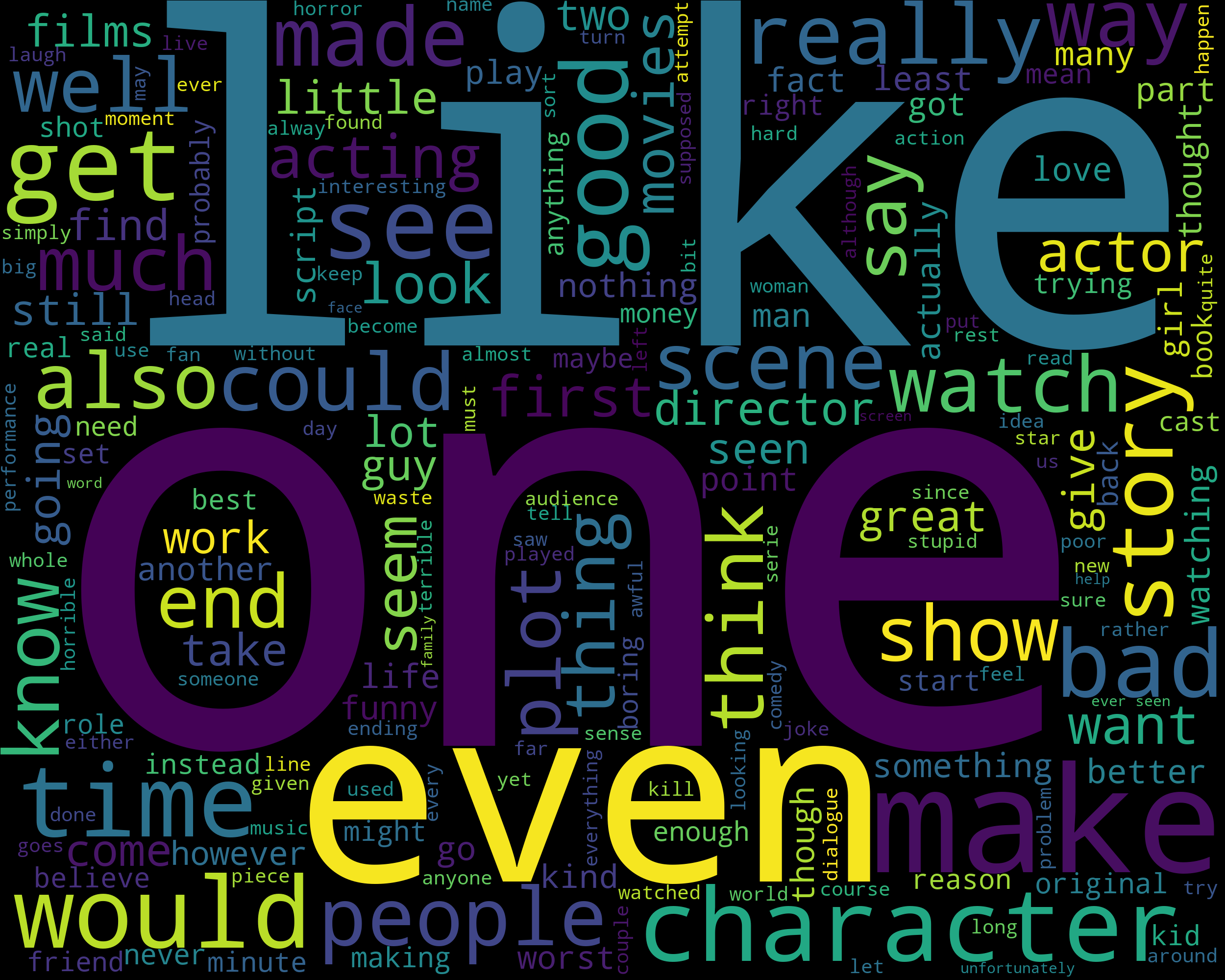

After some text preprocessing, we visualize the text in the movie reviews (documents).

Here are some visualizations using WordCloud -

The text present in the positive reviews -

The text present in the negative reviews -

Hmmm... it's interesting that words such as "like" and "one" appear in both positive and negative reviews. That's because "I like the movie" and "I don't like the movie" both contain the word "like", but in very different contexts and hence with different meanings. Don't worry, we'll take care of this in the model building process using something called n-grams.

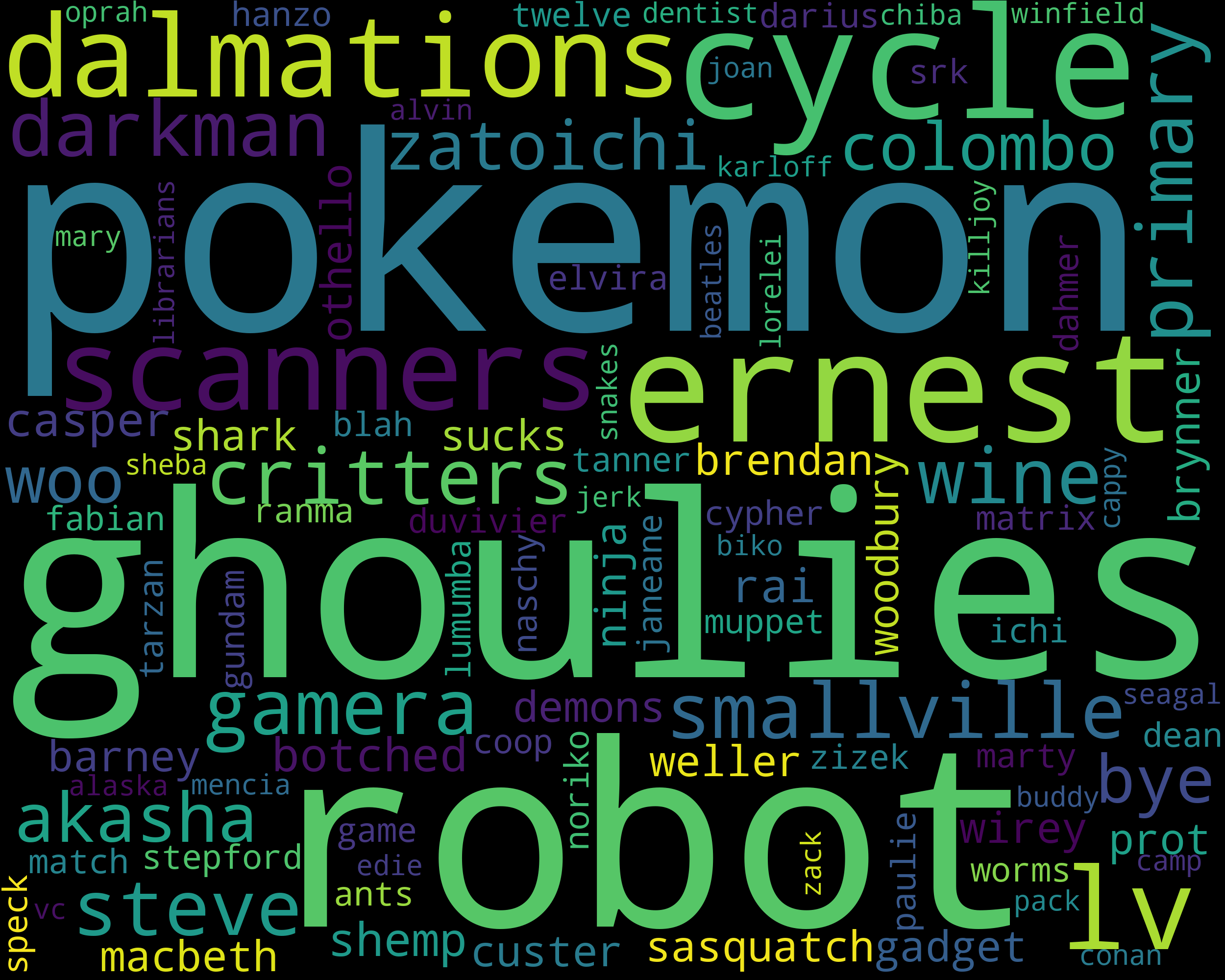

Top 100 Rarest words in reviews -

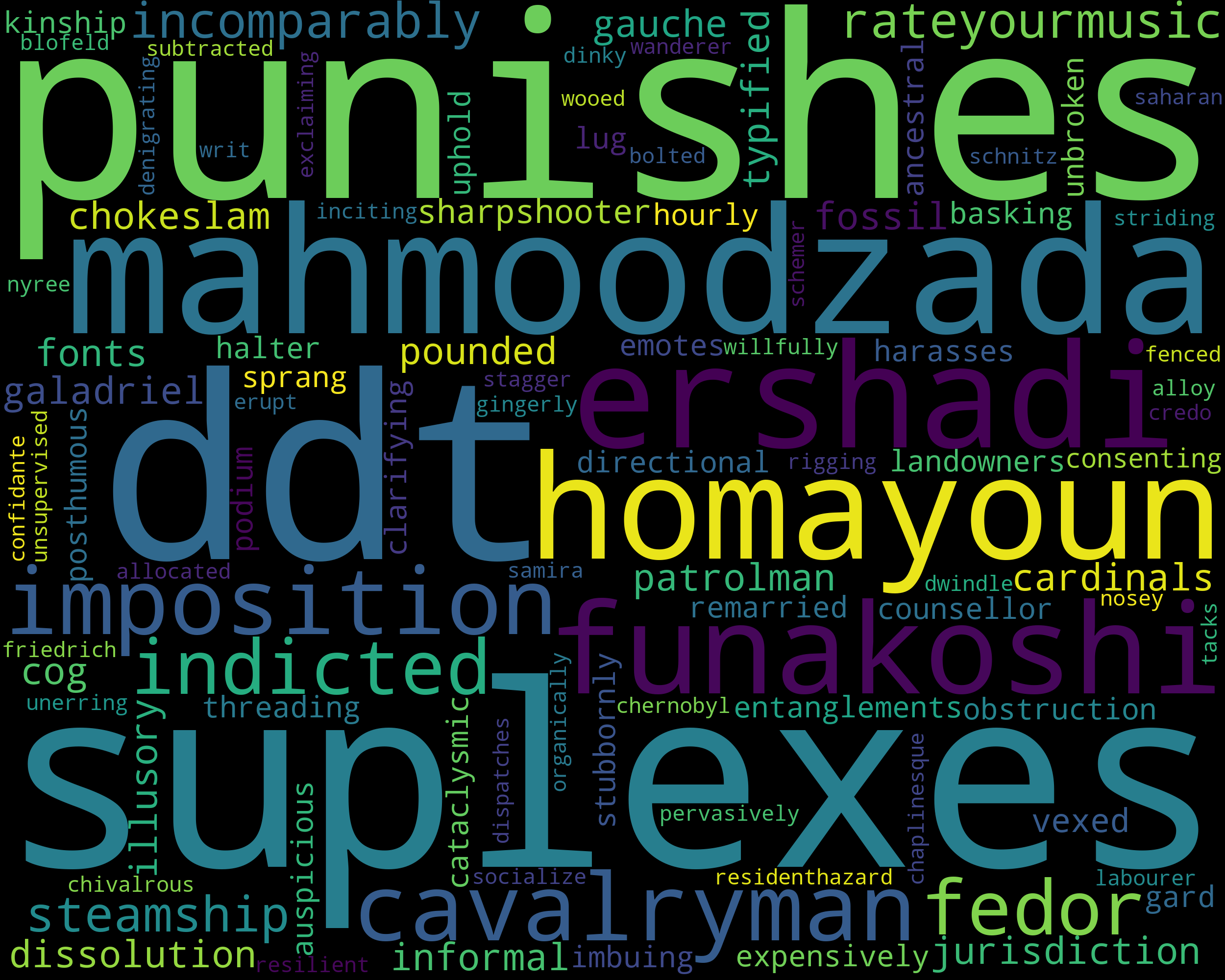

Top 100 most Common words in reviews -
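Word counts like these can be produced with the standard library. The sketch below uses a made-up three-document corpus (`docs` is hypothetical) and asks for the top 2 instead of the top 100.

```python
from collections import Counter

# Hypothetical toy corpus standing in for the preprocessed reviews.
docs = ["good movie good plot", "bad movie", "good cast"]

# Count every whitespace-separated word across all documents.
counts = Counter(word for doc in docs for word in doc.split())

# Most frequent words first.
most_common = counts.most_common(2)

# Rarest words: sort by ascending count (ties keep first-seen order).
rarest = sorted(counts.items(), key=lambda kv: kv[1])[:2]

print("most common:", most_common)
print("rarest:", rarest)
```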

We don't do much here except pass our custom lemmatization function as the tokenizer parameter of the TF-IDF vectorizer.

Lemmatizing reduces words like walks and walking to their base form walk, which reduces the dimensionality of the data. Learn more here.
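To show the effect on vocabulary size, here is a minimal sketch. It uses a tiny hand-written lookup table (`LEMMAS`, hypothetical) as a stand-in for a real lemmatizer, so it is not the notebook's function; a callable with this shape is what scikit-learn's TfidfVectorizer accepts through its `tokenizer` parameter.

```python
# Hypothetical lookup table standing in for a real lemmatizer.
LEMMAS = {
    "walks": "walk",
    "walking": "walk",
    "walked": "walk",
}


def lemma_tokenizer(text):
    """Lowercase, split on whitespace, and map each token to its base form."""
    return [LEMMAS.get(tok, tok) for tok in text.lower().split()]


docs = ["She walks home", "He walked home", "They were walking home"]

# Vocabulary without and with lemmatization.
raw_vocab = {tok for d in docs for tok in d.lower().split()}
lemma_vocab = {tok for d in docs for tok in lemma_tokenizer(d)}

print(len(raw_vocab), "distinct tokens before lemmatization")
print(len(lemma_vocab), "distinct tokens after lemmatization")
```

Every distinct token becomes one column in the feature matrix, so merging inflected forms directly shrinks the number of dimensions.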

Finally we're at the model building process. Here we build a Pipeline which first fits and transforms our text reviews into a matrix of numbers that computers can understand. Each word in a review gets a weight: how often it occurs in that review (term frequency), scaled down according to how many reviews it appears in (inverse document frequency), so words that show up in nearly every review count for less. Head here for a thorough explanation.
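The weighting can be worked through by hand. The sketch below uses the textbook formula tf × log(N / df) on a made-up corpus; the `tf_idf` helper and `docs` are hypothetical. Note that scikit-learn's TfidfVectorizer applies a smoothed IDF and normalizes each row by default, so its numbers will differ from these.

```python
import math

# Hypothetical toy corpus: each document is a list of tokens.
docs = [
    ["good", "movie"],
    ["bad", "movie"],
    ["good", "good", "plot"],
]


def tf_idf(term, doc, corpus):
    """Textbook TF-IDF; assumes the term occurs somewhere in the corpus."""
    tf = doc.count(term) / len(doc)            # share of this document
    df = sum(1 for d in corpus if term in d)   # documents containing the term
    idf = math.log(len(corpus) / df)           # rarer terms get a larger idf
    return tf * idf


for term in ("good", "plot"):
    print(term, round(tf_idf(term, docs[2], docs), 4))
```

In the third document "good" occurs twice and "plot" once, yet "plot" gets the higher weight because it appears in only one document.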

The next object in our Pipeline is, obviously, the model. We use a logistic regression model with n_jobs=-1 and max_iter=500. The ngram_range=(1,3) setting belongs to the TF-IDF vectorizer, which is where the n-grams are generated.
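A pipeline of this shape might look like the sketch below. It assumes scikit-learn is installed and uses four made-up reviews; the step names `tfidf` and `clf` are hypothetical, the custom lemmatizing tokenizer is left out, and n_jobs is omitted because it makes no difference on a toy example.

```python
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import Pipeline

# Hypothetical toy data: 1 = positive, 0 = negative.
texts = [
    "I like this movie",
    "great film, loved it",
    "I don't like this movie",
    "terrible film, hated it",
]
labels = [1, 1, 0, 0]

model = Pipeline([
    # Turn raw text into TF-IDF weights over unigrams, bigrams and trigrams.
    ("tfidf", TfidfVectorizer(ngram_range=(1, 3))),
    # Classify the resulting sparse feature vectors.
    ("clf", LogisticRegression(max_iter=500)),
])

model.fit(texts, labels)
predictions = model.predict(["loved this film", "hated this film"])
print(predictions)
```

Keeping the vectorizer and classifier in one Pipeline means new text goes through exactly the same transformation at prediction time as it did during training.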

The n-grams let the model tell "I like it" apart from "I don't like it" by also noticing the context in which a word is used, instead of simply classifying everything as positive on seeing the word "like".
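To see what n-grams add, here is a small sketch that lists every 1- to 3-gram of the two example sentences. The `ngrams` helper is hypothetical and only illustrates the idea; in the project the vectorizer generates them.

```python
def ngrams(tokens, n_min=1, n_max=3):
    """Return all contiguous n-grams for n in [n_min, n_max], space-joined."""
    grams = []
    for n in range(n_min, n_max + 1):
        for i in range(len(tokens) - n + 1):
            grams.append(" ".join(tokens[i:i + n]))
    return grams


pos = ngrams("i like it".split())
neg = ngrams("i don't like it".split())

# Features that only the negative sentence produces.
only_negative = sorted(set(neg) - set(pos))
print(only_negative)
```

With single words alone, the only feature separating the two sentences is "don't". With bigrams and trigrams the model also sees "don't like" and "don't like it", so it can learn a negative weight for the phrase as a whole.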

The model is trained on almost 40k movie reviews. The resulting corpus is really large, with thousands of dimensions, and training takes almost half an hour on normal hardware.

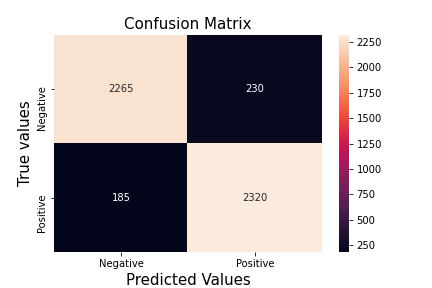

We get an accuracy of almost 92%, which is really good and also reliable because the dataset is balanced. In case you still have doubts, here's the confusion matrix -

We also got an F1 score of 92%.
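For reference, this is how accuracy, the confusion matrix counts and the F1 score relate to each other. The labels below are made up for illustration and are not the project's results; the helper names are hypothetical.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (tp, fp, fn, tn) for a binary classification task."""
    tp = fp = fn = tn = 0
    for truth, pred in zip(y_true, y_pred):
        if pred == positive and truth == positive:
            tp += 1
        elif pred == positive:
            fp += 1
        elif truth == positive:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn


def accuracy_and_f1(y_true, y_pred):
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    return accuracy, f1


# Hypothetical labels, not the notebook's predictions.
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 1, 0, 0, 0, 1, 1, 0]

print(confusion_counts(y_true, y_pred))
print(accuracy_and_f1(y_true, y_pred))
```

On a balanced dataset with similar numbers of false positives and false negatives, accuracy and F1 end up close to each other, which matches the two 92% figures reported here.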

We saved the model using pickle; the saved model is approximately 350MB. You can download logistic_model.pkl from the repository instead of going through the hassle of training it.
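Saving and loading with pickle follows the pattern sketched below. A plain dictionary stands in for the trained pipeline (an assumption for the sake of a small example), and the file is written to a temporary directory rather than the repository.

```python
import pickle
import tempfile
from pathlib import Path

# Hypothetical stand-in for the trained pipeline object.
model = {"weights": [0.5, -1.2], "bias": 0.1}

with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "logistic_model.pkl"

    # Serialize the object to disk.
    with path.open("wb") as f:
        pickle.dump(model, f)

    # Load it back, as the web app would do at startup.
    with path.open("rb") as f:
        restored = pickle.load(f)

print(restored == model)
```

Only unpickle files you trust, since loading a pickle can execute arbitrary code.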

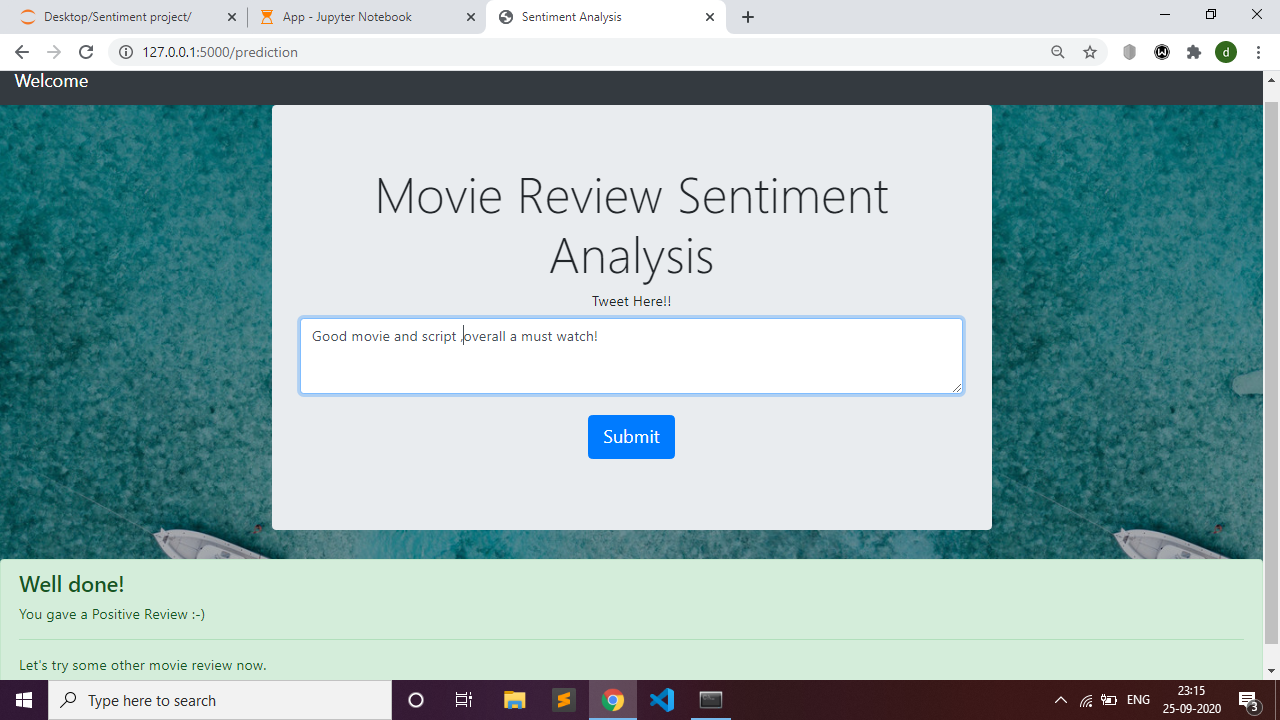

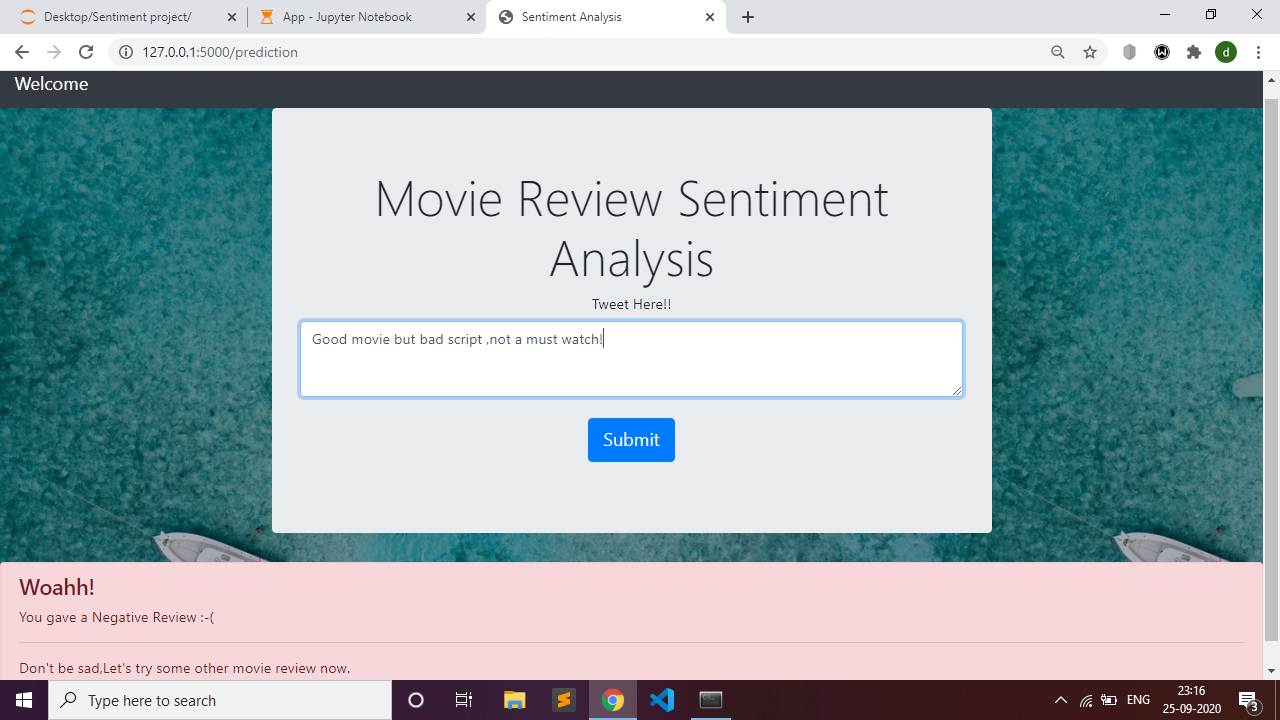

The model is then deployed on a local Flask server, with a frontend built using HTML, CSS and Bootstrap 4.

To open the Flask website on your PC, run the App.ipynb file if you are using Jupyter Notebook, or the app.py file if you are using a normal IDE such as VSCode or Sublime. Both have been provided.
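The overall shape of such a Flask app is sketched below. This is not the repository's app.py: the route names, the inline `PAGE` template and the `predict_sentiment` stub are all hypothetical, and the stub replaces the pickled model so the example stays self-contained. It assumes Flask is installed.

```python
from flask import Flask, render_template_string, request

app = Flask(__name__)

# Minimal inline template; the real project uses HTML, CSS and Bootstrap 4.
PAGE = """
<form method="post" action="/predict">
  <textarea name="review"></textarea>
  <button type="submit">Analyze</button>
</form>
{% if result %}<p>Sentiment: {{ result }}</p>{% endif %}
"""


def predict_sentiment(review):
    """Hypothetical stub; the real app would call the loaded model here."""
    return "Negative" if "not" in review.lower().split() else "Positive"


@app.route("/")
def home():
    # Show the empty form.
    return render_template_string(PAGE)


@app.route("/predict", methods=["POST"])
def predict():
    # Read the submitted review and render the page with the prediction.
    review = request.form.get("review", "")
    return render_template_string(PAGE, result=predict_sentiment(review))
```

Saved as a module, a sketch like this can be started locally with Flask's command line runner (for example flask --app followed by the module name, then run) and opened in the browser.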

Positive Review

Negative Review

Note - The project wasn't deployed on Heroku because its size (due to the model size) exceeded the platform's slug size limit of 300MB. Other cloud platforms needed a credit card. Thus, it's best to run the model locally.

So, this was my first end-to-end model. Hope you liked it!

Want to connect? Here's my: