This project aims to create a simple and scalable repo to reproduce Sora (OpenAI, but we prefer to call it "CloseAI") and to build knowledge about Video-VQVAE (VideoGPT) + DiT at scale. However, our resources are limited, so we sincerely hope the whole open-source community can contribute to this project. Pull requests are welcome!

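This project hopes to reproduce Sora through the power of the open-source community. It was jointly initiated by the PKU-Rabbitpre AIGC Joint Lab. Our resources are currently limited: we have only built the basic infrastructure and cannot yet run full training. We hope the open-source community will help us gradually add modules and raise resources for training. The current version is still far from the goal and needs continuous improvement and rapid iteration. Pull requests are welcome!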

Project stages:

- Primary
  - Set up the codebase and train an unconditional model on a landscape dataset.
  - Train models that boost resolution and duration.
- Extensions
  - Conduct text2video experiments on a landscape dataset.
  - Train the 1080p model on a video2text dataset.
  - Control the model with more conditions.

News:

[2024.03.05] See our latest todo list; pull requests are welcome.

[2024.03.04] We reorganized and modularized our code to make it easier to contribute to the project; please see the repo structure.

[2024.03.03] We opened some discussions and clarified several issues.

[2024.03.01] Training code is available now! Learn more on our project page. Please feel free to watch 👀 this repository for the latest updates.

Todo:

- Set up the repo structure.
- Add a Video-VQVAE model borrowed from VideoGPT.
- Support training DiT with variable aspect ratios, resolutions, and durations.
- Support dynamic mask input, inspired by FiT.
- Add class conditioning on embeddings.
- Incorporate Latte as the main codebase.
- Add a VAE model borrowed from Stable Diffusion.
- Combine dynamic mask input with the VAE.
- Make the codebase ready for cluster training and add SLURM scripts.
- Add a sampling script.
- Incorporate SiT.
- Add positional interpolation (PI) to support out-of-domain sizes.
- Add a frame interpolation model.
- Finish the data loading and pre-processing utils.
- Add CLIP and T5 support.
- Add a text2image training script.
- Add a prompt captioner.
- Look for a suitable dataset (discussion and recommendations are welcome).
- Support memory-friendly training.
- Add FlashAttention-2 from PyTorch.
- Add xFormers.
- Add Accelerate to automatically manage training, e.g. mixed-precision training.
- Add gradient checkpointing.
- Train using the DeepSpeed engine.
- Load pretrained weights from PixArt-α.
- Incorporate ControlNet.
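Several todo items (variable aspect ratios and durations, dynamic mask input inspired by FiT, and PI for out-of-domain sizes) come down to feeding token sequences of different lengths to one transformer. Below is a minimal, hypothetical Python sketch of the two underlying ideas, not the project's implementation: `pad_with_mask` pads variable-length token sequences and marks real tokens, and `interpolated_positions` rescales position indices of a longer input so they stay inside the range seen during training. The function names, the 1-D positions, and the sinusoidal embedding are assumptions for illustration.

```python
import math
from typing import List, Sequence, Tuple


def pad_with_mask(seqs: Sequence[Sequence[float]], pad_value: float = 0.0
                  ) -> Tuple[List[List[float]], List[List[bool]]]:
    """Pad variable-length token sequences to the longest one.

    Returns the padded batch and a mask where True marks a real token and
    False marks padding that attention should ignore (FiT-style idea).
    """
    max_len = max(len(s) for s in seqs)
    padded, mask = [], []
    for s in seqs:
        n_pad = max_len - len(s)
        padded.append(list(s) + [pad_value] * n_pad)
        mask.append([True] * len(s) + [False] * n_pad)
    return padded, mask


def interpolated_positions(seq_len: int, train_len: int) -> List[float]:
    """Positional interpolation: scale positions 0..seq_len-1 by
    train_len / seq_len so the largest stays below train_len."""
    if seq_len <= train_len:
        return [float(i) for i in range(seq_len)]
    scale = train_len / seq_len  # < 1 for longer-than-trained inputs
    return [i * scale for i in range(seq_len)]


def sinusoidal_embedding(pos: float, dim: int) -> List[float]:
    """Sinusoidal position embedding; fractional positions work as well."""
    emb: List[float] = []
    for i in range(0, dim, 2):
        freq = 1.0 / (10000 ** (i / dim))
        emb.append(math.sin(pos * freq))
        emb.append(math.cos(pos * freq))
    return emb[:dim]
```

For example, `pad_with_mask([[1.0, 2.0, 3.0], [4.0]])` gives the mask `[[True, True, True], [True, False, False]]`, and `interpolated_positions(8, 4)` gives `[0.0, 0.5, ..., 3.5]`: an 8-token input reuses the position range of a 4-token training length instead of extrapolating to positions the model never saw.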

Repo structure:

```
├── README.md
├── docs
│ ├── Data.md -> Datasets description.
│ ├── Contribution_Guidelines.md -> Contribution guidelines description.
├── scripts -> All training scripts.
│ └── train.sh
├── sora
│ ├── dataset -> Dataset code to read videos
│ ├── models
│ │ ├── captioner
│ │ ├── super_resolution
│ ├── modules
│ │ ├── ae -> compress videos to latents
│ │ │ ├── vqvae
│ │ │ ├── vae
│ │ ├── diffusion -> denoise latents
│ │ │ ├── dit
│ │ │ ├── unet
│ ├── utils.py
│ ├── train.py -> Training code
```

Requirements and installation:

The recommended requirements are as follows.

- Python >= 3.8
- PyTorch >= 1.13.1
- CUDA >= 11.7

Install the required packages:

```bash
git clone https://github.com/PKU-YuanGroup/Open-Sora-Plan
cd Open-Sora-Plan
conda create -n opensora python=3.8 -y
conda activate opensora
pip install torch==1.13.1+cu117 torchvision==0.14.1+cu117 torchaudio==0.13.1 --extra-index-url https://download.pytorch.org/whl/cu117
pip install -r requirements.txt
cd VideoGPT
pip install -e .
cd ..
```
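To confirm that an environment meets the recommended requirements, a small check like the one below may help. It is a hypothetical helper, not part of the repo: it compares version strings such as `1.13.1+cu117` numerically after dropping the local `+cu117` suffix, and reads `torch.version.cuda`, which reports the CUDA version PyTorch was built with (`None` for CPU-only builds).

```python
import sys
from itertools import takewhile
from typing import Optional, Tuple


def parse_version(version: str) -> Tuple[int, ...]:
    """Turn '1.13.1+cu117' into (1, 13, 1); the local '+...' part is dropped."""
    public = version.split("+", 1)[0]
    numbers = []
    for piece in public.split("."):
        digits = "".join(takewhile(str.isdigit, piece))  # '0a0' -> '0'
        if not digits:
            break
        numbers.append(int(digits))
    return tuple(numbers)


def meets_minimum(version: Optional[str], minimum: Tuple[int, ...]) -> bool:
    """True if `version` is at least `minimum`; a missing version fails."""
    if version is None:
        return False
    return parse_version(version) >= minimum


def main() -> None:
    py_ok = sys.version_info >= (3, 8)
    print("Python", sys.version.split()[0], "OK" if py_ok else "need >= 3.8")
    try:
        import torch
    except ImportError:
        print("PyTorch is not installed")
        return
    torch_ok = meets_minimum(torch.__version__, (1, 13, 1))
    print("PyTorch", torch.__version__, "OK" if torch_ok else "need >= 1.13.1")
    cuda = torch.version.cuda  # CUDA version PyTorch was built against
    print("CUDA", cuda, "OK" if meets_minimum(cuda, (11, 7)) else "need >= 11.7")


if __name__ == "__main__":
    main()
```

Run it inside the activated `opensora` environment, for example saved as `check_env.py` (a hypothetical file name).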

Datasets:

For dataset details, refer to Data.md.

Training the Video-VQVAE (VideoGPT):

```bash
cd src/sora/modules/ae/vqvae/videogpt
```

Refer to the original VideoGPT repo. Use the `scripts/train_vqvae.py` script to train a Video-VQVAE. Run `python scripts/train_vqvae.py -h` for information on all available training settings. A subset of the more relevant settings is listed below, along with their default values.

VQ-VAE settings:

- `--embedding_dim`: number of dimensions for codebook embeddings
- `--n_codes 2048`: number of codes in the codebook
- `--n_hiddens 240`: number of hidden features in the residual blocks
- `--n_res_layers 4`: number of residual blocks
- `--downsample 4 4 4`: T H W downsampling stride of the encoder

Training settings:

- `--gpus 2`: number of GPUs for distributed training
- `--sync_batchnorm`: uses `SyncBatchNorm` instead of `BatchNorm3d` when using > 1 GPU
- `--gradient_clip_val 1`: gradient clipping threshold for training
- `--batch_size 16`: batch size per GPU
- `--num_workers 8`: number of workers for each `DataLoader`

Dataset settings:

- `--data_path <path>`: path to an `hdf5` file or a folder containing `train` and `test` folders with subdirectories of videos
- `--resolution 128`: spatial resolution to train on
- `--sequence_length 16`: temporal resolution, or video clip length
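To see how these settings interact, the sketch below computes the size of the discrete latent grid for one clip and shows the nearest-codebook lookup that turns encoder outputs into code indices. It is an illustrative sketch, not code from VideoGPT: the helper names are hypothetical, and it assumes each input dimension is divided exactly by its `--downsample` stride. The numbers are the defaults listed above.

```python
import math
from typing import Sequence, Tuple


def latent_grid(sequence_length: int, resolution: int,
                downsample: Tuple[int, int, int]) -> Tuple[int, int, int]:
    """(T, H, W) of the latent grid, assuming exact division by each stride."""
    t_s, h_s, w_s = downsample
    for size, stride in ((sequence_length, t_s), (resolution, h_s), (resolution, w_s)):
        if size % stride:
            raise ValueError(f"{size} is not divisible by stride {stride}")
    return sequence_length // t_s, resolution // h_s, resolution // w_s


def nearest_code(vector: Sequence[float], codebook: Sequence[Sequence[float]]) -> int:
    """Index of the codebook entry closest to `vector` (squared L2 distance),
    i.e. the vector-quantization step of a VQ-VAE."""
    return min(range(len(codebook)),
               key=lambda k: sum((v - c) ** 2 for v, c in zip(vector, codebook[k])))


if __name__ == "__main__":
    t, h, w = latent_grid(sequence_length=16, resolution=128, downsample=(4, 4, 4))
    n_tokens = t * h * w
    bits = n_tokens * math.ceil(math.log2(2048))  # --n_codes 2048 -> 11 bits per index
    print((t, h, w), n_tokens, bits)  # (4, 32, 32) 4096 45056
    print(nearest_code([0.9, 0.1], [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]))  # 1
```

With the defaults, a 16-frame 128x128 clip becomes a 4x32x32 grid of 4096 code indices, each of which needs 11 bits for a 2048-entry codebook.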

Reconstruct videos:

```bash
python rec_video.py --video-path "assets/origin_video_0.mp4" --rec-path "rec_video_0.mp4" --num-frames 500 --sample-rate 1
python rec_video.py --video-path "assets/origin_video_1.mp4" --rec-path "rec_video_1.mp4" --resolution 196 --num-frames 600 --sample-rate 1
```

We present four reconstructed videos in this demonstration, arranged from left to right as follows:

| 3s 596x336 | 10s 256x256 | 18s 196x196 | 24s 168x96 |
|---|---|---|---|
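The reconstruction commands above mainly vary `--resolution` and `--num-frames`. As a rough aid for choosing `--num-frames` and `--sample-rate`, here is a hypothetical Python sketch of strided frame sampling. It assumes, from the flag names alone and without checking `rec_video.py`, that `--sample-rate` is the stride between kept frames and `--num-frames` the maximum number of frames kept; the function names and the 30 fps value are illustrative.

```python
from typing import List


def sampled_frame_indices(total_frames: int, num_frames: int,
                          sample_rate: int, start: int = 0) -> List[int]:
    """Frame indices kept when reading up to `num_frames` frames, one every
    `sample_rate` frames, starting at `start` (assumed flag semantics)."""
    if sample_rate < 1:
        raise ValueError("sample_rate must be >= 1")
    stop = min(total_frames, start + num_frames * sample_rate)
    return list(range(start, stop, sample_rate))


def covered_seconds(num_kept: int, sample_rate: int, fps: float) -> float:
    """Approximate span of the source video covered by the kept frames."""
    return num_kept * sample_rate / fps


if __name__ == "__main__":
    print(sampled_frame_indices(total_frames=10, num_frames=3, sample_rate=2))  # [0, 2, 4]
    # Hypothetical 30 fps source: 500 frames at stride 1 span about 16.7 s.
    print(round(covered_seconds(500, 1, 30.0), 1))  # 16.7
```

A larger stride covers a longer span with the same number of frames, at the cost of coarser motion between consecutive frames.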

Training the diffusion model (DiT):

```bash
sh scripts/train.sh
```

Sampling: coming soon.

Contributing:

We greatly appreciate your contributions to the Open-Sora Plan open-source community, which help us make it even better than it is now!

For more details, please refer to the Contribution Guidelines.

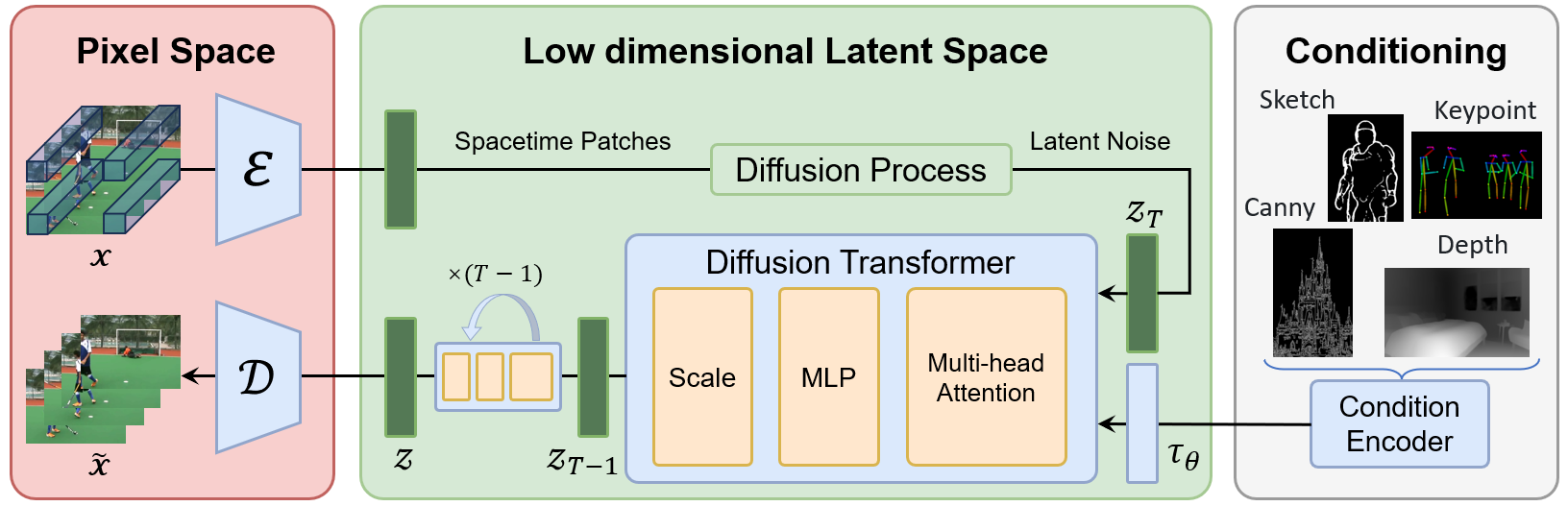

Acknowledgement:

- DiT: Scalable Diffusion Models with Transformers.

- VideoGPT: Video Generation using VQ-VAE and Transformers.

- FiT: Flexible Vision Transformer for Diffusion Model.

- Positional Interpolation: Extending Context Window of Large Language Models via Positional Interpolation.

License:

- The service is a research preview intended for non-commercial use only. See LICENSE.txt for details.