Materials for an introduction to machine learning.

- Lecture 1: Preliminaries, Feature Engineering and Feature Selection

- Lecture 2: Contemporary Linear Model Approaches

- Lecture 3: Model Assessment and Selection

- Lecture 4: Decision Trees

- Lecture 5: Artificial Neural Networks

- Lecture 6: Other Estimators: Support Vector Machines (SVM), k-Nearest-Neighbors (kNN), etc.

- Lecture 7: Decision Tree Ensembles

- Lecture 8: Convolutional Neural Networks

- Lecture 9: Clustering

- Lecture 10: Dimension Reduction

- Lecture 11: Association Rules and Recommendation

Corrections or suggestions? Please file a GitHub issue.

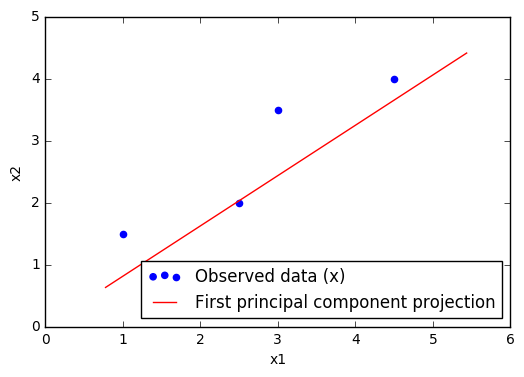

Source: Lecture 1 feature extraction example.

- Lecture Notes

- Lecture 1 Feature Engineering Table

- Assignment 1

- Software Examples:

All notebooks are also available in the notebook folder.

- Label, Segment, Featurize: a cross domain framework for prediction engineering

- Introduction to Data Mining - Sections 2.2-2.3 (Chapter 2 notes)

- Introduction to the Foundations of Causal Discovery - Sections 1-4, and 7

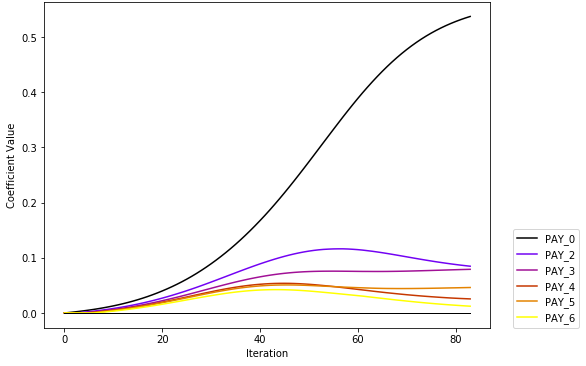

Source: From GLM to GBM: Building the Case For Complexity.

Notebooks and data are also available via GitHub.

- Elements of Statistical Learning:

  - Sections 3.1 - 3.4

  - Section 4.4

- Regularization and variable selection via the elastic net

- h2o (Python or R download, requires Java)

- Generalized Linear Model (GLM) documentation

- Generalized Linear Modeling with H2O

- elasticnet (R)

- glmnet (R)

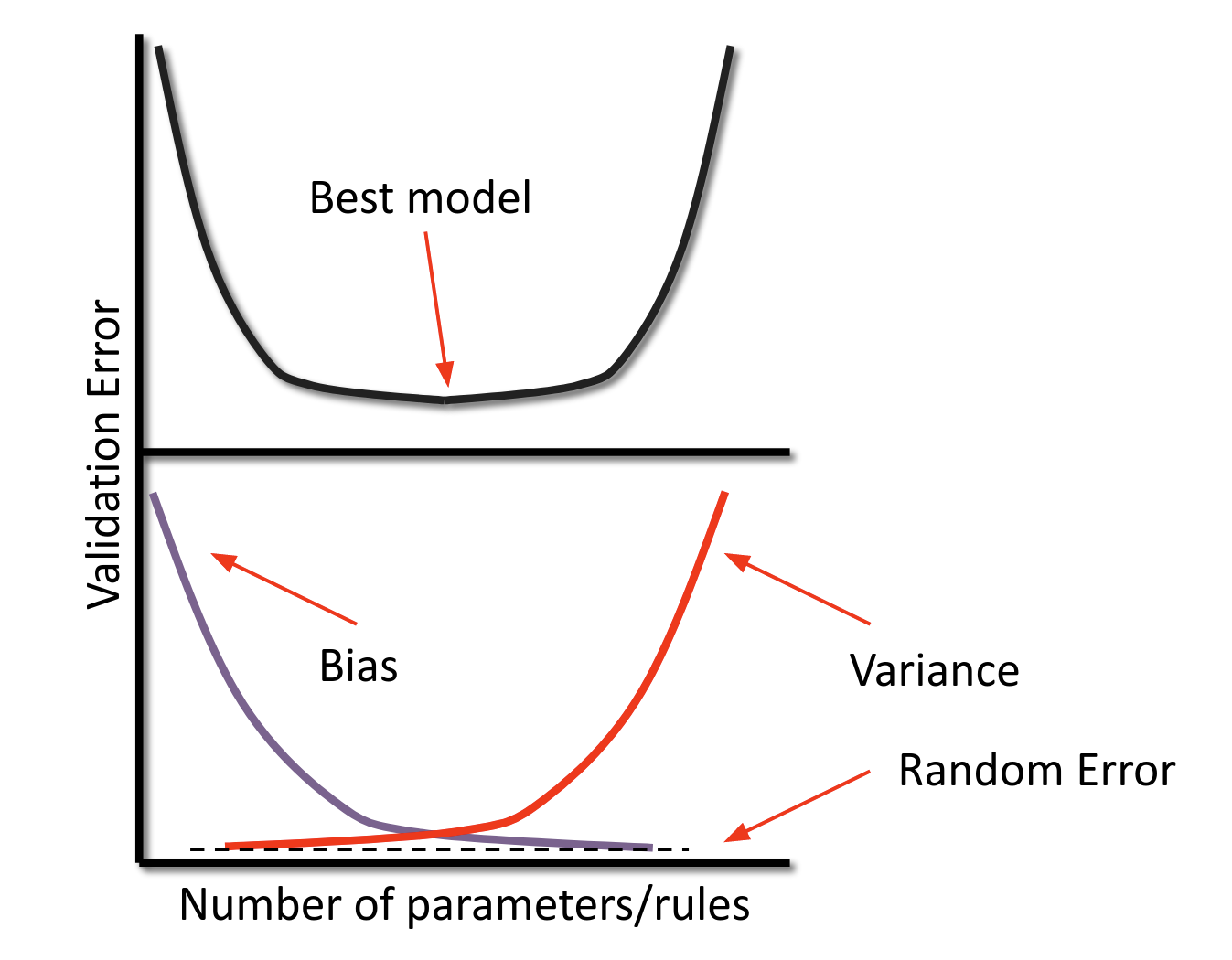

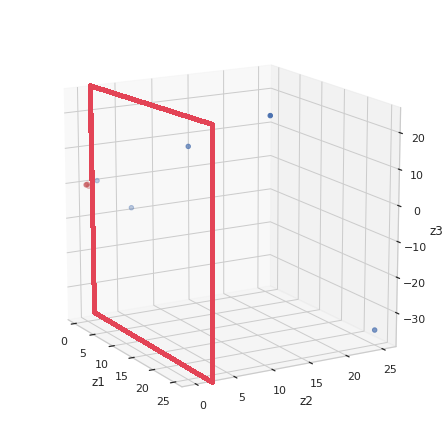

Source: From Lecture 3.

Notebooks and data are also available via GitHub.

- Elements of Statistical Learning:

  - Sections 7.1 - 7.5

  - Section 7.10

-

- Sections 3.4 - 3.6

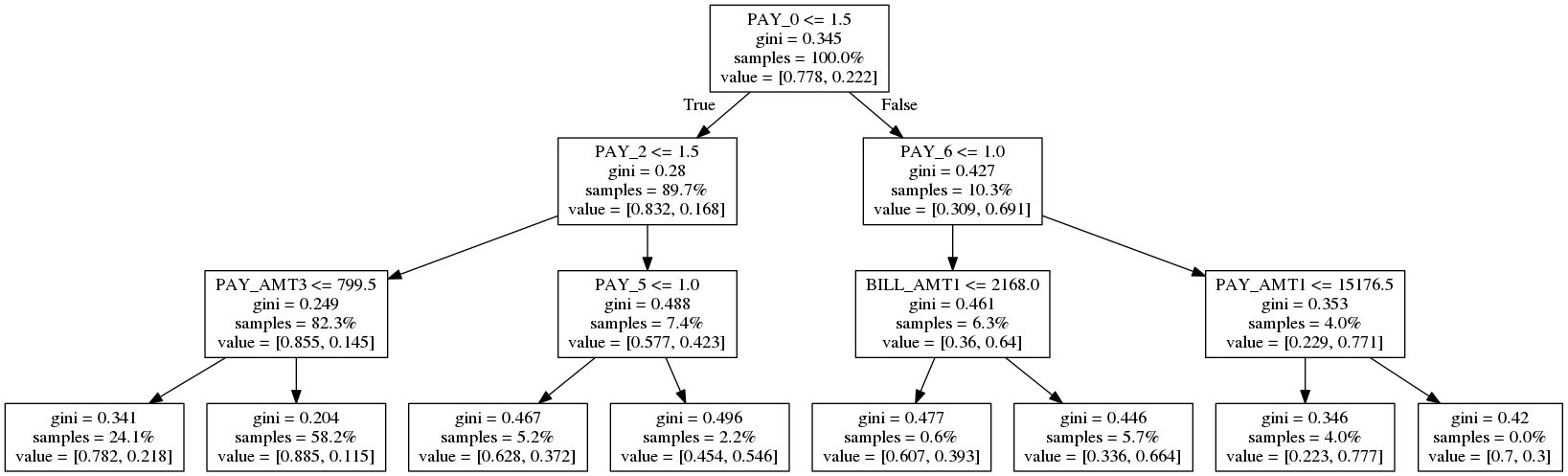

Source: Machine Learning for High-Risk Applications.

Notebooks and data are also available via GitHub.

-

- Sections 3.1 - 3.3

- Elements of Statistical Learning:

  - Section 9.2

Source: Demystifying Deep Learning, SAS Institute.

Notebooks and data are also available via GitHub.

-

- Section 4.7

- Elements of Statistical Learning:

  - Sections 11.3 - 11.7

- Neural Network Zoo

- Deep Learning with H2O

- Neural Additive Models: Interpretable Machine Learning with Neural Nets (How I would recommend training an ANN for structured data.)

- THE MNIST DATABASE

Source: From Assignment 6.

Notebooks and data are also available via GitHub.

-

- Section 4.9

- Elements of Statistical Learning:

  - Sections 12.1 - 12.3

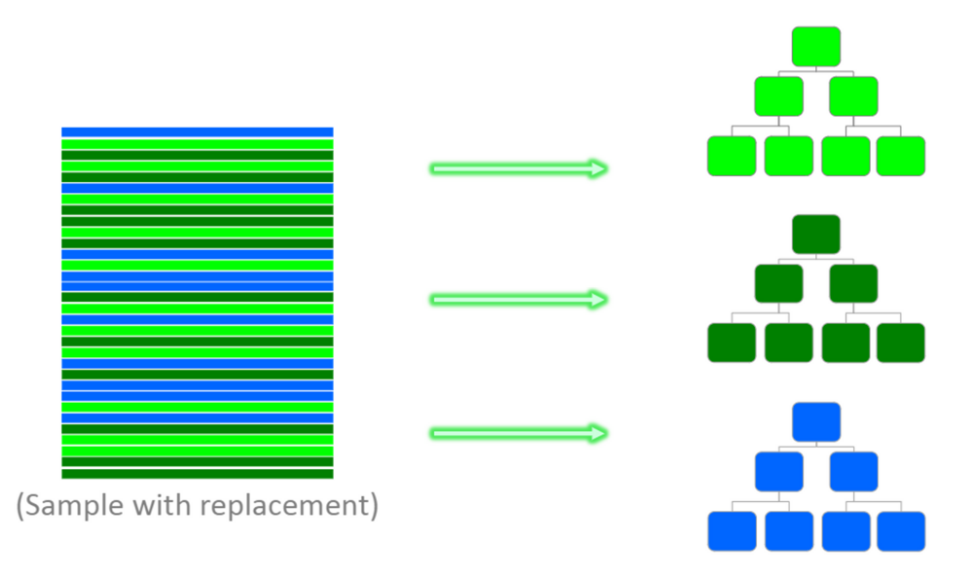

Source: From Lecture 7.

Notebooks and data are also available via GitHub.

-

- Section 4.10

- Elements of Statistical Learning:

  - Chapter 10

  - Chapter 15

- Random Forests

- Greedy Function Approximation: A Gradient Boosting Machine

- Gradient Boosting Machine with H2O

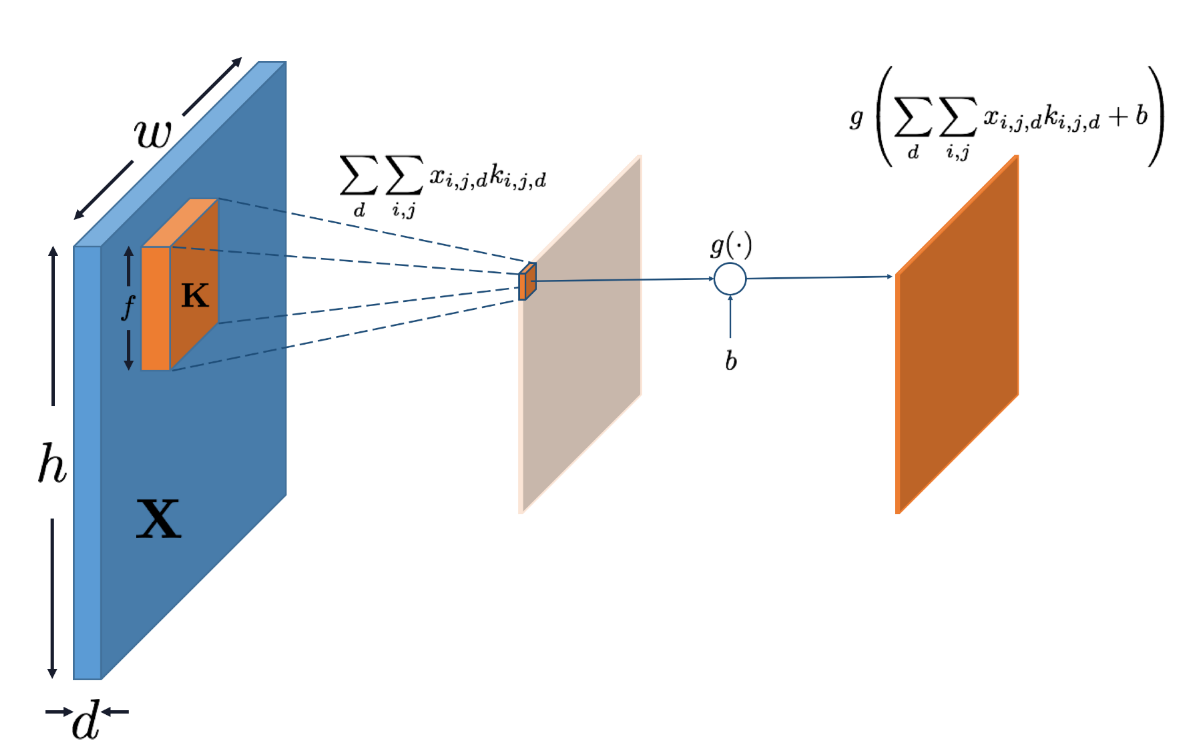

Source: From Lecture 8, with thanks to Wen Phan.

Notebooks are also available via GitHub.

-

- Section 4.8

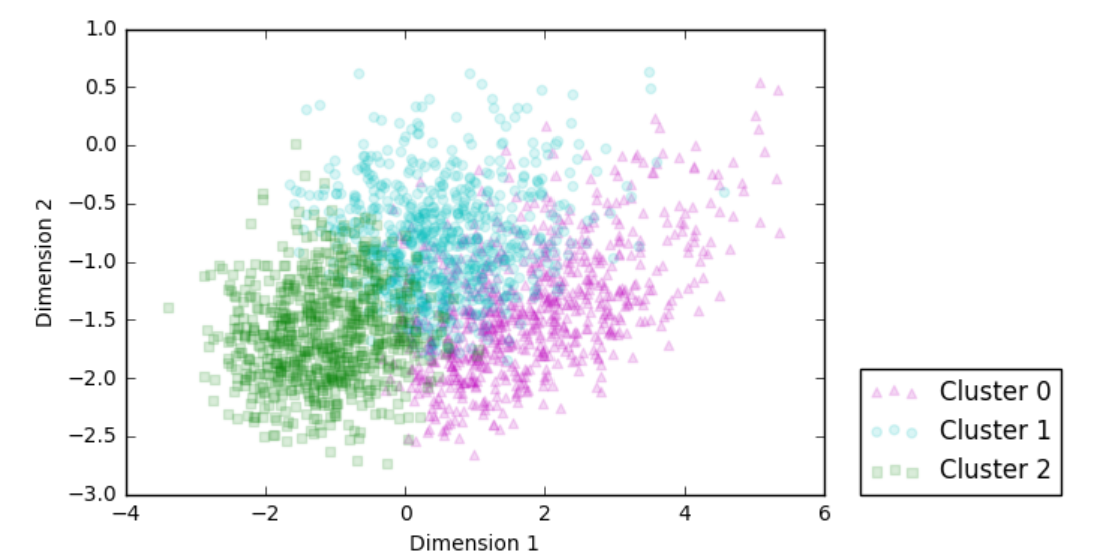

Source: From Assignment 9 Notebook.

Notebooks and data are also available via GitHub.

- Introduction to Data Mining:

  - Chapter 7, through Section 7.3

- Elements of Statistical Learning:

  - Section 14.3

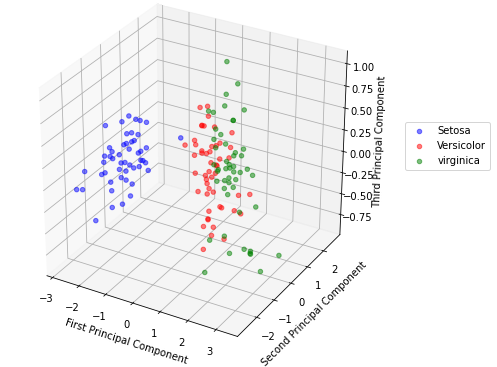

Source: From Lecture 10 Code Example.

Notebooks and data are also available via GitHub.

- Elements of Statistical Learning:

  - Sections 14.5 - 14.6

- Generalized Low Rank Models

- Near Optimal Signal Recovery From Random Projections: Universal Encoding Strategies?

Notebooks and data are also available via GitHub.

- Introduction to Data Mining, Chapter 5:

  - Sections 5.1 - 5.3, and 5.7

- Elements of Statistical Learning:

  - Sections 14.5 - 14.6