Paper: https://arxiv.org/abs/2003.03581

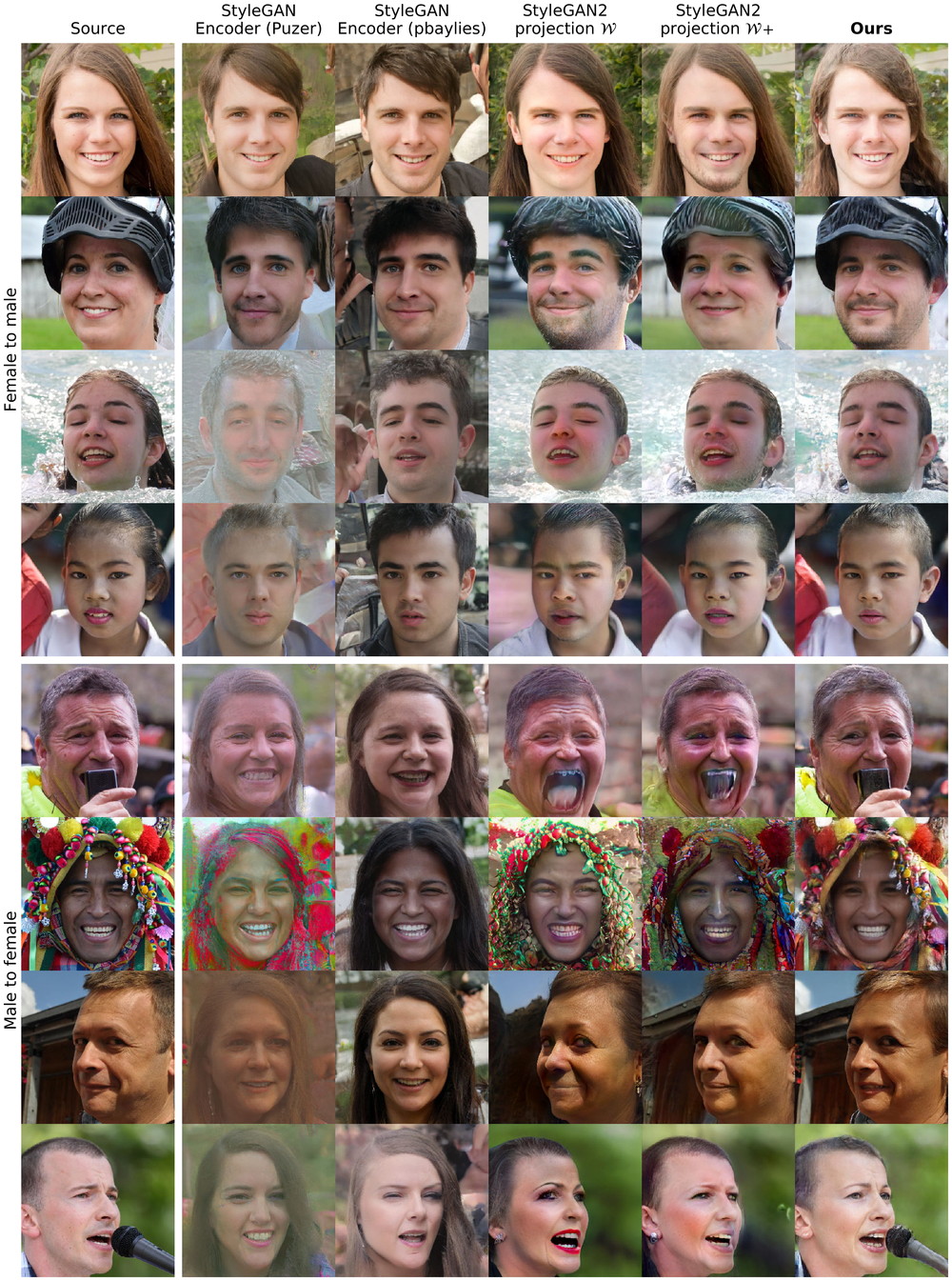

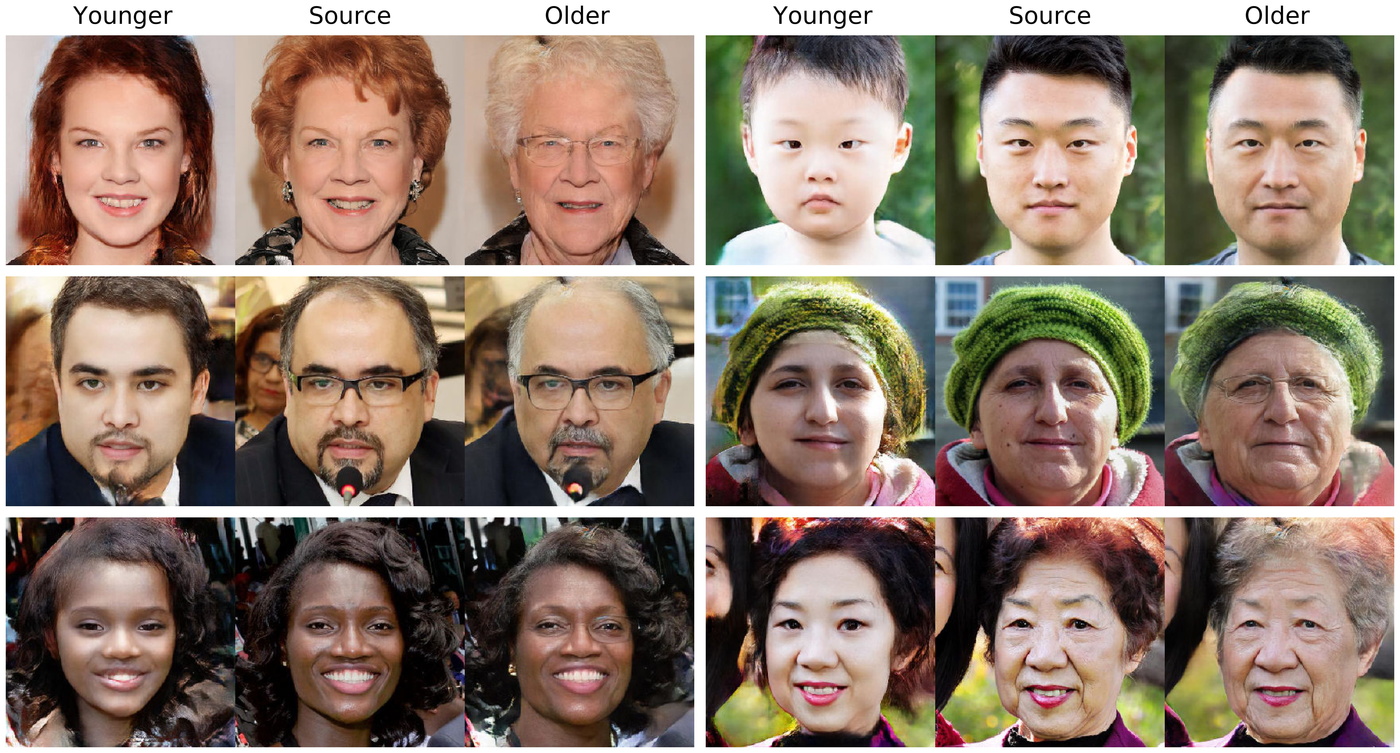

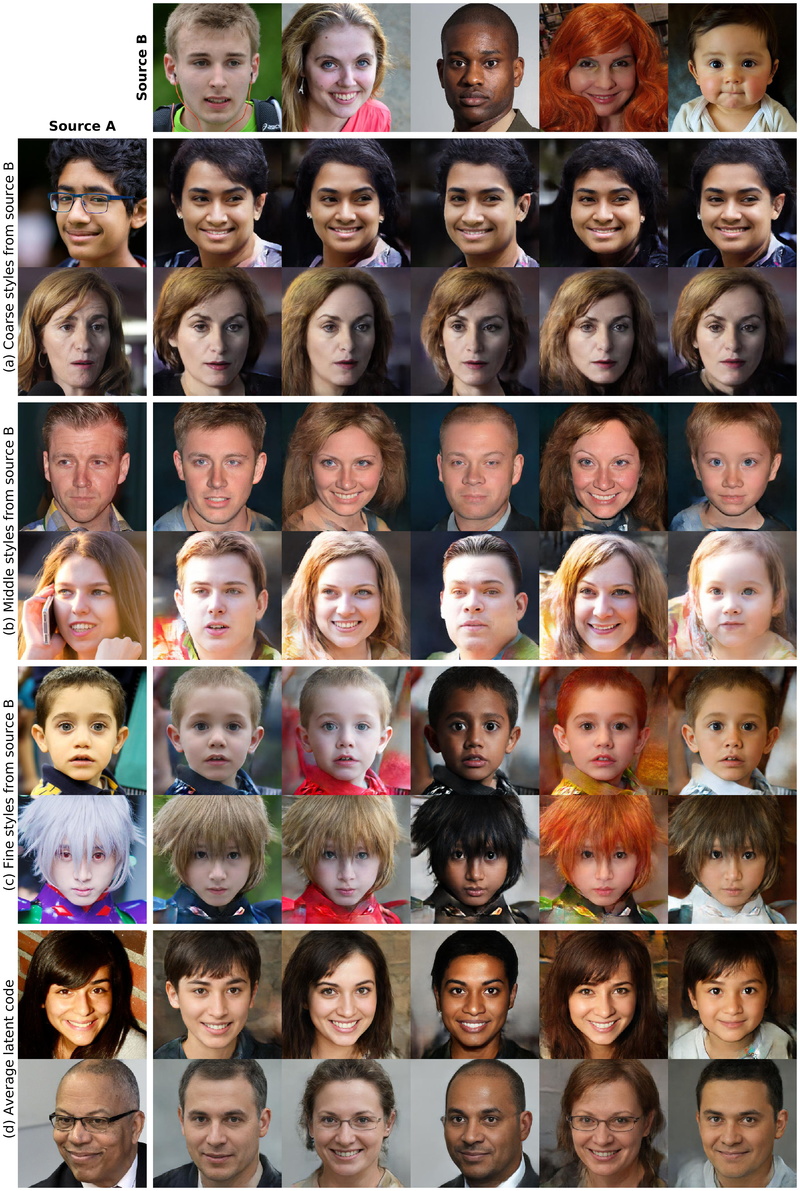

TL;DR: Paired image-to-image translation, trained on synthetic data generated by StyleGAN2, outperforms existing approaches in image manipulation.

StyleGAN2 Distillation for Feed-forward Image Manipulation

Yuri Viazovetskyi*1, Vladimir Ivashkin*1,2, and Evgeny Kashin*1

[1]Yandex, [2]Moscow Institute of Physics and Technology (* indicates equal contribution).

Abstract: StyleGAN2 is a state-of-the-art network for generating realistic images. Moreover, it was explicitly trained to have disentangled directions in latent space, which allows efficient image manipulation by varying latent factors. Editing existing images requires embedding a given image into the latent space of StyleGAN2. Latent code optimization via backpropagation is commonly used for high-quality embedding of real-world images, although it is prohibitively slow for many applications. We propose a way to distill a particular image manipulation of StyleGAN2 into an image-to-image network trained in a paired way. The resulting pipeline is an alternative to existing GANs trained on unpaired data. We provide results for transformations of human faces: gender swap, aging/rejuvenation, style transfer, and image morphing. We show that the quality of generation using our method is comparable to StyleGAN2 backpropagation and current state-of-the-art methods in these particular tasks.

- Uncurated generated examples

- Synthetic dataset for gender swap

- Pix2pixHD weights for to-female translation and for to-male translation

This code is based on the stylegan2 and pix2pixHD repos. To use it, you must install their requirements.

In the stylegan2 directory:

- Generate random images and save source dlatents vectors:

    python run_generator.py generate-images-custom --network=gdrive:networks/stylegan2-ffhq-config-f.pk --truncation-psi=0.7 --num 5000 --result-dir /mnt/generated_faces

- Predict attributes for each image with a pretrained classifier (we used an internal one)

- Use Learn_direction_in_latent_space.ipynb to find a direction in dlatents

- Alternatively, you can use publicly available vectors https://twitter.com/robertluxemburg/status/1207087801344372736 (stylegan2directions folder in root)

- Generate the dataset using a vector:

    python run_generator.py generate-images-custom --network=gdrive:networks/stylegan2-ffhq-config-f.pkl --truncation-psi=0.7 --num 50000 --result-dir /mnt/generated_ffhq_smile --direction_path ../stylegan2directions/smile.npy --coeff 1.5

- Our filtered gender dataset as an example

- Use Dataset_preprocessing.ipynb to transform the data into pix2pixHD format (TODO: make a script)
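The direction-finding and dataset-generation steps above can be sketched in NumPy. This is a minimal sketch under stated assumptions, not the notebook's code: it assumes dlatents are arrays of shape `(N, 18, 512)` (the layout of the 1024x1024 FFHQ config-f generator), that each image has a binary attribute label, and that `--coeff` scales a direction that is added to the source dlatent. The difference-of-class-means estimator and the names `find_direction` and `apply_direction` are illustrative choices.

```python
import numpy as np


def find_direction(dlatents: np.ndarray, labels: np.ndarray) -> np.ndarray:
    """Estimate an attribute direction as the difference of class means.

    dlatents: (N, 18, 512) array of source dlatents (assumed layout).
    labels:   (N,) array of 0/1 attribute predictions from a classifier.
    Returns a unit-norm direction of shape (18, 512).
    """
    mask = labels.astype(bool)
    # Mean dlatent of images with the attribute minus mean of those without.
    direction = dlatents[mask].mean(axis=0) - dlatents[~mask].mean(axis=0)
    return direction / np.linalg.norm(direction)


def apply_direction(dlatents: np.ndarray, direction: np.ndarray, coeff: float) -> np.ndarray:
    """Shift every dlatent along the direction (assumed meaning of --coeff)."""
    return dlatents + coeff * direction
```

Each source dlatent and its shifted copy would then be rendered by the generator to form one (input, target) training pair. A linear classifier fitted on the dlatents is a common alternative to the mean difference used here.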

In the pix2pixHD directory:

Training command:

    python train.py --name r512_smile_pos --label_nc 0 --dataroot /mnt/generated_ffhq_smile --tf_log --no_instance --loadSize 512 --gpu_ids 0,1 --batchSize 8
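The `--dataroot` given to the training command is expected to follow the pix2pixHD folder layout. According to the pix2pixHD README, with `--label_nc 0` the input images go in `train_A` and the target images in `train_B`. Below is a minimal sketch of such a conversion, assuming the source and edited images were saved as PNG files in two separate directories with matching file names. That input layout and the function `build_pix2pixhd_dataset` are hypothetical; this is not the content of Dataset_preprocessing.ipynb.

```python
import shutil
from pathlib import Path


def build_pix2pixhd_dataset(source_dir, edited_dir, dataroot, phase="train"):
    """Copy paired images into <dataroot>/<phase>_A and <dataroot>/<phase>_B.

    source_dir: directory with the original generated images (assumed *.png).
    edited_dir: directory with the manipulated images, same file names.
    Returns the number of pairs copied.
    """
    source_dir, edited_dir, dataroot = Path(source_dir), Path(edited_dir), Path(dataroot)
    dir_a = dataroot / f"{phase}_A"
    dir_b = dataroot / f"{phase}_B"
    dir_a.mkdir(parents=True, exist_ok=True)
    dir_b.mkdir(parents=True, exist_ok=True)

    copied = 0
    for src in sorted(source_dir.glob("*.png")):
        tgt = edited_dir / src.name
        if not tgt.exists():
            continue  # skip images without a counterpart (e.g. filtered out)
        shutil.copy2(src, dir_a / src.name)
        shutil.copy2(tgt, dir_b / src.name)
        copied += 1
    return copied
```

Keeping identical file names in both folders lets the pairs line up when the two directories are listed in sorted order.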

Testing command:

    python test.py --name r512_smile_pos --label_nc 0 --dataroot /mnt/datasets/ffhq_69000 --no_instance --loadSize 512 --gpu_ids 0,1 --batchSize 32 --how_many 100

Style mixing:

For style mixing, you need to generate mixing examples and create a third folder, C, for the pix2pixHD model. Folders A and C then contain the two different people to be mixed, and folder B contains the result. For the training script, you only need to add the --input_nc 6 parameter.
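The value 6 for `--input_nc` corresponds to two RGB images stacked along the channel axis. A minimal NumPy sketch of that stacking follows, assuming both images are channels-last arrays of the same size. How the data loader actually reads folder C is not described in this README, so `stack_pair` is purely illustrative.

```python
import numpy as np


def stack_pair(image_a: np.ndarray, image_c: np.ndarray) -> np.ndarray:
    """Concatenate two (H, W, 3) images into one (H, W, 6) network input."""
    if image_a.shape != image_c.shape or image_a.shape[-1] != 3:
        raise ValueError("expected two RGB images of identical shape (H, W, 3)")
    # Channels 0-2 hold the person from folder A, channels 3-5 the one from C.
    return np.concatenate([image_a, image_c], axis=-1)
```

The target in folder B stays a regular 3-channel image, so only the input channel count changes.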

The source code, pretrained models, and dataset will be available under the Creative Commons BY-NC 4.0 license by Yandex LLC. You can use, copy, transform, and build upon the material for non-commercial purposes as long as you give appropriate credit by citing our paper and indicate if changes were made.

@inproceedings{DBLP:conf/eccv/ViazovetskyiIK20,
  author    = {Yuri Viazovetskyi and
               Vladimir Ivashkin and
               Evgeny Kashin},
  title     = {StyleGAN2 Distillation for Feed-Forward Image Manipulation},
  booktitle = {ECCV},
  year      = {2020}
}