FATE-LLM is a framework that supports federated learning for large language models (LLMs) and small language models (SLMs).

- Federated learning for large language models (LLMs) and small language models (SLMs).

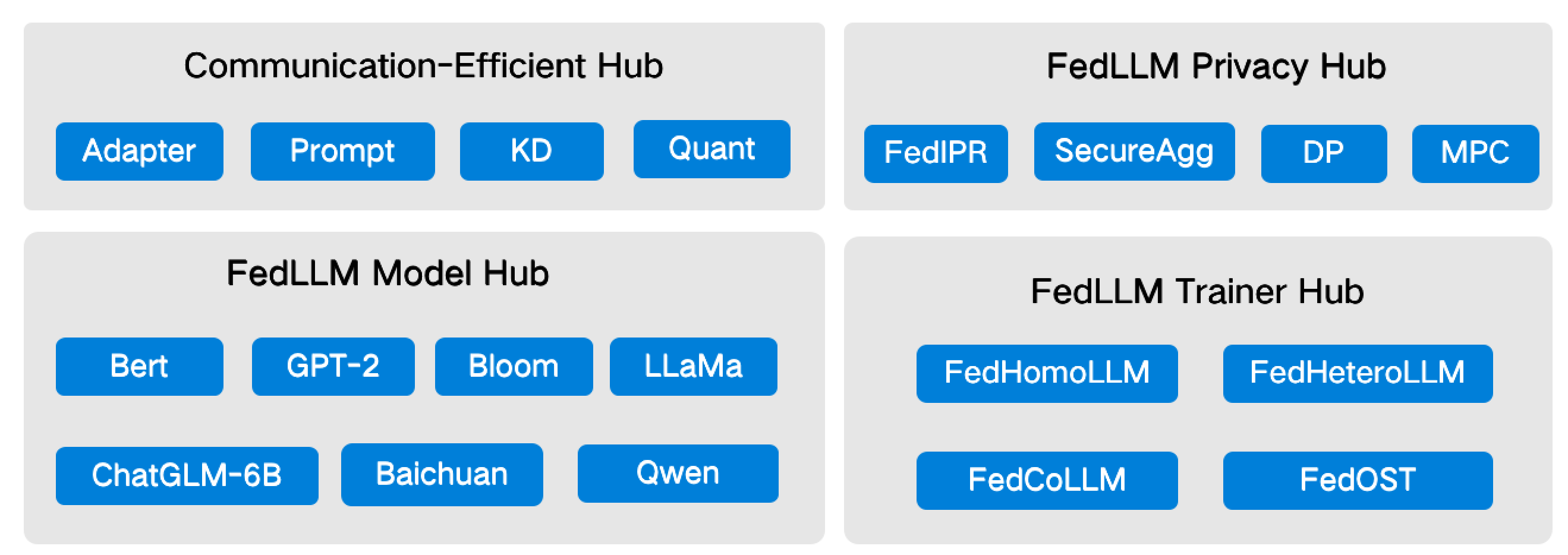

- Improve the training efficiency of federated LLMs using parameter-efficient methods.

- Protect the intellectual property (IP) of LLMs using FedIPR.

- Protect data privacy during training and inference through privacy-preserving mechanisms.
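To give a sense of why parameter-efficient methods can reduce the cost of federated fine-tuning, the sketch below counts trainable parameters for a single weight matrix under full fine-tuning versus a low-rank adapter. LoRA is used here only as one well-known example of a parameter-efficient method; the function names, the 4096 x 4096 layer size, and the rank of 8 are illustrative assumptions, not values taken from FATE-LLM.

```python
def full_finetune_params(d_out: int, d_in: int) -> int:
    """Trainable parameters when the whole d_out x d_in weight matrix is updated."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters for a low-rank update B @ A,
    where B is d_out x rank and A is rank x d_in."""
    return rank * (d_out + d_in)


# Illustrative sizes (assumptions, not FATE-LLM defaults).
D_OUT, D_IN, RANK = 4096, 4096, 8

full = full_finetune_params(D_OUT, D_IN)
lora = lora_params(D_OUT, D_IN, RANK)

print(f"full fine-tuning: {full:,} parameters")
print(f"low-rank adapter: {lora:,} parameters")
print(f"fraction of the layer that is trained: {lora / full:.4%}")
```

Under these assumptions, a party that trains and exchanges only the adapter weights handles a small fraction of the parameters in that layer, which is the intuition behind using parameter-efficient methods in a federated setting.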

- To deploy FATE-LLM v2.2.0 or higher, three deployment methods are provided (see the deploy tutorial for details), including:

  - Deploy FATE only from PyPI, then use Launcher to run tasks.

  - Deploy FATE, FATE-Flow, and FATE-Client from PyPI; tasks can then be run with Pipeline.

- To deploy lower versions, refer to FATE-Standalone deployment.

  - To deploy FATE-LLM v2.0.* through v2.1.*, deploy FATE-Standalone with version >= 2.1, then create a new directory `{fate_install}/fate_llm`, clone the code into it, install the Python requirements, and add `{fate_install}/fate_llm/python` to `PYTHONPATH`.

  - To deploy FATE-LLM v1.x, deploy FATE-Standalone with 1.11.3 <= version < 2.0, then copy the directory `python/fate_llm` to `{fate_install}/fate/python/fate_llm`.
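The version rules above can be summarized as a small lookup. The sketch below is only an illustration: `parse_version`, `deployment_route`, `pythonpath_entry`, and `v1_copy_target` are hypothetical helper names, not part of FATE or FATE-LLM, and `/opt/fate` is a made-up stand-in for `{fate_install}`.

```python
from pathlib import PurePosixPath


def parse_version(version: str) -> tuple:
    """Turn 'v2.1.0' or '2.1.0' into a comparable tuple such as (2, 1, 0)."""
    return tuple(int(part) for part in version.lstrip("v").split("."))


def deployment_route(fate_llm_version: str) -> str:
    """Hypothetical helper: map a FATE-LLM version to the route described above."""
    v = parse_version(fate_llm_version)
    if v >= (2, 2, 0):
        return "install from PyPI (see the deploy tutorial)"
    if v >= (2, 0):
        return "FATE-Standalone >= 2.1, clone into {fate_install}/fate_llm"
    if v >= (1,):
        return "FATE-Standalone >= 1.11.3 and < 2.0, copy python/fate_llm"
    raise ValueError(f"no deployment route described for {fate_llm_version}")


def pythonpath_entry(fate_install: str) -> PurePosixPath:
    """Directory to add to PYTHONPATH for FATE-LLM v2.0.* through v2.1.*."""
    return PurePosixPath(fate_install) / "fate_llm" / "python"


def v1_copy_target(fate_install: str) -> PurePosixPath:
    """Destination for the python/fate_llm directory when deploying FATE-LLM v1.x."""
    return PurePosixPath(fate_install) / "fate" / "python" / "fate_llm"


# '/opt/fate' is an assumed install location used only for illustration.
print(deployment_route("v2.1.0"))
print(pythonpath_entry("/opt/fate"))
print(v1_copy_target("/opt/fate"))
```

The helpers only compute strings and paths; they do not install anything, so the actual deployment still follows the referenced deployment guides.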

For cluster deployment, use the FATE-LLM deployment packages; refer to FATE-Cluster deployment for more details.

- Federated ChatGLM3-6B Training

- Builtin Models In PELLM

- Offsite Tuning: Transfer Learning without Full Model

- FedKSeed: Federated Full-Parameter Tuning of Billion-Sized Language Models with Communication Cost under 18 Kilobytes

- InferDPT: Privacy-preserving Inference for Black-box Large Language Models

- FedMKT: Federated Mutual Knowledge Transfer for Large and Small Language Models

- PDSS: A Privacy-Preserving Framework for Step-by-Step Distillation of Large Language Models

- FDKT: Federated Domain-Specific Knowledge Transfer on Large Language Models Using Synthetic Data

If you publish work that uses FATE-LLM, please cite FATE-LLM as follows:

    @article{fan2023fate,
      title={Fate-llm: A industrial grade federated learning framework for large language models},
      author={Fan, Tao and Kang, Yan and Ma, Guoqiang and Chen, Weijing and Wei, Wenbin and Fan, Lixin and Yang, Qiang},
      journal={Symposium on Advances and Open Problems in Large Language Models (LLM@IJCAI'23)},
      year={2023}
    }