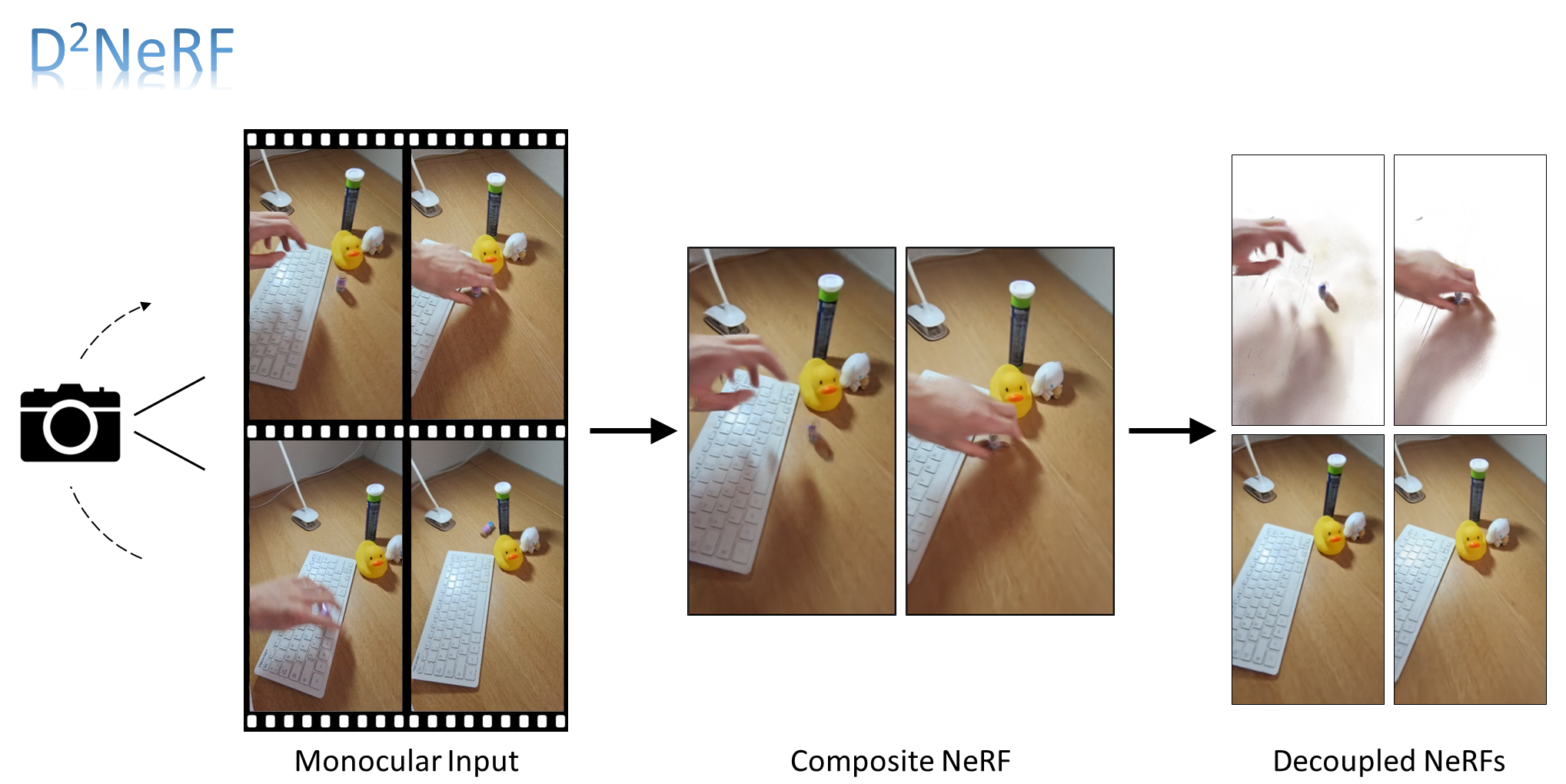

This is the code for "D2NeRF: Self-Supervised Decoupling of Dynamic and Static Objects from a Monocular Video".

This codebase implements D2NeRF on top of HyperNeRF.

The code can be run under any environment with Python 3.8 and above.

Firstly, set up an environment via Miniconda or Anaconda:

conda create --name d2nerf python=3.8

Next, install the required packages:

pip install -r requirements.txt

Install the appropriate JAX distribution for your environment by following the instructions here. For example:

pip install --upgrade "jax[cuda]" -f https://storage.googleapis.com/jax-releases/jax_releases.html
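After installing, it can help to confirm which backend JAX actually picked up before starting a long training run. The following is a minimal sketch and not part of this repository; the helper name `describe_jax_backend` is hypothetical, and it only uses `jax.default_backend()` and `jax.devices()`.

```python
def describe_jax_backend():
    """Return a short description of the backend and devices JAX will use."""
    try:
        import jax
    except ImportError:
        # JAX is missing from the active environment.
        return "jax is not installed"
    # default_backend() is e.g. "cpu" or "gpu"; devices() lists what JAX can see.
    kinds = [device.device_kind for device in jax.devices()]
    return f"{jax.default_backend()}: {kinds}"


if __name__ == "__main__":
    print(describe_jax_backend())
```

If the reported backend is `cpu` on a machine with a GPU, the installed JAX distribution probably does not match the local CUDA setup.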

Please download our dataset here.

After unzipping the data, you can train with the following command:

export DATASET_PATH=/path/to/dataset
export EXPERIMENT_PATH=/path/to/save/experiment/to
export CONFIG_PATH=configs/rl/001.gin
python train.py \
    --base_folder $EXPERIMENT_PATH \
    --gin_bindings="data_dir='$DATASET_PATH'" \
    --gin_configs $CONFIG_PATH

To plot telemetry to Tensorboard and render checkpoints on the fly, also launch an evaluation job by running:

python eval.py \
    --base_folder $EXPERIMENT_PATH \
    --gin_bindings="data_dir='$DATASET_PATH'" \
    --gin_configs $CONFIG_PATH
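The training and evaluation commands differ only in the script name, so both can be generated from the same three paths. Below is a small sketch of that idea; `build_command` is a hypothetical helper, not something shipped with this repository, and it uses only the flags shown above.

```python
import shlex


def build_command(script, experiment_path, dataset_path, config_path):
    """Return the argument list for train.py or eval.py."""
    return [
        "python", script,
        "--base_folder", experiment_path,
        # Gin expects the string value to be quoted inside the binding.
        f"--gin_bindings=data_dir='{dataset_path}'",
        "--gin_configs", config_path,
    ]


if __name__ == "__main__":
    for script in ("train.py", "eval.py"):
        command = build_command(
            script,
            "/path/to/save/experiment/to",
            "/path/to/dataset",
            "configs/rl/001.gin",
        )
        # Print a shell-safe version of each command for inspection.
        print(shlex.join(command))
```

Each returned list can be passed to `subprocess.Popen` to run the training and evaluation jobs side by side.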

We also provide an example script at train_eval_balloon.sh.

- Similar to HyperNeRF, we use Gin for configuration.

- We provide a couple of preset configurations:

  - configs/decompose/: template configurations defining shared configurations for NeRF and HyperNeRF.
  - configs/rl/: configurations for experiments on real-life scenes.
  - configs/synthetic/: configurations for experiments on synthetic scenes.

- Please refer to config.py for documentation on what each configuration does.

The dataset uses the same format as Nerfies.
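A quick layout check can catch a badly unzipped or incomplete dataset before training starts. The sketch below assumes the layout described in the Nerfies README (`dataset.json`, `metadata.json`, `scene.json`, a `camera/` folder with one JSON file per id, and an `rgb/` folder); these names are assumptions taken from that README rather than verified against this repository, and `check_dataset` is a hypothetical helper.

```python
import json
from pathlib import Path

# Assumed from the Nerfies README; adjust if your dataset differs.
EXPECTED_FILES = ("dataset.json", "metadata.json", "scene.json")
EXPECTED_DIRS = ("camera", "rgb")


def check_dataset(root):
    """Return a list of problems found; an empty list means the layout looks right."""
    root = Path(root)
    problems = []
    for name in EXPECTED_FILES:
        if not (root / name).is_file():
            problems.append(f"missing file: {name}")
    for name in EXPECTED_DIRS:
        if not (root / name).is_dir():
            problems.append(f"missing directory: {name}")
    dataset_json = root / "dataset.json"
    if dataset_json.is_file():
        # Every id listed in dataset.json should have a matching camera file.
        ids = json.loads(dataset_json.read_text()).get("ids", [])
        for item_id in ids:
            if not (root / "camera" / f"{item_id}.json").is_file():
                problems.append(f"missing camera for id: {item_id}")
    return problems
```

Calling `check_dataset("/path/to/dataset")` returns the list of problems, which can be printed or asserted empty before launching a job.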

For synthetic scenes generated using Kubric, we also provide the worker script, named script.py, under each folder.

We include several pre-trained model checkpoints which can be downloaded from here. Please use the config.gin files included in each subfolder for evaluation of the model checkpoints.

Our code is fully compatible with the HyperNeRF dataset format, so, thanks to the HyperNeRF authors, you can use their Colab notebook to process your own video and prepare a dataset for training.