[2021-07-07]: Update the OSFD code at 3R/code/OSFD/.

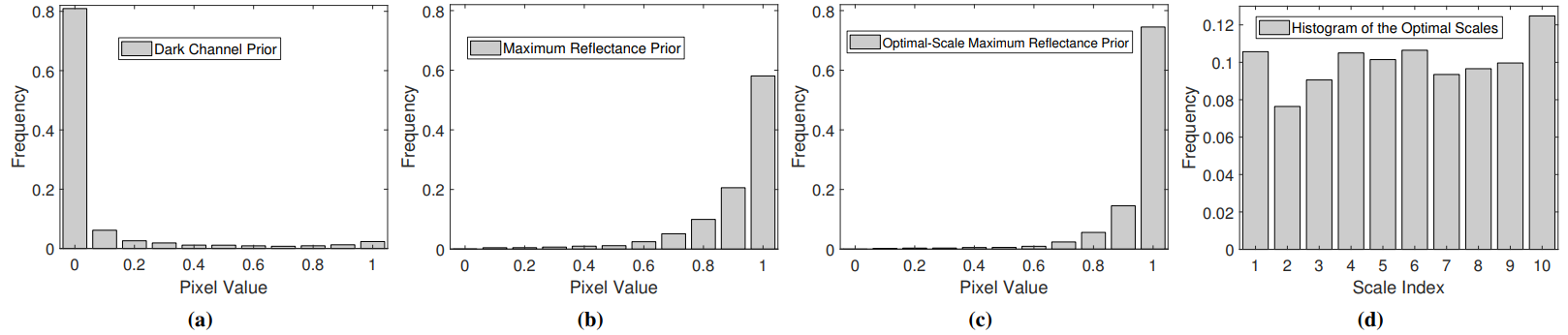

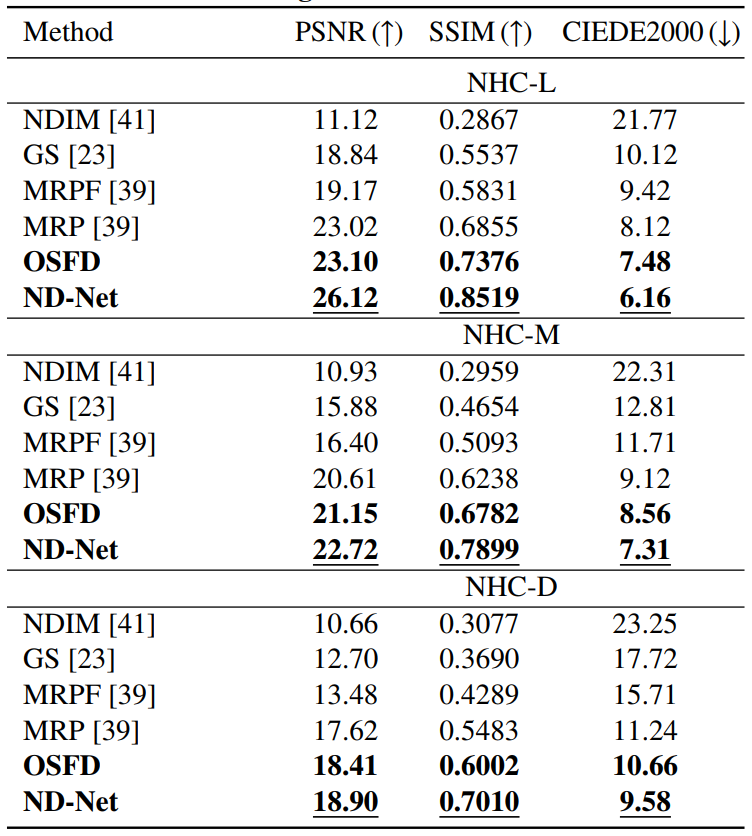

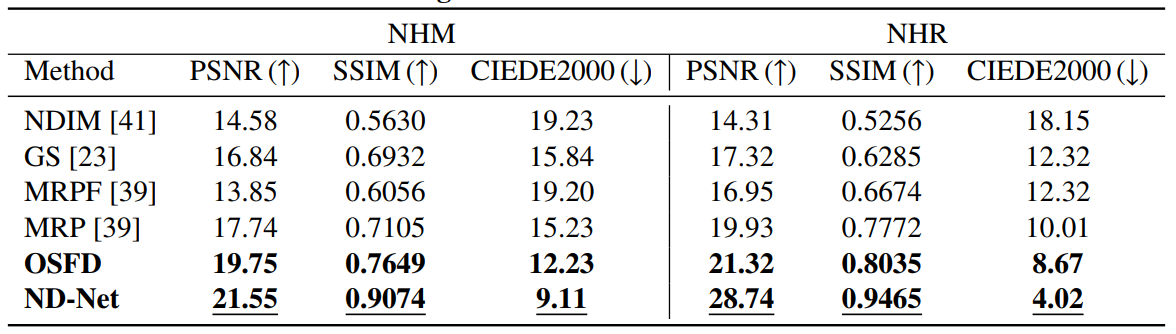

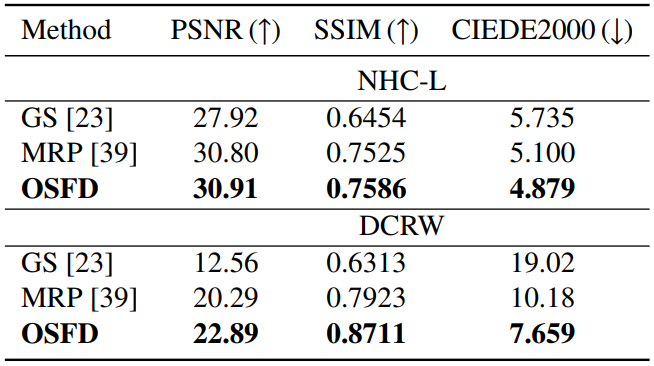

This repository contains the code, datasets, models, and test results for the paper Nighttime Dehazing with a Synthetic Benchmark (Arxiv). Improving the visibility of nighttime hazy images is challenging because of uneven illumination from active artificial light sources and absorption and scattering by haze. The absence of large-scale benchmark datasets hampers progress in this area. To address this issue, we propose a novel synthetic method called 3R to simulate nighttime hazy images from daytime clear images: it first reconstructs the scene geometry, then simulates the light rays and object reflectance, and finally renders the haze effects. Based on it, we generate realistic nighttime hazy images by sampling real-world light colors from a prior empirical distribution. Experiments on the synthetic benchmark show that the degrading factors jointly reduce the image quality. To handle them, we propose an optimal-scale maximum reflectance prior (OS-MRP) that disentangles color correction from haze removal and addresses them sequentially. We also devise a simple but effective learning-based baseline (ND-Net), which has an encoder-decoder structure based on the MobileNet-v2 backbone. Experimental results demonstrate their superiority over state-of-the-art methods in terms of both image quality and runtime.

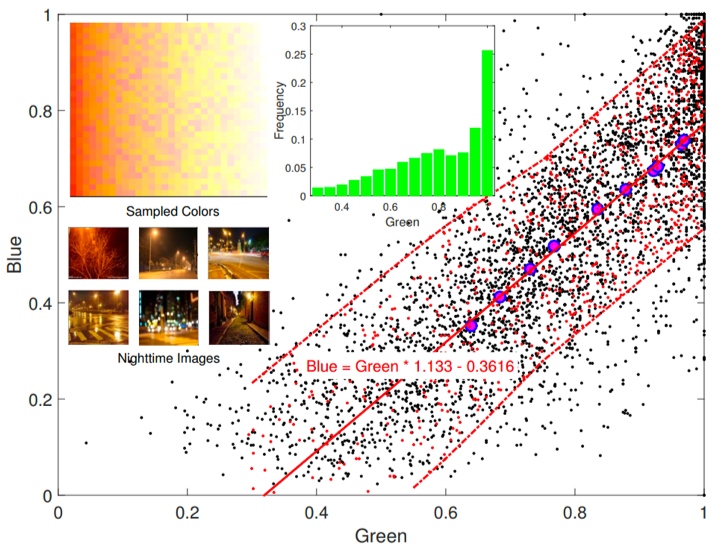

Since no large-scale dataset of nighttime hazy images is publicly available, we propose a novel synthetic method named 3R, which can generate realistic nighttime hazy images. First, we carry out an empirical study on real-world light colors and obtain an empirical equation relating the green and blue channels of light colors, along with an empirical distribution of green channel values, as shown in Figure 2. Based on them, we can sample realistic light colors, as shown in the top-left subfigure.
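The sampling idea can be illustrated with a minimal sketch. It assumes the green-channel distribution is available as a histogram and that the green-to-blue relation is linear. The function name, the histogram, the coefficients, and the saturated red channel are all placeholders for illustration, not the values or the equation fitted in the paper.

```python
import random


def sample_light_color(green_bins, green_weights, slope, intercept, rng=random):
    """Draw one (r, g, b) light color with channels in [0, 1].

    green_bins / green_weights: a discretized empirical distribution of
    green-channel values (hypothetical inputs; fit them on real data).
    slope / intercept: coefficients of an ASSUMED linear green-to-blue
    relation (placeholders, not the paper's fitted equation).
    """
    # Pick a histogram bin according to its empirical weight.
    lo, hi = rng.choices(green_bins, weights=green_weights, k=1)[0]
    # Sample uniformly inside the chosen bin.
    g = rng.uniform(lo, hi)
    # Map green to blue with the assumed relation, clamped to [0, 1].
    b = min(1.0, max(0.0, slope * g + intercept))
    # Assumption: the red channel is saturated for warm artificial lights.
    r = 1.0
    return (r, g, b)


# Usage with made-up numbers; replace them with statistics from real lights.
bins = [(0.4, 0.6), (0.6, 0.8), (0.8, 1.0)]
weights = [1, 3, 2]
color = sample_light_color(bins, weights, slope=1.2, intercept=-0.3)
```

Keeping the distribution and the coefficients as arguments makes it easy to swap in the real empirical statistics once they are available.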

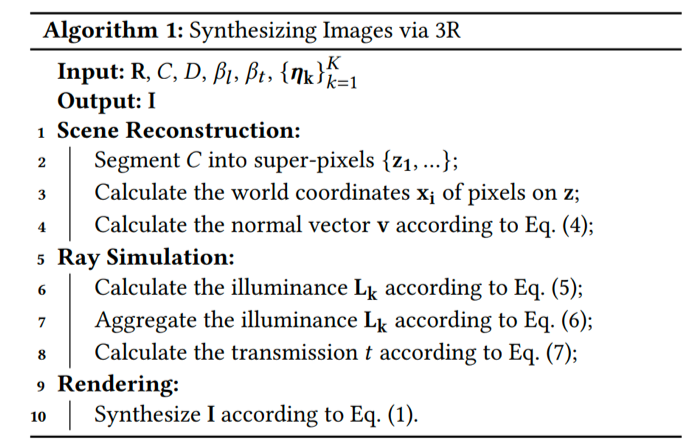

Then, we propose the 3R method, which simulates light rays in the scene and renders the nighttime hazy images based on the reconstructed scene geometry.
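As a rough illustration of the final rendering step only, the sketch below applies the standard atmospheric scattering model, I = J * t + A * (1 - t) with t = exp(-beta * depth), to an image that has already been relit. This is a generic stand-in rather than the 3R code in this repository. The function name, the default `beta`, the depth unit, and the choice to black out pixels with unreliable depth (such as the sky) are assumptions.

```python
import numpy as np


def render_haze(clear, depth, airlight, beta=0.01, sky_mask=None):
    """Apply I = J * t + A * (1 - t) with t = exp(-beta * depth).

    clear:    H x W x 3 float image in [0, 1] (assumed already relit for night).
    depth:    H x W depth map (assumed to be in meters).
    airlight: length-3 color or H x W x 3 map of scattered light.
    beta:     scattering coefficient; larger values give denser haze.
    sky_mask: optional H x W boolean map; True pixels are set to zero
              (an assumed way of masking regions with unreliable depth).
    """
    # Transmission decays exponentially with distance; shape H x W x 1.
    t = np.exp(-beta * np.asarray(depth, dtype=float))[..., None]
    # Blend attenuated scene radiance with the scattered light.
    hazy = clear * t + np.asarray(airlight, dtype=float) * (1.0 - t)
    if sky_mask is not None:
        hazy = np.where(sky_mask[..., None], 0.0, hazy)
    return np.clip(hazy, 0.0, 1.0)


# Usage on a tiny synthetic scene: near pixels stay clear, far pixels wash out.
clear = np.full((2, 2, 3), 0.5)
depth = np.array([[0.0, 100.0], [0.0, 100.0]])
hazy = render_haze(clear, depth, airlight=[0.8, 0.7, 0.6], beta=0.01)
```

Because the transmission shrinks with depth, distant pixels move toward the airlight color, which is why contrast drops most in far regions.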

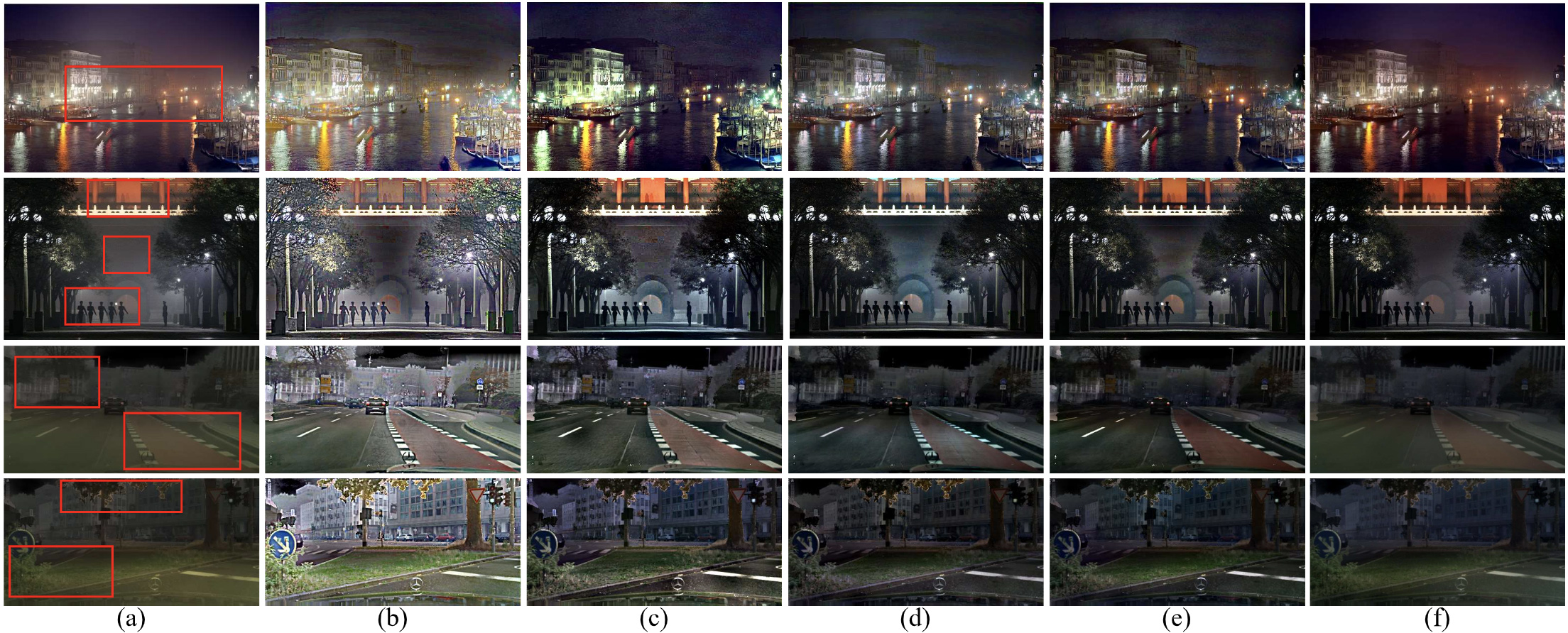

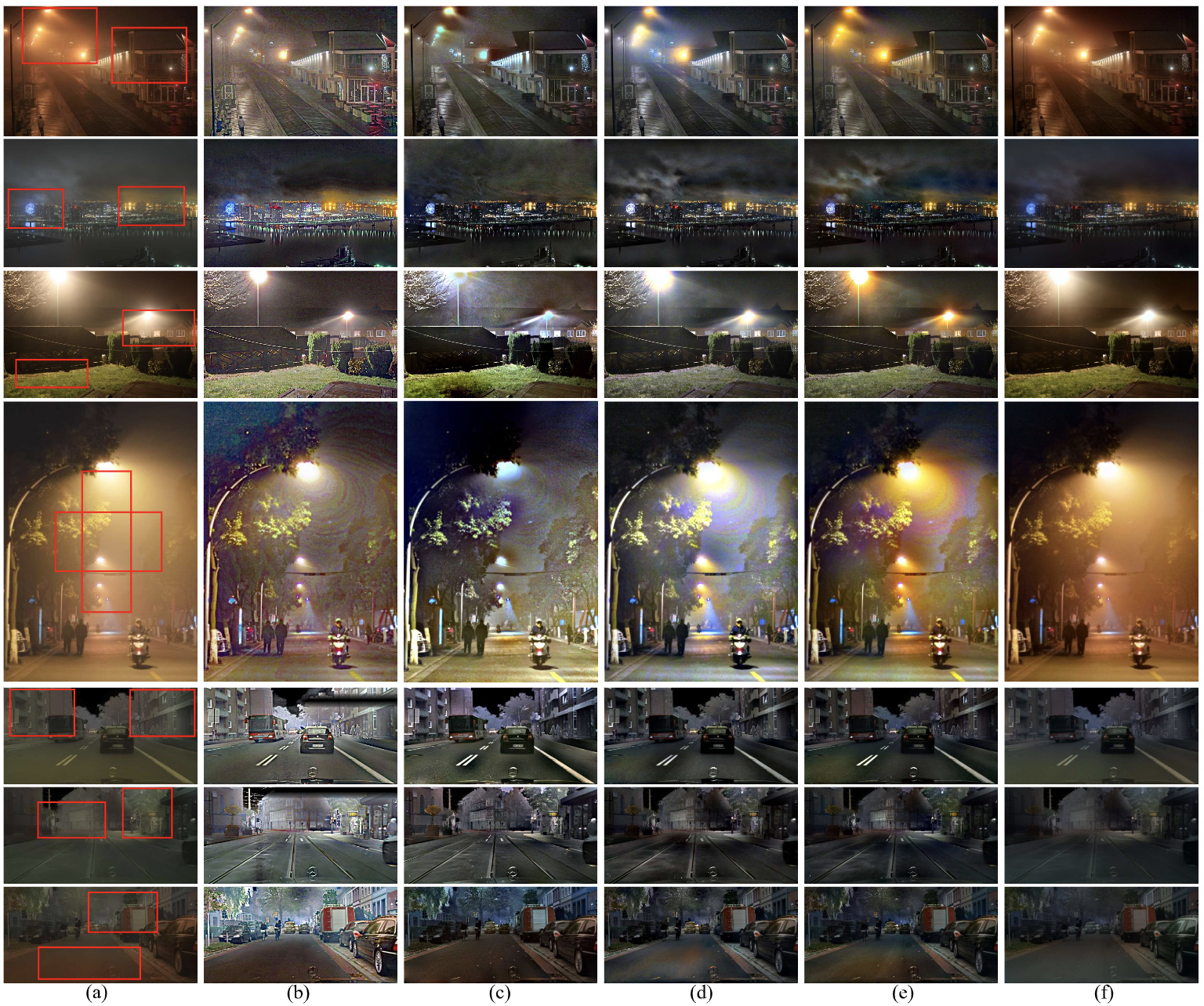

As can be seen from the walls, roads, and cars in Figure 3(c), the illuminance is more realistic than in Figure 3(b), since 3R leverages the scene geometry and real-world light colors. The haze further reduces image contrast, especially in distant regions. Note that we mask out the sky region because its depth values are inaccurate. 3R also generates some intermediate results such as L,

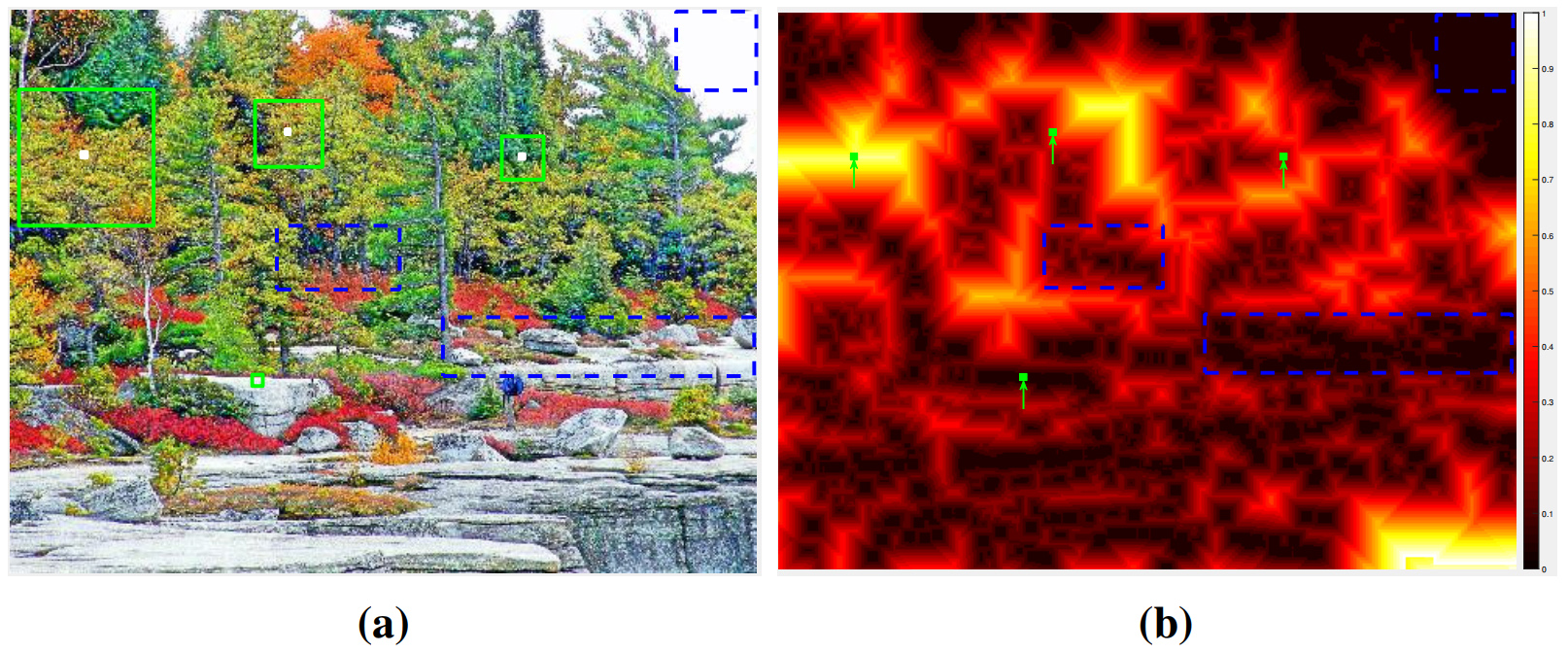

In this paper, we introduce a novel prior named Optimal-Scale Maximum Reflectance Prior (OS-MRP). It extends MRP to the multi-scale case and is more effective. The optimal scale is defined as the scale that is just large enough to attain the highest maximum reflectance probability, so that enlarging it further is unnecessary. As can be seen from Figure 4, because image content is diverse, the optimal scale differs across local patches. For a local patch with diverse colors, the optimal scale should be small.

Based on this definition, OS-MRP states that the maximum reflectance is always close to one within each local patch at its optimal scale.
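A simplified sketch of how such a prior might be used is given below. If the maximum reflectance in a patch is close to one, the per-channel local maximum of the image approximates the local illumination color. The sketch computes that maximum at several window sizes and keeps, per pixel, the smallest size whose result is within a tolerance of the largest one. This selection rule, the function names, the scales, and the tolerance are assumptions for illustration. It is not the OSFD code in this repository.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def local_max(img, k):
    """Per-channel maximum over a k x k window (k odd), same size as img."""
    pad = k // 2
    padded = np.pad(img, ((pad, pad), (pad, pad), (0, 0)), mode="edge")
    # Resulting shape: H x W x 3 x k x k.
    windows = sliding_window_view(padded, (k, k), axis=(0, 1))
    return windows.max(axis=(-2, -1))


def optimal_scale_max(img, scales=(3, 7, 15), tol=0.02):
    """Return the local max at a per-pixel scale, plus the chosen scale map.

    A scale qualifies at a pixel when its local max is within `tol` of the
    largest scale's local max in every channel (an assumed criterion).
    """
    scales = sorted(scales)
    maxima = [local_max(img, k) for k in scales]  # each H x W x 3
    ref = maxima[-1]
    best = ref.copy()
    scale_map = np.full(img.shape[:2], scales[-1])
    # Walk from large to small so the smallest qualifying scale wins.
    for k, m in zip(reversed(scales[:-1]), reversed(maxima[:-1])):
        ok = np.all(ref - m <= tol, axis=-1)
        best[ok] = m[ok]
        scale_map[ok] = k
    return best, scale_map


# Usage: a single bright pixel on a dark background.
img = np.zeros((9, 9, 3))
img[4, 4] = 1.0
illum, scale_map = optimal_scale_max(img, scales=(3, 5))
```

In this toy rule, a pixel gets a small scale when its immediate neighborhood already contains the brightest values in every channel, which matches the intuition that patches with diverse colors need only a small scale.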

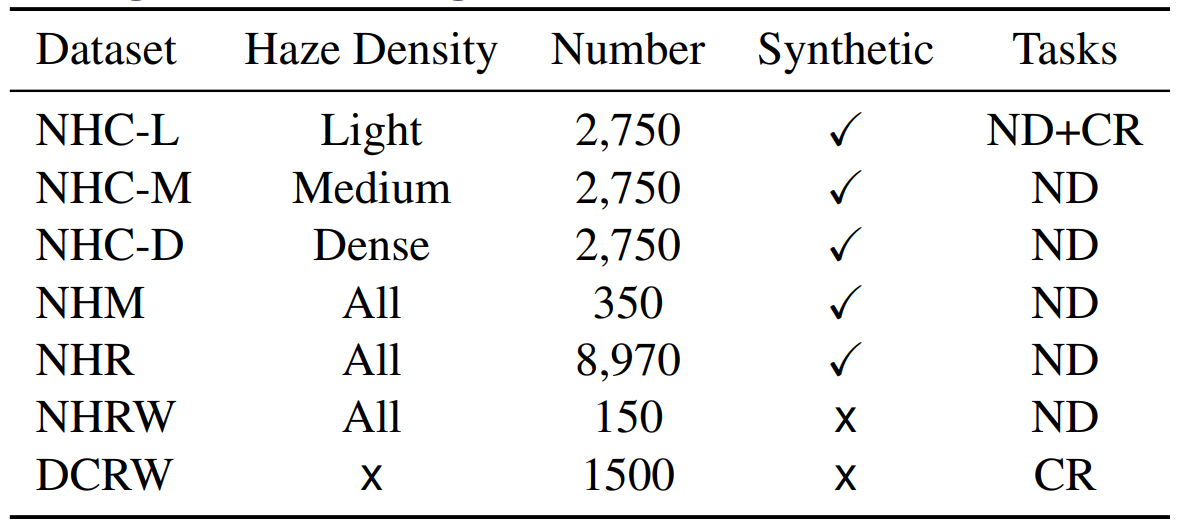

Following [29], 550 clear images were selected from Cityscapes [11] to synthesize nighttime hazy images using 3R. We synthesized 5 images for each of them by changing the light positions and colors, resulting in a total of 2,750 images, called "Nighttime Hazy Cityscapes" (NHC). We also altered the haze density by setting

The datasets can be downloaded from GoogleDrive or BaiduDisk (code: is11). NHC-L, NHC-M, and NHC-D can be generated by the 3R code in this repository.

Please cite our paper in your publications if it helps your research:

    @inproceedings{zhang2020nighttime,
      title={Nighttime Dehazing with a Synthetic Benchmark},
      author={Zhang, Jing and Cao, Yang and Zha, Zheng-Jun and Tao, Dacheng},
      booktitle={Proceedings of the 28th ACM International Conference on Multimedia},
      pages={2355--2363},
      year={2020}
    }

Please direct all correspondence to the first author (Jing Zhang: jing.zhang1ATsydney.edu.au).