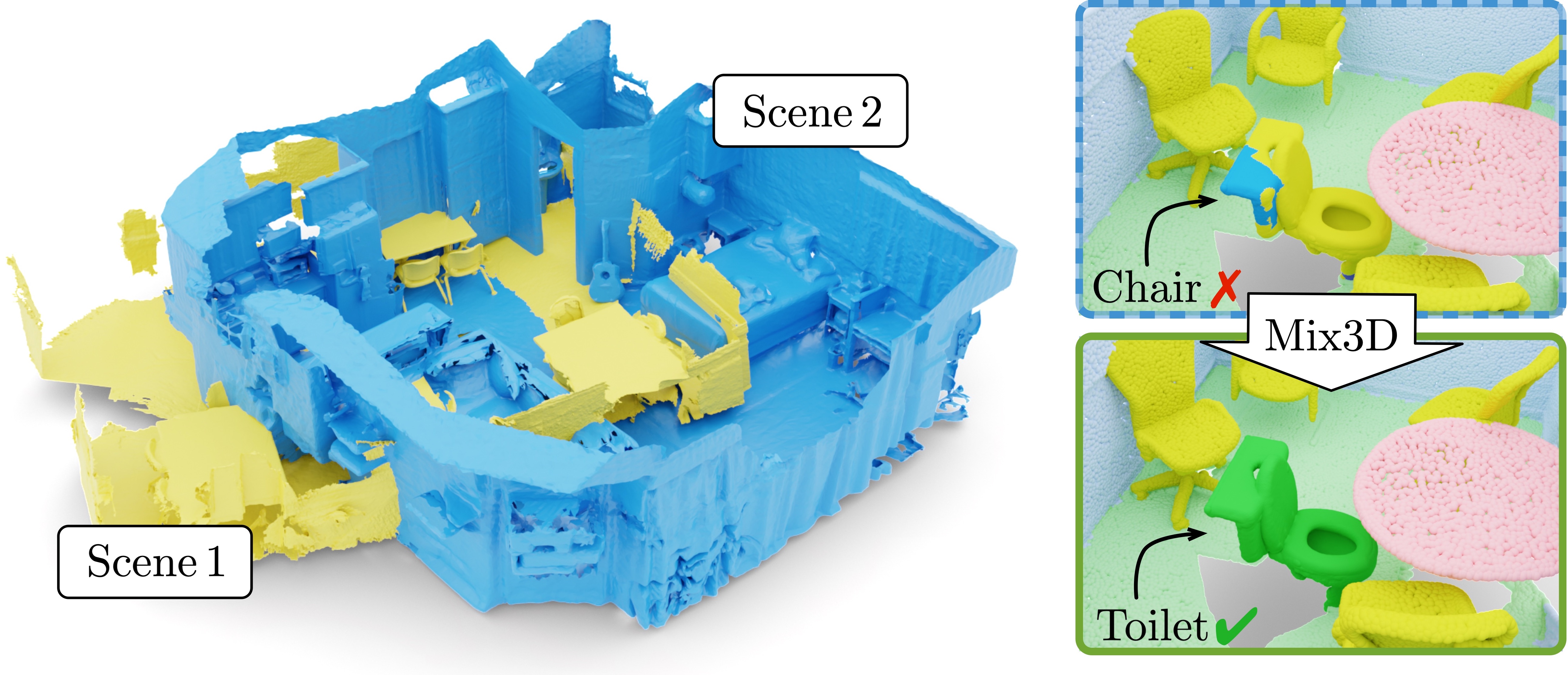

Mix3D is a data augmentation technique for 3D segmentation methods that improves generalization.
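The core idea of Mix3D is to train on two scenes combined into a single out-of-context sample. The sketch below illustrates that idea; it is not the repository's implementation, which lives in mix3d/datasets/utils.py. Several details are assumptions of this sketch: the helper name `mix_scenes`, the array layout, and centering both scenes at the origin. It also omits the per-scene augmentations that would normally be applied before mixing.

```python
import numpy as np


def mix_scenes(coords_a, labels_a, coords_b, labels_b):
    """Hypothetical helper: merge two scenes into one out-of-context sample.

    coords_*: (N, 3) float arrays of point coordinates
    labels_*: (N,) int arrays of per-point semantic labels
    """
    # Assumption of this sketch: center each scene at the origin so the
    # two scenes overlap in space regardless of their original placement.
    coords_a = coords_a - coords_a.mean(axis=0)
    coords_b = coords_b - coords_b.mean(axis=0)
    # The mixed sample is the union of both point sets and their labels.
    coords = np.concatenate([coords_a, coords_b], axis=0)
    labels = np.concatenate([labels_a, labels_b], axis=0)
    return coords, labels
```

The output is a plain concatenation. The mixed sample therefore contains every point of both inputs, and each point keeps its original label. The network sees objects outside their usual scene context while its supervision stays unchanged.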

[Project Webpage] [arXiv]

- 12. October 2021: Code released.

- 6. October 2021: Mix3D accepted for oral presentation at 3DV 2021. Paper on [arXiv].

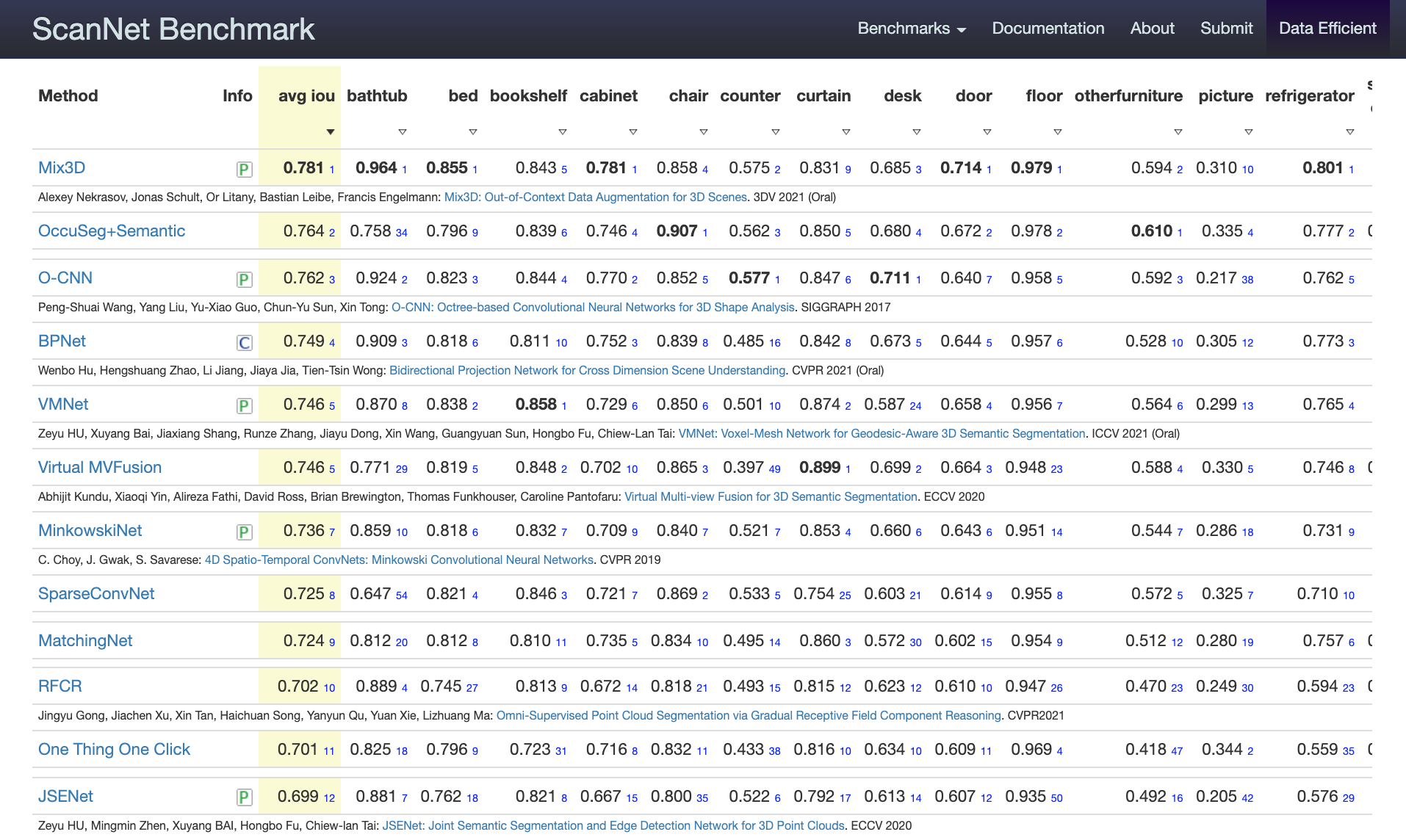

- 30. July 2021: Mix3D ranks 1st on the ScanNet semantic labeling benchmark.

This repository contains the code for the analysis experiments in Section 4.2 (Motivation and Analysis Experiments) of the paper.

For the ScanNet benchmark and Table 1 of the main paper, we use the original SpatioTemporalSegmentation-ScanNet code.

To add Mix3D to the original MinkowskiNet codebase, we provide the patch file SpatioTemporalSegmentation.patch.

With the patch file kpconv_tensorflow_mix3d.patch, you can add Mix3D to the official TensorFlow code release of KPConv on ScanNet and S3DIS.

Analogously, you can patch the official PyTorch reimplementation of KPConv with the patch file kpconv_pytorch_mix3d.patch.

See the supplementary material for more details.

```
├── mix3d
│ ├── __init__.py
│ ├── __main__.py <- the main file
│ ├── conf <- hydra configuration files
│ ├── datasets
│ │ ├── outdoor_semseg.py <- outdoor dataset
│ │ ├── preprocessing <- folder with preprocessing scripts
│ │ ├── semseg.py <- indoor dataset
│ │ └── utils.py <- code for mixing point clouds
│ ├── logger
│ ├── models <- MinkowskiNet models
│ ├── trainer
│ │ ├── __init__.py
│ │ └── trainer.py <- train loop
│ └── utils
├── data
│ ├── processed <- folder for preprocessed datasets
│ └── raw <- folder for raw datasets
├── scripts
│ ├── experiments
│ │ └── 1000_scene_merging.bash
│ ├── init.bash
│ ├── local_run.bash
│ ├── preprocess_matterport.bash
│ ├── preprocess_rio.bash
│ ├── preprocess_scannet.bash
│ └── preprocess_semantic_kitti.bash
├── docs
├── dvc.lock
├── dvc.yaml <- dvc file to reproduce the data
├── poetry.lock
├── pyproject.toml <- project dependencies
├── README.md
├── saved <- folder that stores models and logs
└── SpatioTemporalSegmentation-ScanNet.patch <- patch file for original repo
```

The main dependencies of the project are the following:

```
python: 3.7
cuda: 10.1
```

All other dependencies are managed with the Poetry dependency management tool and can be installed with the command:

```
poetry install
```

Check scripts/init.bash for more details.

After installing the dependencies, run the preprocessing scripts.

They convert the ScanNet, Matterport, RIO, and SemanticKITTI datasets into a single common format.

By default, the scripts expect to find the datasets in the data/raw/ folder.

Check scripts/preprocess_*.bash for more details.

```
dvc repro scannet # matterport, rio, semantic_kitti
```

This command runs the preprocessing for ScanNet and saves the result with the DVC data versioning system. To preprocess one of the other datasets, replace scannet with matterport, rio, or semantic_kitti.
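The training commands in this README use a voxel size of 5 cm. The sketch below shows roughly what that setting means: it quantizes point coordinates onto a 5 cm grid and keeps one point per occupied voxel. The helper name `voxelize` is hypothetical, and the sketch assumes coordinates in meters. The repository's own collation functions, such as voxelize_collate_merge, may handle quantization differently.

```python
import numpy as np


def voxelize(coords, labels, voxel_size=0.05):
    """Hypothetical helper: snap points to a voxel grid, one point per voxel.

    Assumes coords is an (N, 3) array in meters, so voxel_size=0.05 is 5 cm.
    Returns integer voxel indices and the labels of the retained points.
    """
    # Integer grid index of the voxel that contains each point.
    voxel_idx = np.floor(coords / voxel_size).astype(np.int64)
    # return_index gives the first point that falls into each unique voxel.
    _, keep = np.unique(voxel_idx, axis=0, return_index=True)
    # Restore the original point order among the retained points.
    keep = np.sort(keep)
    return voxel_idx[keep], labels[keep]
```

Larger voxels merge more points into each cell. That reduces memory use but discards fine geometric detail.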

Train MinkowskiNet on the ScanNet dataset without Mix3D with a voxel size of 5 cm:

```
poetry run train
```

Train MinkowskiNet on the ScanNet dataset with Mix3D with a voxel size of 5 cm:

```
poetry run train data/collation_functions=voxelize_collate_merge
```

```
@inproceedings{Nekrasov213DV,
  title = {{Mix3D: Out-of-Context Data Augmentation for 3D Scenes}},
  author = {Nekrasov, Alexey and Schult, Jonas and Litany, Or and Leibe, Bastian and Engelmann, Francis},
  booktitle = {{International Conference on 3D Vision (3DV)}},
  year = {2021}
}
```