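"In his youth, Li Taibai dreamed that flowers bloomed from the tip of the brush he wrote with; thereafter his talent flowed freely and his name was known throughout the land." (Wang Renyu, *Kaiyuan Tianbao Yishi*, "Dreaming of a Brush Tip in Bloom")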

Docs | Model | Dataset | Paper | Chinese Version

TextBox is developed based on Python and PyTorch for reproducing and developing text generation algorithms in a unified, comprehensive and efficient framework for research purposes. The library includes 16 text generation algorithms, covering two major tasks:

- Unconditional (input-free) Generation

- Sequence-to-Sequence (Seq2Seq) Generation, including Machine Translation and Summarization

We support 6 benchmark text generation datasets. Users can apply the library to process the original data, or simply download the datasets already processed by our team.

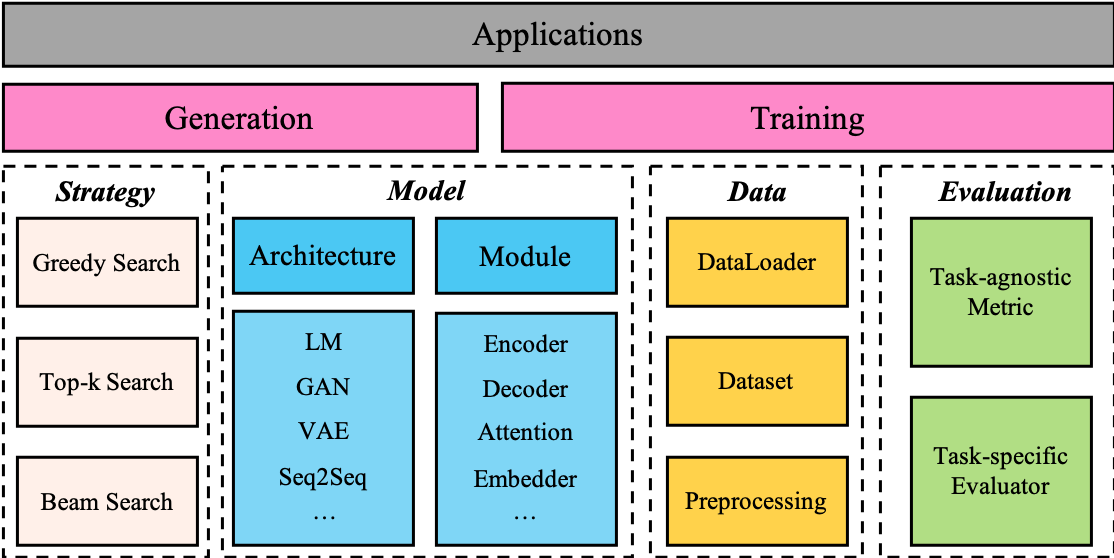

Figure: The Overall Architecture of TextBox

- Unified and modularized framework. TextBox is built upon PyTorch and designed to be highly modularized, by decoupling diverse models into a set of highly reusable modules.

- Comprehensive models, benchmark datasets and standardized evaluations. TextBox also contains a wide range of text generation models, covering the categories of VAE, GAN, RNN or Transformer based models, and pre-trained language models (PLM).

- Extensible and flexible framework. TextBox provides convenient interfaces to common functions and modules in text generation models, such as the RNN encoder-decoder, Transformer encoder-decoder and pre-trained language models.

- Easy and convenient to get started. TextBox provides flexible configuration files, which allow newcomers to run experiments without modifying source code, and allow researchers to conduct qualitative analysis by modifying a few configurations.

TextBox requires:

- Python >= 3.6.2

- torch >= 1.6.0. Please follow the official instructions to install the appropriate version according to your CUDA version and NVIDIA driver version.

- GCC >= 5.1.0

Install from pip:

```bash
pip install textbox
```

Install from source:

```bash
git clone https://github.com/RUCAIBox/TextBox.git && cd TextBox
pip install -e . --verbose
```

Start from source: with the source code, you can use the provided script for initial usage of our library:

```bash
python run_textbox.py --model=RNN --dataset=COCO --task_type=unconditional
```

This script will run the RNN model on the COCO dataset. Typically, this example takes a few minutes. The output log will look like example.log.

If you want to change parameters such as `rnn_type` or `max_vocab_size`, just add them as extra command-line parameters:

```bash
python run_textbox.py --model=RNN --dataset=COCO --task_type=unconditional \
                      --rnn_type=lstm --max_vocab_size=4000
```

You can also modify the YAML configuration files in the corresponding dataset and model properties folders and include them on the command line.

If you want to change the model, the dataset or the task type, just run the script with the corresponding command parameters modified:

```bash
python run_textbox.py --model=[model_name] --dataset=[dataset_name] --task_type=[task_name]
```

`model_name` is the model to be run, such as RNN or BART. The models we have implemented can be found in Model.

TextBox covers three major task types of text generation, namely unconditional, translation and summarization.

If you want to change the datasets, please refer to Dataset.
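When several runs are needed, the command line shown above can be assembled programmatically. The following is a minimal sketch: `build_command` and `to_shell` are hypothetical helpers written for this example, not part of TextBox, and they only format `--key=value` flags in the style used above.

```python
import shlex


def build_command(model, dataset, task_type, **overrides):
    """Return the argv list for one run_textbox.py call (hypothetical helper)."""
    argv = [
        "python", "run_textbox.py",
        "--model={}".format(model),
        "--dataset={}".format(dataset),
        "--task_type={}".format(task_type),
    ]
    # Extra parameters use the same --key=value form, e.g. --rnn_type=lstm.
    # Keys are sorted so the generated command is deterministic.
    for key in sorted(overrides):
        argv.append("--{}={}".format(key, overrides[key]))
    return argv


def to_shell(argv):
    """Quote each argument so the command can be pasted into a shell."""
    return " ".join(shlex.quote(arg) for arg in argv)


cmd = build_command("RNN", "COCO", "unconditional",
                    rnn_type="lstm", max_vocab_size=4000)
print(to_shell(cmd))
```

The returned list can be passed to `subprocess.run(cmd, check=True)` from the repository root, which avoids shell quoting issues altogether.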

If TextBox is installed from pip, you can create a new python file, download the dataset, and write and run the following code:

```python
from textbox.quick_start import run_textbox

run_textbox(config_dict={'model': 'RNN',
                         'dataset': 'COCO',
                         'data_path': './dataset',
                         'task_type': 'unconditional'})
```

This will train and test the RNN model on the COCO dataset.

If you want to run different models, parameters or datasets, the operations are the same as in Start from source.

TextBox supports applying some pretrained language models (PLMs) to text generation. Taking GPT-2 as an example, we show how to fine-tune a PLM.

- Download the GPT-2 model provided by Hugging Face (https://huggingface.co/gpt2/tree/main), including `config.json`, `merges.txt`, `pytorch_model.bin`, `tokenizer.json` and `vocab.json`. Then put them in a folder at the same level as `textbox`, such as `pretrained_model/gpt2`.

- After downloading, you just need to run the command:

```bash
python run_textbox.py --model=GPT2 --dataset=COCO --task_type=unconditional \
                      --pretrained_model_path=pretrained_model/gpt2
```

The architecture figure above presents the overall design of our library. The running procedure relies on an experimental configuration obtained from files, the command line or parameter dictionaries. The dataset and model are prepared and initialized according to the configured settings, and the execution module is responsible for training and evaluating models. Details of the interfaces can be found in our documentation.
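To illustrate how settings from files, parameter dictionaries and the command line could be combined into one configuration, the sketch below merges the three sources. The precedence order (files first, then the parameter dictionary, then the command line) and the names `parse_cli` and `merge_config` are assumptions made for this example; they are not a description of how TextBox actually resolves its configuration.

```python
def parse_cli(args):
    """Turn ['--key=value', ...] into a dict of strings (illustrative only)."""
    parsed = {}
    for arg in args:
        if arg.startswith("--") and "=" in arg:
            key, value = arg[2:].split("=", 1)
            parsed[key] = value
    return parsed


def merge_config(file_settings, config_dict=None, cli_args=None):
    """Merge the three sources; later sources override earlier ones.

    The override order is an assumption chosen for this sketch.
    """
    merged = dict(file_settings)
    merged.update(config_dict or {})
    merged.update(parse_cli(cli_args or []))
    return merged


# Placeholder values standing in for settings read from YAML files.
file_settings = {"model": "RNN", "dataset": "COCO", "rnn_type": "gru"}
config = merge_config(
    file_settings,
    config_dict={"task_type": "unconditional"},
    cli_args=["--rnn_type=lstm"],
)
print(config)
```

Keeping the merge in one function makes the override order explicit and easy to change if the real precedence differs.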

We implement 16 text generation models covering unconditional generation and sequence-to-sequence generation. We include the basic RNN language model for unconditional generation, and the remaining 15 models in the following table:

| Category | Task Type | Model | Reference |
|---|---|---|---|
| VAE | Unconditional | LSTMVAE | (Bowman et al., 2016) |
| | | CNNVAE | (Yang et al., 2017) |
| | | HybridVAE | (Semeniuta et al., 2017) |
| GAN | | SeqGAN | (Yu et al., 2017) |
| | | TextGAN | (Zhang et al., 2017) |
| | | RankGAN | (Lin et al., 2017) |
| | | MaliGAN | (Che et al., 2017) |
| | | LeakGAN | (Guo et al., 2018) |
| | | MaskGAN | (Fedus et al., 2018) |
| Seq2Seq | Translation, Summarization | RNN | (Sutskever et al., 2014) |
| | | Transformer | (Vaswani et al., 2017b) |
| | | GPT-2 | (Radford et al.) |
| | | XLNet | (Yang et al., 2019) |
| | | BERT2BERT | (Rothe et al., 2020) |
| | | BART | (Lewis et al., 2020) |

We have also collected 6 datasets that are commonly used for the above three tasks. They can be downloaded from Google Drive and Baidu Wangpan (Password: lwy6), and include both raw and processed data.

We list the 6 datasets in the following table:

| Task | Dataset |
|---|---|
| Unconditional | Image COCO Caption |
| | EMNLP2017 WMT News |
| | IMDB Movie Review |
| Translation | IWSLT2014 German-English |
| | WMT2014 English-German |
| Summarization | GigaWord |

The downloaded dataset should be placed in the dataset folder, as in our main branch.

We also support running our models on your own dataset. Just follow these three steps:

- Create a new folder under the `dataset` folder to hold your own corpus file, which contains one sequence per line, e.g. `dataset/YOUR_DATASET`;

- Write a YAML configuration file with the same name to set the hyper-parameters of your dataset, e.g. `textbox/properties/dataset/YOUR_DATASET.yaml`.

  If your dataset is already split, set `split_strategy: "load_split"` in the YAML, as in the COCO YAML or IWSLT14_DE_EN YAML.

  If you want to split the dataset by ratio automatically, set `split_strategy: "by_ratio"` and your desired `split_ratio` in the YAML, as in the IMDB YAML.

- For unconditional generation, name the corpus file `corpus_large.txt` if you set `"by_ratio"`, or name the corpus files `train.txt, valid.txt, dev.txt` if you set `"load_split"`.

  For sequence-to-sequence generation, only loading pre-split data is supported. Name the corpus files `train.[xx/yy], valid.[xx/yy], dev.[xx/yy]`, where `xx` and `yy` are the suffixes of the source and target files, which should be consistent with `source_suffix` and `target_suffix` in the YAML.
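For a corpus that exists as a single file, the sketch below shows one way to pre-split it into the three unconditional-generation files named above, so that it can be loaded with `"load_split"`. The 80/10/10 ratio, the fixed seed and the helper names `split_corpus` and `write_splits` are assumptions for illustration; the library's own `"by_ratio"` splitting may behave differently.

```python
import os
import random


def split_corpus(lines, ratios=(0.8, 0.1, 0.1), seed=0):
    """Shuffle the sequences and cut them into three parts by ratio."""
    assert abs(sum(ratios) - 1.0) < 1e-9, "ratios must sum to 1"
    shuffled = list(lines)
    random.Random(seed).shuffle(shuffled)
    n_train = int(len(shuffled) * ratios[0])
    n_valid = int(len(shuffled) * ratios[1])
    # Whatever remains after the first two parts goes to the last split.
    return (shuffled[:n_train],
            shuffled[n_train:n_train + n_valid],
            shuffled[n_train + n_valid:])


def write_splits(folder, splits, names=("train.txt", "valid.txt", "dev.txt")):
    """Write one sequence per line, using the file names listed above."""
    os.makedirs(folder, exist_ok=True)
    for name, part in zip(names, splits):
        with open(os.path.join(folder, name), "w", encoding="utf-8") as f:
            f.write("\n".join(part) + "\n")


corpus = ["sentence number {}".format(i) for i in range(10)]
train, valid, dev = split_corpus(corpus)
print(len(train), len(valid), len(dev))  # 8 1 1
```

Calling `write_splits("dataset/YOUR_DATASET", (train, valid, dev))` would then place the files in the folder created in the first step.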

We have implemented various text generation models, and compared their performance on unconditional and conditional text generation tasks. We also show a few generated examples, and more examples can be found in generated_examples.

The following results were obtained from our TextBox in preliminary experiments. However, these algorithms were implemented and tuned based on our understanding and experiences, which may not achieve their optimal performance. If you could yield a better result for some specific algorithm, please kindly let us know. We will update this table after the results are verified.

Negative Log-Likelihood (NLL), BLEU and Self-BLEU (SBLEU) on the Image COCO Caption test dataset:

| Model | NLL | BLEU-2 | BLEU-3 | BLEU-4 | BLEU-5 | SBLEU-2 | SBLEU-3 | SBLEU-4 | SBLEU-5 |

|---|---|---|---|---|---|---|---|---|---|

| RNNVAE | 33.02 | 80.46 | 51.5 | 25.89 | 11.55 | 89.18 | 61.58 | 32.69 | 14.03 |

| CNNVAE | 36.61 | 0.63 | 0.27 | 0.28 | 0.29 | 3.10 | 0.28 | 0.29 | 0.30 |

| HybridVAE | 56.44 | 31.96 | 3.75 | 1.61 | 1.76 | 77.79 | 26.77 | 5.71 | 2.49 |

| SeqGAN | 30.56 | 80.15 | 49.88 | 24.95 | 11.10 | 84.45 | 54.26 | 27.42 | 11.87 |

| TextGAN | 32.46 | 77.47 | 45.74 | 21.57 | 9.18 | 82.93 | 51.34 | 24.41 | 10.01 |

| RankGAN | 31.07 | 77.36 | 45.05 | 21.46 | 9.41 | 83.13 | 50.62 | 23.79 | 10.08 |

| MaliGAN | 31.50 | 80.08 | 49.52 | 24.03 | 10.36 | 84.85 | 55.32 | 28.28 | 12.09 |

| LeakGAN | 25.11 | 93.49 | 82.03 | 62.59 | 42.06 | 89.73 | 64.57 | 35.60 | 14.98 |

| MaskGAN | 95.93 | 58.07 | 21.22 | 5.07 | 1.88 | 76.10 | 43.41 | 20.06 | 9.37 |

| GPT-2 | 26.82 | 75.51 | 58.87 | 38.22 | 21.66 | 92.78 | 75.47 | 51.74 | 32.39 |
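For readers unfamiliar with Self-BLEU: it scores each generated sentence with BLEU against all the other generated sentences, so a higher value indicates less diverse output. The sketch below is a plain-Python, unsmoothed, sentence-level version written for illustration; it is not the evaluator used to produce the table, and its values lie in [0, 1], whereas the table appears to use a 0 to 100 scale.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Count the n-grams of a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def bleu_n(hypothesis, references, n):
    """Sentence-level BLEU with uniform weights over 1..n-grams, no smoothing."""
    log_precisions = []
    for order in range(1, n + 1):
        hyp_counts = ngrams(hypothesis, order)
        if not hyp_counts:
            return 0.0
        # Clip each n-gram count by its maximum count in any single reference.
        max_ref = Counter()
        for ref in references:
            for gram, count in ngrams(ref, order).items():
                max_ref[gram] = max(max_ref[gram], count)
        clipped = sum(min(c, max_ref[g]) for g, c in hyp_counts.items())
        if clipped == 0:
            return 0.0
        log_precisions.append(math.log(clipped / sum(hyp_counts.values())))
    # Brevity penalty against the reference closest in length.
    closest = min((abs(len(r) - len(hypothesis)), len(r)) for r in references)[1]
    bp = 1.0 if len(hypothesis) >= closest else math.exp(1 - closest / len(hypothesis))
    return bp * math.exp(sum(log_precisions) / n)


def self_bleu(generated, n):
    """Average BLEU-n of each sentence against all other generated sentences."""
    scores = [bleu_n(sent, generated[:i] + generated[i + 1:], n)
              for i, sent in enumerate(generated)]
    return sum(scores) / len(scores)


samples = [
    "a man rides a horse".split(),
    "a man rides a bike".split(),
    "two dogs play in snow".split(),
]
print(round(self_bleu(samples, 2), 4))
```

A set of identical sentences yields a Self-BLEU of 1.0 under this definition, which is the degenerate case the metric is meant to expose.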

Part of generated examples:

| Model | Examples |

|---|---|

| RNNVAE | people playing polo to eat in the woods . |

| LeakGAN | a man is standing near a horse on a lush green grassy field . |

| GPT-2 | cit a large zebra lays down on the ground. |

NLL, BLEU and SBLEU on the EMNLP2017 WMT News test dataset:

| Model | NLL | BLEU-2 | BLEU-3 | BLEU-4 | BLEU-5 | SBLEU-2 | SBLEU-3 | SBLEU-4 | SBLEU-5 |

|---|---|---|---|---|---|---|---|---|---|

| RNNVAE | 142.23 | 58.81 | 19.70 | 5.57 | 2.01 | 72.79 | 27.04 | 7.85 | 2.73 |

| CNNVAE | 164.79 | 0.82 | 0.17 | 0.18 | 0.18 | 2.78 | 0.19 | 0.19 | 0.20 |

| HybridVAE | 177.75 | 29.58 | 1.62 | 0.47 | 0.49 | 59.85 | 10.3 | 1.43 | 1.10 |

| SeqGAN | 142.22 | 63.90 | 20.89 | 5.64 | 1.81 | 70.97 | 25.56 | 7.05 | 2.18 |

| TextGAN | 140.90 | 60.37 | 18.86 | 4.82 | 1.52 | 68.32 | 23.24 | 6.10 | 1.84 |

| RankGAN | 142.27 | 61.28 | 19.81 | 5.58 | 1.82 | 67.71 | 23.15 | 6.63 | 2.09 |

| MaliGAN | 149.93 | 45.00 | 12.69 | 3.16 | 1.17 | 65.10 | 20.55 | 5.41 | 1.91 |

| LeakGAN | 162.70 | 76.61 | 39.14 | 15.84 | 6.08 | 85.04 | 54.70 | 29.35 | 14.63 |

| MaskGAN | 303.00 | 63.08 | 21.14 | 5.40 | 1.80 | 83.92 | 47.79 | 19.96 | 7.51 |

| GPT-2 | 88.01 | 55.88 | 21.65 | 5.34 | 1.40 | 75.67 | 36.71 | 12.67 | 3.88 |

Part of generated examples:

| Model | Examples |

|---|---|

| RNNVAE | lewis holds us in total because they have had a fighting opportunity to hold any bodies when companies on his assault . |

| LeakGAN | we ' re a frustration of area , then we do coming out and play stuff so that we can be able to be ready to find a team in a game , but I know how we ' re going to say it was a problem . |

| GPT-2 | russ i'm trying to build a house that my kids can live in, too, and it's going to be a beautiful house. |

NLL, BLEU and SBLEU on the IMDB Movie Review test dataset:

| Model | NLL | BLEU-2 | BLEU-3 | BLEU-4 | BLEU-5 | SBLEU-2 | SBLEU-3 | SBLEU-4 | SBLEU-5 |

|---|---|---|---|---|---|---|---|---|---|

| RNNVAE | 445.55 | 29.14 | 13.73 | 4.81 | 1.85 | 38.77 | 14.39 | 6.61 | 5.16 |

| CNNVAE | 552.09 | 1.88 | 0.11 | 0.11 | 0.11 | 3.08 | 0.13 | 0.13 | 0.13 |

| HybridVAE | 318.46 | 38.65 | 2.53 | 0.34 | 0.31 | 70.05 | 17.27 | 1.57 | 0.59 |

| SeqGAN | 547.09 | 66.33 | 26.89 | 6.80 | 1.79 | 72.48 | 35.48 | 11.60 | 3.31 |

| TextGAN | 488.37 | 63.95 | 25.82 | 6.81 | 1.51 | 72.11 | 30.56 | 8.20 | 1.96 |

| RankGAN | 518.10 | 58.08 | 23.71 | 6.84 | 1.67 | 69.93 | 31.68 | 11.12 | 3.78 |

| MaliGAN | 552.45 | 44.50 | 15.01 | 3.69 | 1.23 | 57.25 | 22.04 | 7.36 | 3.26 |

| LeakGAN | 499.57 | 78.93 | 58.96 | 32.58 | 12.65 | 92.91 | 79.21 | 60.10 | 39.79 |

| MaskGAN | 509.58 | 56.61 | 21.41 | 4.49 | 0.86 | 92.09 | 77.88 | 59.62 | 42.36 |

| GPT-2 | 348.67 | 72.52 | 41.75 | 15.40 | 4.22 | 86.21 | 58.26 | 30.03 | 12.56 |

Part of generated examples (with max_length 100):

| Model | Examples |

|---|---|

| RNNVAE | best brilliant known plot , sound movie , although unfortunately but it also like . the almost five minutes i will have done its bad numbers . so not yet i found the difference from with |

| LeakGAN | i saw this film shortly when I storms of a few concentration one before it all time . It doesn t understand the fact that it is a very good example of a modern day , in the <|unk|> , I saw it . It is so bad it s a <|unk|> . the cast , the stars , who are given a little |

| GPT-2 | be a very bad, low budget horror flick that is not worth watching and, in my humble opinion, not worth watching any time. the acting is atrocious, there are scenes that you could laugh at and the story, if you can call it that, was completely lacking in logic and |

BLEU metric on the IWSLT2014 German-English test dataset with three decoding strategies: top-k sampling, greedy search and beam search (with beam_size 5):

| Model | Metric | Top-k sampling | Greedy search | Beam search |
|---|---|---|---|---|
| RNN with Attention | BLEU-2 | 26.68 | 33.74 | 35.68 |
| | BLEU-3 | 16.95 | 23.03 | 24.94 |
| | BLEU-4 | 10.85 | 15.79 | 17.42 |
| | BLEU | 19.66 | 26.23 | 28.23 |
| Transformer | BLEU-2 | 30.96 | 35.48 | 36.88 |
| | BLEU-3 | 20.83 | 24.76 | 26.10 |
| | BLEU-4 | 14.16 | 17.41 | 18.54 |
| | BLEU | 23.91 | 28.10 | 29.49 |
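The three decoding strategies compared above differ in how the next token is chosen at each step. The sketch below contrasts them on a toy next-token table; the `MODEL` probabilities are made up, and the functions are illustrative stand-ins rather than TextBox's decoders.

```python
import math
import random

# Toy next-token model: previous token -> distribution (made-up numbers).
MODEL = {
    "<s>": {"a": 0.6, "the": 0.4},
    "a": {"cat": 0.5, "dog": 0.3, "</s>": 0.2},
    "the": {"cat": 0.1, "dog": 0.9},
    "cat": {"</s>": 1.0},
    "dog": {"</s>": 1.0},
}


def greedy_search(max_len=5):
    """Always take the single most probable next token."""
    seq = ["<s>"]
    while seq[-1] != "</s>" and len(seq) <= max_len:
        dist = MODEL[seq[-1]]
        seq.append(max(dist, key=dist.get))
    return seq


def top_k_sampling(k=2, max_len=5, seed=0):
    """Sample the next token from the k most probable candidates."""
    rng = random.Random(seed)
    seq = ["<s>"]
    while seq[-1] != "</s>" and len(seq) <= max_len:
        top = sorted(MODEL[seq[-1]].items(), key=lambda kv: -kv[1])[:k]
        tokens, probs = zip(*top)
        # random.choices renormalizes the weights of the kept candidates.
        seq.append(rng.choices(tokens, weights=probs)[0])
    return seq


def beam_search(beam_size=2, max_len=5):
    """Keep the beam_size best partial sequences by total log-probability."""
    beams = [(0.0, ["<s>"])]
    for _ in range(max_len):
        candidates = []
        for score, seq in beams:
            if seq[-1] == "</s>":
                candidates.append((score, seq))  # finished; carry over
                continue
            for token, p in MODEL[seq[-1]].items():
                candidates.append((score + math.log(p), seq + [token]))
        beams = sorted(candidates, key=lambda c: -c[0])[:beam_size]
    return beams[0][1]


print(greedy_search())  # ['<s>', 'a', 'cat', '</s>']
print(beam_search())    # ['<s>', 'the', 'dog', '</s>']
```

In this toy example greedy search commits to "a" (probability 0.6) and ends with a sequence of probability 0.30, while beam search keeps "the" alive and finds a sequence of probability 0.36.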

Part of generated examples:

| Source (German) | wissen sie , eines der großen < unk > beim reisen und eine der freuden bei der < unk > forschung ist , gemeinsam mit den menschen zu leben , die sich noch an die alten tage erinnern können . die ihre vergangenheit noch immer im wind spüren , sie auf vom regen < unk > steinen berühren , sie in den bitteren blättern der pflanzen schmecken . |
|---|---|
| Gold Target (English) | you know , one of the intense pleasures of travel and one of the delights of < unk > research is the opportunity to live amongst those who have not forgotten the old ways , who still feel their past in the wind , touch it in stones < unk > by rain , taste it in the bitter leaves of plants . |
| RNN with Attention | you know , one of the great < unk > trips is a travel and one of the friends in the world & apos ; s investigation is located on the old days that you can remember the past day , you & apos ; re < unk > to the rain in the < unk > chamber of plants . |
| Transformer | you know , one of the great < unk > about travel , and one of the pleasure in the < unk > research is to live with people who remember the old days , and they still remember the wind in the wind , but they & apos ; re touching the < unk > . |

ROUGE metric on the GigaWord test dataset using beam search (with beam_size 5):

| Model | ROUGE-1 | ROUGE-2 | ROUGE-L | ROUGE-W |

|---|---|---|---|---|

| RNN with Attention | 36.32 | 17.63 | 38.36 | 25.08 |

| Transformer | 36.21 | 17.64 | 38.10 | 24.89 |

Part of generated examples:

| Article | japan 's nec corp. and computer corp. of the united states said wednesday they had agreed to join forces in supercomputer sales . |
|---|---|
| Gold Summary | nec in computer sales tie-up |
| RNN with Attention | nec computer corp . |
| Transformer | nec computer to join forces in chip sales |
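To make the ROUGE columns more concrete, the sketch below computes recall-only ROUGE-1, ROUGE-2 and an LCS-based ROUGE-L recall for the Transformer summary above against the gold summary. It is a simplified illustration with whitespace tokenization; the reported scores may come from a different ROUGE variant (for example, F-measures from a standard toolkit), so the numbers are not comparable to the table.

```python
from collections import Counter


def ngram_counts(tokens, n):
    """Count the n-grams of a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def rouge_n_recall(candidate, reference, n):
    """Fraction of reference n-grams that also appear in the candidate."""
    ref = ngram_counts(reference, n)
    cand = ngram_counts(candidate, n)
    overlap = sum(min(count, cand[gram]) for gram, count in ref.items())
    return overlap / sum(ref.values()) if ref else 0.0


def lcs_length(a, b):
    """Length of the longest common subsequence, by dynamic programming."""
    table = [[0] * (len(b) + 1) for _ in range(len(a) + 1)]
    for i, x in enumerate(a, 1):
        for j, y in enumerate(b, 1):
            if x == y:
                table[i][j] = table[i - 1][j - 1] + 1
            else:
                table[i][j] = max(table[i - 1][j], table[i][j - 1])
    return table[-1][-1]


gold = "nec in computer sales tie-up".split()
transformer = "nec computer to join forces in chip sales".split()

print(rouge_n_recall(transformer, gold, 1))       # 0.8
print(rouge_n_recall(transformer, gold, 2))       # 0.0
print(lcs_length(transformer, gold) / len(gold))  # 0.6
```

Four of the five gold unigrams are recovered, but no gold bigram is, which shows why ROUGE-2 is usually much lower than ROUGE-1.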

| Releases | Date | Features |

|---|---|---|

| v0.1.5 | 01/11/2021 | Basic TextBox |

Please let us know if you encounter a bug or have any suggestions by filing an issue.

We welcome all contributions from bug fixes to new features and extensions.

We expect all contributions to be discussed in the issue tracker and to go through PRs.

If you find TextBox useful for your research or development, please cite the following paper:

```bibtex
@article{recbole,
    title={TextBox: A Unified, Modularized, and Extensible Framework for Text Generation},
    author={Junyi Li and Tianyi Tang and Gaole He and Jinhao Jiang and Xiaoxuan Hu and Puzhao Xie and Wayne Xin Zhao and Ji-Rong Wen},
    year={2021},
    journal={arXiv preprint arXiv:2101.02046}
}
```

TextBox is developed and maintained by AI Box.

TextBox uses the MIT License.