We plan to create a very interesting demo by combining Grounding DINO and Segment Anything! Right now, this is just a simple small project. We will continue to improve it and create more interesting demos.

Why this project?

- Segment Anything is a strong segmentation model. But it needs prompts (like boxes/points) to generate masks.

- Grounding DINO is a strong zero-shot detector capable of generating high-quality boxes and labels from free-form text.

- Combining the two models makes it possible to detect and segment everything with text inputs!

- The combination of BLIP + GroundingDINO + SAM for automatic labeling!

- The combination of GroundingDINO + SAM + Stable-diffusion for data-factory, generating new data!

Grounded-SAM + Stable-Diffusion Inpainting: Data-Factory, Generating New Data!

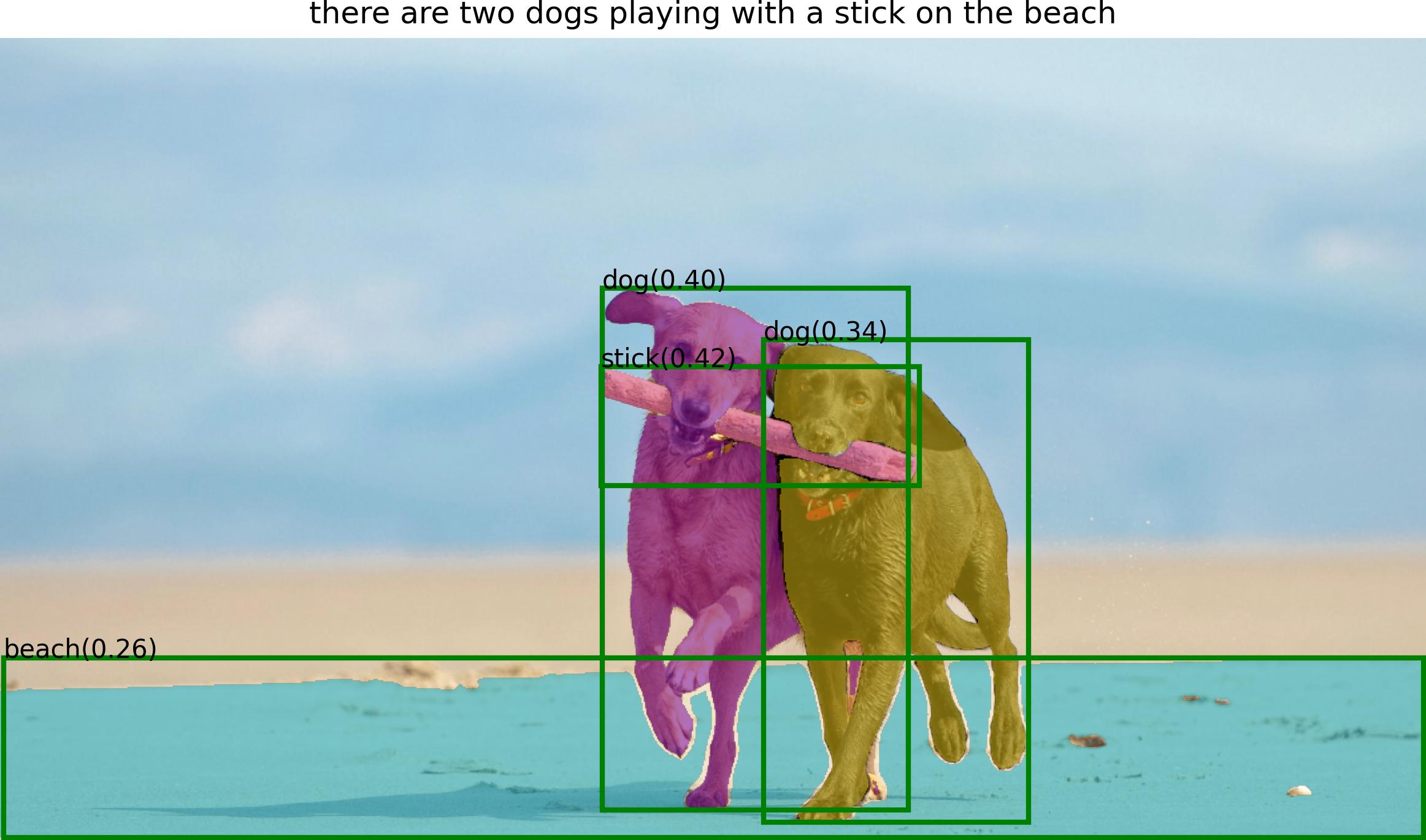

BLIP + Grounded-SAM: Automatic Label System!

Use BLIP to generate a caption, extract tags from it, and use Grounded-SAM to generate the boxes and masks. Here's the demo output:

Imagine Space

Some possible avenues for future work ...

- Automatic image generation to construct new datasets.

- Stronger foundation models with segmentation pre-training.

- Collaboration with (Chat-)GPT.

- A whole pipeline to automatically label images (with boxes and masks) and generate new images.

- 🆕 We released the interactive fashion-edit playground here. Run it in the notebook and just click to annotate points for further segmentation. Enjoy it!

- 🆕 Check out our related human-face-edit branch here. We'll keep updating this branch with more interesting features. Here are some examples:

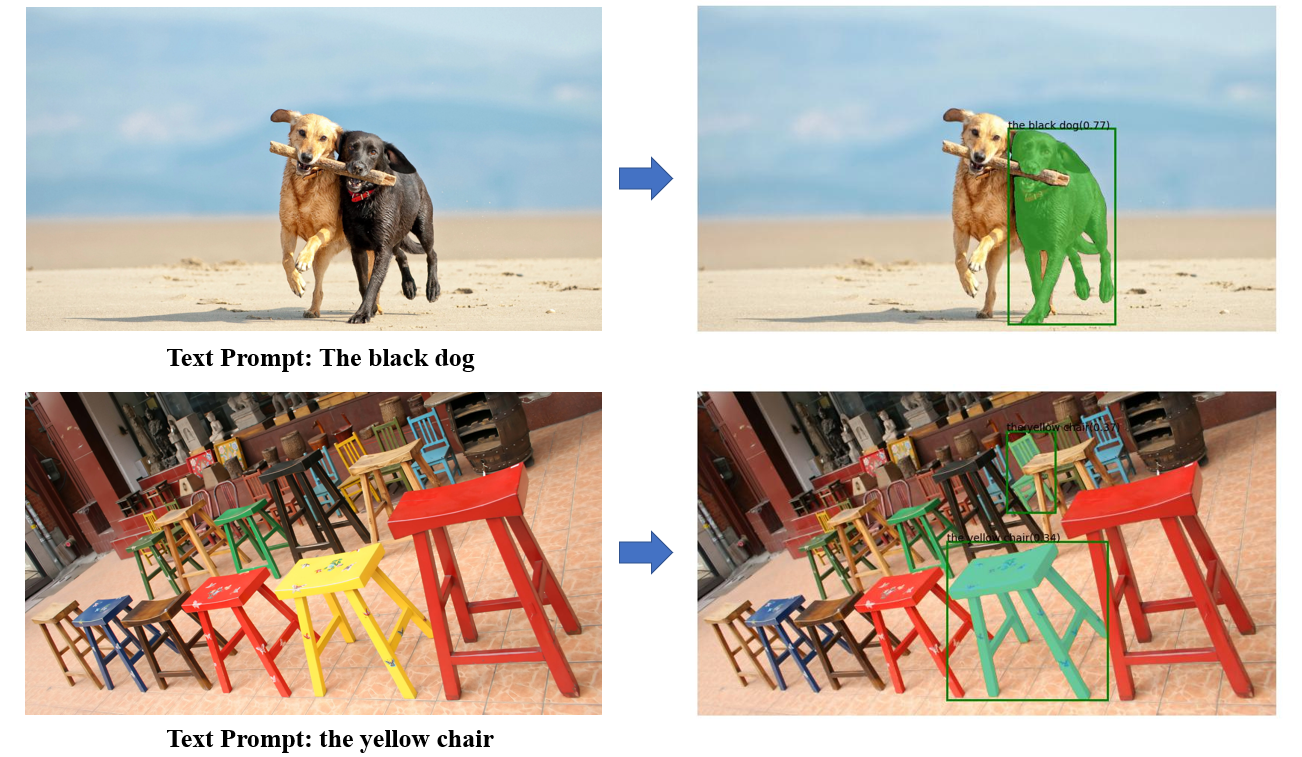

- GroundingDINO Demo

- GroundingDINO + Segment-Anything Demo

- GroundingDINO + Segment-Anything + Stable-Diffusion Demo

- BLIP + GroundingDINO + Segment-Anything + Stable-Diffusion Demo

- Hugging Face Demo

- Colab demo

See our notebook file as an example.

The code requires python>=3.8, as well as pytorch>=1.7 and torchvision>=0.8. Please follow the instructions here to install both PyTorch and TorchVision dependencies. Installing both PyTorch and TorchVision with CUDA support is strongly recommended.
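Before installing the remaining packages, the version requirements above can be checked from Python. The snippet below is only a sketch: `parse_version`, `meets_minimum`, and `check_environment` are illustrative names, not part of this repository, and the parsing is deliberately naive (it compares the leading numeric components and ignores suffixes such as `+cu117`).

```python
import re
import sys

# Minimum versions taken from the requirements stated above.
MINIMUMS = {"torch": "1.7", "torchvision": "0.8"}


def parse_version(version):
    """Return the leading numeric components, e.g. "1.13.1+cu117" -> (1, 13, 1)."""
    match = re.match(r"\d+(?:\.\d+)*", version)
    if match is None:
        raise ValueError(f"cannot parse version: {version!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def meets_minimum(installed, minimum):
    """True if `installed` is at least `minimum` (numeric components only)."""
    a, b = parse_version(installed), parse_version(minimum)
    width = max(len(a), len(b))
    # Pad with zeros so that "0.8" and "0.8.0" compare as equal.
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a >= b


def check_environment():
    """Return a list of human-readable problems; an empty list means all good."""
    if sys.version_info < (3, 8):
        return ["Python >= 3.8 is required"]
    # importlib.metadata is in the standard library from Python 3.8 onwards.
    from importlib import metadata

    problems = []
    for dist, minimum in MINIMUMS.items():
        try:
            installed = metadata.version(dist)
        except metadata.PackageNotFoundError:
            problems.append(f"{dist} is not installed")
            continue
        if not meets_minimum(installed, minimum):
            problems.append(f"{dist} {installed} is older than {minimum}")
    return problems


if __name__ == "__main__":
    for problem in check_environment():
        print(problem)
```

Running the file prints one line per unmet requirement and nothing when the environment looks fine. It does not check for CUDA support.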

Install Segment Anything:

python -m pip install -e segment_anything

Install GroundingDINO:

python -m pip install -e GroundingDINO

Install diffusers:

pip install --upgrade diffusers[torch]

The following optional dependencies are necessary for mask post-processing, saving masks in COCO format, the example notebooks, and exporting the model in ONNX format. jupyter is also required to run the example notebooks.

pip install opencv-python pycocotools matplotlib onnxruntime onnx ipykernel

More details can be found in install segment anything and install GroundingDINO
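To see which of the optional packages are still missing, a small check along these lines may help. It is a sketch, not part of the repository: `missing_packages` is a hypothetical helper, and the mapping simply pairs each pip package from the command above with the module name it is usually imported under (for example, `opencv-python` is imported as `cv2`).

```python
from importlib.util import find_spec

# pip package name -> top-level module name used in `import ...`
OPTIONAL_MODULES = {
    "opencv-python": "cv2",
    "pycocotools": "pycocotools",
    "matplotlib": "matplotlib",
    "onnxruntime": "onnxruntime",
    "onnx": "onnx",
    "ipykernel": "ipykernel",
}


def missing_packages(modules=OPTIONAL_MODULES):
    """Return the pip names of packages whose module cannot be located."""
    return [pkg for pkg, module in modules.items() if find_spec(module) is None]


if __name__ == "__main__":
    missing = missing_packages()
    if missing:
        print("pip install " + " ".join(missing))
```

`find_spec` only locates the module without importing it, so the check is fast and has no side effects.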

- Download the checkpoint for groundingdino:

cd Grounded-Segment-Anything

wget https://github.com/IDEA-Research/GroundingDINO/releases/download/v0.1.0-alpha/groundingdino_swint_ogc.pth- Run demo

export CUDA_VISIBLE_DEVICES=0

python grounding_dino_demo.py \

--config GroundingDINO/groundingdino/config/GroundingDINO_SwinT_OGC.py \

--grounded_checkpoint groundingdino_swint_ogc.pth \

--input_image assets/demo1.jpg \

--output_dir "outputs" \

--box_threshold 0.3 \

--text_threshold 0.25 \

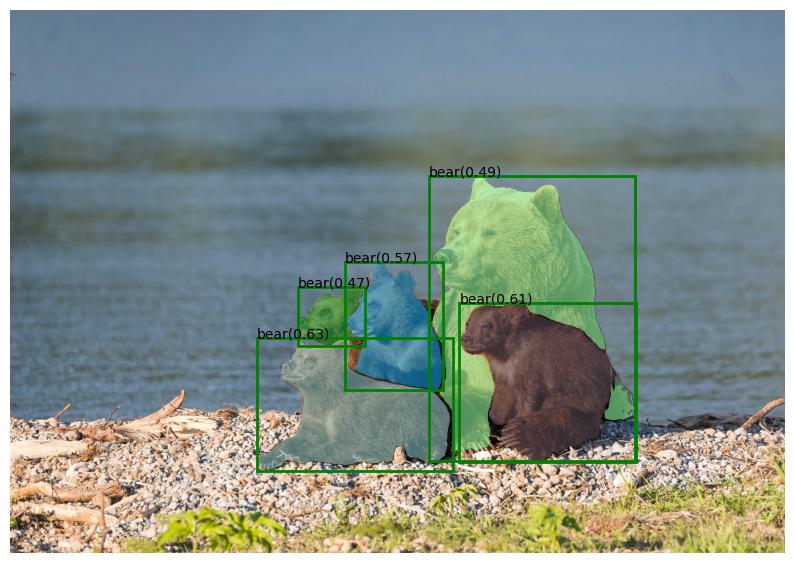

--text_prompt "bear" \

--device "cuda"- The model prediction visualization will be saved in

output_diras follow:
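The `--box_threshold` and `--text_threshold` flags are easier to tune with a mental model of what they filter. The sketch below illustrates one common reading, stated here as an assumption rather than as the script's exact code: a candidate box is kept when its best per-token score exceeds `box_threshold`, and its label is built from the prompt tokens whose score exceeds `text_threshold`. The function name and the toy scores are invented for illustration; the real model works on tensors and tokenizer output.

```python
def filter_detections(token_scores, tokens, box_threshold=0.3, text_threshold=0.25):
    """Keep confident boxes and derive a label for each one.

    token_scores: one list of per-token scores per candidate box.
    tokens: the prompt tokens, in the same order as the scores.
    Returns a list of (box_index, label, confidence) tuples.
    """
    kept = []
    for index, scores in enumerate(token_scores):
        confidence = max(scores)
        if confidence <= box_threshold:
            continue  # no token matches this box strongly enough
        label = " ".join(
            token for token, score in zip(tokens, scores) if score > text_threshold
        )
        kept.append((index, label, confidence))
    return kept


if __name__ == "__main__":
    tokens = ["brown", "bear"]
    token_scores = [
        [0.10, 0.80],  # clearly a "bear"
        [0.05, 0.20],  # too weak, dropped by box_threshold
        [0.40, 0.55],  # both tokens pass text_threshold
    ]
    for detection in filter_detections(token_scores, tokens):
        print(detection)
```

Under this reading, raising `box_threshold` yields fewer boxes, while raising `text_threshold` yields shorter labels.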

- Download the checkpoint for segment-anything and grounding-dino:

cd Grounded-Segment-Anything

wget https://dl.fbaipublicfiles.com/segment_anything/sam_vit_h_4b8939.pth

wget https://github.com/IDEA-Research/GroundingDINO/releases/download/v0.1.0-alpha/groundingdino_swint_ogc.pth

- Run Demo

export CUDA_VISIBLE_DEVICES=0

python grounded_sam_demo.py \
  --config GroundingDINO/groundingdino/config/GroundingDINO_SwinT_OGC.py \
  --grounded_checkpoint groundingdino_swint_ogc.pth \
  --sam_checkpoint sam_vit_h_4b8939.pth \
  --input_image assets/demo1.jpg \
  --output_dir "outputs" \
  --box_threshold 0.3 \
  --text_threshold 0.25 \
  --text_prompt "bear" \
  --device "cuda"

- The model prediction visualization will be saved in output_dir.
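In this demo the detector's boxes are used as prompts for Segment Anything, so the two models must agree on a box format. Assuming the detector reports boxes as normalized (center x, center y, width, height) and the segmenter expects absolute (x0, y0, x1, y1) pixel corners, the conversion can be sketched as follows. The function name is illustrative and the formats are an assumption to verify against the demo script.

```python
def cxcywh_norm_to_xyxy_abs(box, image_width, image_height):
    """Convert a normalized center-format box to absolute corner format.

    box: (cx, cy, w, h), each value in [0, 1] relative to the image size.
    Returns (x0, y0, x1, y1) in pixels.
    """
    cx, cy, w, h = box
    x0 = (cx - w / 2) * image_width
    y0 = (cy - h / 2) * image_height
    x1 = (cx + w / 2) * image_width
    y1 = (cy + h / 2) * image_height
    return (x0, y0, x1, y1)


if __name__ == "__main__":
    # A box centered in a 100x200 image that covers half of each dimension.
    print(cxcywh_norm_to_xyxy_abs((0.5, 0.5, 0.5, 0.5), 100, 200))
```

Getting this conversion wrong usually shows up as masks that are shifted or scaled relative to the detected object.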

export CUDA_VISIBLE_DEVICES=0

python grounded_sam_inpainting_demo.py \
  --config GroundingDINO/groundingdino/config/GroundingDINO_SwinT_OGC.py \
  --grounded_checkpoint groundingdino_swint_ogc.pth \
  --sam_checkpoint sam_vit_h_4b8939.pth \
  --input_image assets/inpaint_demo.jpg \
  --output_dir "outputs" \
  --box_threshold 0.3 \
  --text_threshold 0.25 \
  --det_prompt "bench" \
  --inpaint_prompt "A sofa, high quality, detailed" \
  --device "cuda"

Run the Gradio app:

python gradio_app.py

- The gradio_app visualization is as follows:

It is easy to generate pseudo labels automatically as follows:

- Use BLIP (or other caption models) to generate a caption.

- Extract tags from the caption. We use ChatGPT to handle potentially complicated sentences.

- Use Grounded-Segment-Anything to generate the boxes and masks.
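The three steps above form a simple pipeline. The sketch below shows only the data flow; every function in it is a hypothetical stand-in (a fixed caption instead of BLIP, a naive stop-word filter instead of ChatGPT, and a dummy detector instead of Grounded-Segment-Anything), so it demonstrates the wiring rather than the repository's implementation.

```python
STOPWORDS = {"a", "an", "the", "on", "in", "of", "and", "with"}


def generate_pseudo_labels(image, caption_fn, extract_tags_fn, ground_and_segment_fn):
    """Caption the image, turn the caption into tags, then ground and segment the tags."""
    caption = caption_fn(image)
    tags = extract_tags_fn(caption)
    detections = ground_and_segment_fn(image, tags)
    return {"caption": caption, "tags": tags, "detections": detections}


# --- Stand-ins for the real models (assumptions, for illustration only) ---

def fake_caption(image):
    """Stand-in for a caption model such as BLIP."""
    return "a dog on a bench"


def naive_tags(caption):
    """Stand-in for the ChatGPT step: keep words that are not stop words."""
    return [word for word in caption.lower().split() if word not in STOPWORDS]


def fake_ground_and_segment(image, tags):
    """Stand-in for Grounded-Segment-Anything: one empty detection per tag."""
    return [{"label": tag, "box": None, "mask": None} for tag in tags]


if __name__ == "__main__":
    labels = generate_pseudo_labels(
        image=None,
        caption_fn=fake_caption,
        extract_tags_fn=naive_tags,
        ground_and_segment_fn=fake_ground_and_segment,
    )
    print(labels["tags"])
```

Passing the three stages in as functions keeps each model swappable, which matches the note above that other caption models can be used.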

- Run Demo

export CUDA_VISIBLE_DEVICES=0

python automatic_label_demo.py \
  --config GroundingDINO/groundingdino/config/GroundingDINO_SwinT_OGC.py \
  --grounded_checkpoint groundingdino_swint_ogc.pth \
  --sam_checkpoint sam_vit_h_4b8939.pth \
  --input_image assets/demo3.jpg \
  --output_dir "outputs" \
  --openai_key your_openai_key \
  --box_threshold 0.25 \
  --text_threshold 0.2 \
  --iou_threshold 0.5 \
  --device "cuda"

- The pseudo labels and model prediction visualization will be saved in output_dir.
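The `--iou_threshold` flag suggests that overlapping boxes are de-duplicated before the labels are written. One common way to do this is greedy non-maximum suppression (NMS). The following plain-Python sketch shows that technique under the assumption that boxes are (x0, y0, x1, y1) tuples; it is an illustration, not the script's actual code.

```python
def iou(a, b):
    """Intersection over union of two (x0, y0, x1, y1) boxes."""
    ix0, iy0 = max(a[0], b[0]), max(a[1], b[1])
    ix1, iy1 = min(a[2], b[2]), min(a[3], b[3])
    intersection = max(0.0, ix1 - ix0) * max(0.0, iy1 - iy0)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


def nms(boxes, scores, iou_threshold=0.5):
    """Return indices of the boxes to keep, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not overlap too much with a better one.
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


if __name__ == "__main__":
    boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (20, 20, 30, 30)]
    scores = [0.9, 0.8, 0.7]
    print(nms(boxes, scores, iou_threshold=0.5))
```

In this example the second box overlaps the first with an IoU of about 0.68, so it is suppressed, while the third box does not overlap anything and is kept.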

If you find this project helpful for your research, please consider citing the following BibTeX entry.

@article{kirillov2023segany,
  title={Segment Anything},
  author={Kirillov, Alexander and Mintun, Eric and Ravi, Nikhila and Mao, Hanzi and Rolland, Chloe and Gustafson, Laura and Xiao, Tete and Whitehead, Spencer and Berg, Alexander C. and Lo, Wan-Yen and Doll{\'a}r, Piotr and Girshick, Ross},
  journal={arXiv:2304.02643},
  year={2023}
}

@inproceedings{ShilongLiu2023GroundingDM,
  title={Grounding DINO: Marrying DINO with Grounded Pre-Training for Open-Set Object Detection},
  author={Shilong Liu and Zhaoyang Zeng and Tianhe Ren and Feng Li and Hao Zhang and Jie Yang and Chunyuan Li and Jianwei Yang and Hang Su and Jun Zhu and Lei Zhang},
  year={2023}
}