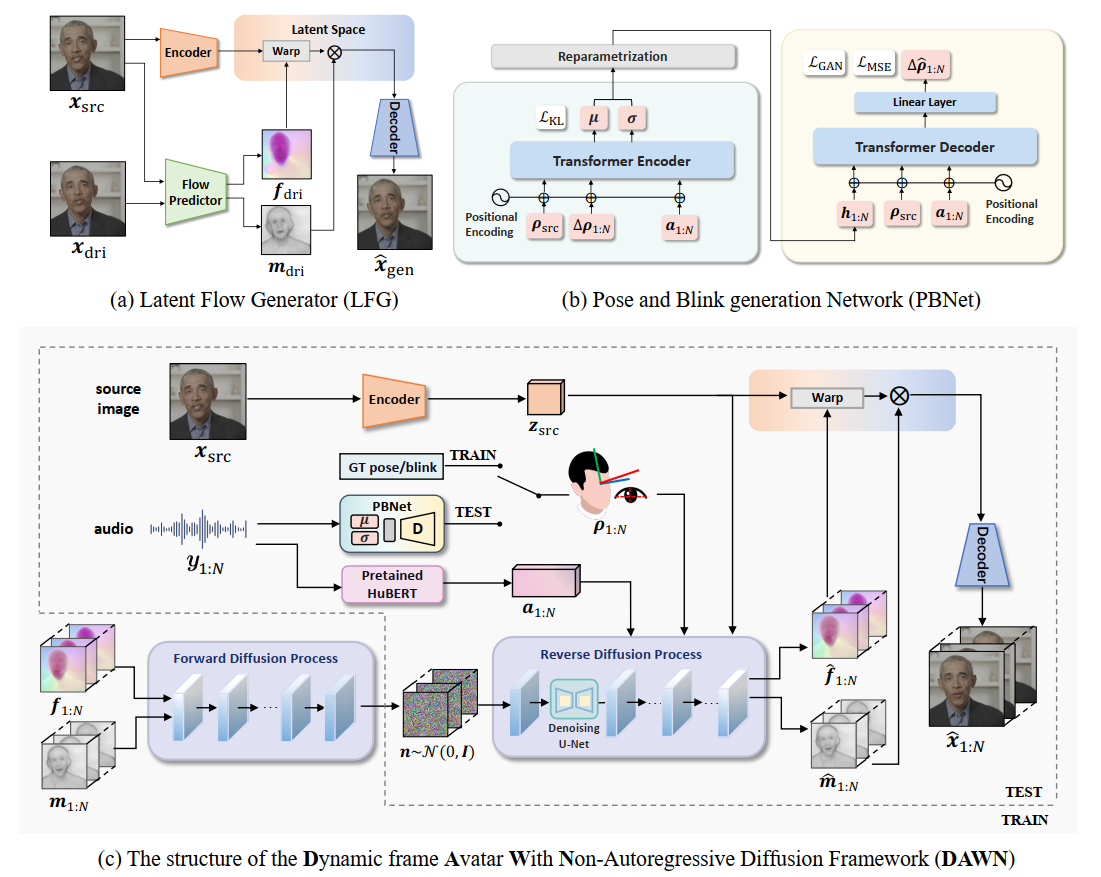

🌅 DAWN: Dynamic Frame Avatar with Non-autoregressive Diffusion Framework for Talking Head Video Generation

- 2024.10.14🔥 We release the Demo page.

- 2024.10.18🔥 We release the paper DAWN.

- 2024.10.21🔥 We update the Chinese introduction.

- 2024.11.7🔥🔥 We release the pretrained model on Hugging Face.

- 2024.11.9🔥🔥🔥 We release the inference code. We sincerely invite you to try our model. 😊

- 2025.2.16🔥🔥🔥 We optimize the unified inference code. Now you can run the test pipeline with a single script. 🚀

- release the inference code

- release the pretrained model of 128*128

- release the pretrained model of 256*256

- release the unified test code

- in progress ...

With our VRAM-oriented optimized code, the maximum length of video that can be generated scales linearly with the size of the GPU VRAM: more VRAM allows longer videos.

- To generate 128*128 video, we recommend a GPU with 12GB or more VRAM, which can generate videos of roughly 400 frames or more.

- To generate 256*256 video, we recommend a GPU with 24GB or more VRAM, which can generate videos of roughly 200 frames or more.
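You can turn the two reference points above into a rough per-GPU estimate. The sketch below assumes that frame capacity is directly proportional to VRAM (a straight line through zero). That assumption ignores fixed costs such as model weights, so treat the result as a ballpark figure. The function name estimate_max_frames is hypothetical and is not part of the repository.

```python
# Reference points quoted in the README: resolution -> (VRAM in GB, approx. frames).
REFERENCE_POINTS = {
    128: (12.0, 400),
    256: (24.0, 200),
}


def estimate_max_frames(vram_gb: float, resolution: int) -> int:
    """Rough frame capacity for a GPU with `vram_gb` GB of VRAM.

    ASSUMPTION: capacity is proportional to VRAM (linear through zero),
    scaled from the reference point for the chosen resolution. Fixed
    overhead such as model weights is ignored.
    """
    if resolution not in REFERENCE_POINTS:
        raise ValueError(f"resolution must be one of {sorted(REFERENCE_POINTS)}")
    ref_vram_gb, ref_frames = REFERENCE_POINTS[resolution]
    return int(ref_frames * vram_gb / ref_vram_gb)


if __name__ == "__main__":
    for res in (128, 256):
        for vram in (12, 16, 24, 48):
            frames = estimate_max_frames(vram, res)
            print(f"{res}*{res} on {vram}GB: ~{frames} frames")
```

For example, the estimate for 128*128 on a 24GB GPU is about 800 frames. The two reference points work out to 0.03 GB per frame at 128*128 and 0.12 GB per frame at 256*256. That is a 4x ratio for 4x the pixels, so the proportional assumption is at least consistent with the quoted numbers.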

PS: Although the optimized code improves VRAM utilization, it currently sacrifices inference speed because the local attention is not yet fully optimized. We are actively working on this issue, and if you have a better solution, we welcome your PR. If you need faster inference, you can use the unoptimized code, but it uses more VRAM (O(n²) space complexity).
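The trade-off above comes from how many attention scores must be held in memory. The sketch below counts score entries for full attention over n positions and compares them with a sliding local window of ±window positions. Several things are assumptions for illustration only: the window size, fp16 storage, and the single-head, single-layer view. None of them describe DAWN's actual attention layout.

```python
def full_attention_entries(n: int) -> int:
    """Scores in an n x n attention matrix: O(n^2) memory."""
    return n * n


def local_attention_entries(n: int, window: int) -> int:
    """Scores when position i attends only to positions within i +/- window.

    For a fixed window this grows as O(n * window), i.e. linearly in n.
    """
    total = 0
    for i in range(n):
        lo = max(0, i - window)
        hi = min(n - 1, i + window)
        total += hi - lo + 1  # number of keys visible to query i
    return total


def scores_mib(entries: int, bytes_per_entry: int = 2) -> float:
    """Memory for the scores in MiB, assuming fp16 (2 bytes) per entry."""
    return entries * bytes_per_entry / 2**20


if __name__ == "__main__":
    for n in (200, 400, 800):
        full = full_attention_entries(n)
        local = local_attention_entries(n, window=8)  # hypothetical window size
        print(f"n={n}: full={full} ({scores_mib(full):.2f} MiB), "
              f"local={local} ({scores_mib(local):.2f} MiB)")
```

Take n = 400 and window = 8. Full attention stores 160,000 scores, while the local variant stores 6,728. Doubling n quadruples the first number but only roughly doubles the second.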

We highly recommend running DAWN on a Linux platform. Running on Windows may produce some junk files that must be deleted manually. Deploying the 3DDFA repository (our extract_init_states folder) on Windows also requires additional effort (see the related comment).

- set up the conda environment

conda create -n DAWN python=3.8

conda activate DAWN

pip install -r requirements.txt
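After activating the environment, a quick sanity check can catch a wrong interpreter or missing packages before the first run. This is a minimal sketch. The module names in REQUIRED_MODULES are placeholders (an assumption), so replace them with the packages listed in requirements.txt.

```python
import importlib.util
import sys
from typing import Iterable, List, Sequence, Tuple

# ASSUMPTION: placeholder import names; use the packages from requirements.txt.
# An import name can differ from its pip name (e.g. opencv-python -> cv2).
REQUIRED_MODULES = ["numpy", "torch"]


def python_version_ok(version_info: Sequence[int] = sys.version_info,
                      expected: Tuple[int, int] = (3, 8)) -> bool:
    """True if major.minor matches the version used to create the environment."""
    return tuple(version_info[:2]) == expected


def missing_modules(names: Iterable[str]) -> List[str]:
    """Return the top-level module names that cannot be found."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    print("Python 3.8:", python_version_ok())
    print("Missing modules:", missing_modules(REQUIRED_MODULES) or "none")
```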

Our model is trained only on the HDTF dataset and has few parameters. To get the best driving results, please provide input images that:

- are standard portrait photos as much as possible, without hats or large headgear

- have a clear boundary between the background and the subject

- have the face occupying the main position in the image
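To screen many candidate images automatically, you can check the face bounding box returned by any face detector of your choice. This sketch covers only the last guideline (face size and position). The thresholds MIN_AREA_RATIO and MAX_CENTER_OFFSET are hypothetical values for illustration, not numbers used by DAWN.

```python
from dataclasses import dataclass


@dataclass
class BBox:
    """Face box in pixels: (x0, y0) top-left, (x1, y1) bottom-right."""
    x0: float
    y0: float
    x1: float
    y1: float


def face_area_ratio(box: BBox, width: int, height: int) -> float:
    """Fraction of the image area covered by the face box (clipped to the image)."""
    w = max(0.0, min(box.x1, width) - max(box.x0, 0))
    h = max(0.0, min(box.y1, height) - max(box.y0, 0))
    return (w * h) / (width * height)


def face_center_offset(box: BBox, width: int, height: int) -> float:
    """Distance of the box center from the image center, in image-size units."""
    cx = (box.x0 + box.x1) / 2 / width - 0.5
    cy = (box.y0 + box.y1) / 2 / height - 0.5
    return (cx ** 2 + cy ** 2) ** 0.5


# HYPOTHETICAL thresholds for illustration only; tune them on your own images.
MIN_AREA_RATIO = 0.15
MAX_CENTER_OFFSET = 0.2


def face_is_prominent(box: BBox, width: int, height: int) -> bool:
    """True if the face is large enough and close enough to the center."""
    return (face_area_ratio(box, width, height) >= MIN_AREA_RATIO
            and face_center_offset(box, width, height) <= MAX_CENTER_OFFSET)
```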

The preparation for inference:

- Download the pretrained checkpoints from Hugging Face, create the ./pretrain_models directory, and put the checkpoint files into it. Please also download the Hubert model from facebook/hubert-large-ls960-ft. The directory structure should look like this:

pretrain_models/
├── LFG_256_400ep.pth
├── LFG_128_1000ep.pth
├── DAWN_256.pth
├── DAWN_128.pth
└── hubert-large-ls960-ft/
    ├── .....

- Run the inference script:

python unified_video_generator.py \
    --audio_path your/audio/path \
    --image_path your/image/path \
    --output_path output/path \
    --cache_path cache/path \
    --resolution 128

Set --resolution to 128 or 256 (this argument is optional).
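You can wrap the preparation and the run in a small Python launcher that checks the ./pretrain_models layout listed above before calling the script. This sketch assumes it runs from the repository root. The helper names are hypothetical, and it passes only the command-line flags shown in the command above. Leaving resolution as None omits --resolution, so the script's own default applies.

```python
import subprocess
import sys
from pathlib import Path
from typing import List, Optional

# Checkpoint layout from the directory tree in this README.
EXPECTED_FILES = ["LFG_256_400ep.pth", "LFG_128_1000ep.pth", "DAWN_256.pth", "DAWN_128.pth"]
EXPECTED_DIRS = ["hubert-large-ls960-ft"]


def missing_checkpoints(root: Path) -> List[str]:
    """List expected checkpoint files/folders that are absent under `root`."""
    missing = [name for name in EXPECTED_FILES if not (root / name).is_file()]
    missing += [name for name in EXPECTED_DIRS if not (root / name).is_dir()]
    return missing


def build_command(audio_path: str, image_path: str, output_path: str,
                  cache_path: str, resolution: Optional[int] = None) -> List[str]:
    """Build argv for unified_video_generator.py with the README's flags."""
    cmd = [
        sys.executable, "unified_video_generator.py",
        "--audio_path", audio_path,
        "--image_path", image_path,
        "--output_path", output_path,
        "--cache_path", cache_path,
    ]
    if resolution is not None:  # omit the flag to keep the script's default
        if resolution not in (128, 256):
            raise ValueError("resolution must be 128 or 256")
        cmd += ["--resolution", str(resolution)]
    return cmd


def main() -> None:
    """Check checkpoints, then run one inference (from the repository root)."""
    missing = missing_checkpoints(Path("./pretrain_models"))
    if missing:
        sys.exit(f"Missing in ./pretrain_models: {missing}")
    cmd = build_command("your/audio/path", "your/image/path",
                        "output/path", "cache/path", resolution=128)
    subprocess.run(cmd, check=True)
```

Replace the placeholder paths, then call main() from the repository root.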

Inference on other datasets:

You can test on any dataset by doing two things. First, specify the audio_path, image_path, and output_path of the VideoGenerator class for each inference. Second, modify directory_name and output_video_path in unified_video_generator.py (Lines 310-312 and 393-394) to control how generated images and videos are named and saved.
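For batch testing, an alternative to editing the naming logic by hand is to give every sample its own output and cache directory. The sketch below assumes a hypothetical dataset layout in which images and audio clips are paired by file name. It calls the command-line script once per pair rather than using the VideoGenerator class directly, because the class's constructor arguments are not documented here.

```python
import subprocess
import sys
from pathlib import Path
from typing import Iterable, List, Tuple

IMAGE_EXTS = {".png", ".jpg", ".jpeg"}


def pair_by_stem(images: Iterable[Path],
                 audios: Iterable[Path]) -> List[Tuple[str, Path, Path]]:
    """Match image and .wav files that share a file stem (e.g. id01.png + id01.wav)."""
    image_map = {p.stem: p for p in images if p.suffix.lower() in IMAGE_EXTS}
    audio_map = {p.stem: p for p in audios if p.suffix.lower() == ".wav"}
    common = sorted(image_map.keys() & audio_map.keys())
    return [(stem, image_map[stem], audio_map[stem]) for stem in common]


def run_dataset(root: Path, out_root: Path, cache_root: Path,
                resolution: int = 128) -> None:
    """Run unified_video_generator.py once per pair, one output/cache dir per sample.

    HYPOTHETICAL layout: <root>/images/<id>.<png|jpg|jpeg> and <root>/audio/<id>.wav.
    """
    pairs = pair_by_stem((root / "images").glob("*"), (root / "audio").glob("*"))
    for stem, image, audio in pairs:
        cmd = [
            sys.executable, "unified_video_generator.py",
            "--audio_path", str(audio),
            "--image_path", str(image),
            "--output_path", str(out_root / stem),
            "--cache_path", str(cache_root / stem),
            "--resolution", str(resolution),
        ]
        subprocess.run(cmd, check=True)
```

The file names inside each output directory are still decided by directory_name and output_video_path in unified_video_generator.py.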

If you wish to refer to the baseline results published here, please use the following BibTeX entry:

@misc{dawn2024,
  title={DAWN: Dynamic Frame Avatar with Non-autoregressive Diffusion Framework for Talking Head Video Generation},
  author={Hanbo Cheng and Limin Lin and Chenyu Liu and Pengcheng Xia and Pengfei Hu and Jiefeng Ma and Jun Du and Jia Pan},
  year={2024},
  eprint={2410.13726},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2410.13726},
}

Limin Lin and Hanbo Cheng contributed equally to the project.

Thank you to the authors of Diffused Heads for assisting us in reproducing their work! We also extend our gratitude to the authors of MRAA, LFDM, 3DDFA_V2 and ACTOR for their contributions to the open-source community. Lastly, we thank our mentors and co-authors for their continuous support in our research work!