This repository contains the official PyTorch implementation for the CVPR 2024 Highlight paper titled "Latent Modulated Function for Computational Optimal Continuous Image Representation" by Zongyao He and Zhi Jin.

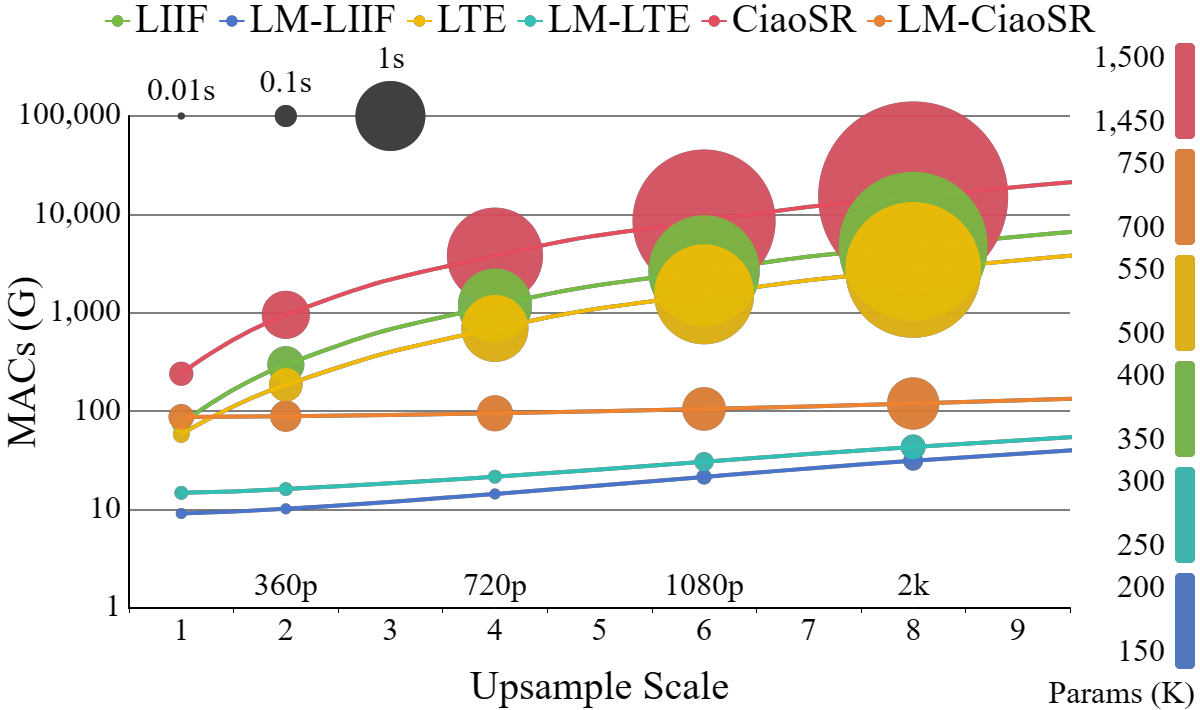

The recent work Local Implicit Image Function (LIIF) and subsequent Implicit Neural Representation (INR) based works have achieved remarkable success in Arbitrary-Scale Super-Resolution (ASSR) by using an MLP to decode Low-Resolution (LR) features. However, these continuous image representations typically perform decoding in High-Resolution (HR) High-Dimensional (HD) space, so the computational cost grows quadratically with the scale factor, which seriously hinders the practical application of ASSR.

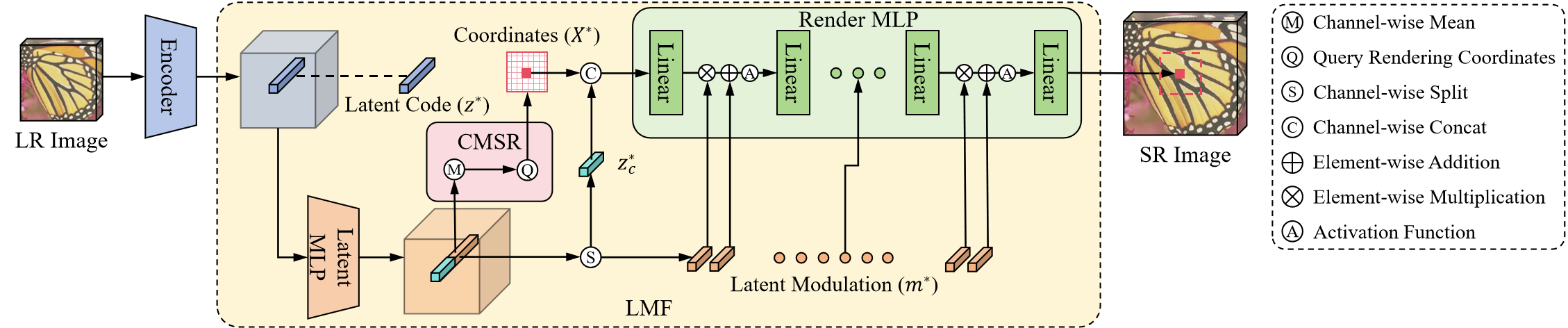

To tackle this problem, we propose a novel Latent Modulated Function (LMF), which decouples the HR-HD decoding process into shared latent decoding in LR-HD space and independent rendering in HR Low-Dimensional (LD) space, thereby realizing the first computationally optimal paradigm of continuous image representation. Specifically, LMF uses an HD MLP in latent space to generate latent modulations for each LR feature vector. These modulations let a modulated LD MLP in render space adapt quickly to any input feature vector and render at arbitrary resolution. Furthermore, we leverage the positive correlation between modulation intensity and input image complexity to design a Controllable Multi-Scale Rendering (CMSR) algorithm, which offers the flexibility to trade rendering precision for decoding efficiency.
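To get a rough feel for why the decoupling helps, the back-of-the-envelope sketch below counts multiply-accumulate operations (MACs) for the two decoding strategies. The layer widths, the 48x48 LR size, and all function names are illustrative assumptions, not the configurations used in the paper, so the printed ratios are not expected to match the reported figures.

```python
def mlp_macs(widths):
    """MACs for one forward pass of an MLP with the given layer widths."""
    return sum(n_in * n_out for n_in, n_out in zip(widths, widths[1:]))


def direct_decoding_macs(lr_h, lr_w, scale, hd_widths):
    """HR-HD decoding: the wide MLP runs once per HR pixel."""
    hr_pixels = (lr_h * scale) * (lr_w * scale)
    return hr_pixels * mlp_macs(hd_widths)


def decoupled_decoding_macs(lr_h, lr_w, scale, latent_widths, render_widths):
    """LMF-style decoding: wide MLP once per LR pixel, narrow MLP once per HR pixel."""
    lr_pixels = lr_h * lr_w
    hr_pixels = (lr_h * scale) * (lr_w * scale)
    return lr_pixels * mlp_macs(latent_widths) + hr_pixels * mlp_macs(render_widths)


# Hypothetical widths chosen only for illustration.
HD_WIDTHS = [256, 256, 256, 256, 3]
LATENT_WIDTHS = [256, 256, 256, 64]
RENDER_WIDTHS = [2, 16, 16, 16, 3]

if __name__ == "__main__":
    for scale in (2, 4, 8):
        direct = direct_decoding_macs(48, 48, scale, HD_WIDTHS)
        decoupled = decoupled_decoding_macs(48, 48, scale, LATENT_WIDTHS, RENDER_WIDTHS)
        print(f"x{scale}: direct={direct:,} decoupled={decoupled:,} "
              f"ratio={direct / decoupled:.1f}")
```

Doubling the scale quadruples the per-HR-pixel term in both cases, but in the decoupled case that term is multiplied by the much smaller render MLP, while the wide MLP cost stays fixed at the LR resolution.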

Extensive experiments demonstrate that converting existing INR-based ASSR methods to LMF can reduce the computational cost by up to 99.9%, accelerate inference by up to 57×, and save up to 76% of parameters, while maintaining competitive performance.

Ensure your environment meets the following prerequisites:

- Python: >= 3.8

- PyTorch: >= 1.9.1
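The prerequisites above can be checked with a short script like the following. This is a minimal sketch that is not part of the repository; the helper names `parse_version` and `meets_minimum` are hypothetical, and the parser simply ignores local suffixes such as `+cu118`.

```python
import importlib.util
import re
import sys


def parse_version(text):
    """Turn '1.13.1+cu117' into (1, 13, 1); anything after the numbers is ignored."""
    match = re.match(r"(\d+)\.(\d+)(?:\.(\d+))?", text)
    if match is None:
        raise ValueError(f"unrecognized version string: {text!r}")
    return tuple(int(part or 0) for part in match.groups())


def meets_minimum(version_text, minimum):
    """True if the parsed version is at least the given (major, minor, patch)."""
    return parse_version(version_text) >= tuple(minimum)


if __name__ == "__main__":
    print("Python OK:", sys.version_info[:3] >= (3, 8, 0))
    if importlib.util.find_spec("torch") is None:
        print("PyTorch is not installed")
    else:
        import torch
        print("PyTorch OK:", meets_minimum(torch.__version__, (1, 9, 1)))
```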

- Pre-existing Models: We provide LM-LIIF (EDSR-b) and LM-LTE (EDSR-b) models in the ./save/pretrained_models folder.

- Additional Models: Download more pre-trained models from this Google Drive link.

- Original Method Models: You can download the pre-trained models of the original methods in their repos (LIIF, LTE, CiaoSR).

Download the benchmark datasets from EDSR-PyTorch Repository and store them in the ./load folder. The benchmark datasets include:

- Set5

- Set14

- BSD100

- Urban100
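Before running evaluation, it can help to confirm that the datasets landed where expected. The sketch below is not part of the repository, and it assumes the folders under ./load are named exactly as in the list above; the downloaded archive may use different names or nesting, so adjust `EXPECTED_BENCHMARKS` to match.

```python
from pathlib import Path

# Assumed folder names; adjust them to match how the archive actually unpacks.
EXPECTED_BENCHMARKS = ("Set5", "Set14", "BSD100", "Urban100")


def missing_benchmarks(root, names=EXPECTED_BENCHMARKS):
    """Return the benchmark names with no matching directory anywhere under root."""
    root = Path(root)
    if not root.is_dir():
        return list(names)
    found = {p.name for p in root.rglob("*") if p.is_dir()}
    return [name for name in names if name not in found]


if __name__ == "__main__":
    missing = missing_benchmarks("./load")
    print("All benchmark folders found" if not missing else f"Missing: {missing}")
```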

- Test on a Single Image:

python demo.py --input demo.png --scale 4 --output demo_x4.png --model save/pretrained_models/edsr-b_lm-liif.pth --gpu 0 --fast True

- Test on a Testset:

python test.py --config configs/test-lmf/test-set5.yaml --model save/pretrained_models/edsr-b_lm-liif.pth --gpu 0 --fast True

- DIV2K Dataset: Download from the DIV2K official website and place the files in the ./load folder. Both DIV2K_train_HR and DIV2K_valid_HR are needed.

- Command to Start Training:

python train.py --config configs/train-lmf/train_edsr-baseline-lmliif.yaml --gpu 0 --name edsr-b_lm-liif

- Configuration Details: Configuration files for different LMF-based models are located in ./configs/train-lmf. Each configuration file details the model settings and hyperparameters.

- Output: Trained models and logs will be saved in the ./save folder.

- Evaluate without CMSR:

python test.py --config configs/test-lmf/test-div2k-x2.yaml --model save/pretrained_models/edsr-b_lm-liif.pth --gpu 0 --fast True

- Configuration Details: Modify the configuration files in the ./configs/test-lmf folder to evaluate on different testsets and scale factors.

- Output: The SR images are stored in the ./output folder.


- Evaluate with CMSR:

- Generate Scale2Mod Table: Multiple commands are needed for initializing CMSR at various scales. Here's an example for scale x2:

python init_cmsr.py --config configs/cmsr/init-div2k-x2.yaml --model edsr-b_lm-liif.pth --gpu 0 --mse_threshold 0.00002 --save_path configs/cmsr/result/edsr-b_lm-liif_2e-5.yaml

Repeat the above command with different scales as needed. Usually scales x2, x3, x4, x6, x12, x18 are dense enough for ASSR.

- Evaluate with CMSR Enabled:

python test.py --config configs/test-lmf/test-div2k-x8.yaml --model edsr-b_lm-liif.pth --gpu 0 --fast True --cmsr True --cmsr_mse 0.00002 --cmsr_path configs/cmsr/result/edsr-b_lm-liif_2e-5.yaml
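Since the Scale2Mod table needs one `init_cmsr.py` run per scale, the repeated commands can be generated with a small helper like the one below. This is a sketch under two assumptions that the text above does not confirm: that a config named `init-div2k-x{scale}.yaml` exists for every listed scale (only the x2 file is shown), and that every run writes to the same `--save_path`. The function name `init_cmsr_command` is hypothetical.

```python
import shlex

# Scales suggested above as dense enough for ASSR.
SCALES = (2, 3, 4, 6, 12, 18)


def init_cmsr_command(scale, model="edsr-b_lm-liif.pth", mse_threshold="0.00002",
                      save_path="configs/cmsr/result/edsr-b_lm-liif_2e-5.yaml", gpu="0"):
    """Build the init_cmsr.py argument list for one scale."""
    # Assumption: the config file name follows the pattern of the x2 example.
    config = f"configs/cmsr/init-div2k-x{scale}.yaml"
    return ["python", "init_cmsr.py", "--config", config, "--model", model,
            "--gpu", gpu, "--mse_threshold", mse_threshold, "--save_path", save_path]


if __name__ == "__main__":
    # Print the commands for review; nothing is executed here.
    for scale in SCALES:
        print(shlex.join(init_cmsr_command(scale)))
```

Printing the commands rather than running them keeps the sketch safe to try; once the config names are confirmed, each list can be passed to `subprocess.run(..., check=True)`.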


- Release the paper on arXiv.

- Release the code for Latent Modulated Function (LMF).

- Release more pre-trained models and evaluation results.

This work was supported by Frontier Vision Lab, Sun Yat-sen University.

Special acknowledgment goes to the following projects: LIIF, LTE, CiaoSR, and DIIF.

If you find this work helpful, please consider citing:

@InProceedings{He_2024_CVPR,

author={He, Zongyao and Jin, Zhi},

title={Latent Modulated Function for Computational Optimal Continuous Image Representation},

booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

month={June},

year={2024},

pages={26026-26035}

}

Feel free to reach out for any questions or issues related to the project. Thank you for your interest!