Using Deep Learning to generate pop music!

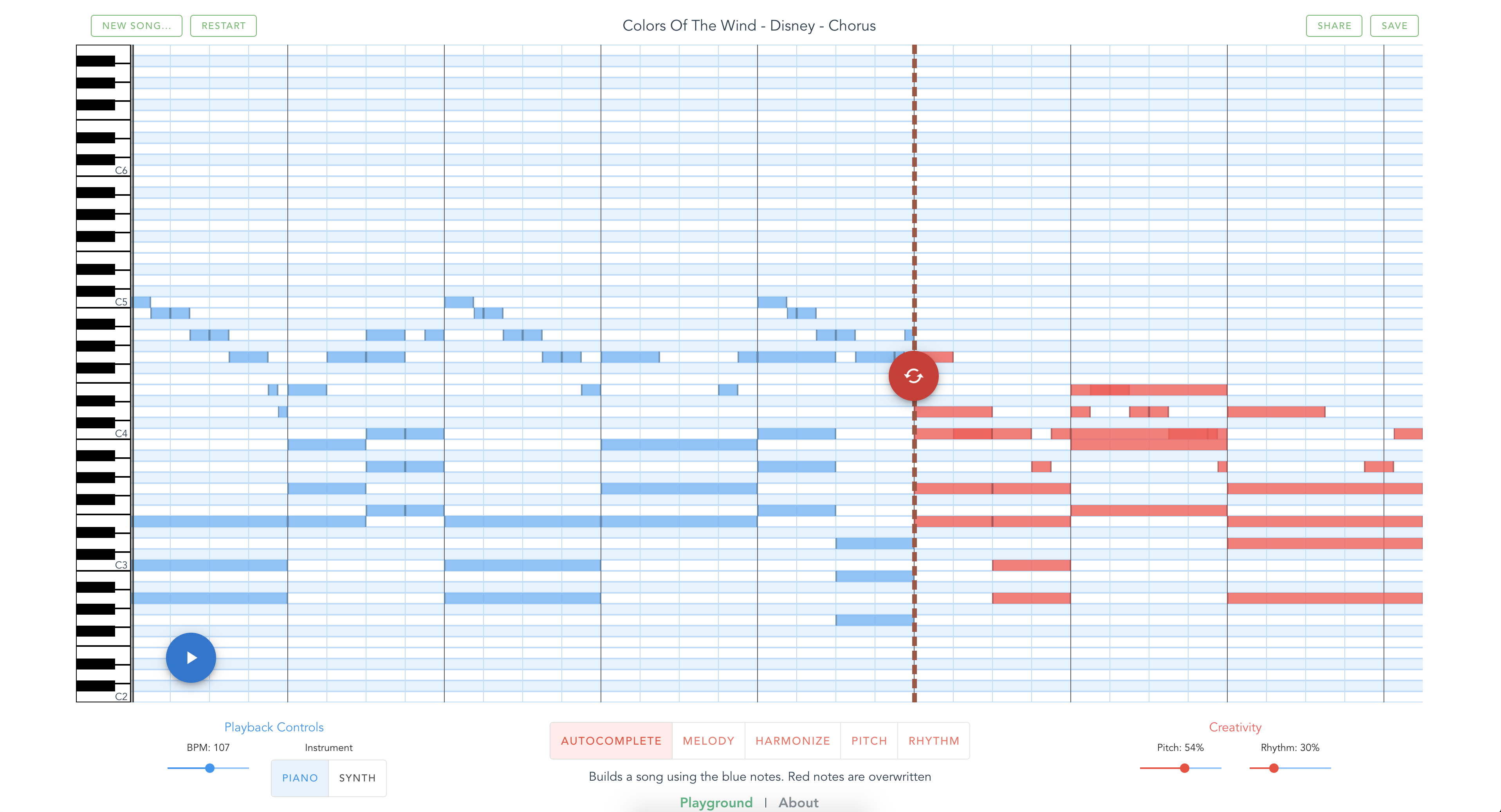

You can also experiment through the web app - musicautobot.com

Recent advances in NLP have produced amazing results in generating text. The Transformer architecture is a big reason behind this.

This project aims to leverage these powerful language models and apply them to music. It's built on top of the fast.ai library.

MusicTransformer - This basic model uses Transformer-XL to take a sequence of music notes and predict the next note.

MultitaskTransformer - Built on top of MusicTransformer, this model is trained on multiple tasks.

- Next Note Prediction (same as MusicTransformer)

- BERT Token Masking

- Sequence To Sequence Translation - Using chords to predict melody and vice versa.
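To make the first two tasks concrete, here is a minimal sketch of how training pairs for next note prediction and BERT-style token masking could be built from a token sequence. It is an illustration only, not the library's implementation: the function names, the `<mask>` token, and the 15% default masking rate are all assumptions.

```python
import random

MASK = "<mask>"  # hypothetical mask token; the library's actual token may differ


def next_note_pairs(tokens):
    """Next note prediction: the target is the input shifted left by one step."""
    return tokens[:-1], tokens[1:]


def mask_tokens(tokens, mask_prob=0.15, seed=None):
    """BERT-style masking: hide some tokens and keep the originals as targets.

    Returns (masked_input, targets). targets[i] is the original token where
    position i was masked, and None where the token was left visible.
    """
    rng = random.Random(seed)
    masked, targets = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            masked.append(MASK)
            targets.append(tok)
        else:
            masked.append(tok)
            targets.append(None)
    return masked, targets


notes = ["C4", "E4", "G4", "C5"]
inputs, targets = next_note_pairs(notes)
masked_inputs, masked_targets = mask_tokens(notes, seed=0)
```

The sequence to sequence task follows the same pattern, with a chord sequence as input and the matching melody as target (or the reverse).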

Training on multiple tasks means we can generate some really cool predictions (Check out this Notebook):

- Harmonization - generate accompanying chords

- Melody - new melody from existing chord progression

- Remix tune - new song in the rhythm of a reference song

- Remix beat - same tune, different rhythm

Details are explained in this 4-part series:

- Part I - Creating a Pop Music Generator

- Part II - Implementation details

- Part III - Multitask Transformer

- Part IV - Composing a song with Multitask

Example notebooks:

- Play with predictions on Google Colab

  - MusicTransformer Generate - Loads a pretrained model and shows how to generate/predict new notes.

  - MultitaskTransformer Generate - Loads a pretrained model and shows how to harmonize, generate new melodies, and remix existing songs.

- MusicTransformer

  - Train - End-to-end example of how to create a dataset from midi files and train a model from scratch.

  - Generate - Loads a pretrained model and shows how to generate/predict new notes.

- MultitaskTransformer

  - Train - End-to-end example of creating a seq2seq and masked dataset for multitask training.

  - Generate - Loads a pretrained model and shows how to harmonize, generate new melodies, and remix existing songs.

- Data Encoding

  - Midi2Tensor - Shows how the library internally encodes midi files to tensors for training.

  - MusicItem - MusicItem is a wrapper that makes it easy to manipulate midi data. Convert midi to tensor, apply data transformations, even play music or display the notes within the browser.
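For a feel of what turning notes into model input involves, the sketch below flattens a list of notes into string tokens and then into integer indices. This is a simplified, hypothetical scheme for illustration; the token names (`n60`, `d4`, `sep`) and helper functions are assumptions, and the Midi2Tensor notebook remains the reference for the encoding the library really uses.

```python
def encode_notes(notes):
    """Flatten notes into a token list.

    notes: list of (start_step, midi_pitch, duration_steps) tuples, with time
    measured in fixed steps (for example sixteenth notes).
    Each note becomes a pitch token followed by a duration token. A "sep"
    token followed by a duration token marks time advancing between notes.
    """
    tokens = []
    current = 0
    for start, pitch, dur in sorted(notes):
        if start > current:
            tokens += ["sep", f"d{start - current}"]
            current = start
        tokens += [f"n{pitch}", f"d{dur}"]
    return tokens


def build_vocab(tokens):
    """Map each distinct token to an integer index (sorted for determinism)."""
    return {tok: i for i, tok in enumerate(sorted(set(tokens)))}


def to_indices(tokens, vocab):
    """Convert tokens to the integer sequence a model would consume."""
    return [vocab[tok] for tok in tokens]


# C and E played together for 4 steps, then G for 2 steps.
notes = [(0, 60, 4), (0, 64, 4), (4, 67, 2)]
tokens = encode_notes(notes)
vocab = build_vocab(tokens)
indices = to_indices(tokens, vocab)
```

The resulting list of integers can then be wrapped in a tensor for training.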

Pretrained models are available as MusicTransformer and MultitaskTransformer (small and large).

Each model has an additional keyC version. keyC means that the model has been trained solely on music transposed to the key of C (all white keys). These models produce better results, but expect all input to be in the key of C.
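If your input is in another key, it needs to be transposed before it goes to a keyC model. The sketch below shows the idea on raw MIDI pitch numbers, assuming you already know the tonic of a major-key piece. The helper names are hypothetical and not part of the library, and detecting the key is out of scope here.

```python
# Pitch class of each note name, counted in semitones above C.
PITCH_CLASSES = {
    "C": 0, "C#": 1, "D": 2, "D#": 3, "E": 4, "F": 5,
    "F#": 6, "G": 7, "G#": 8, "A": 9, "A#": 10, "B": 11,
}


def semitones_to_c(tonic):
    """Smallest shift in semitones (between -6 and +5) that moves tonic to C."""
    pc = PITCH_CLASSES[tonic]
    return -pc if pc <= 6 else 12 - pc


def transpose_to_c(pitches, tonic):
    """Shift every MIDI pitch by the same interval so the piece lands in C."""
    shift = semitones_to_c(tonic)
    return [p + shift for p in pitches]


# A D major triad (D4, F#4, A4) becomes a C major triad (C4, E4, G4).
d_major_triad = [62, 66, 69]
in_c = transpose_to_c(d_major_triad, "D")
```

Choosing the smallest shift keeps the transposed notes close to their original register.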

For details on how to load these models, follow the Generate and Multitask Generate notebooks.

- musicautobot/

  - numpy_encode.py - submodule for encoding midi to tensor

  - music_transformer.py - Submodule structure similar to fastai's library.

    - Learner, Model, Transform - MusicItem, Dataloader

  - multitask_transformer.py - Submodule structure similar to fastai's library.

    - Learner, Model, Transform - MusicItem, Dataloader

CLI scripts for training models:

run_multitask.py - multitask training

python run_multitask.py --epochs 14 --save multitask_model --batch_size=16 --bptt=512 --lamb --data_parallel --lr 1e-4

run_music_transformer.py - music model training

python run_music_transformer.py --epochs 14 --save music_model --batch_size=16 --bptt=512 --lr 1e-4

run_ddp.sh - Helper script to train with multiple GPUs (DistributedDataParallel). Only works with run_music_transformer.py

SCRIPT=run_multitask.py bash run_ddp.sh --epochs 14 --save music_model --batch_size=16 --bptt=512 --lr 1e-4

Commands must be run inside the scripts/ folder.

- Install anaconda: https://www.anaconda.com/distribution/

- Run:

git clone https://github.com/bearpelican/musicautobot.git

cd musicautobot

conda env update -f environment.yml

source activate musicautobot

- Install Musescore - to view sheet music within a jupyter notebook

Ubuntu:

sudo apt-get install musescore

MacOS - download

Installation:

cd serve

conda env update -f environment.yml

You need to set up an S3 bucket to save your predictions. After you've created a bucket, update the config api/api.cfg with the new bucket name.

Development:

python run.py

Production:

gunicorn -b 127.0.0.1:5000 run_guni:app --timeout 180 --workers 8

Unfortunately I cannot provide the dataset used for training the model.

Here are some suggestions:

- Classical Archives - incredible catalog of high quality classical midi

- HookTheory - great data for sequence to sequence predictions. Need to manually copy files into hookpad

- Reddit - 130k files

- Lakh - great research dataset

This project is built on top of fast.ai's deep learning library and music21's incredible musicology library.