OVO is a novel training framework that directly optimizes feature representations using causal techniques. It has two main components, COP and VOP. From the perspective of correlations among features, COP reduces spurious relations by minimizing the covariance computed over all features. From the perspective of each individual feature, VOP makes the feature more causal by minimizing the variance of that feature computed over samples with the same label.
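To make the two objectives concrete, here is a minimal NumPy sketch of a covariance penalty and a per-label variance penalty. It is an illustration under assumptions, not the implementation in ./code/OVO: the function names `cop_penalty` and `vop_penalty` are hypothetical, "minimizing the covariance" is read here as penalizing the squared off-diagonal entries of the feature covariance matrix, and the exact losses in the paper may differ.

```python
import numpy as np


def cop_penalty(features):
    """Covariance-style penalty (assumed form): mean squared off-diagonal
    entry of the covariance matrix between feature dimensions."""
    features = np.asarray(features, dtype=float)
    n, d = features.shape
    centered = features - features.mean(axis=0, keepdims=True)
    cov = centered.T @ centered / max(n - 1, 1)
    off_diag = cov - np.diag(np.diag(cov))  # zero out the variances
    return float((off_diag ** 2).sum() / max(d * (d - 1), 1))


def vop_penalty(features, labels):
    """Variance-style penalty (assumed form): per-dimension variance of the
    features within each label group, averaged over dimensions and labels."""
    features = np.asarray(features, dtype=float)
    labels = np.asarray(labels)
    classes = np.unique(labels)
    total = 0.0
    for c in classes:
        group = features[labels == c]
        total += group.var(axis=0).mean()
    return float(total / len(classes))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    feats = rng.normal(size=(8, 4))          # 8 samples, 4 feature dimensions
    labs = np.array([0, 0, 1, 1, 2, 2, 2, 0])
    # A combined regularizer would typically be added to the task loss
    # with weighting coefficients chosen by the user.
    print(cop_penalty(feats), vop_penalty(feats, labs))
```

In a training loop, terms like these would be computed on a batch of representations and added to the baseline's task loss; the weighting between them is left open in this sketch.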

Source code for the paper "CoVariance-based Causal Debiasing for Entity and Relation Extraction".

The code for OVO is in ./code/OVO.

We apply OVO to three baselines, whose code is located as follows:

- PURE: ./code/PURE
- SpERT.PL: ./code/SpERT_PL
- PL-Marker: ./code/PL-Marker

For each baseline, the OVO code is in its training directory, for example ./code/PURE/training.

We use two widely used datasets, ACE05 and SciERC, which are available on the Internet.

The datasets are located as follows:

PURE:

PURE

|-ace05

|-scierc_data

SpERT.PL:

|-SpERT_PL

|-InputsAndOutputs

|--data

|---datasets

|----ace05

|----scierc

PL-Marker:

PURE

|-ace05

|-scierc

Note: For SpERT.PL, the datasets must first be processed with generate_augmented_input.py.
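Before training, it can help to confirm that the dataset directories are where each baseline expects them. The helper below is a small sketch, not part of the repository: `missing_dataset_dirs` and `EXPECTED` are hypothetical names, the paths are copied from the listing above (including the PURE root shown for PL-Marker), and the nesting of the SpERT.PL tree and the base directory the paths are relative to are assumptions.

```python
from pathlib import Path

# Paths copied from the listing above. The SpERT.PL nesting and the base
# directory these paths are relative to are assumptions of this sketch.
EXPECTED = {
    "PURE": ["PURE/ace05", "PURE/scierc_data"],
    "SpERT.PL": [
        "SpERT_PL/InputsAndOutputs/data/datasets/ace05",
        "SpERT_PL/InputsAndOutputs/data/datasets/scierc",
    ],
    "PL-Marker": ["PURE/ace05", "PURE/scierc"],
}


def missing_dataset_dirs(root):
    """Return, per baseline, the expected dataset directories not found under root."""
    root = Path(root)
    return {
        name: [p for p in paths if not (root / p).is_dir()]
        for name, paths in EXPECTED.items()
    }


if __name__ == "__main__":
    for baseline, missing in missing_dataset_dirs(".").items():
        status = "ok" if not missing else "missing: " + ", ".join(missing)
        print(f"{baseline}: {status}")
```

Run it from the directory that contains the dataset folders; adjust `EXPECTED` if your checkout places them elsewhere.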

The results of the baselines with OVO applied are in ./results.

Note that the result files differ across baselines because each baseline produces different prediction files.

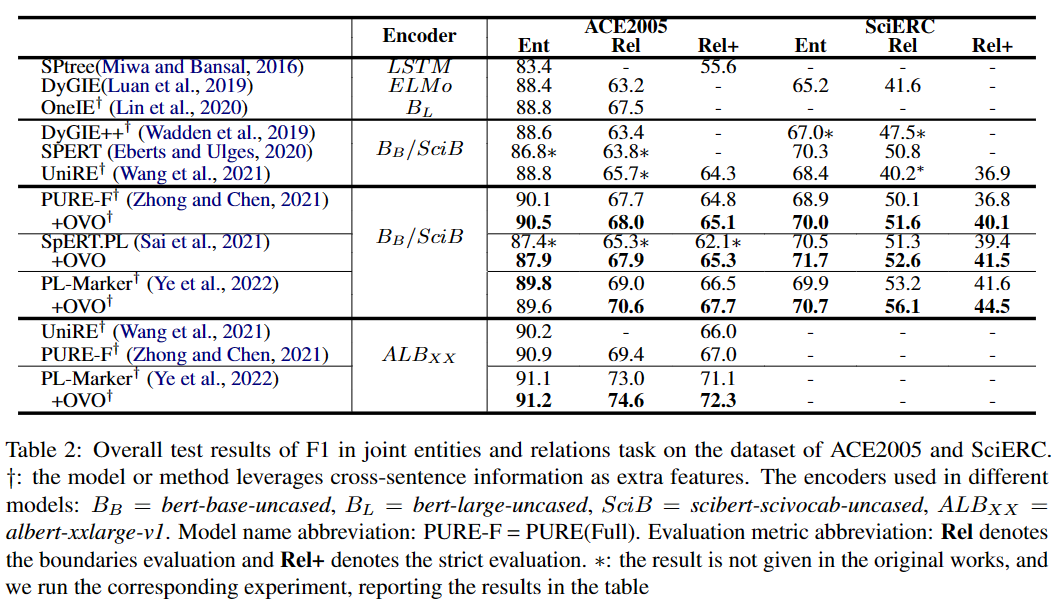

The experiments in the paper are as follows:

If you use our code in your research, please cite our work:

@inproceedings{ren-etal-2023-covariance,

title = "{C}o{V}ariance-based Causal Debiasing for Entity and Relation Extraction",

author = "Ren, Lin and

Liu, Yongbin and

Cao, Yixin and

Ouyang, Chunping",

editor = "Bouamor, Houda and

Pino, Juan and

Bali, Kalika",

booktitle = "Findings of the Association for Computational Linguistics: EMNLP 2023",

month = dec,

year = "2023",

address = "Singapore",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2023.findings-emnlp.173",

doi = "10.18653/v1/2023.findings-emnlp.173",

pages = "2627--2640",

}