[Project Page][Paper][Conference Paper]

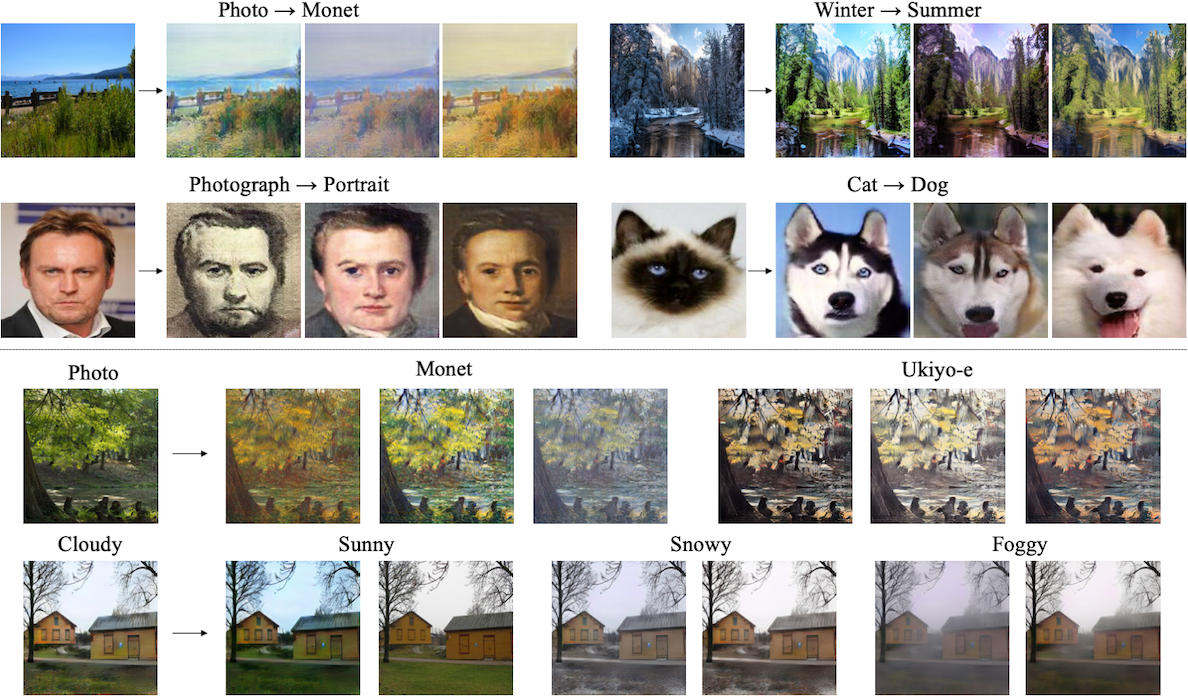

PyTorch implementation for our image-to-image translation method. With the proposed disentangled representation framework, we are able to learn diverse image-to-image translation from unpaired training data.

We have extended this work in two ways:

- We apply mode-seeking regularization to improve diversity; please see the training options for details.

- We apply DRIT to the multi-domain setting; please refer to MDMM if you're interested in it.

Contact: Hsin-Ying Lee (hlee246@ucmerced.edu) and Hung-Yu Tseng (htseng6@ucmerced.edu)

Please cite our paper if you find the code or dataset useful for your research.

DRIT++: Diverse Image-to-Image Translation via Disentangled Representations

Hsin-Ying Lee*, Hung-Yu Tseng*, Qi Mao*, Jia-Bin Huang, Yu-Ding Lu, Maneesh Kumar Singh, and Ming-Hsuan Yang

@article{DRIT_plus,

author = {Lee, Hsin-Ying and Tseng, Hung-Yu and Mao, Qi and Huang, Jia-Bin and Lu, Yu-Ding and Singh, Maneesh Kumar and Yang, Ming-Hsuan},

title = {DRIT++: Diverse Image-to-Image Translation via Disentangled Representations},

journal={International Journal of Computer Vision},

pages={1--16},

year={2020}

}

Diverse Image-to-Image Translation via Disentangled Representations

Hsin-Ying Lee*, Hung-Yu Tseng*, Jia-Bin Huang, Maneesh Kumar Singh, and Ming-Hsuan Yang

European Conference on Computer Vision (ECCV), 2018 (oral) (* equal contribution)

@inproceedings{DRIT,

author = {Lee, Hsin-Ying and Tseng, Hung-Yu and Huang, Jia-Bin and Singh, Maneesh Kumar and Yang, Ming-Hsuan},

booktitle = {European Conference on Computer Vision},

title = {Diverse Image-to-Image Translation via Disentangled Representations},

year = {2018}

}

- Python 3.5 or Python 3.6

- PyTorch 0.4.0 and torchvision (https://pytorch.org/)

- TensorboardX

- Tensorflow (for tensorboard usage)

- We provide a Docker file for building the environment based on CUDA 9.0, CuDNN 7.1, and Ubuntu 16.04.

- Clone this repo:

git clone https://github.com/HsinYingLee/DRIT.git

cd DRIT/src

- Download the dataset using the following script.

bash ../datasets/download_dataset.sh dataset_name

- portrait: 6452 photography images from CelebA dataset, 1811 painting images downloaded and cropped from Wikiart.

- cat2dog: 871 cat (birman) images, 1364 dog (husky, samoyed) images crawled and cropped from Google Images.

- You can follow the instructions on the CycleGAN website to download the Yosemite (winter, summer) dataset and the artworks (Monet, Van Gogh) dataset. For photo <-> artwork translation, we use the summer images in the Yosemite dataset as the photo images.
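Before training or testing, it can help to confirm that a downloaded dataset has the expected subfolders. The following is a minimal sketch, not part of this repository: `check_dataroot` is a hypothetical helper, `testA`/`testB` are the folders named in this README, and `trainA`/`trainB` are an assumption borrowed from the CycleGAN dataset convention.

```python
from pathlib import Path

# testA/testB are named in this README; trainA/trainB are an assumption
# borrowed from the CycleGAN dataset convention.
EXPECTED_SUBDIRS = ("trainA", "trainB", "testA", "testB")


def check_dataroot(dataroot, subdirs=EXPECTED_SUBDIRS):
    """Return a dict mapping each expected subfolder to its file count.

    A missing subfolder is reported as None so it can be told apart from
    an existing but empty one.
    """
    root = Path(dataroot)
    report = {}
    for name in subdirs:
        folder = root / name
        if folder.is_dir():
            report[name] = sum(1 for p in folder.iterdir() if p.is_file())
        else:
            report[name] = None
    return report


if __name__ == "__main__":
    for name, count in check_dataroot("../datasets/yosemite").items():
        status = "missing" if count is None else "{} files".format(count)
        print("{}: {}".format(name, status))
```

Run from `DRIT/src`, this prints one line per subfolder, which makes an incomplete download easy to spot.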

- Yosemite (summer <-> winter)

python3 train.py --dataroot ../datasets/yosemite --name yosemite

tensorboard --logdir ../logs/yosemite

Results and saved models can be found at ../results/yosemite.

- Portrait (photography <-> painting)

python3 train.py --dataroot ../datasets/portrait --name portrait --concat 0

tensorboard --logdir ../logs/portrait

Results and saved models can be found at ../results/portrait.
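When running several experiments, the training commands can be assembled programmatically. The sketch below uses only flags mentioned in this README; `build_train_command` is a hypothetical helper, and treating `--no_ms`, `--dis_spectral_norm`, and `--no_img_display` as value-less switches is an assumption based on how they are described here.

```python
import shlex


def build_train_command(dataroot, name, concat=None, d_iter=None,
                        dis_scale=None, no_ms=False,
                        dis_spectral_norm=False, no_img_display=False):
    """Assemble a train.py command line as a list of arguments.

    Options left at None or False are omitted, so train.py falls back to
    its own defaults for them.
    """
    cmd = ["python3", "train.py", "--dataroot", dataroot, "--name", name]
    # Options that take a value.
    for flag, value in (("--concat", concat), ("--d_iter", d_iter),
                        ("--dis_scale", dis_scale)):
        if value is not None:
            cmd += [flag, str(value)]
    # Options assumed to be simple on/off switches.
    for flag, enabled in (("--no_ms", no_ms),
                          ("--dis_spectral_norm", dis_spectral_norm),
                          ("--no_img_display", no_img_display)):
        if enabled:
            cmd.append(flag)
    return cmd


portrait_cmd = build_train_command("../datasets/portrait", "portrait",
                                   concat=0, d_iter=1)
# Print a shell-safe version of the command for copy and paste.
print(" ".join(shlex.quote(arg) for arg in portrait_cmd))
```

The returned list can be passed directly to `subprocess.run` from the `DRIT/src` directory.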

- Download a pre-trained model (We will upload the latest models in a few days)

bash ../models/download_model.sh

- Generate results with randomly sampled attributes

- Requires folder `testA` (for a2b) or `testB` (for b2a) under dataroot

python3 test.py --dataroot ../datasets/yosemite --name yosemite_random --resume ../models/example.pth

Diverse generated winter images can be found at ../outputs/yosemite_random

- Generate results with attributes encoded from given images

- Requires both folders `testA` and `testB` under dataroot

python3 test_transfer.py --dataroot ../datasets/yosemite --name yosemite_encoded --resume ../models/example.pth

Diverse generated winter images can be found at ../outputs/yosemite_encoded

- Mode seeking regularization is used by default. Set `--no_ms` to disable the regularization.

- Because the attribute encoders use adaptive pooling, our model supports various input sizes. For example, here's the result of Grayscale -> RGB using 340x340 images.

- We provide two different methods for combining the content representation and the attribute vector. One is simple concatenation; the other is feature-wise transformation (learning to scale and bias features). In our experience, if the translation involves less shape variation (e.g. winter <-> summer), simple concatenation produces better results. On the other hand, for translation with shape variation (e.g. cat <-> dog, photography <-> painting), feature-wise transformation should be used (i.e. set `--concat 0`) in order to generate diverse results.

- In our experience, using the multiscale discriminator often gives better results. You can set the number of scales manually with `--dis_scale`.

- The hyper-parameter `d_iter` controls the training schedule of the content discriminator. The default value is d_iter = 3, yet the model can still generate diverse results with d_iter = 1. Set `--d_iter 1` if you would like to save some training time.

- We also provide the option `--dis_spectral_norm` for using spectral normalization (https://arxiv.org/abs/1802.05957). We use the code from the master branch of pytorch since pytorch 0.5.0 is not stable yet. However, although spectral normalization significantly stabilizes the training, we fail to observe consistent quality improvement. We encourage everyone to play around with various settings and explore better configurations.

- Since the log file will be large if you display the images on tensorboard, set `--no_img_display` if you would like to display only the loss values.
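To illustrate the idea behind mode seeking regularization, here is a minimal pure-Python sketch. It is not the code in this repository: the function names, the mean absolute difference as the distance, and the `eps` value are assumptions for illustration. The loss is the inverse of the ratio between the distance of two generated images and the distance of the attribute codes that produced them, so it is large when different codes yield nearly identical outputs.

```python
def mean_abs_diff(a, b):
    """Mean absolute difference between two equal-length lists of floats."""
    if len(a) != len(b):
        raise ValueError("inputs must have the same length")
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def mode_seeking_loss(image_1, image_2, z_1, z_2, eps=1e-5):
    """Loss that shrinks as the outputs move apart relative to their codes.

    image_1 and image_2 stand for flattened outputs generated from the same
    content but different attribute codes z_1 and z_2.
    """
    z_distance = mean_abs_diff(z_1, z_2)
    if z_distance == 0:
        raise ValueError("z_1 and z_2 must differ")
    ratio = mean_abs_diff(image_1, image_2) / z_distance
    # eps keeps the loss finite when the two outputs are identical.
    return 1.0 / (ratio + eps)


z_a, z_b = [0.0, 1.0], [1.0, 0.0]
# Nearly identical outputs for different codes: high loss.
collapsed = mode_seeking_loss([0.5, 0.5], [0.5, 0.6], z_a, z_b)
# Clearly different outputs for different codes: low loss.
diverse = mode_seeking_loss([0.1, 0.9], [0.9, 0.1], z_a, z_b)
print(collapsed > diverse)
```

Minimizing this term alongside the other training losses pushes the generator to map different attribute codes to visibly different images.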