This repository contains a Transformer implementation that translates Korean sentences into English.

I used a translation dataset for NMT, but you can apply this model to any sequence-to-sequence (i.e. text generation) task, such as text summarization or response generation.

In this project, I specifically used the Korean-English translation corpus from AI Hub in order to apply torchtext to a Korean dataset.

I also used the soynlp library to tokenize Korean sentences. It is really nice and easy to use; you should try it if you plan to handle Korean sentences :)

Currently, the lowest validation and test losses are 2.047 and 3.488, respectively.
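To give the losses above a more intuitive scale, they can be converted to perplexity. This is only a rough sketch: it assumes the reported numbers are mean per-token cross-entropy values in nats, which this README does not state.

```python
import math

# Losses reported above. Treating them as mean per-token cross-entropy
# in nats is an assumption, not something the repository confirms.
valid_loss = 2.047
test_loss = 3.488


def perplexity(loss: float) -> float:
    """Convert a mean cross-entropy loss (in nats) to perplexity."""
    return math.exp(loss)


valid_ppl = perplexity(valid_loss)
test_ppl = perplexity(test_loss)
print(f"validation perplexity: {valid_ppl:.2f}")
print(f"test perplexity: {test_ppl:.2f}")
```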

- Number of training examples: 92,000

- Number of validation examples: 11,500

- Number of test examples: 11,500
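These counts add up to 115,000 sentence pairs, which corresponds to an 80/10/10 split. How the split was actually produced is not described here, so the following is only a minimal sketch of one way to get the same proportions; `split_dataset` and the placeholder pairs are hypothetical.

```python
import random


def split_dataset(pairs, train_ratio=0.8, valid_ratio=0.1, seed=42):
    """Shuffle the pairs and cut them into train/validation/test lists."""
    shuffled = list(pairs)
    random.Random(seed).shuffle(shuffled)
    n_train = round(len(shuffled) * train_ratio)
    n_valid = round(len(shuffled) * valid_ratio)
    train = shuffled[:n_train]
    valid = shuffled[n_train:n_train + n_valid]
    test = shuffled[n_train + n_valid:]  # whatever remains
    return train, valid, test


# Placeholder pairs standing in for the real 115,000-pair corpus.
pairs = [(f"kor_{i}", f"eng_{i}") for i in range(115_000)]
train, valid, test = split_dataset(pairs)
print(len(train), len(valid), len(test))
```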

Example:

{

'kor': '['부러진', '날개로', '다시한번', '날개짓을', '하라']',

'eng': '['wings', 'once', 'again', 'with', 'broken', 'wings']'

}

- The following libraries are required by this repository.

- You should install PyTorch by following the official installation guide.

- To use the spaCy model that tokenizes English sentences, download the English model by running:

python -m spacy download en_core_web_sm

en-core-web-sm==2.1.0

matplotlib==3.1.1

numpy==1.16.4

pandas==0.25.1

scikit-learn==0.21.3

soynlp==0.0.493

spacy==2.1.8

torch==1.2.0

torchtext==0.4.0

- If you have trouble installing `torch` and `torchtext`, run `pip install torch==1.2.0 -f https://download.pytorch.org/whl/torch_stable.html` and `pip install torchtext==0.4.0`

- Before training the model, you should train the `soynlp` tokenizer on your training dataset and build the vocabularies using the code below.

- You can choose the vocabulary size for the Korean and English datasets.

- In general, a Korean dataset produces a larger vocabulary than an English dataset. To keep the two balanced, you have to choose appropriate vocabulary sizes.

- By running the following code, you will get `tokenizer.pickle`, `kor.pickle` and `eng.pickle`, which are used to train and test the model and to translate a user's input sentence.

python build_pickles.py --kor_vocab KOREAN_VOCAB_SIZE --eng_vocab ENGLISH_VOCAB_SIZE

# with the default vocabulary sizes

python build_pickles.py
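The idea of capping the vocabulary size and saving the result as a pickle can be sketched as follows. This is not the code in `build_pickles.py`; the `build_vocab` and `encode` helpers, the special tokens, and the toy corpus are all hypothetical, and the pickle is kept in memory instead of being written to a file.

```python
import pickle
from collections import Counter

# Hypothetical special tokens; the real vocabulary may use different ones.
SPECIALS = ["<unk>", "<pad>", "<sos>", "<eos>"]


def build_vocab(tokenized_sentences, max_size):
    """Keep only the max_size most frequent tokens, after the special tokens."""
    counts = Counter(tok for sent in tokenized_sentences for tok in sent)
    most_common = [tok for tok, _ in counts.most_common(max_size)]
    return {tok: idx for idx, tok in enumerate(SPECIALS + most_common)}


def encode(tokens, vocab):
    """Map tokens to ids; tokens outside the vocabulary become <unk>."""
    return [vocab.get(tok, vocab["<unk>"]) for tok in tokens]


corpus = [
    ["i", "like", "tea"],
    ["i", "like", "coffee"],
    ["you", "like", "tea"],
]
vocab = build_vocab(corpus, max_size=3)

# Round-trip through pickle, as one would when saving and reloading a vocabulary.
restored = pickle.loads(pickle.dumps(vocab))
ids = encode(["i", "like", "coffee"], restored)
print(ids)
```

A smaller `max_size` sends more rare tokens to `<unk>`, which is the trade-off to keep in mind when balancing the Korean and English vocabulary sizes.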

- For training, run `main.py` in train mode (which is the default option)

python main.py

- For testing, run `main.py` in test mode

python main.py --mode test
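A `--mode` switch with `train` as the default can be sketched with `argparse` as below. This is only an illustration of the command-line behavior described above, not the actual contents of `main.py`; `parse_args` and `run` are hypothetical names, and `run` just returns a label instead of training or evaluating anything.

```python
import argparse


def parse_args(argv=None):
    """Parse the command line; --mode falls back to 'train' when omitted."""
    parser = argparse.ArgumentParser(description="Train or test the model")
    parser.add_argument("--mode", choices=["train", "test"], default="train")
    return parser.parse_args(argv)


def run(mode):
    """Placeholder dispatch; a real script would call its trainer here."""
    if mode == "train":
        return "training"
    return "testing"


default_args = parse_args([])                # same as: python main.py
test_args = parse_args(["--mode", "test"])   # same as: python main.py --mode test
print(run(default_args.mode), run(test_args.mode))
```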

- For predicting, run `predict.py` with your Korean input sentence.

- Don't forget to wrap your input in double quotation marks!

python predict.py --input "YOUR_KOREAN_INPUT"

kor> 내일 여자친구를 만나러 가요

eng> I am going to meet my girlfriend tomorrow

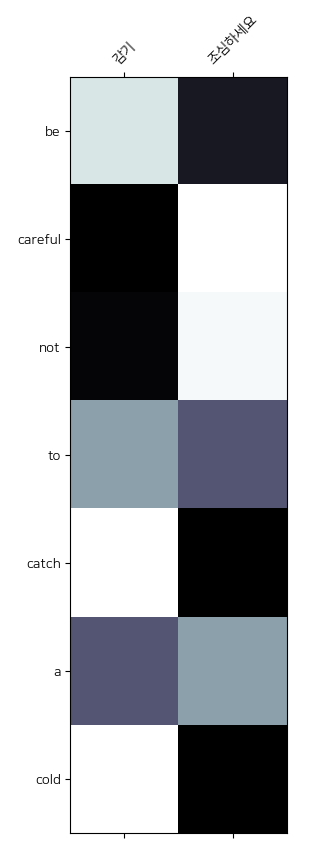

kor> 감기 조심하세요

eng> Be careful not to catch a cold
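The reason the input has to be quoted is that the shell splits an unquoted sentence at every space, so `--input` would receive only the first word. The sketch below uses Python's `shlex.split`, which follows POSIX shell splitting rules, to show the difference; it does not call `predict.py` itself.

```python
import shlex

# Without quotes the sentence is split into separate arguments.
unquoted = shlex.split("python predict.py --input 감기 조심하세요")

# With double quotes the whole sentence stays one argument.
quoted = shlex.split('python predict.py --input "감기 조심하세요"')

print(unquoted)
print(quoted)
```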

Most of my code follows the original paper. However, I found a difference between the original paper and the practical implementation in the tensor2tensor framework, so I changed some of the code to follow the framework and got better results. For details on these changes, see the last article in the references.