Will appear at CVPR 2024!

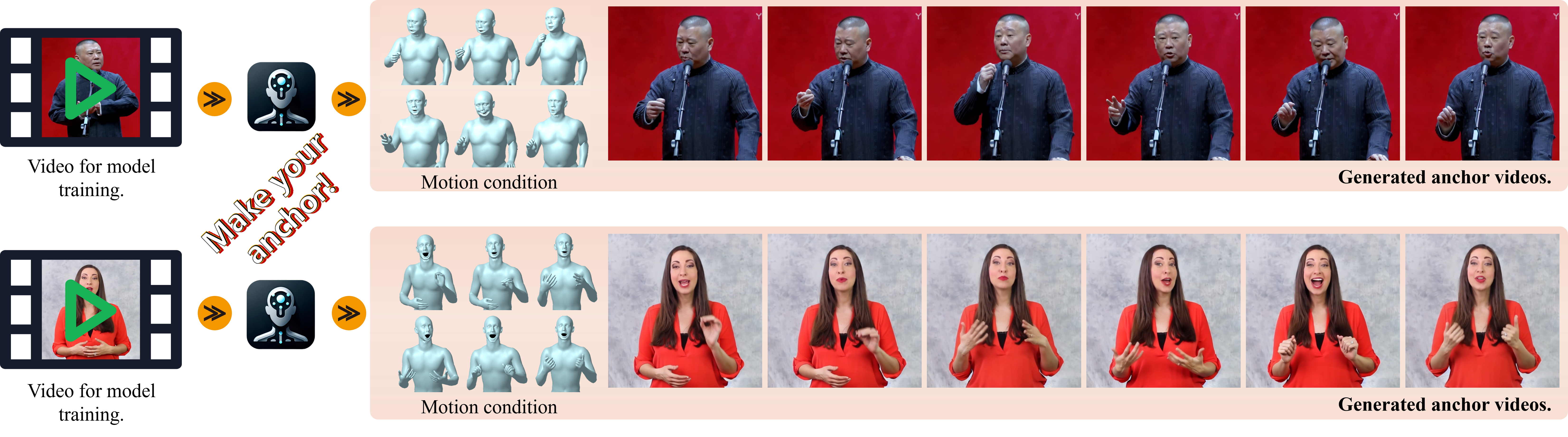

Make-Your-Anchor is a personalized 2D avatar generation framework based on a diffusion model. It generates realistic human videos conditioned on SMPL-X sequences.

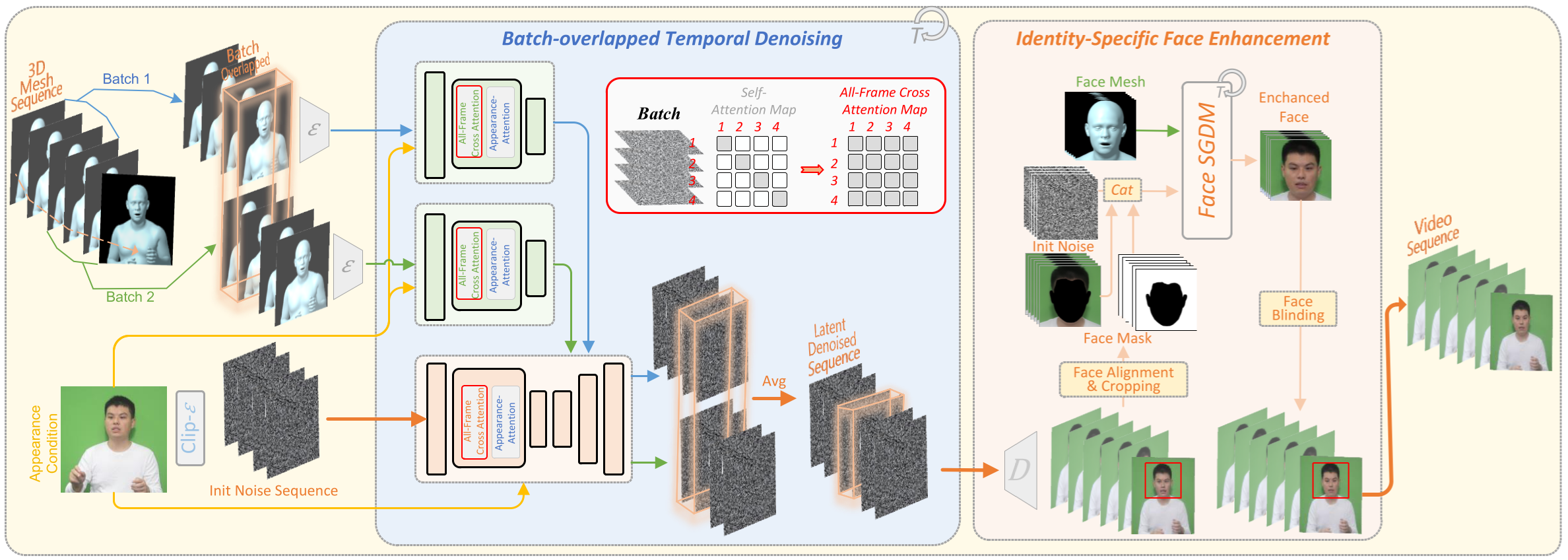

Despite the remarkable progress of talking-head-based avatar creation solutions, directly generating anchor-style videos with full-body motions remains challenging. In this study, we propose Make-Your-Anchor, a novel system that requires only a one-minute video clip of an individual for training and then enables the automatic generation of anchor-style videos with precise torso and hand movements. Specifically, we fine-tune a proposed structure-guided diffusion model on the input video to render 3D mesh conditions into human appearances. We adopt a two-stage training strategy for the diffusion model, effectively binding movements with specific appearances. To produce videos of arbitrary length, we extend the 2D U-Net in the frame-wise diffusion model to a 3D style without additional training cost, and propose a simple yet effective batch-overlapped temporal denoising module to bypass the constraints on video length during inference. Finally, a novel identity-specific face enhancement module is introduced to improve the visual quality of facial regions in the output videos. Comparative experiments demonstrate the effectiveness and superiority of the system in terms of visual quality, temporal coherence, and identity preservation, outperforming SOTA diffusion and non-diffusion methods.
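The batch-overlapped temporal denoising idea mentioned above can be pictured with a small sketch. The abstract does not give the details, so everything below is an assumption made for illustration: the function names `overlapping_windows` and `blend_overlaps` are hypothetical, the window and overlap sizes are arbitrary, plain averaging of overlapped frames is only one possible blending rule, and a single float per frame stands in for the per-frame tensor a diffusion model would predict. It is not the released implementation.

```python
def overlapping_windows(num_frames, window, overlap):
    """Split frame indices [0, num_frames) into overlapping (start, end) ranges.

    Hypothetical helper: consecutive windows share `overlap` frames, and an
    extra window is appended if the regular stride leaves trailing frames.
    """
    if num_frames <= 0:
        raise ValueError("num_frames must be positive")
    if not 0 <= overlap < window:
        raise ValueError("overlap must satisfy 0 <= overlap < window")
    stride = window - overlap
    starts = list(range(0, max(num_frames - window, 0) + 1, stride))
    if starts[-1] + window < num_frames:
        # Cover the trailing frames with one last full-size window.
        starts.append(num_frames - window)
    return [(s, min(s + window, num_frames)) for s in starts]


def blend_overlaps(num_frames, windows, per_window_values):
    """Average per-frame values wherever windows overlap (assumed rule)."""
    totals = [0.0] * num_frames
    counts = [0] * num_frames
    for (start, _end), values in zip(windows, per_window_values):
        for offset, value in enumerate(values):
            totals[start + offset] += value
            counts[start + offset] += 1
    return [total / count for total, count in zip(totals, counts)]


if __name__ == "__main__":
    windows = overlapping_windows(num_frames=6, window=4, overlap=2)
    # Pretend each window produced a constant prediction for its frames.
    predictions = [[1.0] * 4, [3.0] * 4]
    print(windows)
    print(blend_overlaps(6, windows, predictions))
```

In this toy setup, frames covered by two windows receive the mean of both predictions, which is one way neighbouring batches could be kept consistent while the total number of frames stays unbounded.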

- The self-collected data described in the paper will not be released for privacy reasons; instead, we release a model trained on an open dataset.

- As this is a person-specific approach, we plan to release the pre-trained weights from the pre-training stage together with the fine-tuning code. The guidance and code for preprocessing training data will be updated.

- Due to the limitations of the current training dataset, our method performs better when the driving motion is similar in style to that of the target person (as the cross-person results show). We plan to increase the amount of pre-training and fine-tuning data to overcome this limitation.

- [2024.04.22]: Release the inference code and pretrained weights.

- Inference code and checkpoints

- Preprocess code and guidance

- Fine-tuning code and pre-trained weights

Our code is based on PyTorch and Diffusers. Recommended requirements can be installed via

    pip install -r requirements.txt

FFmpeg must also be installed to process videos.
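A quick way to confirm that FFmpeg is reachable before running anything is a small check like the one below. This is a minimal sketch, not part of the repository: the function name `find_missing_tools` is hypothetical, and it assumes the executable is named `ffmpeg` and is expected on the `PATH`.

```python
import shutil


def find_missing_tools(tools=("ffmpeg",)):
    """Return the names in `tools` that cannot be found on PATH."""
    return [tool for tool in tools if shutil.which(tool) is None]


if __name__ == "__main__":
    missing = find_missing_tools()
    if missing:
        print("Missing tools:", ", ".join(missing))
    else:
        print("All required tools were found.")
```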

For face alignment, please download the relevant files from this link and unzip them into the folder .\inference\insightface_func\models\.

Please download the checkpoints from Google Drive and place them in the folder ./inference/checkpoints. Currently, the uploaded checkpoints are those trained on the open dataset.

We provide pre-trained weights and sample fine-tuning data for one identity. For the pre-trained weights, please download them from [pre-trained_weight] and place them in ./train/checkpoints. For the sample fine-tuning data, please download train_data.zip from [fine-tune_data] and unzip it in ./train. The final folder structure should look like this:

    train/
    ├── checkpoints/
    │   └── pre-trained_weight/
    │       ├── body/
    │       └── head/
    └── train_data/
        ├── body/
        ├── head/
        ├── body_train.json
        └── head_train.json
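The layout above can be verified with a short script before launching training. This is only a sketch: the helper name `missing_entries` is hypothetical, the expected paths are copied from the tree above, and the default root `./train` assumes the script is run from the repository root.

```python
from pathlib import Path

# Entries copied from the folder tree above, relative to the train/ folder.
EXPECTED = [
    "checkpoints/pre-trained_weight/body",
    "checkpoints/pre-trained_weight/head",
    "train_data/body",
    "train_data/head",
    "train_data/body_train.json",
    "train_data/head_train.json",
]


def missing_entries(train_root):
    """Return the expected relative paths that do not exist under train_root."""
    root = Path(train_root)
    return [rel for rel in EXPECTED if not (root / rel).exists()]


if __name__ == "__main__":
    missing = missing_entries("./train")
    if missing:
        print("Missing entries:")
        for rel in missing:
            print("  " + rel)
    else:
        print("Training folder layout looks complete.")
```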

We provide the inference code with our released checkpoints. After downloading or fine-tuning the checkpoints and placing them in ./inference/checkpoints, run inference with:

    bash inference.sh

Specifically, five parameters in inference.sh should be filled in with your configuration:

    ## Please fill the parameters here
    # path to the body model folder
    body_weight_dir=./checkpoints/seth/body
    # path to the head model folder
    head_weight_dir=./checkpoints/seth/head
    # path to the input poses
    body_input_dir=./samples/poses/seth1
    # path to the reference body appearance
    body_prompt_img_pth=./samples/appearance/body.png
    # path to the reference head appearance
    head_prompt_img_pth=./samples/appearance/head.png

After generation (it takes about 5 minutes), the results are listed in ./inference/samples/output.
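Before starting a run, it can help to confirm that all five parameters are actually set. The sketch below reads simple `name=value` lines from the text of a shell script and reports which of the five names listed above are missing or empty. The helper names `read_parameters` and `unset_parameters` are hypothetical, and the parser assumes plain assignments as shown above (no inline comments, no variable expansion); it is not part of the repository.

```python
import re

# The five parameter names listed in inference.sh above.
REQUIRED = (
    "body_weight_dir",
    "head_weight_dir",
    "body_input_dir",
    "body_prompt_img_pth",
    "head_prompt_img_pth",
)

# Matches simple shell assignments such as: name=value
ASSIGNMENT = re.compile(r"^\s*([A-Za-z_][A-Za-z0-9_]*)=(.*)$")


def read_parameters(script_text):
    """Collect name=value assignments, skipping comment lines."""
    params = {}
    for line in script_text.splitlines():
        if line.lstrip().startswith("#"):
            continue
        match = ASSIGNMENT.match(line)
        if match:
            # Drop surrounding whitespace and optional quotes.
            params[match.group(1)] = match.group(2).strip().strip("\"'")
    return params


def unset_parameters(params):
    """Return the required names that are missing or empty."""
    return [name for name in REQUIRED if not params.get(name)]


if __name__ == "__main__":
    example = "body_weight_dir=./checkpoints/seth/body\nhead_weight_dir=\n"
    print(unset_parameters(read_parameters(example)))
```

To check a real file, the script text could be loaded with `open("inference.sh").read()` and passed to `read_parameters`.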

We provide training scripts. Please download the pre-trained weights from [pre-trained_weight] and the sample data from [fine-tune_data] as described above. The training code can be run as:

    bash train_body.sh
    bash train_head.sh

Some parameters in train_body.sh and train_head.sh should be filled in with your configuration.

main1.mp4

main2.mp4

audio-driven.mp4

ablation.mp4

cross-person.mp4

fullbody.mp4

    @article{huang2024makeyouranchor,
      title={Make-Your-Anchor: A Diffusion-based 2D Avatar Generation Framework},
      author={Huang, Ziyao and Tang, Fan and Zhang, Yong and Cun, Xiaodong and Cao, Juan and Li, Jintao and Lee, Tong-Yee},
      journal={arXiv preprint arXiv:2403.16510},
      year={2024}
    }

Here are some great resources we benefit from:

- TalkSHOW for preprocessing and audio-driven inference

- SimSwap for the face preprocessing code

- ControlVideo for the implementation of full-frame attention