This repo is the official implementation of "Unmasked Teacher: Towards Training-Efficient Video Foundation Models".

By Kunchang Li, Yali Wang, Yizhuo Li, Yi Wang, Yinan He, Limin Wang and Yu Qiao.

- 🔥 2023/07/19: All the code and models are released.
  - single_modality: Single-modality pretraining and finetuning.
    - Action Classification: Kinetics, Moments in Time, Something-Something.
    - Action Detection: AVA.
    - The models and scripts are in MODEL_ZOO. Have a try!
  - multi_modality: Multi-modality pretraining and finetuning.
    - Video-Text Retrieval: MSRVTT, DiDeMo, ActivityNet, LSMDC, MSVD, Something-Something.
    - Video Question Answering: ActivityNet-QA, MSRVTT-QA, MSRVTT-MC, MSVD-QA.
    - The models and scripts are in MODEL_ZOO. Have a try!

- 🙇♂️ We are hiring researchers, engineers and interns in the General Vision Group at Shanghai AI Lab. If you are interested in working with us, please contact Yi Wang (wangyi@pjlab.org.cn).

- 2023/07/14: Unmasked Teacher is accepted by ICCV 2023! 🎉🎉

- 2023/03/17: We published a blog post in Chinese on Zhihu.

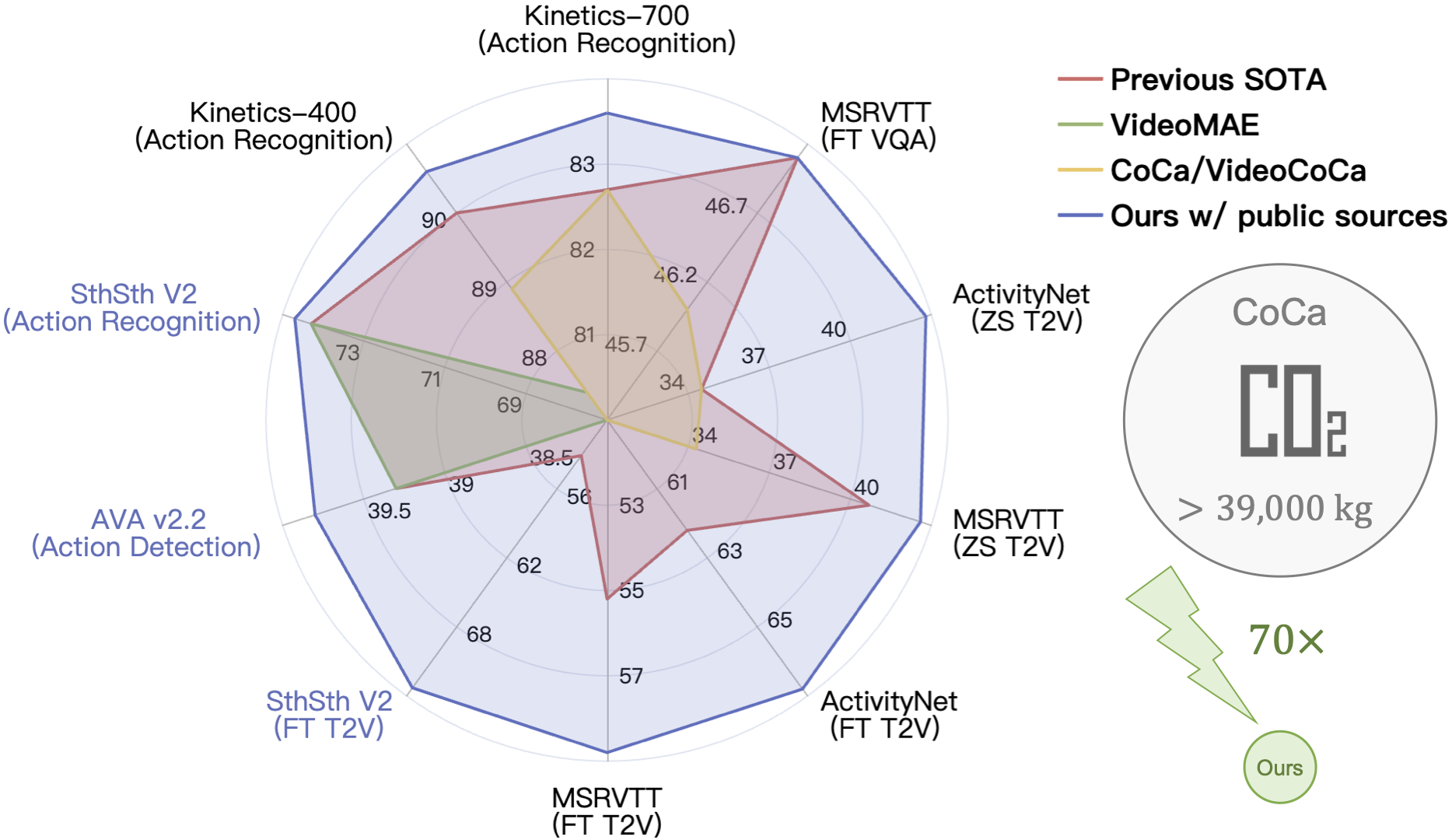

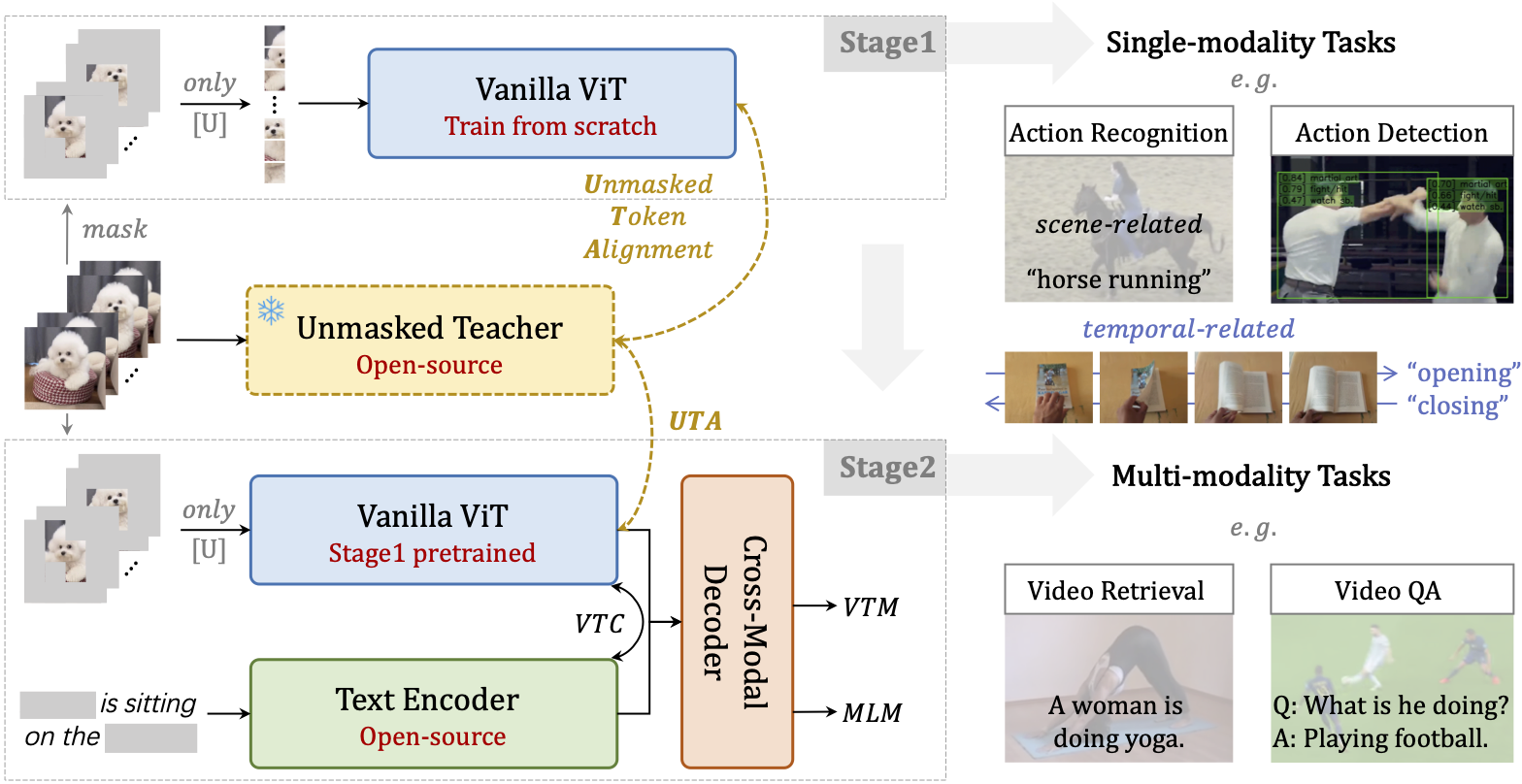

Video Foundation Models (VFMs) have received limited exploration due to high computational costs and data scarcity. Previous VFMs rely on Image Foundation Models (IFMs), which face challenges in transferring to the video domain. Although VideoMAE has trained a robust ViT from limited data, its low-level reconstruction poses convergence difficulties and conflicts with high-level cross-modal alignment. This paper proposes a training-efficient method for temporal-sensitive VFMs that integrates the benefits of existing methods. To increase data efficiency, we mask out most of the low-semantics video tokens, but selectively align the unmasked tokens with IFM, which serves as the UnMasked Teacher (UMT). By providing semantic guidance, our method enables faster convergence and multimodal friendliness. With a progressive pre-training framework, our model can handle various tasks including scene-related, temporal-related, and complex video-language understanding. Using only public sources for pre-training in 6 days on 32 A100 GPUs, our scratch-built ViT-L/16 achieves state-of-the-art performances on various video tasks.
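As a rough illustration of the idea in the abstract (mask most low-semantics tokens, then align only the unmasked student tokens with the teacher), here is a minimal pure-Python sketch. It is not the code in this repo: the function names, the per-token `scores`, the deterministic top-k selection, and the normalized mean-squared-error loss are all assumptions made for illustration.

```python
import math


def select_unmasked(scores, keep_ratio):
    """Return sorted indices of the highest-scoring tokens (the ones left unmasked).

    `scores` is a hypothetical per-token semantic importance, e.g. derived
    from the teacher. Top-k selection is a simplification for this sketch.
    """
    if not 0.0 < keep_ratio <= 1.0:
        raise ValueError("keep_ratio must be in (0, 1]")
    num_keep = max(1, int(len(scores) * keep_ratio))
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:num_keep])


def l2_normalize(vec):
    """Scale a feature vector to unit length (zero vectors are returned as-is)."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm > 0 else list(vec)


def alignment_loss(student_feats, teacher_feats, keep_idx):
    """Mean squared error between normalized student and teacher features.

    The student only sees unmasked tokens, so `student_feats` holds one
    vector per entry of `keep_idx`; `teacher_feats` holds one per token.
    """
    if len(student_feats) != len(keep_idx):
        raise ValueError("expected one student feature per unmasked token")
    total, count = 0.0, 0
    for s_vec, idx in zip(student_feats, keep_idx):
        s = l2_normalize(s_vec)
        t = l2_normalize(teacher_feats[idx])
        for a, b in zip(s, t):
            total += (a - b) ** 2
            count += 1
    return total / count if count else 0.0
```

With eight tokens and `keep_ratio=0.25`, `select_unmasked` keeps the two highest-scoring tokens, and `alignment_loss` is computed on those two positions only, so the masked tokens cost the student nothing.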

If you find this repository useful, please use the following BibTeX entry for citation.

@misc{li2023unmasked,
  title={Unmasked Teacher: Towards Training-Efficient Video Foundation Models},
  author={Kunchang Li and Yali Wang and Yizhuo Li and Yi Wang and Yinan He and Limin Wang and Yu Qiao},
  year={2023},
  eprint={2303.16058},
  archivePrefix={arXiv},
  primaryClass={cs.CV}
}

This repository is built on the UniFormer, VideoMAE and VINDLU repositories.

If you have questions while trying out, running or deploying the code, or ideas and suggestions for the project, feel free to join our WeChat group discussion!