Trankit: A Light-Weight Transformer-based Python Toolkit for Multilingual Natural Language Processing

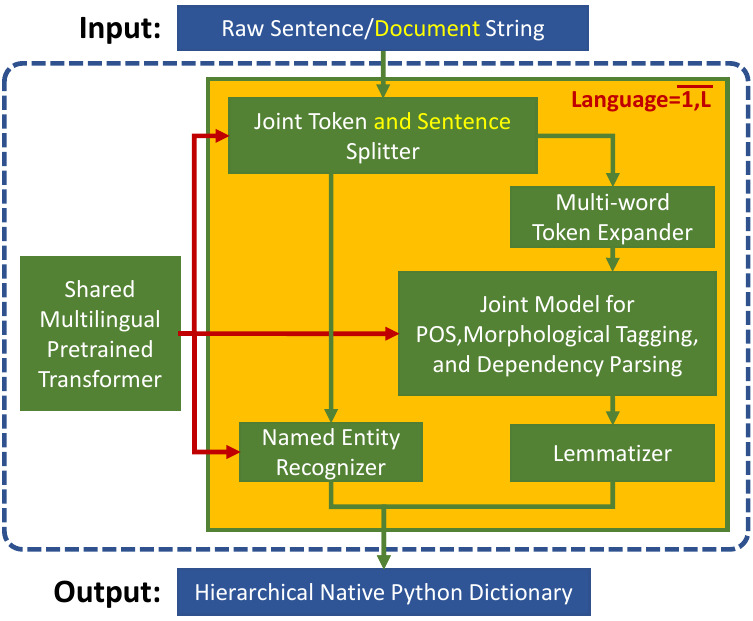

Trankit is a light-weight Transformer-based Python Toolkit for multilingual Natural Language Processing (NLP). It provides a trainable pipeline for fundamental NLP tasks over 100 languages, and 90 downloadable pretrained pipelines for 56 languages.

Trankit outperforms the current state-of-the-art multilingual toolkit Stanza (StanfordNLP) in many tasks over 90 Universal Dependencies v2.5 treebanks of 56 different languages while still being efficient in memory usage and speed, making it usable for general users.

In particular, for English, Trankit is significantly better than Stanza on sentence segmentation (+7.22%) and dependency parsing (+3.92% for UAS and +4.37% for LAS). For Arabic, our toolkit improves sentence segmentation performance by 16.16%, while for Chinese it improves dependency parsing by 12.31% (UAS) and 12.72% (LAS). A detailed comparison between Trankit, Stanza, and other popular NLP toolkits (i.e., spaCy, UDPipe) in other languages can be found on our documentation page.
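For readers unfamiliar with the two parsing metrics quoted above: UAS (unlabeled attachment score) is the share of tokens whose predicted head is correct, and LAS (labeled attachment score) additionally requires the dependency label to match. The sketch below computes both on a hand-written toy sentence. The function name `attachment_scores` and the toy annotations are illustrative assumptions, not part of Trankit, and the sketch assumes gold tokenization, whereas end-to-end evaluation also has to align predicted and gold tokens.

```python
def attachment_scores(gold, predicted):
    """Return (UAS, LAS) in percent for two aligned lists of (head, deprel) pairs."""
    if len(gold) != len(predicted):
        raise ValueError("gold and predicted must cover the same tokens")
    if not gold:
        raise ValueError("need at least one token")
    n = len(gold)
    # UAS: only the head index has to match.
    correct_heads = sum(g[0] == p[0] for g, p in zip(gold, predicted))
    # LAS: head index and dependency label both have to match.
    correct_both = sum(g == p for g, p in zip(gold, predicted))
    return 100.0 * correct_heads / n, 100.0 * correct_both / n


# Toy annotations for "This is Trankit ." (hand-written, not Trankit output).
gold = [(3, "nsubj"), (3, "cop"), (0, "root"), (3, "punct")]
pred = [(3, "nsubj"), (3, "aux"), (0, "root"), (2, "punct")]

uas, las = attachment_scores(gold, pred)
print(f"UAS={uas:.1f} LAS={las:.1f}")  # prints "UAS=75.0 LAS=50.0"
```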

We also created a Demo Website for Trankit, which is hosted at: http://nlp.uoregon.edu/trankit

Technical details about Trankit are presented in the following paper. Please cite the paper if you use Trankit in your research.

@misc{nguyen2021trankit,

title={Trankit: A Light-Weight Transformer-based Toolkit for Multilingual Natural Language Processing},

author={Minh Nguyen and Viet Lai and Amir Pouran Ben Veyseh and Thien Huu Nguyen},

year={2021},

eprint={2101.03289},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

Trankit can be installed in one of the following ways, via pip or from source:

pip install trankit

This command installs Trankit and all of its dependencies automatically.

git clone https://github.com/nlp-uoregon/trankit.git

cd trankit

pip install -e .

These commands clone our GitHub repository and then install Trankit from the local copy in editable mode.
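After either installation method, a quick check can confirm that the package is visible to the current Python environment. The following is a minimal sketch using only the standard library (Python 3.8+). The helper name `installed_version` is an assumption introduced here for illustration.

```python
from importlib import metadata


def installed_version(distribution):
    """Return the installed version of a distribution, or None if it is absent."""
    try:
        return metadata.version(distribution)
    except metadata.PackageNotFoundError:
        return None


version = installed_version("trankit")
if version is None:
    print("trankit is not installed in this environment")
else:
    print(f"trankit {version} is installed")
```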

Trankit can process inputs which are untokenized (raw) or pretokenized strings, at both sentence and document level. Currently, Trankit supports the following tasks:

- Sentence segmentation.

- Tokenization.

- Multi-word token expansion.

- Part-of-speech tagging.

- Morphological feature tagging.

- Dependency parsing.

- Named entity recognition.

The following code shows how to initialize a pretrained pipeline for English; it is instructed to run on GPU, automatically download pretrained models, and store them in the specified cache directory. Trankit will not download pretrained models if they already exist.

from trankit import Pipeline

# initialize a pretrained pipeline for English

p = Pipeline(lang='english', gpu=True, cache_dir='./cache')

After initializing a pretrained pipeline, it can be used to process the input on all tasks as shown below. If the input is a sentence, the argument is_sent must be set to True.

from trankit import Pipeline

p = Pipeline(lang='english', gpu=True, cache_dir='./cache')

######## document-level processing ########

untokenized_doc = '''Hello! This is Trankit.'''

pretokenized_doc = [['Hello', '!'], ['This', 'is', 'Trankit', '.']]

# perform all tasks on the input

processed_doc1 = p(untokenized_doc)

processed_doc2 = p(pretokenized_doc)

######## sentence-level processing #######

untokenized_sent = '''This is Trankit.'''

pretokenized_sent = ['This', 'is', 'Trankit', '.']

# perform all tasks on the input

processed_sent1 = p(untokenized_sent, is_sent=True)

processed_sent2 = p(pretokenized_sent, is_sent=True)

Note that although pretokenized inputs can always be processed, using them for languages that require multi-word token expansion, such as Arabic or French, may not be appropriate. Please check the column "Requires MWT expansion?" of this table to see whether a particular language requires multi-word token expansion.

For more detailed examples, please check out our documentation page.
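Once an input has been processed, the result can be walked like ordinary Python data. The sketch below assumes the output is a nested dictionary with a "sentences" list whose items hold a "tokens" list, and that each token carries keys such as "id", "text", "upos", "head", and "deprel". These key names are assumptions here and should be checked against the documentation. The sample document is hand-written for illustration and is not real Trankit output.

```python
def token_rows(doc):
    """Yield (sentence_id, token_id, text, upos, head, deprel) for every token."""
    for sentence in doc.get("sentences", []):
        for token in sentence.get("tokens", []):
            yield (
                sentence.get("id"),
                token.get("id"),
                token.get("text"),
                token.get("upos"),
                token.get("head"),
                token.get("deprel"),
            )


# Hand-written sample in the assumed layout (not produced by Trankit).
sample_doc = {
    "text": "Hello! This is Trankit.",
    "sentences": [
        {"id": 1, "text": "Hello!", "tokens": [
            {"id": 1, "text": "Hello", "upos": "INTJ", "head": 0, "deprel": "root"},
            {"id": 2, "text": "!", "upos": "PUNCT", "head": 1, "deprel": "punct"},
        ]},
        {"id": 2, "text": "This is Trankit.", "tokens": [
            {"id": 1, "text": "This", "upos": "PRON", "head": 3, "deprel": "nsubj"},
            {"id": 2, "text": "is", "upos": "AUX", "head": 3, "deprel": "cop"},
            {"id": 3, "text": "Trankit", "upos": "PROPN", "head": 0, "deprel": "root"},
            {"id": 4, "text": ".", "upos": "PUNCT", "head": 3, "deprel": "punct"},
        ]},
    ],
}

# Print one tab-separated row per token.
for row in token_rows(sample_doc):
    print("\t".join(str(field) for field in row))
```

With a real pipeline, the same helper would be called on the value returned by p(untokenized_doc), provided the layout assumption holds.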

To process inputs in different languages, we need to initialize a multilingual pipeline.

from trankit import Pipeline

# initialize a multilingual pipeline

p = Pipeline(lang='english', gpu=True, cache_dir='./cache')

langs = ['arabic', 'chinese', 'dutch']

for lang in langs:

    p.add(lang)

# tokenize an English input

p.set_active('english')

en = p.tokenize('Rich was here before the scheduled time.')

# get ner tags for an Arabic input

p.set_active('arabic')

ar = p.ner('وكان كنعان قبل ذلك رئيس جهاز الامن والاستطلاع للقوات السورية العاملة في لبنان.')

In this example, .set_active() is used to switch between languages.
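When a batch mixes several languages, it can be convenient to group the inputs by language so that the active language is switched only once per language. The sketch below relies only on the .set_active() and .tokenize() calls shown above. The helper name `tokenize_by_language` is an assumption introduced for illustration, and every language in the batch is assumed to have been added to the pipeline beforehand.

```python
from collections import defaultdict


def tokenize_by_language(pipeline, items):
    """Tokenize (lang, text) pairs and return the results in input order.

    `pipeline` is any object exposing set_active(lang) and tokenize(text),
    such as a multilingual pipeline with all needed languages already added.
    """
    # Group inputs by language, remembering each original position.
    by_lang = defaultdict(list)
    for index, (lang, text) in enumerate(items):
        by_lang[lang].append((index, text))

    results = [None] * len(items)
    for lang, entries in by_lang.items():
        pipeline.set_active(lang)  # one switch per language
        for index, text in entries:
            results[index] = pipeline.tokenize(text)
    return results


# Usage with the multilingual pipeline `p` from the example above:
# outputs = tokenize_by_language(p, [
#     ('english', 'Rich was here before the scheduled time.'),
#     ('dutch', 'Dit is een zin.'),
# ])
```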

Training customized pipelines is easy with Trankit via the class TPipeline. Below we show how we can train a token and sentence splitter on customized data.

from trankit import TPipeline

tp = TPipeline(training_config={

    'task': 'tokenize',

    'save_dir': './saved_model',

    'train_txt_fpath': './train.txt',

    'train_conllu_fpath': './train.conllu',

    'dev_txt_fpath': './dev.txt',

    'dev_conllu_fpath': './dev.conllu'

    }

)

# start training with the pipeline created above

tp.train()

Detailed guidelines for training and loading a customized pipeline can be found on our documentation page.
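Before starting a training run, it can help to confirm that every input file named in the configuration actually exists. The following is a minimal sketch that treats every configuration key ending in '_fpath' as a file path, which matches the keys used in the example above. The helper name `missing_training_files` is an assumption introduced for illustration and is not part of Trankit.

```python
import os


def missing_training_files(training_config):
    """Return the sorted config keys ending in '_fpath' whose files do not exist."""
    return sorted(
        key
        for key, path in training_config.items()
        if key.endswith("_fpath") and not os.path.isfile(path)
    )


training_config = {
    'task': 'tokenize',
    'save_dir': './saved_model',
    'train_txt_fpath': './train.txt',
    'train_conllu_fpath': './train.conllu',
    'dev_txt_fpath': './dev.txt',
    'dev_conllu_fpath': './dev.conllu',
}

missing = missing_training_files(training_config)
if missing:
    print("Missing input files for:", ", ".join(missing))
else:
    print("All training input files are present.")
```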

- Language Identification

We use XLM-RoBERTa and Adapters as our shared multilingual encoder for different tasks and languages. AdapterHub is used to implement our plug-and-play mechanism with Adapters. To speed up the development process, the implementations of the MWT expander and the lemmatizer are adapted from Stanza.