This is the official implementation of the paper "DINO: DETR with Improved DeNoising Anchor Boxes for End-to-End Object Detection".

Authors: Hao Zhang*, Feng Li*, Shilong Liu*, Lei Zhang, Hang Su, Jun Zhu, Lionel M. Ni, Heung-Yeung Shum

The code is under preparation; please be patient.

[2022/4/10]: Code for DAB-DETR is available here.

[2022/3/8]: We reached state-of-the-art on the MS-COCO leaderboard with 63.3AP!

[2022/3/9]: We built a repo, Awesome Detection Transformer, that collects papers on transformers for detection and segmentation. Feel free to take a look!

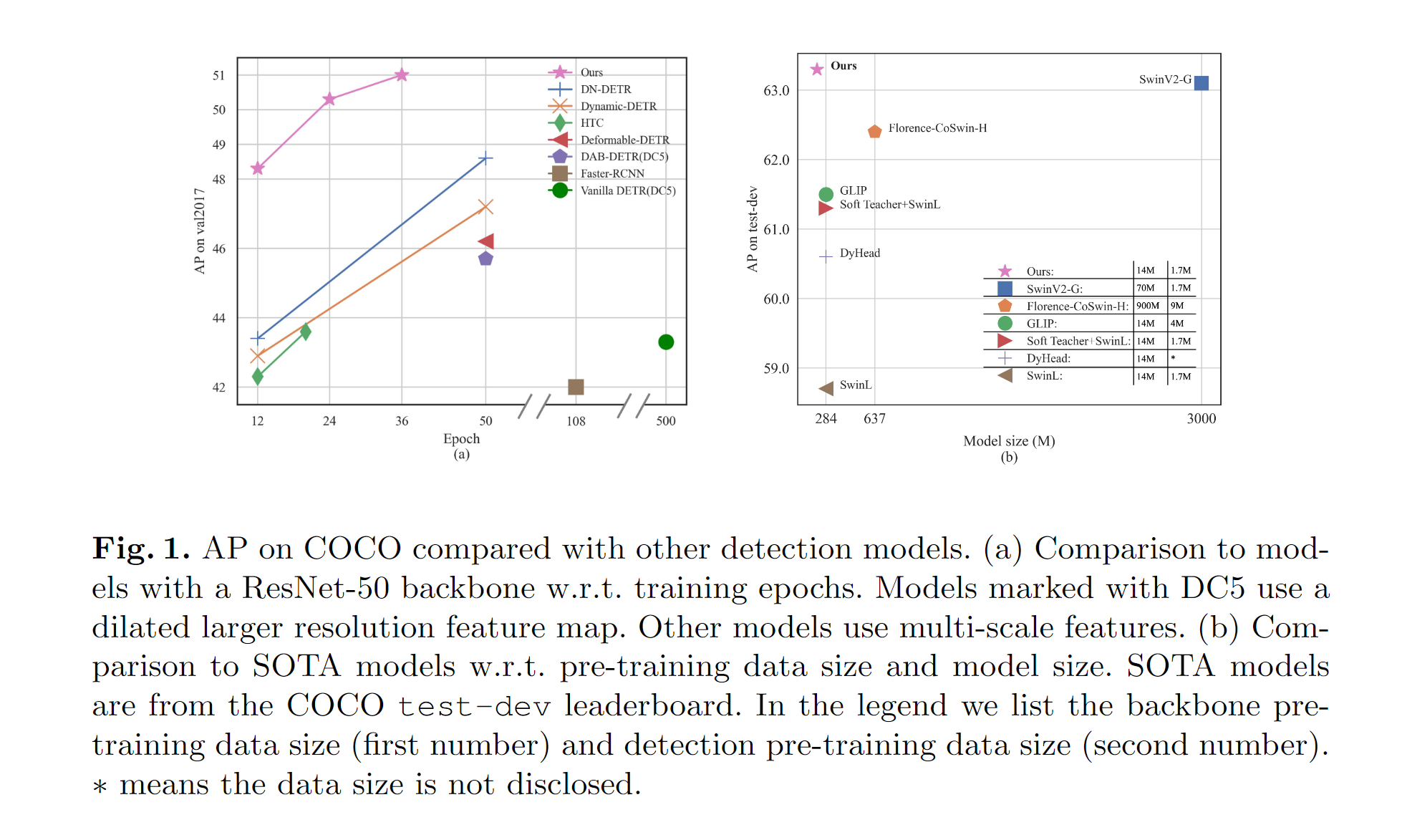

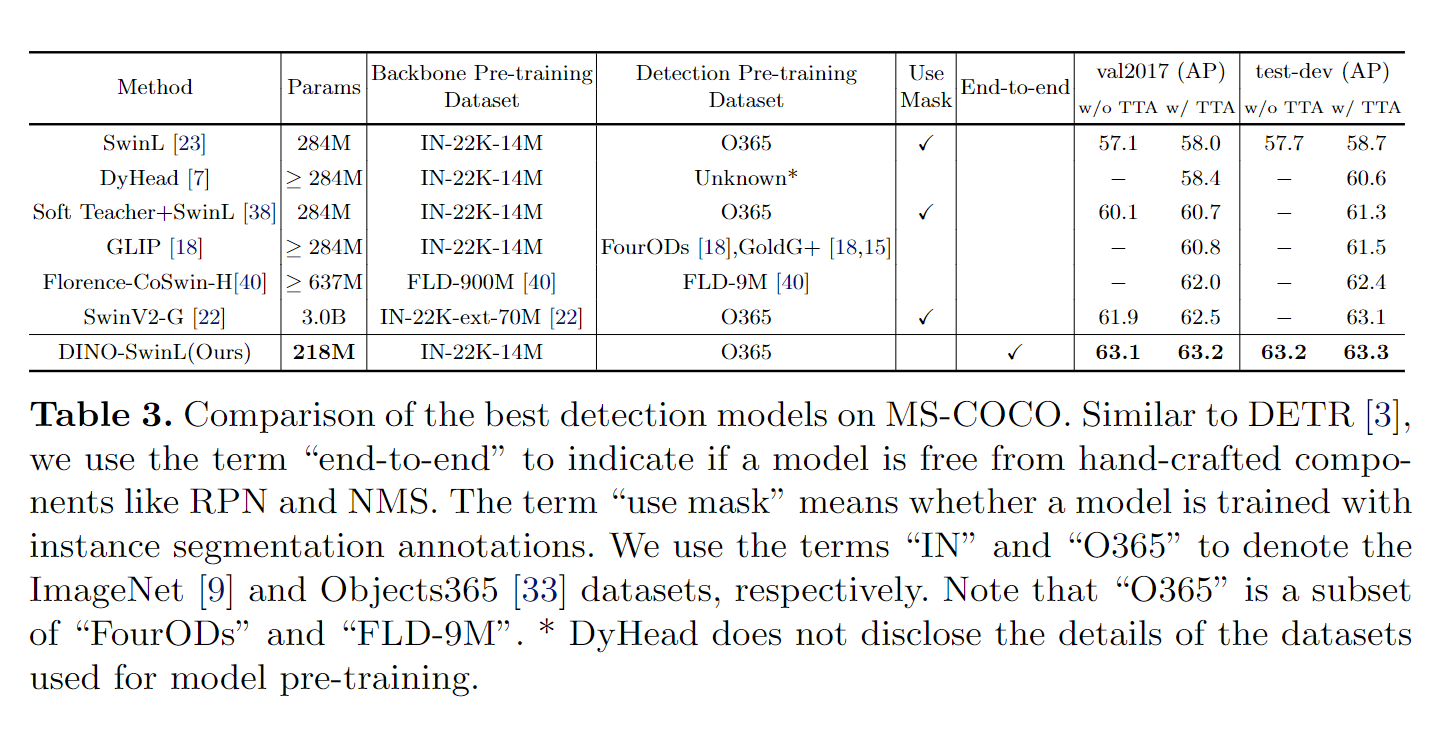

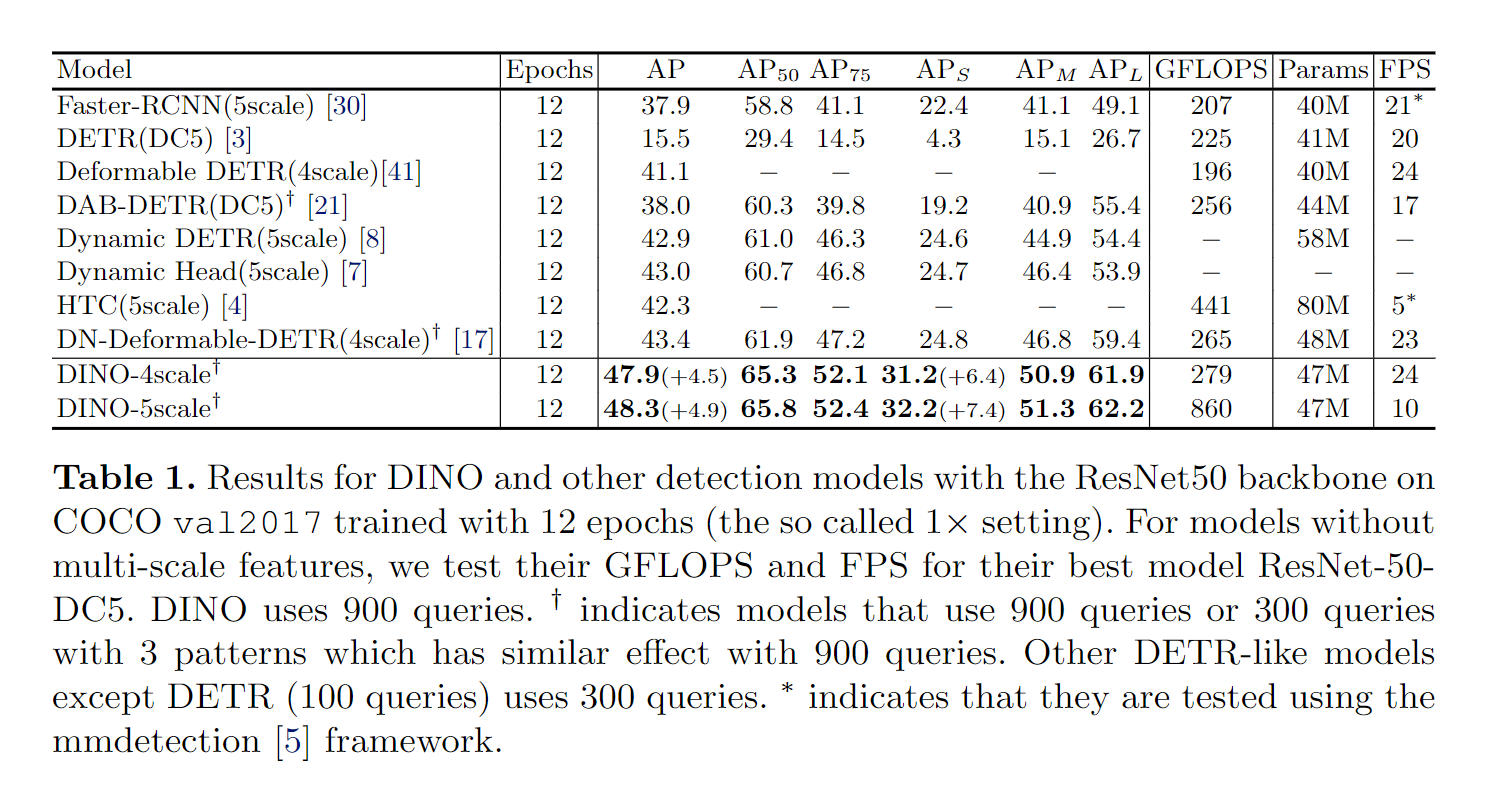

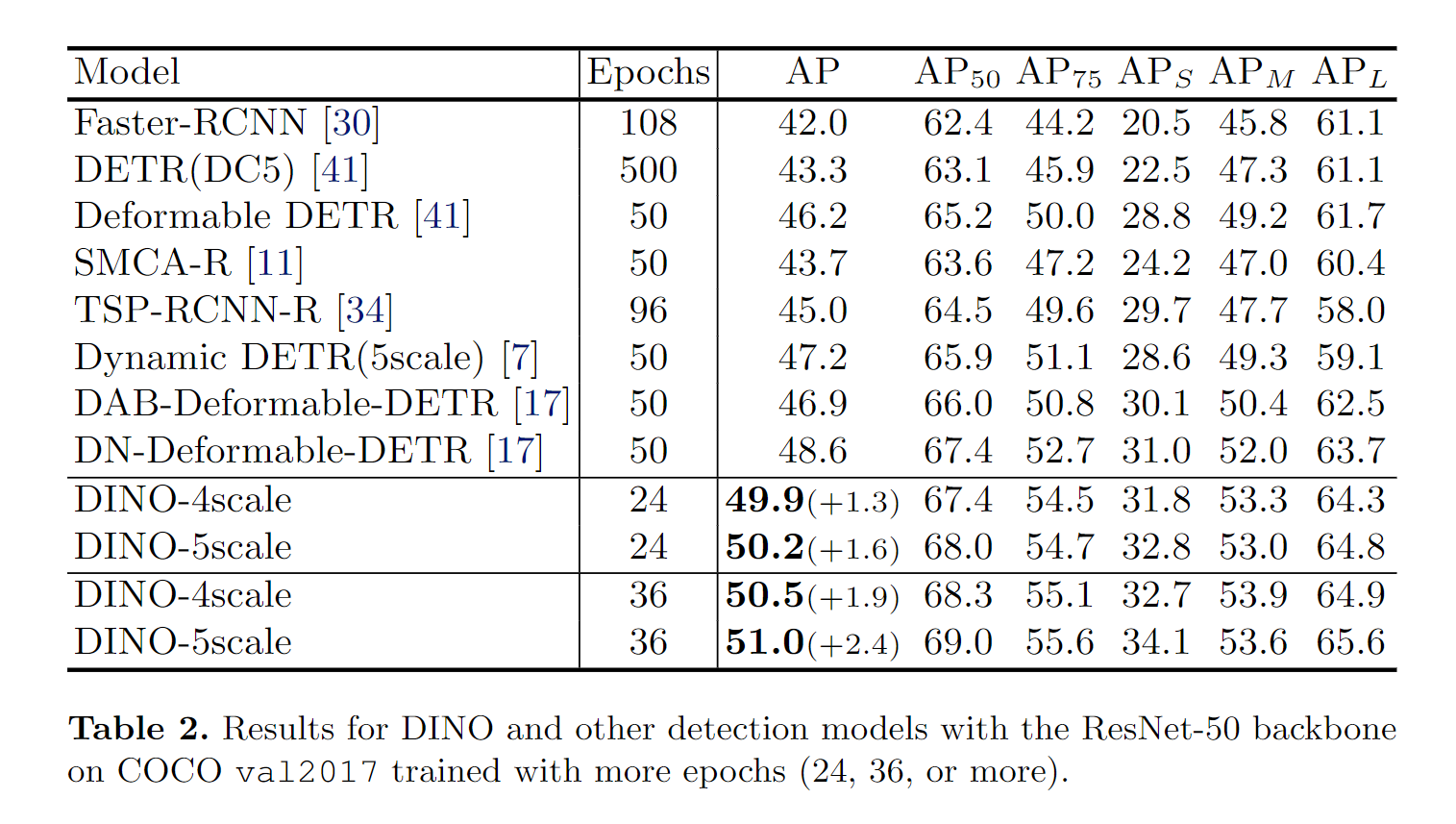

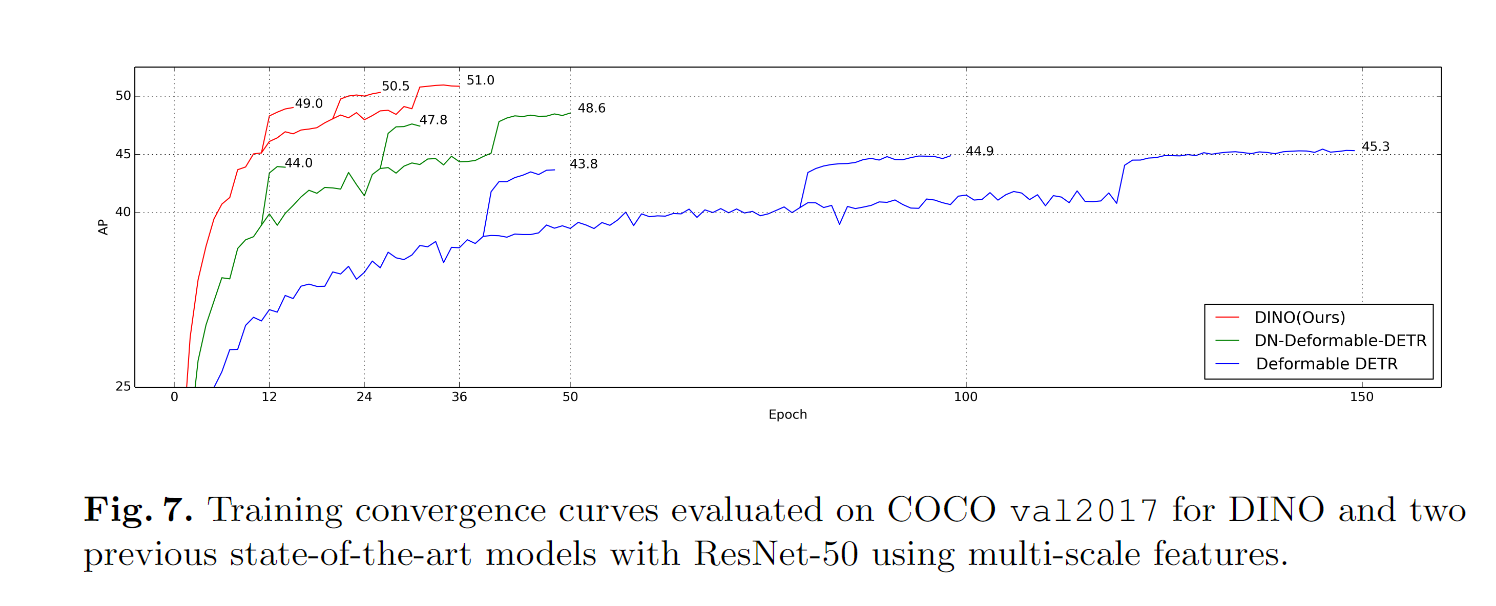

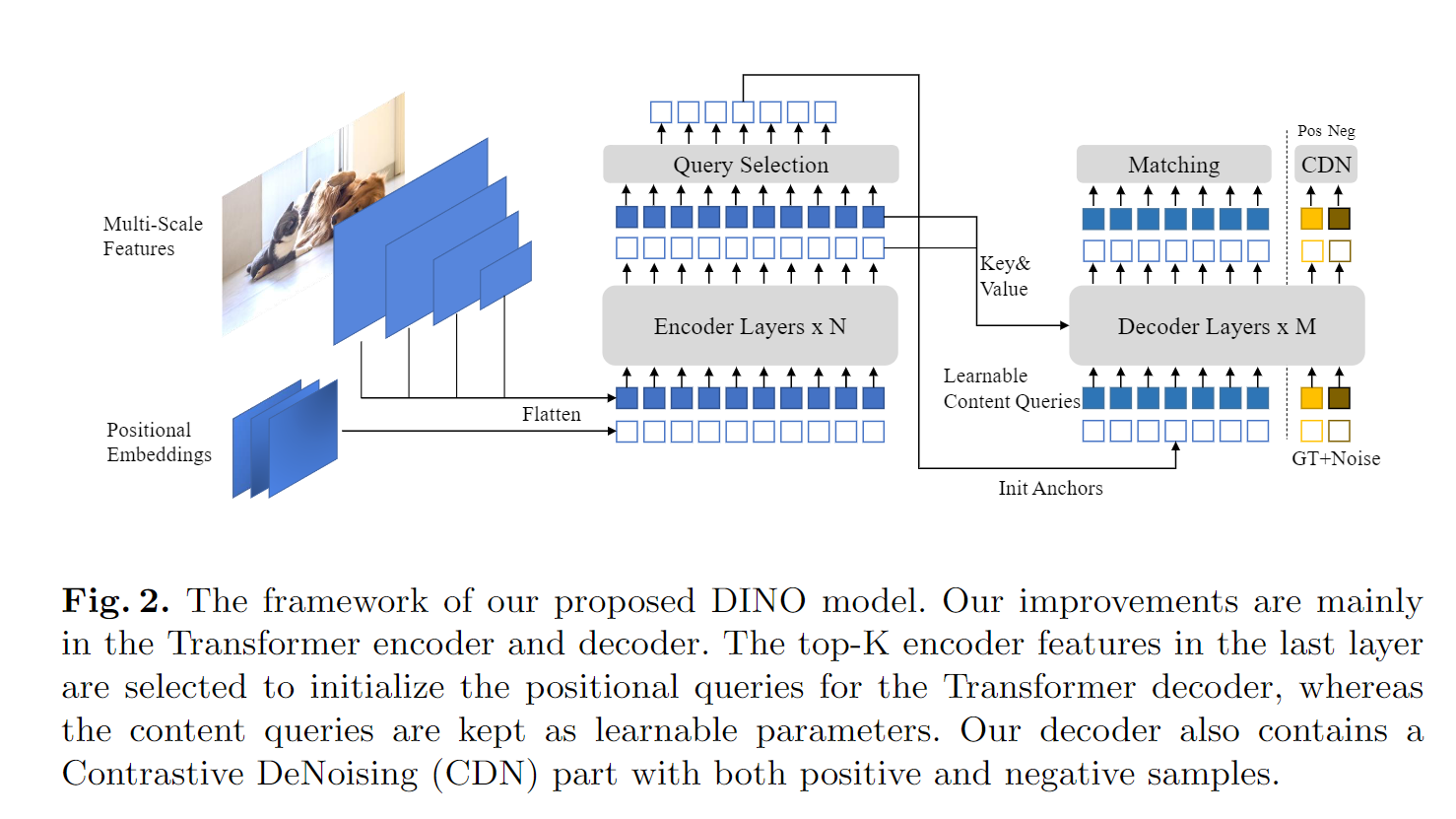

We present DINO (DETR with Improved deNoising anchOr boxes), a state-of-the-art end-to-end object detector. DINO improves over previous DETR-like models in performance and efficiency by using a contrastive approach to denoising training, a mixed query selection method for anchor initialization, and a look-forward-twice scheme for box prediction. DINO achieves 48.3AP in 12 epochs and 51.0AP in 36 epochs on COCO with a ResNet-50 backbone and multi-scale features, a significant improvement of +4.9AP and +2.4AP, respectively, over DN-DETR, the previous best DETR-like model. DINO scales well in both model size and data size. Without bells and whistles, after pre-training on the Objects365 dataset with a SwinL backbone, DINO obtains the best results on both COCO val2017 (63.2AP) and test-dev (63.3AP). Compared to other models on the leaderboard, DINO uses a significantly smaller model and less pre-training data while achieving better results.
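To give a rough feel for the contrastive denoising idea mentioned above, here is a minimal, simplified sketch in plain Python. It is not the code of this repository. It assumes that each ground-truth box yields one lightly noised "positive" query, which should recover the original label, and one more heavily noised "negative" query, which should be classified as "no object". The function names, the thresholds `lambda1` and `lambda2`, their default values, and the `no_object` label are all hypothetical choices made for illustration.

```python
import random


def noise_box(box, lo, hi, rng):
    """Jitter a (cx, cy, w, h) box with relative noise magnitudes between lo and hi."""
    cx, cy, w, h = box

    def signed_scale():
        # Random sign times a magnitude drawn between lo and hi.
        return rng.choice((-1.0, 1.0)) * rng.uniform(lo, hi)

    # Shift the centre by a fraction of half the original box size,
    # then rescale width and height.
    cx += signed_scale() * w / 2
    cy += signed_scale() * h / 2
    w *= 1 + signed_scale()
    h *= 1 + signed_scale()
    return (cx, cy, w, h)


def contrastive_denoising_pair(box, label, lambda1=0.4, lambda2=0.8,
                               no_object=-1, seed=None):
    """Return ((positive_box, label), (negative_box, no_object)).

    lambda1, lambda2 and no_object are illustrative assumptions, not
    values taken from the DINO code base.
    """
    rng = random.Random(seed)
    # Positive: small noise, target is the original class label.
    positive = (noise_box(box, 0.0, lambda1, rng), label)
    # Negative: larger noise, target is "no object".
    negative = (noise_box(box, lambda1, lambda2, rng), no_object)
    return positive, negative


if __name__ == "__main__":
    pos, neg = contrastive_denoising_pair((0.5, 0.5, 0.2, 0.4), label=3, seed=0)
    print("positive query:", pos)
    print("negative query:", neg)
```

In this sketch the only difference between the two queries is the noise range and the target label, which is what lets the model learn to reject anchors that sit near an object but not close enough to it.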

Our model is based on DAB-DETR and DN-DETR.

DN-DETR: Accelerate DETR Training by Introducing Query DeNoising.

Feng Li*, Hao Zhang*, Shilong Liu, Jian Guo, Lionel M. Ni, Lei Zhang.

IEEE Conference on Computer Vision and Pattern Recognition (CVPR) 2022.

[paper] [code] [explanation in Chinese]

DAB-DETR: Dynamic Anchor Boxes are Better Queries for DETR.

Shilong Liu, Feng Li, Hao Zhang, Xiao Yang, Xianbiao Qi, Hang Su, Jun Zhu, Lei Zhang.

International Conference on Learning Representations (ICLR) 2022.

[paper] [code]

If you find our work helpful for your research, please consider citing the following BibTeX entry.

    @misc{zhang2022dino,
      title={DINO: DETR with Improved DeNoising Anchor Boxes for End-to-End Object Detection},
      author={Hao Zhang and Feng Li and Shilong Liu and Lei Zhang and Hang Su and Jun Zhu and Lionel M. Ni and Heung-Yeung Shum},
      year={2022},
      eprint={2203.03605},
      archivePrefix={arXiv},
      primaryClass={cs.CV}
    }