This repository contains a reimplementation of the Deep Recurrent Attentive Writer (DRAW) network architecture introduced by K. Gregor, I. Danihelka, A. Graves and D. Wierstra. The original paper can be found at

http://arxiv.org/pdf/1502.04623

This implementation depends on:

- Blocks: follow the install instructions. This will install all the other dependencies for you (Theano, Fuel, etc.).

- Theano

- Fuel

- picklable_itertools

Draw currently works with the "cutting-edge development version" of Blocks. Since that API is subject to change, you may prefer to install this version, which is known to be supported:

pip install --upgrade git+git://github.com/mila-udem/blocks.git@c528d097 \
  -r https://raw.githubusercontent.com/mila-udem/blocks/master/requirements.txt

You also need to install

- Bokeh 0.8.1+

- ipdb

- ImageMagick

You need to set the location of your data directory:

export FUEL_DATA_PATH=/home/user/data

and download the binarized MNIST data. To do that using the latest version of Fuel:

cd $FUEL_DATA_PATH

fuel-download binarized_mnist

fuel-convert binarized_mnist

Similarly for cifar10:

cd $FUEL_DATA_PATH

fuel-download cifar10

fuel-convert cifar10

The datasets/README.md file has instructions for additional data-sets.
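Before starting a long training run it can help to confirm that the converted datasets are where Fuel will look for them. The following is a minimal sketch, not part of this repository: the helper name `missing_datasets` is hypothetical, and it assumes that `fuel-convert` writes one `<name>.hdf5` file per dataset into the data directory.

```python
import os


def missing_datasets(data_path, names=("binarized_mnist", "cifar10")):
    """Return the dataset names with no <name>.hdf5 file in data_path.

    Assumption: fuel-convert produces files named <name>.hdf5.
    """
    return [
        name
        for name in names
        if not os.path.isfile(os.path.join(data_path, name + ".hdf5"))
    ]


if __name__ == "__main__":
    data_path = os.environ.get("FUEL_DATA_PATH")
    if not data_path:
        print("FUEL_DATA_PATH is not set")
    else:
        missing = missing_datasets(data_path)
        if missing:
            print("Missing converted datasets: " + ", ".join(missing))
        else:
            print("All expected datasets found in " + data_path)
```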

Before training you need to start the bokeh-server

bokeh-server

or

bokeh-server --ip 0.0.0.0

To train a model with a 2x2 read and a 5x5 write attention window run

cd draw

./train-draw.py --attention=2,5 --niter=64 --lr=3e-4 --epochs=100
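The `--attention=2,5` value above pairs the read window size with the write window size. As an illustration only, a value of that shape could be split as follows; `parse_attention` is a hypothetical helper, and this sketch makes no claim about how `train-draw.py` actually handles the option.

```python
def parse_attention(value):
    """Split a 'READ,WRITE' string into two positive window sizes.

    Hypothetical helper: "2,5" means a 2x2 read window and a 5x5 write window.
    """
    parts = value.split(",")
    if len(parts) != 2:
        raise ValueError("expected READ,WRITE, got %r" % (value,))
    read_n, write_n = (int(p) for p in parts)
    if read_n < 1 or write_n < 1:
        raise ValueError("window sizes must be positive")
    return read_n, write_n


if __name__ == "__main__":
    read_n, write_n = parse_attention("2,5")
    print("read window: %dx%d, write window: %dx%d"
          % (read_n, read_n, write_n, write_n))
```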

On Amazon g2xlarge it takes more than 40 minutes for Theano's compilation to finish and training to start. Once training starts you can follow its progress in the live plots. Training the model takes about 2 days. After each epoch the following files are saved:

- a pickle of the model

- a pickle of the log

- animation.gif, showing how the result is created.

The animation.gif can also be created manually with

python sample.py [pickle-of-model]

convert -delay 5 -loop 0 samples-*.png animaion.gif

creating samples similar to

Run

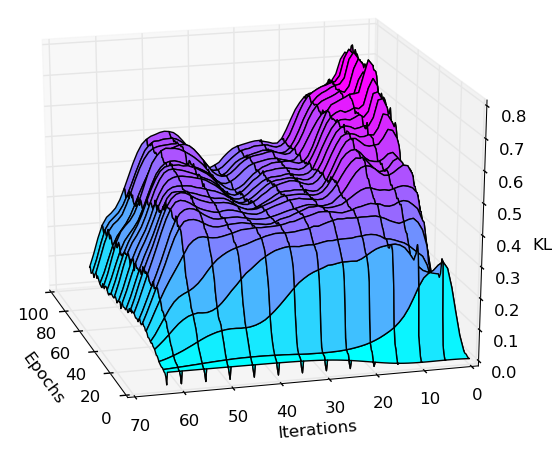

pyhthon plot-kl.py [pickle-of-log]

to create a visualization of the KL divergence potted over inference iterations and epochs. E.g:
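For readers unfamiliar with the quantity being plotted, here is a minimal NumPy sketch of a per-iteration KL term. It assumes, as in the DRAW paper, a diagonal Gaussian posterior and a standard normal prior at every inference iteration. The function name and the array layout `(iterations, batch, z_dim)` are assumptions for illustration; the sketch does not read the log pickle and is not the code used by `plot-kl.py`.

```python
import numpy as np


def kl_per_iteration(mu, log_sigma):
    """KL(N(mu, sigma^2) || N(0, 1)) per inference iteration, in nats.

    mu, log_sigma: arrays of shape (iterations, batch, z_dim) (assumed layout).
    Returns an array of shape (iterations,), averaged over the batch.
    """
    kl = 0.5 * np.sum(
        mu ** 2 + np.exp(2.0 * log_sigma) - 2.0 * log_sigma - 1.0, axis=-1
    )
    return kl.mean(axis=-1)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    mu = rng.normal(size=(64, 8, 100))               # 64 iterations, batch of 8
    log_sigma = 0.1 * rng.normal(size=(64, 8, 100))
    print(kl_per_iteration(mu, log_sigma).shape)
```

Summing the returned values over iterations gives the total latent loss for one batch; plotting them individually shows how much information each iteration contributes.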

Run

nosetests -v tests

to execute the test suite. Run

cd draw

./attention.py

to test the attention windowing code on an image. It will open three windows: one displaying the original input image, one displaying the extracted, downsampled content (testing the read operation), and one showing the upsampled content (matching the input size) after the write operation.
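The read and write operations can be sketched in NumPy, following the Gaussian filterbank formulation of the DRAW paper. This is a minimal illustration under stated assumptions, not the code in `attention.py`: the function names, the single-image (unbatched) layout, and the fixed window parameters below are chosen for the example.

```python
import numpy as np


def filterbank(center, delta, sigma, n_filters, size):
    """Return an (n_filters, size) matrix of normalized 1-D Gaussian filters.

    Filter i is centered at center + (i - n_filters/2 - 0.5) * delta,
    for i = 1..n_filters, as in the DRAW paper.
    """
    i = np.arange(1, n_filters + 1)
    mu = center + (i - n_filters / 2.0 - 0.5) * delta
    a = np.arange(size)
    f = np.exp(-((a[None, :] - mu[:, None]) ** 2) / (2.0 * sigma ** 2))
    # Normalize each filter; the floor avoids division by zero when a
    # filter falls far outside the image.
    return f / np.maximum(f.sum(axis=1, keepdims=True), 1e-8)


def read(image, fy, fx, gamma=1.0):
    """Extract a downsampled (N, N) patch from an (H, W) image."""
    return gamma * (fy @ image @ fx.T)


def write(patch, fy, fx, gamma=1.0):
    """Project an (N, N) patch back onto an (H, W) canvas."""
    return (fy.T @ patch @ fx) / gamma


if __name__ == "__main__":
    height, width, n = 28, 28, 5
    image = np.random.default_rng(0).random((height, width))
    fy = filterbank(center=14.0, delta=4.0, sigma=1.0, n_filters=n, size=height)
    fx = filterbank(center=14.0, delta=4.0, sigma=1.0, n_filters=n, size=width)
    patch = read(image, fy, fx)
    canvas = write(patch, fy, fx)
    print(patch.shape, canvas.shape)
```

In the full model the window parameters (center, stride `delta`, variance, intensity `gamma`) are predicted by the network at every iteration; here they are fixed so that the two matrix products are easy to follow.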

Work in progress