This repo demonstrates a collection of NLP tasks, all using the Harry Potter books as source documents. You can read about the individual tasks here:

- Topic modeling with Latent Dirichlet Allocation

- Regular Expression case study

- Extractive text summarization

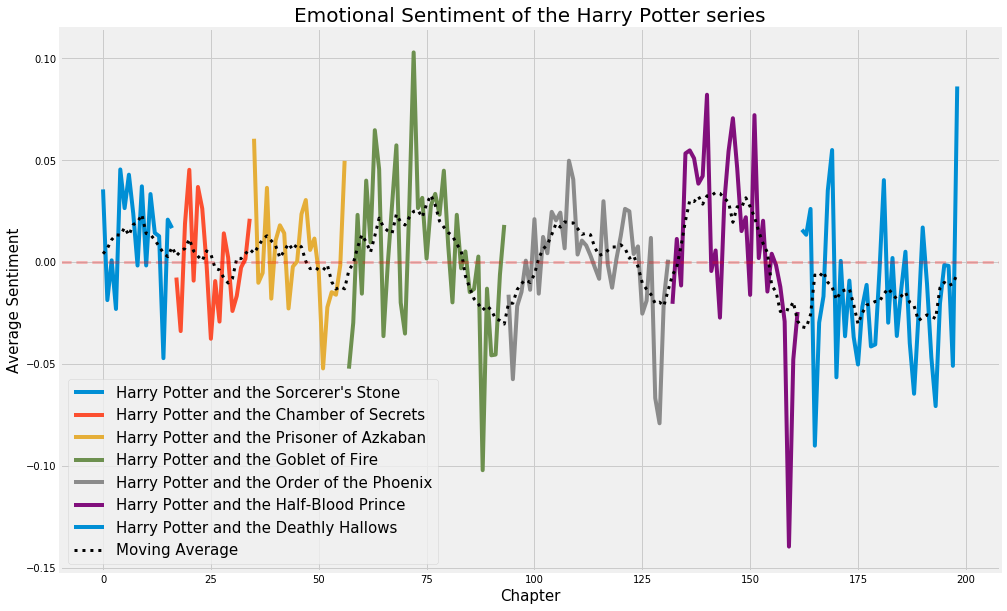

- Sentiment analysis

The `BasicNLP` class provides topic modeling with LDA, document summarization, and sentiment analysis.

- Initialize the class with a list of documents and an optional list of document titles, for example:

texts = ['this is the first document', 'this is the second document', 'this is the third document']

titles = ['doc1', 'doc2', 'doc3']

nlp = BasicNLP(texts, titles)

LDA:

- Create an elbow plot and print the coherence scores for a range of topic counts (set with start, stop, and step) with:

nlp.compute_coherence(start=5, stop=20, step=3)

- Set the number of topics to use in the model with:

nlp.set_number_of_topics(10)

- View the clusters (only available in Jupyter notebook):

import pyLDAvis

pyLDAvis.enable_notebook()

vis = nlp.view_clusters()

pyLDAvis.display(vis)

- Get the vocabulary for each topic in the LDA model with (topics can be 'all', a list of integers, or a single integer):

nlp.get_topic_vocabulary(topics='all', num_words=10)

- Get the documents most highly associated with the given topics with:

nlp.get_representative_documents(topics='all', num_docs=1)

- Get the sentence summaries of the documents most highly associated with the given topics with:

nlp.get_representative_sentences(topics='all', num_sentences=3)

- Provide a name for an LDA topic (if preferred over the numbering system) with:

nlp.name_topic(topic_number=1, topic_name='My topic')
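To see which topic counts a coherence sweep such as `start=5, stop=20, step=3` would cover, here is a small sketch. It assumes the arguments follow Python's `range()` semantics (stop excluded), which this README does not confirm; `candidate_topic_counts` is a hypothetical helper and not part of the class.

```python
def candidate_topic_counts(start, stop, step):
    """List the topic counts a sweep would visit.

    Assumption: range()-style semantics, so `stop` itself is excluded.
    """
    return list(range(start, stop, step))


print(candidate_topic_counts(5, 20, 3))  # [5, 8, 11, 14, 17]
```

If the class treats `stop` as inclusive, the sweep would instead end at 20, so check the elbow plot's x-axis to confirm.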

Document summarization:

Get the sentence summaries of the requested documents with:

nlp.get_document_summaries(documents='all', num_sent=5)
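For intuition about what an extractive summary is, the sketch below scores sentences by summed word frequency and keeps the top few in their original order. This is one common approach and not necessarily what `get_document_summaries` does; the `summarize` function and its scoring scheme are illustrative assumptions.

```python
import re
from collections import Counter


def summarize(text, num_sent=2):
    """Return the num_sent highest-scoring sentences, in document order."""
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    # Word frequencies over the whole document (lowercased).
    freqs = Counter(re.findall(r"[a-z']+", text.lower()))
    # Score each sentence by the summed frequency of its words.
    scored = [
        (sum(freqs[w] for w in re.findall(r"[a-z']+", s.lower())), i)
        for i, s in enumerate(sentences)
    ]
    # Keep the top num_sent sentences, then restore document order.
    top = sorted(sorted(scored, reverse=True)[:num_sent], key=lambda pair: pair[1])
    return [sentences[i] for _, i in top]


text = "Harry saw the owl. The owl saw Harry and the owl flew. It rained."
print(summarize(text, num_sent=1))  # ['The owl saw Harry and the owl flew.']
```

Summed scores favor long sentences; a real summarizer would typically normalize by length and drop stop words.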

Sentiment analysis:

Get the sentiment scores (compound, positive, neutral, negative) for the requested documents with:

nlp.get_sentiment(documents='all')
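A compound score is often turned into a label. The sketch below assumes each document's scores are available as a dict with a `compound` value between -1 and 1, as VADER-style analyzers produce; the README does not state the return format of `get_sentiment` or which library it uses, so treat the dict shape and the hypothetical `label_sentiment` helper as assumptions. The 0.05 cutoff is the threshold conventionally used with VADER.

```python
def label_sentiment(scores, threshold=0.05):
    """Map a compound score to 'positive', 'negative', or 'neutral'.

    Assumption: `scores` is a dict with a 'compound' key in [-1, 1].
    """
    compound = scores["compound"]
    if compound >= threshold:
        return "positive"
    if compound <= -threshold:
        return "negative"
    return "neutral"


print(label_sentiment({"compound": 0.6}))  # positive
```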