Code for the NAACL 2022 (Findings) paper "Good Visual Guidance Makes A Better Extractor: Hierarchical Visual Prefix for Multimodal Entity and Relation Extraction".

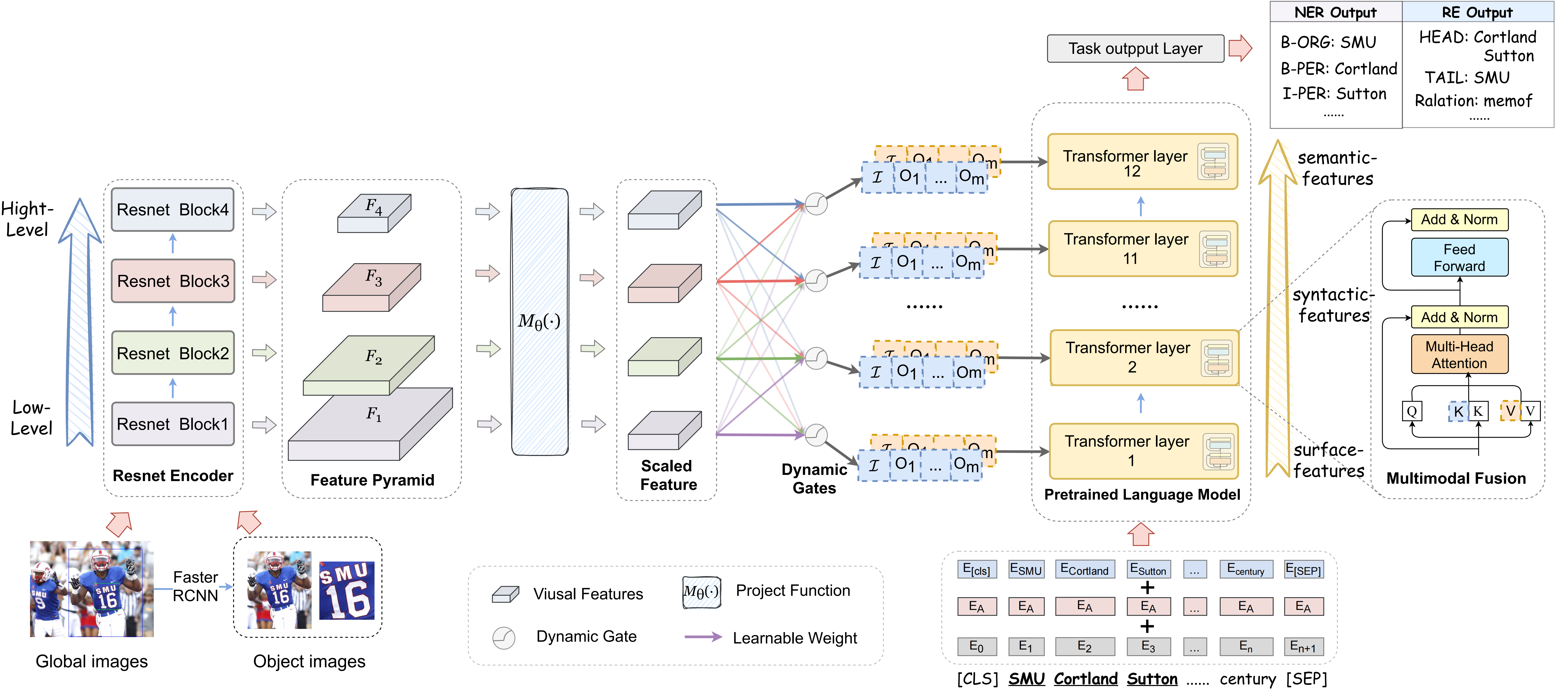

The overall architecture of our hierarchical modality fusion network.

To run the code, you need to install the requirements:

pip install -r requirements.txt

The datasets that we used in our experiments are as follows:

- Twitter2015 & Twitter2017

  The text data follows the CoNLL format. You can download the Twitter2015 data via this link and the Twitter2017 data via this link. Please place them in data/NER_data. You can also put them anywhere and modify the path configuration in run.py.

- MNRE

  The MRE dataset comes from MEGA. You can download the MRE dataset with detected visual objects using the following commands:

  cd data
  wget 120.27.214.45/Data/re/multimodal/data.tar.gz
  tar -xzvf data.tar.gz
  mv data RE_data
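For the CoNLL-format Twitter text files, a reader could look roughly like the sketch below. It is not the repository's loader: the function name `read_conll` is hypothetical, and it assumes one token per line with the label in the last whitespace-separated column and a blank line between sentences. Lines with fewer than two columns are skipped.

```python
from typing import Iterable, List, Tuple

Sentence = Tuple[List[str], List[str]]


def read_conll(lines: Iterable[str]) -> List[Sentence]:
    """Parse CoNLL-style lines into (tokens, labels) pairs (hypothetical helper)."""
    sentences: List[Sentence] = []
    tokens: List[str] = []
    labels: List[str] = []
    for raw in lines:
        line = raw.strip()
        if not line:
            # A blank line closes the current sentence, if any.
            if tokens:
                sentences.append((tokens, labels))
                tokens, labels = [], []
            continue
        parts = line.split()
        if len(parts) < 2:
            # Assumption: lines without a label column carry no token.
            continue
        tokens.append(parts[0])
        labels.append(parts[-1])
    if tokens:
        # Flush the last sentence when the input has no trailing blank line.
        sentences.append((tokens, labels))
    return sentences


sample = """\
Kevin B-PER
Durant I-PER
joins O
Warriors B-ORG

RT O
""".splitlines()
parsed = read_conll(sample)
```

To read a real file, pass an open file handle, for example `read_conll(open("data/NER_data/twitter2015/train.txt", encoding="utf-8"))`.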

To extract visual object images, we first use the NLTK parser to extract noun phrases from the text and then apply the visual grounding toolkit to detect objects. The detected objects are available in our data links.
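The noun-phrase step can be illustrated with a small pure-Python chunker. This is a simplified stand-in, not the NLTK parser used for the released data: it assumes the tokens are already tagged with Penn Treebank POS tags (the kind `nltk.pos_tag` produces) and groups an optional determiner, any adjectives, and one or more nouns into a phrase. The function name `noun_phrases` is hypothetical.

```python
from typing import List, Tuple


def noun_phrases(tagged: List[Tuple[str, str]]) -> List[str]:
    """Group (word, tag) pairs into phrases of the shape DT? JJ* NN+."""
    phrases: List[str] = []
    current: List[str] = []  # words of the phrase being built
    seen_noun = False        # whether the current phrase already has a noun

    def flush() -> None:
        nonlocal current, seen_noun
        # Keep the candidate only if it contains at least one noun.
        if seen_noun:
            phrases.append(" ".join(current))
        current, seen_noun = [], False

    for word, tag in tagged:
        if tag.startswith("NN"):
            current.append(word)
            seen_noun = True
        elif tag == "DT":
            # A determiner always starts a new candidate phrase.
            flush()
            current.append(word)
        elif tag.startswith("JJ"):
            # An adjective after a noun starts a new candidate phrase.
            if seen_noun:
                flush()
            current.append(word)
        else:
            flush()
    flush()
    return phrases


tagged_sentence = [
    ("The", "DT"), ("young", "JJ"), ("player", "NN"), ("holds", "VBZ"),
    ("a", "DT"), ("trophy", "NN"), ("in", "IN"), ("Oakland", "NNP"),
]
phrases = noun_phrases(tagged_sentence)
```

Each extracted phrase would then serve as a query for the visual grounding toolkit, which is outside the scope of this sketch.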

The expected structure of files is:

HMNeT
 |-- data    # conll2003, mit-movie, mit-restaurant and atis
 |    |-- NER_data
 |    |    |-- twitter2015    # text data
 |    |    |    |-- train.txt
 |    |    |    |-- valid.txt
 |    |    |    |-- test.txt
 |    |    |    |-- twitter2015_train_dict.pth    # {full-image-[object-image]}
 |    |    |    |-- ...
 |    |    |-- twitter2015_images    # full image data
 |    |    |-- twitter2015_aux_images    # object image data
 |    |    |-- twitter2017
 |    |    |-- twitter2017_images
 |    |-- RE_data
 |    |    |-- ...
 |-- models    # models
 |    |-- bert_model.py
 |    |-- modeling_bert.py
 |-- modules
 |    |-- metrics.py    # metric
 |    |-- train.py    # trainer
 |-- processor
 |    |-- dataset.py    # processor, dataset
 |-- logs    # code logs
 |-- run.py    # main
 |-- run_ner_task.sh
 |-- run_re_task.sh
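Before training, it can help to confirm that the data landed where the layout above expects it. The following is a minimal sketch, not part of the repository: the helper `missing_paths` and the `EXPECTED` list are hypothetical, and the list covers only the data entries and `run.py` named in the tree.

```python
from pathlib import Path
from typing import List

# Paths relative to the project root, taken from the expected file structure.
EXPECTED = [
    "data/NER_data/twitter2015/train.txt",
    "data/NER_data/twitter2015/valid.txt",
    "data/NER_data/twitter2015/test.txt",
    "data/NER_data/twitter2015_images",
    "data/NER_data/twitter2015_aux_images",
    "data/NER_data/twitter2017",
    "data/NER_data/twitter2017_images",
    "data/RE_data",
    "run.py",
]


def missing_paths(root: str) -> List[str]:
    """Return the expected relative paths that do not exist under root."""
    base = Path(root)
    return [rel for rel in EXPECTED if not (base / rel).exists()]


if __name__ == "__main__":
    # Run from the project root; prints nothing when everything is in place.
    for rel in missing_paths("."):
        print(f"missing: {rel}")
```

If you placed the data elsewhere and changed the paths in run.py, adjust `EXPECTED` accordingly.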

The data paths and GPU-related configuration are in run.py. To train the NER model, run the following scripts:

bash run_twitter15.sh
bash run_twitter17.sh

Checkpoints can be downloaded via Twitter15_ckpt and Twitter17_ckpt.

To train the RE model, run this script:

bash run_re_task.sh

The checkpoint can be downloaded via re_ckpt.

To test the NER model, download the model checkpoints we provide via Twitter15_ckpt and Twitter17_ckpt, or use your own trained model. Set load_path to the model path, then run the following script:

python -u run.py \
    --dataset_name="twitter15/twitter17" \
    --bert_name="bert-base-uncased" \
    --seed=1234 \
    --only_test \
    --max_seq=80 \
    --use_prompt \
    --prompt_len=4 \
    --sample_ratio=1.0 \
    --load_path='your_ner_ckpt_path'

To test the RE model, download the model checkpoint we provide via re_ckpt, or use your own trained model. Set load_path to the model path, then run the following script:

python -u run.py \
    --dataset_name="MRE" \
    --bert_name="bert-base-uncased" \
    --seed=1234 \
    --only_test \
    --max_seq=80 \
    --use_prompt \
    --prompt_len=4 \
    --sample_ratio=1.0 \
    --load_path='your_re_ckpt_path'
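The NER and RE test commands differ only in the dataset name and the checkpoint path, so they can be assembled programmatically. The sketch below is a hypothetical helper, not part of the repository. It assumes that `--dataset_name` takes a single value (`twitter15`, `twitter17`, or `MRE`) and that the `twitter15/twitter17` shown in the NER command means "pick one"; every other flag is copied from the commands as written.

```python
import shlex
from typing import List

# Assumption: these are the dataset names run.py accepts, one at a time.
KNOWN_DATASETS = {"twitter15", "twitter17", "MRE"}


def build_test_command(dataset_name: str, load_path: str) -> List[str]:
    """Build the argv list for a test-only run of run.py."""
    if dataset_name not in KNOWN_DATASETS:
        raise ValueError(f"unknown dataset name: {dataset_name!r}")
    return [
        "python", "-u", "run.py",
        f"--dataset_name={dataset_name}",
        "--bert_name=bert-base-uncased",
        "--seed=1234",
        "--only_test",
        "--max_seq=80",
        "--use_prompt",
        "--prompt_len=4",
        "--sample_ratio=1.0",
        f"--load_path={load_path}",
    ]


cmd = build_test_command("twitter15", "your_ner_ckpt_path")
# shlex.join quotes arguments so the line can be pasted into a shell.
print(shlex.join(cmd))
```

The returned list can be passed directly to `subprocess.run(cmd, check=True)` from the project root.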

The acquisition of the Twitter15 and Twitter17 data follows the code from UMT; many thanks.

The acquisition of the MNRE data for the multimodal relation extraction task follows the code from MEGA; many thanks.

If you use or extend our work, please cite the paper as follows:

@article{DBLP:journals/corr/abs-2205-03521,
  author     = {Xiang Chen and
                Ningyu Zhang and
                Lei Li and
                Yunzhi Yao and
                Shumin Deng and
                Chuanqi Tan and
                Fei Huang and
                Luo Si and
                Huajun Chen},
  title      = {Good Visual Guidance Makes {A} Better Extractor: Hierarchical Visual
                Prefix for Multimodal Entity and Relation Extraction},
  journal    = {CoRR},
  volume     = {abs/2205.03521},
  year       = {2022},
  url        = {https://doi.org/10.48550/arXiv.2205.03521},
  doi        = {10.48550/arXiv.2205.03521},
  eprinttype = {arXiv},
  eprint     = {2205.03521},
  timestamp  = {Wed, 11 May 2022 17:29:40 +0200},
  biburl     = {https://dblp.org/rec/journals/corr/abs-2205-03521.bib},
  bibsource  = {dblp computer science bibliography, https://dblp.org}
}