📖 Docs: readthedocs

🚀 Simple Robot Agent Example:

💻 Simulation Example with SimplerEnv:

🤖 Motor Agent using OpenVLA:

Updates:

June 30 2024, embodied-agents v1.0:

- Added a Motor Agent supporting OpenVLA, with a free hosted API endpoint.

- Added a Sensory Agent supporting, e.g., 3D object pose detection.

- Improved automatic dataset recording.

- Agents can now make remote act calls to API servers, e.g. Gradio and vLLM.

- Bug fixes and performance improvements have been made.

- PyPI project is renamed to mbodied.

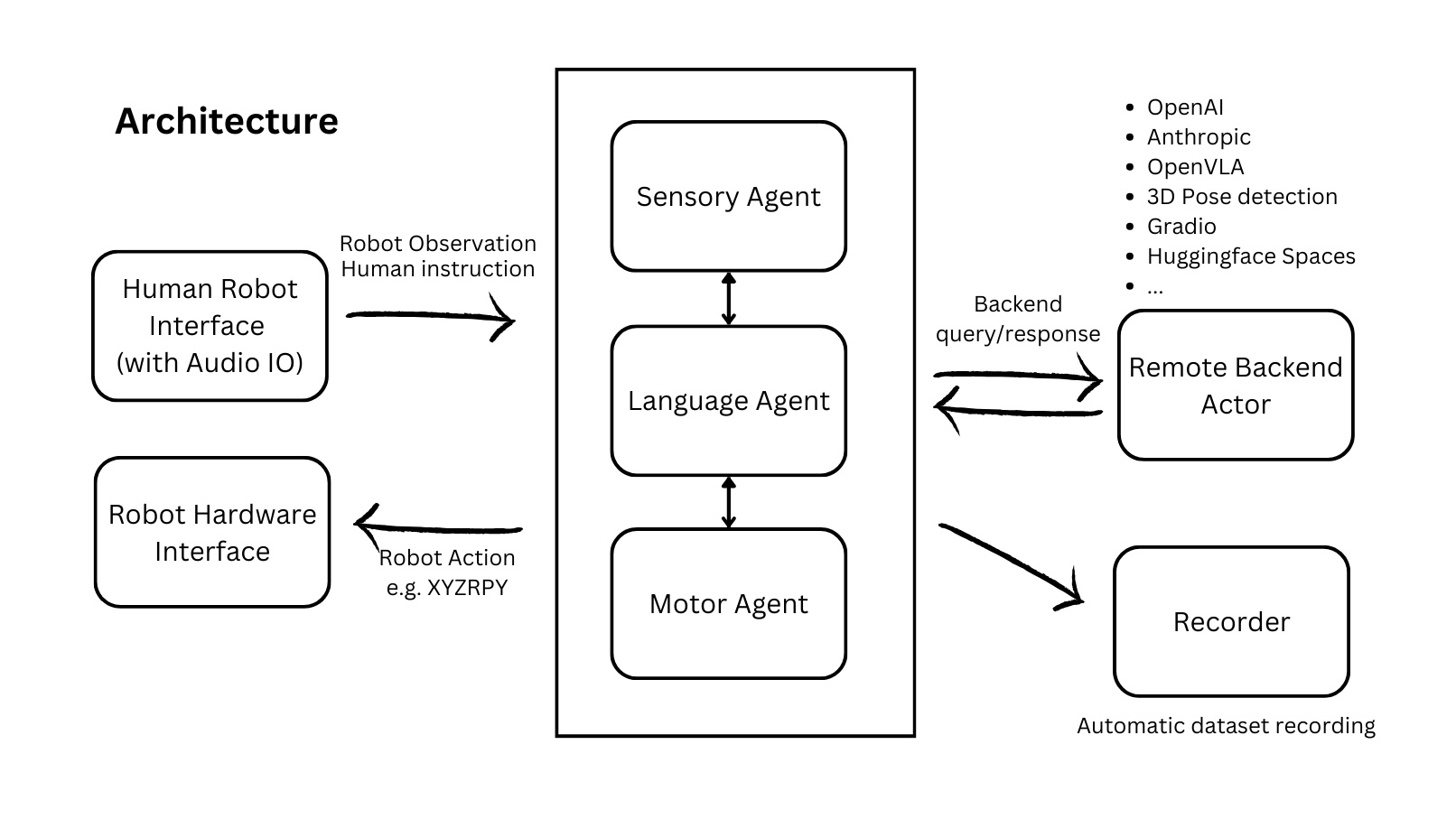

embodied agents is a toolkit for integrating large multi-modal models into existing robot stacks with just a few lines of code. It provides consistency, reliability, and scalability, and can be configured for any observation and action space.


This repository is broken down into 3 main components: Agents, Data, and Hardware. Inspired by the efficiency of the central nervous system, each component is broken down into 3 meta-modalities: Language, Motion, and Sense. Each agent has an act method that can be overridden and satisfies:

- Language Agents always return a string.

- Motor Agents always return a Motion.

- Sensory Agents always return a SensorReading.

A call to act or async_act can perform local or remote inference synchronously or asynchronously. Remote execution can be performed with Gradio, httpx, or different LLM clients. Validation is performed with Pydantic.
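As a rough illustration of the synchronous and asynchronous pattern described here, the sketch below wraps a blocking act call in a worker thread so that several calls can run concurrently. EchoAgent and run_concurrently are hypothetical names invented for this example. They are not part of mbodied, and the library's own async_act may be implemented differently.

```python
import asyncio
import time


class EchoAgent:
    """Hypothetical stand-in for an agent; not part of mbodied."""

    def act(self, instruction: str) -> str:
        # Simulate a blocking inference call (local model or remote request).
        time.sleep(0.05)
        return f"ack: {instruction}"

    async def async_act(self, instruction: str) -> str:
        # Run the blocking call in a worker thread so the event loop stays free.
        return await asyncio.to_thread(self.act, instruction)


async def run_concurrently(agent: EchoAgent, instructions: list[str]) -> list[str]:
    # gather() returns results in the same order as the inputs.
    return await asyncio.gather(*(agent.async_act(i) for i in instructions))


results = asyncio.run(run_concurrently(EchoAgent(), ["pick up the cup", "wave"]))
print(results)
```

Offloading to a thread keeps the synchronous act as the single source of truth, so a subclass only needs to override one method.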

Jump to getting started to get up and running on real hardware or simulation. Be sure to join our Discord for 🥇-winning discussions :)

⭐ Give us a star on GitHub if you like us!

There is a significant barrier to entry for running SOTA models in robotics

It is currently unrealistic to run state-of-the-art AI models on edge devices for responsive, real-time applications. Furthermore, the complexity of integrating multiple models across different modalities is a significant barrier to entry for many researchers, hobbyists, and developers. This library aims to address these challenges by providing a simple, extensible, and efficient way to integrate large models into existing robot stacks.

Facilitate data collection and sharing among roboticists.

This requires reducing much of the complexity involved in setting up inference endpoints, converting between different model formats, and collecting and storing new datasets for future availability.

We aim to achieve this by:

- Providing simple, Python-first abstractions that are modular, extensible and applicable to a wide range of tasks.

- Providing endpoints, weights, and interactive Gradio playgrounds for easy access to state-of-the-art models.

- Ensuring that this library is observation and action-space agnostic, allowing it to be used with any robot stack.

Beyond just improved robustness and consistency, this architecture makes asynchronous and remote agent execution exceedingly simple. In particular we demonstrate how responsive natural language interactions can be achieved in under 30 lines of Python code.

Embodied Agents are not yet capable of learning from in-context experience:

- Frameworks for advanced RAG techniques are clumsy at best for OOD embodied applications, although that may improve.

- The amount of data required for fine-tuning is still prohibitively large and expensive to collect.

- Online RL is still in its infancy and not yet practical for most applications.

- This library is intended to be used for research and prototyping.

- This library is still experimental and under active development. Breaking changes may occur although they will be avoided as much as possible. Feel free to report issues!

- Extensible, user-friendly Python SDK with explicit typing and modularity.

- Asynchronous and remote thread-safe agent execution for maximal responsiveness and scalability.

- Full compatibility with HuggingFace Spaces, Datasets, Gymnasium Spaces, Ollama, and any OpenAI-compatible API.

- Automatic dataset recording, with optional upload of the dataset to the HuggingFace Hub.

- Closed: OpenAI, Anthropic

- Open Weights: OpenVLA, Idefics2, Llava-1.6-Mistral, Phi-3-vision-128k-instruct

- All Gradio endpoints hosted on HuggingFace Spaces.

- More Motor Agents

- More Data Augmentation

- More Evaluation on Latency, Accuracy, and Prompting

pip install mbodied

# For audio support

pip install mbodied[audio]

from pydantic import Field

from mbodied.types.motion.control import HandControl, FullJointControl
from mbodied.types.motion import AbsoluteMotionField, RelativeMotionField

class FineGrainedHandControl(HandControl):
    comment: str = Field(None, description="A comment to voice aloud.")

    # Any attempted validation will fail if the bounds and shape are not satisfied.
    index: FullJointControl = AbsoluteMotionField([0,0,0],bounds=[-3.14, 3.14], shape=(3,))
    thumb: FullJointControl = RelativeMotionField([0,0,0],bounds=[-3.14, 3.14], shape=(3,))

import os

from mbodied.agents import LanguageAgent

from mbodied.agents.motion import OpenVlaAgent

from mbodied.agents.sense.audio import AudioAgent

from mbodied.hardware.sim_interface import SimInterface

cognition = LanguageAgent(

context="You are an embodied planner that responds with a python list of strings and nothing else.",

api_key=os.getenv("ANTHROPIC_API_KEY"), # Or use OpenAI

model_src="anthropic", model_kwargs={"model": "claude-3-5-sonnet-20240620"},

recorder="auto",

)

speech = AudioAgent(use_pyaudio=False) # pyaudio is buggy on mac

motion = OpenVlaAgent(model_src="https://api.mbodi.ai/community-models/")

# Subclass and override do() and capture() methods.

hardware_interface = SimInterface()

instruction = speech.listen()

plan = cognition.act(instruction, hardware_interface.capture())

for step in plan.strip('[]').strip().split(','):
    print("\nMotor agent is executing step: ", step, "\n")
    for _ in range(10):
        hand_control = motion.act(step, hardware_interface.capture())
        hardware_interface.do(hand_control)

Example Scripts:

- examples/simple_robot_agent.py: A very simple language-based cognitive agent that takes instructions from the user and outputs actions.

- examples/full_example.py: Full example of a language-based cognitive agent and a motor agent executing a task.

- examples/motor_example_openvla.py: Run robotic transformers, e.g. OpenVLA, on the robot in a few lines.

- examples/reason_plan_act_robot.py: Full example of a language-based cognitive agent and an OpenVLA motor agent executing a task.

Simulation with SimplerEnv:

MotorAgent with OpenVLA: examples/motor_example_openvla.py
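The getting-started example splits the planner's reply on commas, which breaks if a step itself contains a comma or if the model adds text around the list. A possibly more robust alternative, sketched below, parses the reply with ast.literal_eval from the standard library. The helper name parse_plan is hypothetical and is not part of mbodied.

```python
import ast


def parse_plan(reply: str) -> list[str]:
    """Parse a model reply that should contain a Python list of strings."""
    # Keep only the outermost bracketed span, in case the model adds extra text.
    start, end = reply.find("["), reply.rfind("]")
    if start == -1 or end < start:
        raise ValueError(f"No list found in reply: {reply!r}")
    steps = ast.literal_eval(reply[start : end + 1])
    if not isinstance(steps, list) or not all(isinstance(s, str) for s in steps):
        raise ValueError(f"Expected a list of strings, got: {steps!r}")
    return [s.strip() for s in steps]


plan = parse_plan("Sure! ['pick up the cup, gently', 'move left']")
print(plan)  # ['pick up the cup, gently', 'move left']
```

Because literal_eval only evaluates Python literals, it does not execute arbitrary code from the model's reply.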

The Sample Class

The Sample class is a base model for serializing, recording, and manipulating arbitrary data. It is designed to be extendable, flexible, and strongly typed. By wrapping your observation or action objects in the Sample class, you'll be able to convert to and from the following with ease:

- A Gym space for creating a new Gym environment.

- A flattened list, array, or tensor for plugging into an ML model.

- A HuggingFace dataset with semantic search capabilities.

- A Pydantic BaseModel for reliable and quick JSON serialization/deserialization.

To learn more about all of the possibilities with embodied agents, check out the documentation.

- You can pack a list of Samples or Dicts into a single Sample or Dict and unpack accordingly.

- You can unflatten any Python structure into a Sample class so long as you provide it with a valid JSON schema.

Creating a Sample only requires wrapping a Python dictionary with the Sample class. Samples can also be created from kwargs, Gym Spaces, and Tensors, to name a few.

# Creating a Sample instance
sample = Sample(observation=[1,2,3], action=[4,5,6])

# Flattening the Sample instance
flat_list = sample.flatten()
print(flat_list) # Output: [1, 2, 3, 4, 5, 6]

# Generating a simplified JSON schema
schema = sample.schema()
print(schema)
# Output: {'type': 'object', 'properties': {'observation': {'type': 'array', 'items': {'type': 'integer'}}, 'action': {'type': 'array', 'items': {'type': 'integer'}}}}

# Unflattening a list into a Sample instance
print(Sample.unflatten(flat_list, schema))
# Output: Sample(observation=[1, 2, 3], action=[4, 5, 6])

The Sample class leverages Pydantic's powerful features for serialization and deserialization, allowing you to easily convert between Sample instances and JSON.

from pydantic_core import from_json

# Serialize the Sample instance to JSON
sample = Sample(observation=[1,2,3], action=[4,5,6])
json_data = sample.model_dump_json()
print(json_data) # Output: '{"observation": [1, 2, 3], "action": [4, 5, 6]}'

# Deserialize the JSON data back into a Sample instance
json_data = '{"observation": [1, 2, 3], "action": [4, 5, 6]}'
sample = Sample.model_validate(from_json(json_data))
print(sample) # Output: Sample(observation=[1, 2, 3], action=[4, 5, 6])

# Converting to a dictionary

sample_dict = sample.to("dict")

print(sample_dict) # Output: {'observation': [1, 2, 3], 'action': [4, 5, 6]}

# Converting to a NumPy array

sample_np = sample.to("np")

print(sample_np) # Output: array([1, 2, 3, 4, 5, 6])

# Converting to a PyTorch tensor

sample_pt = sample.to("pt")

print(sample_pt) # Output: tensor([1, 2, 3, 4, 5, 6])

# Converting to a HuggingFace Dataset

sample_hf = sample.to("hf")

print(sample_hf)

# Output: Dataset({
#     features: ['observation', 'action'],
#     num_rows: 3
# })

gym_space = sample.space()
print(gym_space)
# Output: Dict('action': Box(-inf, inf, (3,), float64), 'observation': Box(-inf, inf, (3,), float64))

See sample.py for more details.
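To give a feel for what flattening and unflattening involve, here is a minimal sketch in plain Python. It is not the Sample implementation: the functions flatten and unflatten below are hypothetical, and for simplicity the sketch rebuilds the structure from an example template rather than from a JSON schema.

```python
from typing import Any


def flatten(value: Any) -> list:
    """Depth-first flatten of nested dicts and lists into a flat list of leaves."""
    if isinstance(value, dict):
        return [leaf for v in value.values() for leaf in flatten(v)]
    if isinstance(value, (list, tuple)):
        return [leaf for v in value for leaf in flatten(v)]
    return [value]


def unflatten(flat: list, template: Any) -> Any:
    """Rebuild a structure shaped like `template` from a flat list of leaves."""
    leaves = iter(flat)

    def build(node: Any) -> Any:
        if isinstance(node, dict):
            return {k: build(v) for k, v in node.items()}
        if isinstance(node, (list, tuple)):
            return [build(v) for v in node]
        # Each leaf position in the template consumes the next flat value.
        return next(leaves)

    return build(template)


data = {"observation": [1, 2, 3], "action": [4, 5, 6]}
flat = flatten(data)
print(flat)                   # [1, 2, 3, 4, 5, 6]
print(unflatten(flat, data))  # {'observation': [1, 2, 3], 'action': [4, 5, 6]}
```

The round trip works because both functions walk the structure in the same depth-first order, so the position of each value in the flat list identifies where it belongs.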

The Message class represents a single completion sample space. It can be text, image, a list of text/images, Sample, or other modality. The Message class is designed to handle various types of content and supports different roles such as user, assistant, or system.

You can create a Message in several ways, all of which are understood by mbodi's backend.

Message(role="user", content="example text")

Message(role="user", content=["example text", Image("example.jpg"), Image("example2.jpg")])

Message(role="user", content=[Sample("Hello")])

The Backend class is an abstract base class for Backend implementations. It provides the basic structure and methods required for interacting with different backend services, such as API calls for generating completions based on given messages. See the backend directory for how the various backends are implemented.
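The abstract-base-class idea can be sketched with the standard library's abc module. This is an illustration only: the method name predict, the dictionary message format, and EchoBackend are assumptions made for this example, and the actual mbodied Backend interface may differ.

```python
from abc import ABC, abstractmethod


class Backend(ABC):
    """Hypothetical base class; the method name `predict` is an assumption."""

    @abstractmethod
    def predict(self, messages: list[dict]) -> str:
        """Return a completion for a list of {"role", "content"} messages."""


class EchoBackend(Backend):
    """Toy backend that repeats the last user message instead of calling an API."""

    def predict(self, messages: list[dict]) -> str:
        user_turns = [m["content"] for m in messages if m["role"] == "user"]
        return user_turns[-1] if user_turns else ""


backend = EchoBackend()
reply = backend.predict([
    {"role": "system", "content": "you are a robot"},
    {"role": "user", "content": "wave"},
])
print(reply)  # wave
```

With this shape, an agent depends only on the base class, so a different service can be swapped in without changing the agent code.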

Agent is the base class for various agents listed below. It provides a template for creating agents that can talk to a remote backend/server and optionally record their actions and observations.

The Language Agent is the main entry point for intelligent robot agents. It can connect to different backends or transformers of your choice. It includes methods for recording conversations, managing context, looking up messages, forgetting messages, storing context, and acting based on an instruction and an image.

Currently supported API services are OpenAI and Anthropic. Upcoming API services include Gemini, Ollama, and HuggingFace.

To use OpenAI for your robot backend:

robot_agent = LanguageAgent(context=context_prompt, model_src="openai")

context can be either a string or a list, for example:

context_prompt = "you are a robot"

# OR

context_prompt = [
    Message(role="system", content="you are a robot"),
    Message(role="user", content=["example text", Image("example.jpg")]),
    Message(role="assistant", content="Understood."),
]

To execute an instruction:

response = robot_agent.act(instruction, image)[0]

# You can also pass an arbitrary number of texts and images to the agent:
response = robot_agent.act([instruction1, image1, instruction2, image2])[0]

Language Agent can connect to vLLM as well. For example, suppose you are running a vLLM server with Mistral-7B at 1.2.3.4:1234. All you need to do is:

agent = LanguageAgent(
    context=context,
    model_src="openai",
    model_kwargs={"api_key": "EMPTY", "base_url": "http://1.2.3.4:1234/v1"},
)
response = agent.act("Hello, how are you?", model="mistralai/Mistral-7B-Instruct-v0.3")

Motor Agent is similar to Language Agent, but instead of returning a string it always returns a Motion. Motor Agent is generally powered by robotic transformer models, e.g. OpenVLA, RT1, or Octo.

Some small models, like RT1, can run on edge devices. However, others, like OpenVLA, are too large to run on edge devices. See OpenVLA Agent and an example OpenVLA server.

These agents interact with the environment to collect sensory data. They always return a SensorReading, which can be various forms of processed sensory input such as images, depth data, or audio signals.

For example, object_pose_estimator_3d is a sensory agent that estimates the 3D coordinates of objects in the robot's view.

The motion_controls module defines various motions to control a robot as Pydantic models. They are also subclassed from Sample, thus possessing all the capability of Sample as mentioned above. These controls cover a range of actions, from simple joint movements to complex poses and full robot control.
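The idea of a control type that rejects out-of-range or wrongly shaped values can be sketched without any dependencies. The example below uses a plain dataclass rather than Pydantic, and BoundedJoints is a hypothetical name that does not exist in mbodied. It only illustrates the kind of bounds and shape check that the motion types are described as performing.

```python
from dataclasses import dataclass, field


@dataclass
class BoundedJoints:
    """Hypothetical control type: a fixed number of joint angles within bounds."""

    values: list[float] = field(default_factory=lambda: [0.0, 0.0, 0.0])
    bounds: tuple[float, float] = (-3.14, 3.14)
    size: int = 3

    def __post_init__(self) -> None:
        # Shape check: the number of values must match the declared size.
        if len(self.values) != self.size:
            raise ValueError(f"expected {self.size} values, got {len(self.values)}")
        # Bounds check: every value must lie inside the closed interval.
        low, high = self.bounds
        for v in self.values:
            if not low <= v <= high:
                raise ValueError(f"{v} is outside [{low}, {high}]")


ok = BoundedJoints([0.1, -0.2, 3.0])
try:
    BoundedJoints([0.0, 0.0, 4.0])
except ValueError as err:
    print(err)  # 4.0 is outside [-3.14, 3.14]
```

Validating at construction time means an invalid motion never reaches the hardware interface.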

Mapping a model's output to a robot action is straightforward. In our example script, we use a mock hardware interface. We also have an XArm interface as an example.
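A mock interface along these lines might look like the sketch below. It mirrors the do() and capture() pair used in the getting-started example, but the class names, the dictionary-based motion format, and the base class itself are assumptions for illustration and not the mbodied API.

```python
from abc import ABC, abstractmethod


class HardwareInterface(ABC):
    """Hypothetical base class with the do()/capture() pair."""

    @abstractmethod
    def do(self, motion: dict) -> None:
        """Send a motion to the robot."""

    @abstractmethod
    def capture(self) -> dict:
        """Return the latest observation."""


class MockArm(HardwareInterface):
    """In-memory robot that tracks an (x, y, z) position."""

    def __init__(self) -> None:
        self.position = [0.0, 0.0, 0.0]

    def do(self, motion: dict) -> None:
        # Treat the motion as a relative offset per axis; missing axes stay put.
        for i, axis in enumerate(("x", "y", "z")):
            self.position[i] += motion.get(axis, 0.0)

    def capture(self) -> dict:
        # Return a copy so callers cannot mutate the internal state.
        return {"position": list(self.position)}


arm = MockArm()
arm.do({"x": 0.5, "z": -0.25})
arm.do({"x": 0.25})
print(arm.capture())  # {'position': [0.75, 0.0, -0.25]}
```

A mock like this lets the agent loop be tested end to end before a real robot is connected.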

Dataset Recorder can record your conversation and the robot's actions to a dataset as you interact with/teach the robot. You can define any observation space and action space for the Recorder.

observation_space = spaces.Dict({
    'image': Image(size=(224, 224)).space(),
    'instruction': spaces.Text(1000)
})
action_space = HandControl().space()
recorder = Recorder('example_recorder', out_dir='saved_datasets', observation_space=observation_space, action_space=action_space)

# Every time the robot makes a conversation or performs an action:
recorder.record(observation={'image': image, 'instruction': instruction,}, action=hand_control)

The dataset is saved to ./saved_datasets.
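The record-then-iterate pattern can be illustrated with a much simpler stand-in that writes one JSON object per line. This is only a sketch: JsonlRecorder is a hypothetical class, and it uses JSON Lines instead of the HDF5 format that the real Recorder produces.

```python
import json
import tempfile
from pathlib import Path


class JsonlRecorder:
    """Hypothetical recorder that appends one JSON object per line."""

    def __init__(self, name: str, out_dir: str) -> None:
        self.path = Path(out_dir) / f"{name}.jsonl"
        self.path.parent.mkdir(parents=True, exist_ok=True)

    def record(self, observation: dict, action: dict) -> None:
        # Append mode keeps earlier steps intact across calls.
        with self.path.open("a", encoding="utf-8") as f:
            f.write(json.dumps({"observation": observation, "action": action}) + "\n")

    def __iter__(self):
        # Yield (observation, action) pairs in the order they were recorded.
        with self.path.open(encoding="utf-8") as f:
            for line in f:
                row = json.loads(line)
                yield row["observation"], row["action"]


out_dir = tempfile.mkdtemp()
recorder = JsonlRecorder("example_recorder", out_dir)
recorder.record({"instruction": "wave"}, {"x": 0.1})
recorder.record({"instruction": "stop"}, {"x": 0.0})
episodes = list(recorder)
print(episodes)
```

Appending one record per step keeps the file valid even if a session is interrupted partway through.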

The Replayer class is designed to process and manage data stored in HDF5 files generated by Recorder. It provides a variety of functionalities, including reading samples, generating statistics, extracting unique items, and converting datasets for use with HuggingFace. The Replayer also supports saving specific images during processing and offers a command-line interface for various operations.

Example for iterating through a dataset from Recorder with Replayer:

replayer = Replayer(path="path/to/dataset.h5")
for observation, action in replayer:
    ...

├─ assets/ ............. Images, icons, and other static assets

├─ examples/ ........... Example scripts and usage demonstrations

├─ resources/ .......... Additional resources for examples

├─ src/

│ └─ mbodied/

│ ├─ agents/ ....... Modules for robot agents

│ │ ├─ backends/ .. Backend implementations for different services for agents

│ │ ├─ language/ .. Language based agents modules

│ │ ├─ motion/ .... Motion based agents modules

│ │ └─ sense/ ..... Sensory, e.g. audio, processing modules

│ ├─ data/ ......... Data handling and processing

│ ├─ hardware/ ..... Hardware interface and interaction

│ └─ types/ ........ Common types and definitions

└─ tests/ .............. Unit tests

We welcome issues, questions and PRs. See the contributing guide for more information.