Compact Transformers

Preprint Link: Escaping the Big Data Paradigm with Compact Transformers

By Ali Hassani[1]*, Steven Walton[1]*, Nikhil Shah[1], Abulikemu Abuduweili[1], Jiachen Li[1,2], and Humphrey Shi[1,2,3]

*Ali Hassani and Steven Walton contributed equally.

In association with SHI Lab @ University of Oregon[1] and UIUC[2], and Picsart AI Research (PAIR)[3]

Other implementations & resources

[PyTorch blog]: check out our official blog post with PyTorch to learn more about our work and vision transformers in general.

[Keras]: check out Compact Convolutional Transformers on keras.io by Sayak Paul.

[vit-pytorch]: CCT is also available through Phil Wang's vit-pytorch; simply run pip install vit-pytorch.

Abstract

With the rise of Transformers as the standard for language processing, and their advancements in computer vision, along with their unprecedented size and amounts of training data, many have come to believe that they are not suitable for small sets of data. This trend leads to great concerns, including but not limited to: limited availability of data in certain scientific domains and the exclusion of those with limited resources from research in the field. In this paper, we dispel the myth that transformers are “data hungry” and therefore can only be applied to large sets of data. We show for the first time that with the right size and tokenization, transformers can perform head-to-head with state-of-the-art CNNs on small datasets, often with better accuracy and fewer parameters. Our model eliminates the requirement for a class token and positional embeddings through a novel sequence pooling strategy and the use of convolution/s. It is flexible in terms of model size, and can have as few as 0.28M parameters while achieving good results. Our model can reach 98.00% accuracy when training from scratch on CIFAR-10, which is a significant improvement over previous Transformer-based models. It also outperforms many modern CNN-based approaches, such as ResNet, and even some recent NAS-based approaches, such as Proxyless-NAS. Our simple and compact design democratizes transformers by making them accessible to those with limited computing resources and/or dealing with small datasets. Our method also works on larger datasets, such as ImageNet (82.71% accuracy with 29% of ViT's parameters), as well as on NLP tasks.

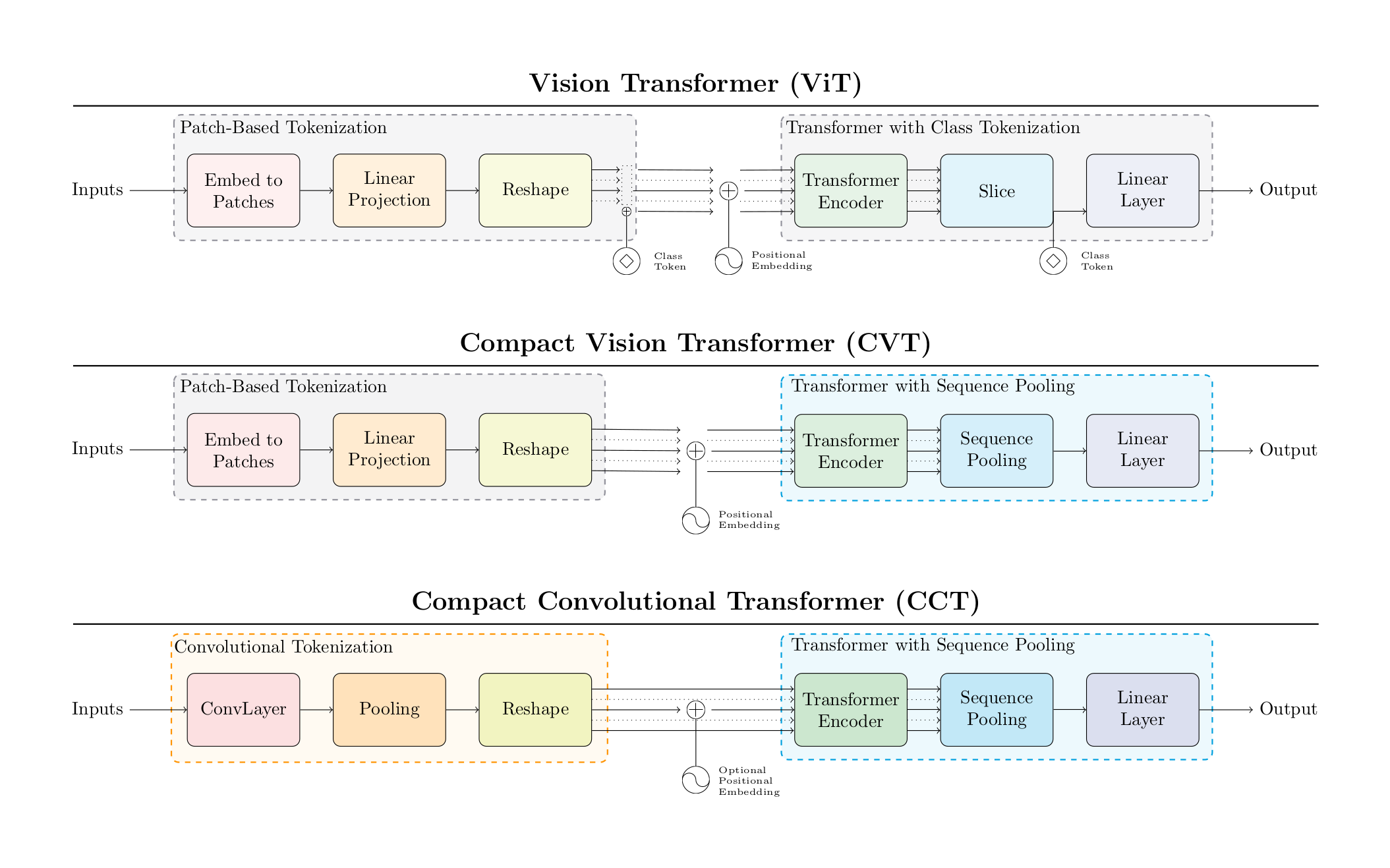

ViT-Lite: Lightweight ViT

Unlike ViT, we show that an image is not always worth 16x16 words and that the image patch size matters. Transformers are not in fact "data-hungry," as the ViT authors suggested, and smaller patches can be used to train efficiently on smaller datasets.
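The effect of patch size on small images shows up directly in the sequence length. The sketch below is plain arithmetic, not code from this repository (`num_patch_tokens` is a hypothetical helper): with ViT's 16x16 patches a 32x32 CIFAR image becomes only 4 tokens, while 4x4 patches give 64.

```python
def num_patch_tokens(img_size: int, patch_size: int) -> int:
    """Sequence length produced by non-overlapping square patches (ViT-style patching)."""
    if img_size % patch_size != 0:
        raise ValueError("img_size must be divisible by patch_size")
    per_side = img_size // patch_size
    return per_side * per_side


# Sequence length for a 32x32 CIFAR image at several patch sizes
for p in (16, 8, 4, 2):
    print(f"{p}x{p} patches -> {num_patch_tokens(32, p)} tokens")
# 16x16 patches -> 4 tokens
# 8x8 patches -> 16 tokens
# 4x4 patches -> 64 tokens
# 2x2 patches -> 256 tokens
```

With only a handful of tokens, self-attention has very little to work with, which is one way to read why smaller patches help on small images.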

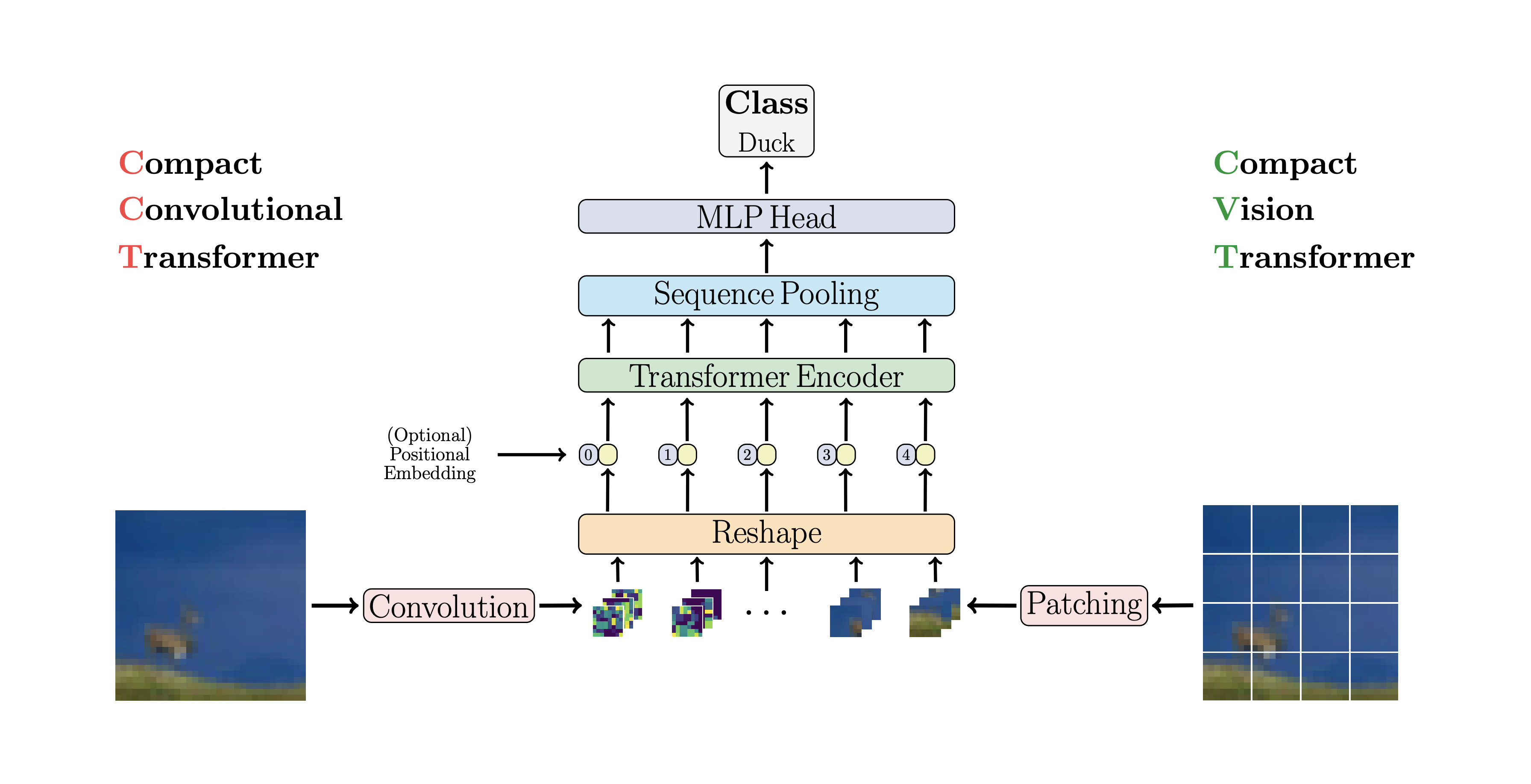

CVT: Compact Vision Transformers

Compact Vision Transformers make better use of the encoder output by applying Sequence Pooling after the encoder, eliminating the need for the class token while achieving better accuracy.
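Sequence Pooling can be sketched as attention-weighted averaging: a learned linear map scores each output token, a softmax over the sequence turns the scores into weights, and the pooled vector is the weighted sum of the tokens. The framework-free version below is only an illustration; `weight` and `bias` stand in for the learned layer (supplied by hand here), and the actual module in src/ is written in PyTorch and may differ in detail.

```python
import math
from typing import List, Sequence


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a 1D sequence of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def seq_pool(tokens: Sequence[Sequence[float]],
             weight: Sequence[float], bias: float = 0.0) -> List[float]:
    """Pool an (n, d) token sequence into one d-dim vector via learned attention weights."""
    # g(x): a linear map R^d -> R gives one importance score per token
    scores = [sum(w * x for w, x in zip(weight, tok)) + bias for tok in tokens]
    # Softmax over the sequence dimension turns scores into weights summing to 1
    attn = softmax(scores)
    # The weighted sum of tokens summarizes the sequence instead of a class token
    dim = len(tokens[0])
    return [sum(a * tok[j] for a, tok in zip(attn, tokens)) for j in range(dim)]


tokens = [[1.0, 0.0], [0.0, 1.0]]
print(seq_pool(tokens, weight=[0.0, 0.0]))  # equal scores -> [0.5, 0.5]
```

Because the weights are learned, the model can emphasize informative tokens rather than relying on a single extra token to aggregate the sequence.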

CCT: Compact Convolutional Transformers

Compact Convolutional Transformers not only use the sequence pooling but also replace the patch embedding with a convolutional embedding, allowing for better inductive bias and making positional embeddings optional. CCT achieves better accuracy than ViT-Lite and CVT and increases the flexibility of the input parameters.
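The convolutional tokenizer also determines the sequence length through its convolution and pooling strides. The sketch below applies the standard output-size formula floor((n + 2p - k) / s) + 1 per layer. It assumes each tokenizer block is a convolution followed by a 3x3 max pool with stride 2 and padding 1, and the stride/padding values in the example calls are assumptions too; check the CCT constructor in src/ for the actual defaults. Both function names are hypothetical.

```python
def conv_out(size: int, kernel: int, stride: int, padding: int) -> int:
    """Spatial output size of a convolution or pooling layer (dilation 1)."""
    return (size + 2 * padding - kernel) // stride + 1


def cct_num_tokens(img_size: int, kernel: int, stride: int, padding: int,
                   n_conv_layers: int, pool_kernel: int = 3,
                   pool_stride: int = 2, pool_padding: int = 1) -> int:
    """Tokens produced by a stack of (conv -> max pool) blocks on a square image.

    The pooling defaults are assumptions for illustration, not values read from src/.
    """
    size = img_size
    for _ in range(n_conv_layers):
        size = conv_out(size, kernel, stride, padding)                 # convolution
        size = conv_out(size, pool_kernel, pool_stride, pool_padding)  # max pooling
    # Flattening the final feature map gives one token per spatial position
    return size * size


print(cct_num_tokens(32, kernel=3, stride=1, padding=1, n_conv_layers=1))   # 256
print(cct_num_tokens(224, kernel=7, stride=2, padding=3, n_conv_layers=2))  # 196
```

Under these assumptions, a 3x1 tokenizer on 32x32 inputs yields a 16x16 grid (256 tokens), and a 7x2 tokenizer on 224x224 inputs yields a 14x14 grid (196 tokens).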

How to run

Install locally

Our base model is in pure PyTorch and Torchvision. No extra packages are required. Please refer to PyTorch's Getting Started page for detailed instructions.

Here are some of the models that can be imported from src (full list available in Variants.md):

| Model | Resolution | PE | Name | Pretrained Weights | Config |
|---|---|---|---|---|---|
| CCT-7/3x1 | 32x32 | Learnable | cct_7_3x1_32 | CIFAR-10/300 Epochs | pretrained/cct_7-3x1_cifar10_300epochs.yml |
| CCT-7/3x1 | 32x32 | Sinusoidal | cct_7_3x1_32_sine | CIFAR-10/5000 Epochs | pretrained/cct_7-3x1_cifar10_5000epochs.yml |
| CCT-7/3x1 | 32x32 | Learnable | cct_7_3x1_32_c100 | CIFAR-100/300 Epochs | pretrained/cct_7-3x1_cifar100_300epochs.yml |
| CCT-7/3x1 | 32x32 | Sinusoidal | cct_7_3x1_32_sine_c100 | CIFAR-100/5000 Epochs | pretrained/cct_7-3x1_cifar100_5000epochs.yml |
| CCT-7/7x2 | 224x224 | Sinusoidal | cct_7_7x2_224_sine | Flowers-102/300 Epochs | pretrained/cct_7-7x2_flowers102.yml |
| CCT-14/7x2 | 224x224 | Learnable | cct_14_7x2_224 | ImageNet-1k/300 Epochs | pretrained/cct_14-7x2_imagenet.yml |
| CCT-14/7x2 | 384x384 | Learnable | cct_14_7x2_384 | ImageNet-1k/Finetuned/30 Epochs | finetuned/cct_14-7x2_imagenet384.yml |
| CCT-14/7x2 | 384x384 | Learnable | cct_14_7x2_384_fl | Flowers-102/Finetuned/300 Epochs | finetuned/cct_14-7x2_flowers102.yml |

You can simply import the names provided in the Name column:

```python
from src import cct_14_7x2_384

model = cct_14_7x2_384(pretrained=True, progress=True)
```

The config files specify the training settings and hyperparameters, which makes the results easier to reproduce.

Please note that the models missing pretrained weights will be updated soon. They were previously trained using our old training script, and we're working on training them again with the new script for consistency.

You could even create your own models with different image resolutions, positional embeddings, and number of classes:

```python
from src import cct_14_7x2_384, cct_7_7x2_224_sine

model = cct_14_7x2_384(img_size=256)

model = cct_7_7x2_224_sine(img_size=256, positional_embedding='sine')
```

Changing the resolution and setting pretrained=True will interpolate the PE vector to support the new size, just like ViT.
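ViT adapts pretrained position embeddings to a new resolution by arranging them on their 2D token grid and interpolating. A framework-free sketch of that idea follows, assuming a square token grid and corner-aligned bilinear interpolation; the interpolation mode used in src/ is not stated here and may differ. Both function names are hypothetical.

```python
import math
from typing import List

Grid = List[List[float]]


def bilinear_resize(grid: Grid, out_h: int, out_w: int) -> Grid:
    """Bilinearly resize a 2D grid of scalars, aligning the corner values."""
    in_h, in_w = len(grid), len(grid[0])

    def src(i: int, n_out: int, n_in: int) -> float:
        # Map an output index to a fractional input coordinate
        return 0.0 if n_out == 1 else i * (n_in - 1) / (n_out - 1)

    out: Grid = []
    for i in range(out_h):
        y = src(i, out_h, in_h)
        y0 = int(y)
        y1 = min(y0 + 1, in_h - 1)
        dy = y - y0
        row = []
        for j in range(out_w):
            x = src(j, out_w, in_w)
            x0 = int(x)
            x1 = min(x0 + 1, in_w - 1)
            dx = x - x0
            top = grid[y0][x0] * (1 - dx) + grid[y0][x1] * dx
            bottom = grid[y1][x0] * (1 - dx) + grid[y1][x1] * dx
            row.append(top * (1 - dy) + bottom * dy)
        out.append(row)
    return out


def resize_pos_embedding(pe: List[List[float]], new_len: int) -> List[List[float]]:
    """Resize an (n, d) positional embedding laid out row-major on a square token grid."""
    old_side, new_side = math.isqrt(len(pe)), math.isqrt(new_len)
    if old_side * old_side != len(pe) or new_side * new_side != new_len:
        raise ValueError("both sequence lengths must be perfect squares")
    d = len(pe[0])
    # Interpolate each embedding channel independently as a 2D image
    channels = [
        bilinear_resize(
            [[pe[r * old_side + c][ch] for c in range(old_side)] for r in range(old_side)],
            new_side, new_side)
        for ch in range(d)
    ]
    # Flatten back to row-major token order
    return [[channels[ch][r][c] for ch in range(d)]
            for r in range(new_side) for c in range(new_side)]


print(bilinear_resize([[0.0, 1.0], [2.0, 3.0]], 3, 3))
# [[0.0, 0.5, 1.0], [1.0, 1.5, 2.0], [2.0, 2.5, 3.0]]
```

For example, resize_pos_embedding(pe, 256) maps a 196-token (14x14) embedding onto a 16x16 grid. Interpolating on the 2D grid, rather than along the flattened sequence, keeps spatially neighbouring positions close in the new embedding.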

These models are also based on experiments in the paper. You can create your own versions:

```python
from src import cct_14

model = cct_14(arch='custom', pretrained=False, progress=False, kernel_size=5, n_conv_layers=3)
```

You can even go further and create your own custom variant by importing the class CCT.

All of these apply to CVT and ViT as well.

Training

timm is recommended for image classification training and required for the training script provided in this repository:

Distributed training

```bash
./dist_classification.sh $NUM_GPUS -c $CONFIG_FILE /path/to/dataset
```

You can use our training configurations provided in configs/:

```bash
./dist_classification.sh 8 -c configs/imagenet.yml --model cct_14_7x2_224 /path/to/ImageNet
```

Non-distributed training

```bash
python train.py -c configs/datasets/cifar10.yml --model cct_7_3x1_32 /path/to/cifar10
```

Models and config files

We've updated this repository and moved the previous training script and its associated checkpoints to examples/. The new training script is simply the timm training script. The checkpoints trained with it are linked in the Pretrained Weights column of the model table, and all hyperparameters are provided in configs/pretrained for models trained from scratch and in configs/finetuned for fine-tuned models.

Results

Type can be read in the format L/PxC, where L is the number of transformer layers, P is the patch/convolution size, and C (CCT only) is the number of convolutional layers.
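The notation is regular enough to parse mechanically. The helper below is a hypothetical convenience, not part of this repository; it splits a Type string from the tables into its three fields.

```python
import re
from typing import NamedTuple, Optional


class ModelType(NamedTuple):
    layers: int                 # L: number of transformer layers
    size: int                   # P: patch size (ViT/CVT) or convolution kernel size (CCT)
    conv_layers: Optional[int]  # C: number of convolutional layers (CCT only)


_TYPE_RE = re.compile(r"(\d+)/(\d+)(?:x(\d+))?")


def parse_type(spec: str) -> ModelType:
    """Parse an L/PxC type string such as '14/7x2' or '12/16'."""
    m = _TYPE_RE.fullmatch(spec.strip())
    if m is None:
        raise ValueError(f"not an L/PxC type string: {spec!r}")
    layers, size, conv = m.groups()
    return ModelType(int(layers), int(size), int(conv) if conv is not None else None)


print(parse_type("14/7x2"))  # ModelType(layers=14, size=7, conv_layers=2)
print(parse_type("12/16"))   # ModelType(layers=12, size=16, conv_layers=None)
```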

CIFAR-10 and CIFAR-100

| Model | Pretraining | Epochs | PE | CIFAR-10 | CIFAR-100 |
|---|---|---|---|---|---|
| CCT-7/3x1 | None | 300 | Learnable | 96.53% | 80.92% |
| CCT-7/3x1 | None | 1500 | Sinusoidal | 97.48% | 82.72% |
| CCT-7/3x1 | None | 5000 | Sinusoidal | 98.00% | 82.87% |

Flowers-102

| Model | Pretraining | PE | Image Size | Accuracy |
|---|---|---|---|---|
| CCT-7/7x2 | None | Sinusoidal | 224x224 | 97.19% |
| CCT-14/7x2 | ImageNet-1k | Learnable | 384x384 | 99.76% |

ImageNet

| Model | Type | Resolution | Epochs | Top-1 Accuracy | # Params | MACs |
|---|---|---|---|---|---|---|
| ViT | 12/16 | 384 | 300 | 77.91% | 86.8M | 17.6G |
| CCT | 14/7x2 | 224 | 310 | 80.67% | 22.36M | 5.11G |
| CCT | 14/7x2 | 384 | 310 + 30 | 82.71% | 22.51M | 15.02G |

NLP

NLP results and instructions have been moved to nlp/.

Citation

```bibtex
@article{hassani2021escaping,
  title         = {Escaping the Big Data Paradigm with Compact Transformers},
  author        = {Ali Hassani and Steven Walton and Nikhil Shah and Abulikemu Abuduweili and Jiachen Li and Humphrey Shi},
  year          = 2021,
  url           = {https://arxiv.org/abs/2104.05704},
  eprint        = {2104.05704},
  archiveprefix = {arXiv},
  primaryclass  = {cs.CV}
}
```