2024/04/02: ✨✨✨SMPL & Rendering scripts released! Champ your dance videos now.💃🤸♂️🕺

2024/03/30: 🚀🚀🚀Watch this amazing video tutorial from Toy. It's based on the unofficial easy Champ ComfyUI without SMPL from Kijai🥳.

- System requirements: Ubuntu 20.04/Windows 11, CUDA 12.1

- Tested GPUs: A100, RTX3090

Clone Champ with the following command:

git clone --recurse-submodules https://github.com/fudan-generative-vision/champ

Create conda environment:

conda create -n champ python=3.10

conda activate champ

Install packages with pip:

pip install -r requirements.txt

Install packages with poetry:

If you want to run this project on a Windows device, we strongly recommend using poetry.

poetry install --no-root

Champ uses 4D-Humans to fit SMPL to the inputs. Please follow their Installation instructions to set it up, and their "Run demo on images" instructions to download the checkpoints. Note that Champ includes a fork in Champ/4D-Humans, so you don't need to clone the original repository.

Download the pretrained weights of the base models.

Download our checkpoints. They consist of the denoising UNet, guidance encoders, reference UNet, and motion module.

Finally, these pretrained models should be organized as follows:

./pretrained_models/
|-- champ
|   |-- denoising_unet.pth
|   |-- guidance_encoder_depth.pth
|   |-- guidance_encoder_dwpose.pth
|   |-- guidance_encoder_normal.pth
|   |-- guidance_encoder_semantic_map.pth
|   |-- reference_unet.pth
|   `-- motion_module.pth
|-- image_encoder
|   |-- config.json
|   `-- pytorch_model.bin
|-- DWPose
|   |-- dw-ll_ucoco_384.onnx
|   `-- yolox_l.onnx
|-- sd-vae-ft-mse
|   |-- config.json
|   |-- diffusion_pytorch_model.bin
|   `-- diffusion_pytorch_model.safetensors
`-- stable-diffusion-v1-5
    |-- feature_extractor
    |   `-- preprocessor_config.json
    |-- model_index.json
    |-- unet
    |   |-- config.json
    |   `-- diffusion_pytorch_model.bin
    `-- v1-inference.yaml
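Before running inference, it can be useful to confirm that the layout above is complete. The following is a minimal sketch, not part of the Champ repository: the helper name `missing_pretrained_files` is hypothetical, and the file list is copied literally from the listing above, so it assumes every listed file is required.

```python
from pathlib import Path

# Relative paths copied from the directory listing above (assumed to all be required).
EXPECTED_FILES = [
    "champ/denoising_unet.pth",
    "champ/guidance_encoder_depth.pth",
    "champ/guidance_encoder_dwpose.pth",
    "champ/guidance_encoder_normal.pth",
    "champ/guidance_encoder_semantic_map.pth",
    "champ/reference_unet.pth",
    "champ/motion_module.pth",
    "image_encoder/config.json",
    "image_encoder/pytorch_model.bin",
    "DWPose/dw-ll_ucoco_384.onnx",
    "DWPose/yolox_l.onnx",
    "sd-vae-ft-mse/config.json",
    "sd-vae-ft-mse/diffusion_pytorch_model.bin",
    "sd-vae-ft-mse/diffusion_pytorch_model.safetensors",
    "stable-diffusion-v1-5/feature_extractor/preprocessor_config.json",
    "stable-diffusion-v1-5/model_index.json",
    "stable-diffusion-v1-5/unet/config.json",
    "stable-diffusion-v1-5/unet/diffusion_pytorch_model.bin",
    "stable-diffusion-v1-5/v1-inference.yaml",
]


def missing_pretrained_files(root="./pretrained_models"):
    """Return the expected files that are not present under `root` (hypothetical helper)."""
    root = Path(root)
    return [rel for rel in EXPECTED_FILES if not (root / rel).is_file()]


if __name__ == "__main__":
    missing = missing_pretrained_files()
    if missing:
        print("Missing files:")
        for rel in missing:
            print("  " + rel)
    else:
        print("All expected pretrained files are present.")
```

Run it from the repository root. An empty result means every file in the listing was found.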

We have provided several sets of example data for inference. Please first download and place them in the example_data folder.

Here is the command for inference:

python inference.py --config configs/inference.yaml

If using poetry, the command is:

poetry run python inference.py --config configs/inference.yaml

Animation results will be saved in the results folder. You can change the reference image or the guidance motion by modifying inference.yaml.

You can also extract the driving motion from any video and then render it with Blender. We will later provide the instructions and scripts for this.

Note: The default motion-01 in inference.yaml has more than 500 frames and takes about 36 GB of VRAM. If you encounter VRAM issues, consider switching to other example data with fewer frames.

Try Champ with your dance videos! Setting up the environment may take some time. Follow the instructions step by step🐢 and report an issue when necessary.

Use ffmpeg to extract frames from a video. For example:

ffmpeg -i driving_videos/Video_1/Your_Video.mp4 -c:v png driving_videos/Video_1/images/%04d.png
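If you have several videos, the same ffmpeg call can be scripted. The sketch below is an illustration only and is not shipped with Champ: `build_ffmpeg_command` and `extract_frames` are hypothetical helper names, and it assumes ffmpeg is available on your PATH. It creates the output folder first, because ffmpeg does not create missing directories.

```python
import subprocess
from pathlib import Path


def build_ffmpeg_command(video_path, images_dir):
    """Build the frame-extraction command shown above as an argument list."""
    return [
        "ffmpeg",
        "-i", str(video_path),
        "-c:v", "png",
        str(Path(images_dir) / "%04d.png"),
    ]


def extract_frames(video_path, images_dir):
    """Create the output folder, then run ffmpeg (assumed to be on PATH)."""
    Path(images_dir).mkdir(parents=True, exist_ok=True)
    subprocess.run(build_ffmpeg_command(video_path, images_dir), check=True)


# Example call (requires ffmpeg and an existing video file):
# extract_frames("driving_videos/Video_1/Your_Video.mp4",
#                "driving_videos/Video_1/images")
```

Passing the arguments as a list avoids shell quoting problems when a path contains spaces.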

Please organize your driving videos and reference images like this:

|-- driving_videos
    |-- Video_1
        |-- images
            |-- 0000.png
            ...
            |-- 0020.png
            ...
    |-- Video_2
        |-- images
            |-- 0000.png
            ...
    ...
    |-- Video_n
|-- reference_imgs
    |-- images
        |-- your_ref_img_A.png
        |-- your_ref_img_B.png
        ...

Make sure you have organized the directory as above. Replace the paths in the following command with absolute paths:

python inference_smpl.py --reference_imgs_folder test_smpl/reference_imgs --driving_videos_folder test_smpl/driving_videos --device YOUR_GPU_ID

Once finished, you can check reference_imgs/visualized_imgs to see the overlay results. To better fit some extreme figures, you may also append --figure_scale to manually change the figure (or shape) of the predicted SMPL, from -10 (extremely fat) to 10 (extremely slim).
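If inference_smpl.py does not find your data, a quick check of the folder layout described above may help. The following sketch is not part of Champ: `summarize_layout` is a hypothetical helper, and it assumes frames and reference images are stored as .png files, as in the examples above.

```python
from pathlib import Path


def _png_names(folder):
    """Return sorted .png file names in `folder`, or an empty list if it is missing."""
    folder = Path(folder)
    if not folder.is_dir():
        return []
    return sorted(p.name for p in folder.glob("*.png"))


def summarize_layout(root):
    """Count frames per driving video and list reference images (hypothetical helper)."""
    root = Path(root).resolve()
    videos_dir = root / "driving_videos"
    frames_per_video = {}
    if videos_dir.is_dir():
        for video in sorted(p for p in videos_dir.iterdir() if p.is_dir()):
            frames_per_video[video.name] = len(_png_names(video / "images"))
    return {
        "root": str(root),  # absolute path, ready to paste into the command
        "frames_per_video": frames_per_video,
        "reference_images": _png_names(root / "reference_imgs" / "images"),
    }


if __name__ == "__main__":
    print(summarize_layout("test_smpl"))
```

A video that reports zero frames most likely has no images subfolder, or its frames were extracted elsewhere.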

TODO: Coming Soon.

Replace the paths in the following command with absolute paths:

python transfer_smpl.py --reference_path test_smpl/reference_imgs/smpl_results/ref.npy --driving_path test_smpl/driving_videos/Video_1 --output_folder test_smpl/transfer_result --figure_transfer --view_transfer

Append --figure_transfer when you want the result to match the reference SMPL's figure, and --view_transfer to transform the driving SMPL into the reference image's camera space.

First, install Blender on your server or PC.

Replace the paths in the following command with absolute paths:

blender smpl_rendering.blend --background --python rendering.py --driving_path test_smpl/transfer_result/smpl_results --reference_path test_smpl/reference_imgs/images/ref.png

This renders on the CPU by default. Append --device YOUR_GPU_ID to select a GPU for rendering.

Make sure you have finished SMPL rendering. Replace the path in the following command with an absolute path:

python inference_dwpose.py --imgs_path test_smpl/transfer_result --device YOUR_GPU_ID
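Since each step above asks for absolute paths, the four commands can be assembled from a single data root. This is a hedged sketch rather than an official Champ script: `build_pipeline_commands` is a hypothetical helper, the flags are copied from the commands above, and it assumes your data root is laid out like test_smpl in those examples. It only prints the commands and does not run them.

```python
import shlex
from pathlib import Path


def build_pipeline_commands(root, video="Video_1", ref="ref", device="0"):
    """Return the four commands from this guide with absolute paths (hypothetical helper)."""
    root = Path(root).resolve()
    ref_imgs = root / "reference_imgs"
    driving = root / "driving_videos"
    transfer = root / "transfer_result"
    return [
        # 1. Fit SMPL to the reference images and driving videos.
        ["python", "inference_smpl.py",
         "--reference_imgs_folder", str(ref_imgs),
         "--driving_videos_folder", str(driving),
         "--device", device],
        # 2. Transfer the driving SMPL to the reference figure and camera.
        ["python", "transfer_smpl.py",
         "--reference_path", str(ref_imgs / "smpl_results" / f"{ref}.npy"),
         "--driving_path", str(driving / video),
         "--output_folder", str(transfer),
         "--figure_transfer", "--view_transfer"],
        # 3. Render with Blender (CPU by default, per the note above).
        ["blender", "smpl_rendering.blend", "--background",
         "--python", "rendering.py",
         "--driving_path", str(transfer / "smpl_results"),
         "--reference_path", str(ref_imgs / "images" / f"{ref}.png")],
        # 4. Run DWPose on the rendered results.
        ["python", "inference_dwpose.py",
         "--imgs_path", str(transfer),
         "--device", device],
    ]


if __name__ == "__main__":
    for cmd in build_pipeline_commands("test_smpl"):
        print(shlex.join(cmd))
```

Review the printed commands before running them, and check the script locations in the repository, because this sketch does not know which working directory each script expects.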

We thank the authors of MagicAnimate, Animate Anyone, and AnimateDiff for their excellent work. Our project is built upon Moore-AnimateAnyone, 4D-Humans, and DWPose, and we are grateful for their open-source contributions.

Visit our roadmap to preview the future of Champ.

If you find our work useful for your research, please consider citing the paper:

@misc{zhu2024champ,

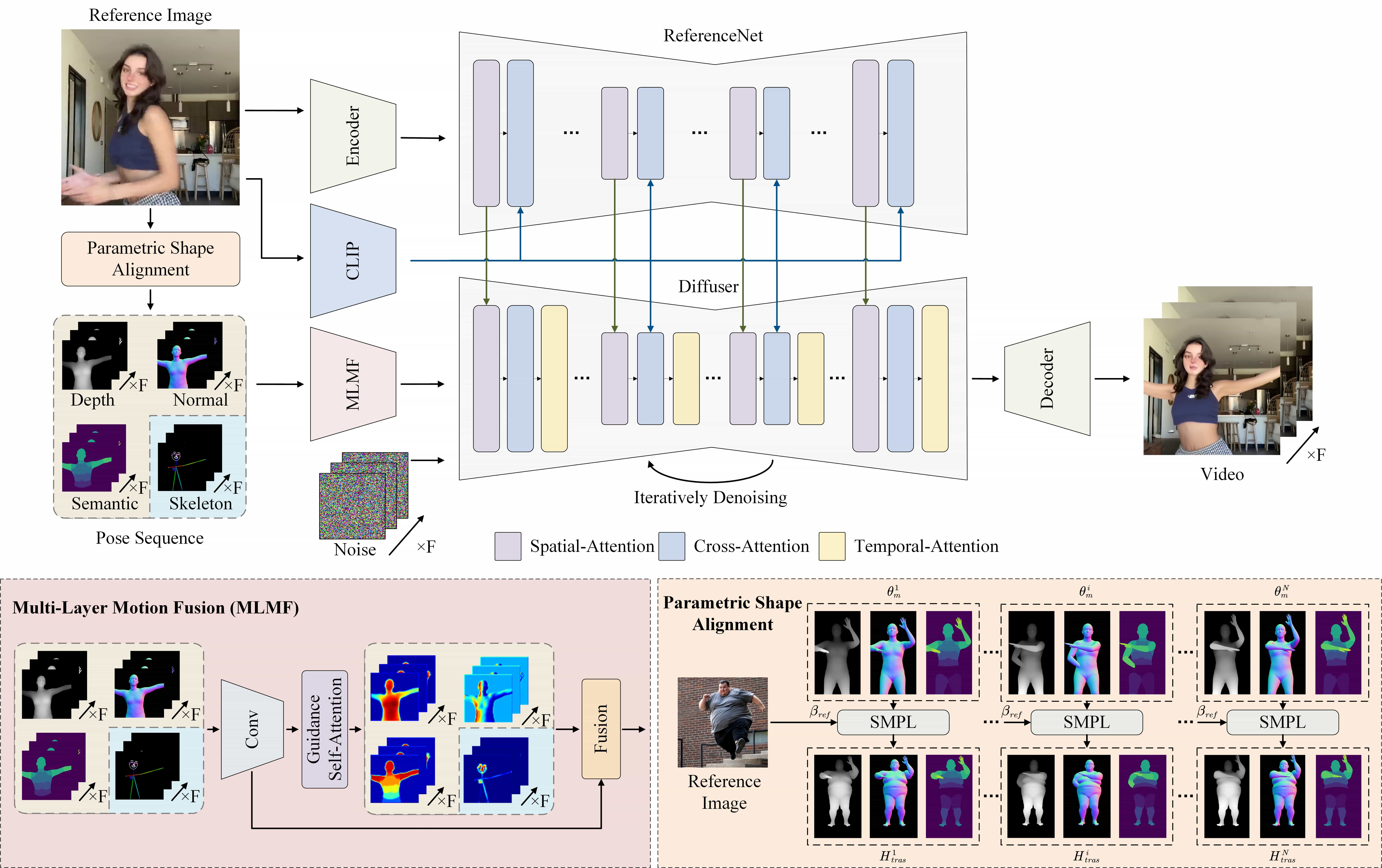

title={Champ: Controllable and Consistent Human Image Animation with 3D Parametric Guidance},

author={Shenhao Zhu and Junming Leo Chen and Zuozhuo Dai and Yinghui Xu and Xun Cao and Yao Yao and Hao Zhu and Siyu Zhu},

year={2024},

eprint={2403.14781},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

Multiple research positions are open at the Generative Vision Lab, Fudan University! These include:

- Research assistant

- Postdoctoral researcher

- PhD candidate

- Master's students

Interested individuals are encouraged to contact us at siyuzhu@fudan.edu.cn for further information.