
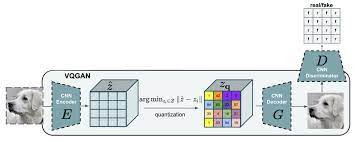

VQGAN, short for Vector Quantized Generative Adversarial Network, is a deep learning architecture for image generation. It combines a generative adversarial network (GAN) with vector quantization to produce high-quality, diverse, and controllable images. VQGAN represents image features in a discrete latent space: each encoder output vector is mapped to an entry of a learned codebook, which gives a compact and expressive encoding of visual information.
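To make the discrete latent space concrete, here is a minimal sketch of the quantization step: each feature vector is replaced by its nearest codebook entry. It is written in plain Python for readability and is not this repository's implementation; the function names and the toy codebook are assumptions chosen for illustration.

```python
def squared_distance(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def quantize(features, codebook):
    """Map each feature vector to its nearest codebook entry.

    Returns the chosen codebook indices and the quantized vectors.
    """
    indices = []
    for vec in features:
        distances = [squared_distance(vec, code) for code in codebook]
        indices.append(distances.index(min(distances)))
    quantized = [codebook[i] for i in indices]
    return indices, quantized


# Toy example: a 3-entry codebook of 2-dimensional vectors (hypothetical values).
codebook = [[0.0, 0.0], [1.0, 1.0], [-1.0, 2.0]]
features = [[0.9, 1.2], [0.1, -0.2], [-0.8, 1.7]]

indices, quantized = quantize(features, codebook)
print(indices)    # [1, 0, 2]
print(quantized)  # [[1.0, 1.0], [0.0, 0.0], [-1.0, 2.0]]
```

In a trained model the codebook is learned and the feature vectors come from the encoder; the list of indices is the discrete representation of the image.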

Install the required libraries:

pip install torch tqdm numpy albumentations matplotlib

Change the argument values (dataset, batch size, etc.) in the training_vqgan.py file, then run that file.
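As a rough illustration of the kind of arguments you would adjust, the sketch below defines a few training options with `argparse`. The option names and defaults here are hypothetical and may not match what training_vqgan.py actually defines, so check that file for the real names.

```python
import argparse


def build_parser():
    """Build a parser with hypothetical training options (names are assumptions)."""
    parser = argparse.ArgumentParser(description="Hypothetical VQGAN training options")
    parser.add_argument("--dataset-path", type=str, default="./data",
                        help="Folder containing the training images")
    parser.add_argument("--batch-size", type=int, default=8,
                        help="Number of images per training step")
    parser.add_argument("--epochs", type=int, default=100,
                        help="Number of passes over the dataset")
    return parser


# Parse an explicit list so the example runs without command-line input.
args = build_parser().parse_args(["--dataset-path", "./my_images", "--batch-size", "4"])
print(args.dataset_path, args.batch_size, args.epochs)
```

Options that are not passed keep their defaults, so only the values you want to change need to be set.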

- Implementing 1st phase of the paper

- Implementing transformer architecture for 2nd phase

- Adding another VQGAN model with prompts or masked images

See the open issues for a full list of proposed features (and known issues).

@misc{esser2021taming,
  title={Taming Transformers for High-Resolution Image Synthesis},
  author={Patrick Esser and Robin Rombach and Björn Ommer},
  year={2021},
  eprint={2012.09841},
  archivePrefix={arXiv},
  primaryClass={cs.CV}
}