This is a Genetic Algorithm implementation written in Python. The goal is to provide a Genetic Algorithm that is quick to set up and easy to use. This implementation introduces several new features that I came up with while working with it, such as support for stochastic problems and new types of offspring creation.

    GeneticAlgorithm = GA(
        generations_count=100,        # number of simulated generations
        population_count=200,         # number of individuals per generation
        function=function,            # function to optimize
        params_bounds=bounds,         # variable bounds for each argument
        fitness_threshold=None,       # fitness value that triggers early stopping
        maximize=False,               # True to maximize the function output, False to minimize it
        floating_point=True,          # data type of the variables
        stochastic=False,             # set to True if the function to optimize is stochastic
        stochastic_iterations=3,      # for stochastic functions, number of evaluations per individual (>=3)
        allow_gene_duplication=True,  # allow the same genes to be evaluated several times
        crossover_percentage=0.3,     # percentage of the population reproduced by crossover
        mutation_percentage=0.7       # percentage of the population reproduced by mutation
    )

    GeneticAlgorithm.evolve(verbose=1)

Runs a solution search. `verbose` sets the verbosity mode: 0 = progress bar, 1 = generation number and best stats, 2 = same as 1, with a console clear between generations.
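The `stochastic` and `stochastic_iterations` options evaluate each individual several times when the function is noisy. The sketch below shows one plausible way to combine those evaluations, by taking their mean. It is not taken from this library: `noisy_sphere` and `averaged_fitness` are hypothetical names, and both the use of the mean and the convention of passing genes as separate arguments are assumptions.

```python
import random
import statistics


def noisy_sphere(x, y):
    """Hypothetical stochastic objective: a sphere function plus Gaussian noise."""
    return x**2 + y**2 + random.gauss(0.0, 0.1)


def averaged_fitness(function, genes, iterations=3):
    """Evaluate `function` on the same genes several times and return the mean.

    Assumption: the mean is used as the aggregate; the library may aggregate
    repeated evaluations differently.
    """
    if iterations < 1:
        raise ValueError("iterations must be at least 1")
    samples = [function(*genes) for _ in range(iterations)]
    return statistics.mean(samples)


if __name__ == "__main__":
    random.seed(0)
    # The noise-free value at (1.0, 2.0) is 5.0; averaging reduces the scatter.
    print(averaged_fitness(noisy_sphere, (1.0, 2.0), iterations=3))
```

Averaging more evaluations gives a steadier fitness estimate at the cost of proportionally more function calls per generation.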

    GeneticAlgorithm.get_best()

Returns the best solution.

    GeneticAlgorithm.get_best_fitness()

Returns the fitness of the best solution.

    GeneticAlgorithm.get_evolution_time()

Returns the search time in seconds.

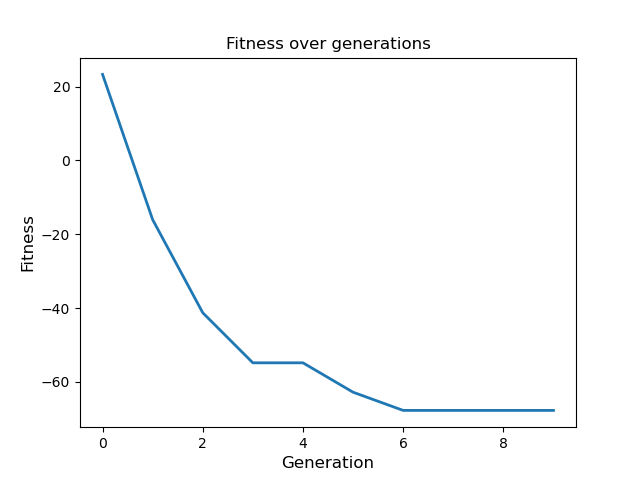

    GeneticAlgorithm.plot_learning_curve(
        title='Fitness over generations',
        xlabel='Generation',
        ylabel='Fitness',
        font_size=12,
        line_width=2,
        save_dir=None,
        image_name='learning_curve.png'
    )

Plots the learning curve and returns the fitness array. If `save_dir` is `None`, the image is not saved.

    GeneticAlgorithm.get_searched_list()

Returns a list of the searched solutions. Available only if `allow_gene_duplication=False`.
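To illustrate why a list of searched solutions goes together with `allow_gene_duplication=False`, here is a minimal sketch of evaluating each distinct set of genes only once and remembering the result. This is an assumption about the general idea, not the library's actual code: `evaluate_unique`, `counted_sum` and the dictionary layout are hypothetical.

```python
def evaluate_unique(function, population, searched):
    """Evaluate each distinct individual once.

    `searched` maps a tuple of genes to its fitness and is updated in place,
    so it doubles as a record of every solution searched so far.
    """
    fitnesses = []
    for genes in population:
        key = tuple(genes)
        if key not in searched:
            # First time these genes are seen: run the (possibly costly) function.
            searched[key] = function(*key)
        fitnesses.append(searched[key])
    return fitnesses


calls = []


def counted_sum(x, y):
    """Hypothetical objective that records every call it receives."""
    calls.append((x, y))
    return x + y


searched = {}
population = [(1, 2), (3, 4), (1, 2)]  # the last individual is a duplicate
fitnesses = evaluate_unique(counted_sum, population, searched)

print(fitnesses)       # [3, 7, 3]
print(len(calls))      # 2
print(list(searched))  # [(1, 2), (3, 4)]
```

Skipping repeated evaluations pays off mainly when the function is expensive and deterministic; for a stochastic function, re-evaluating the same genes can still carry information.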

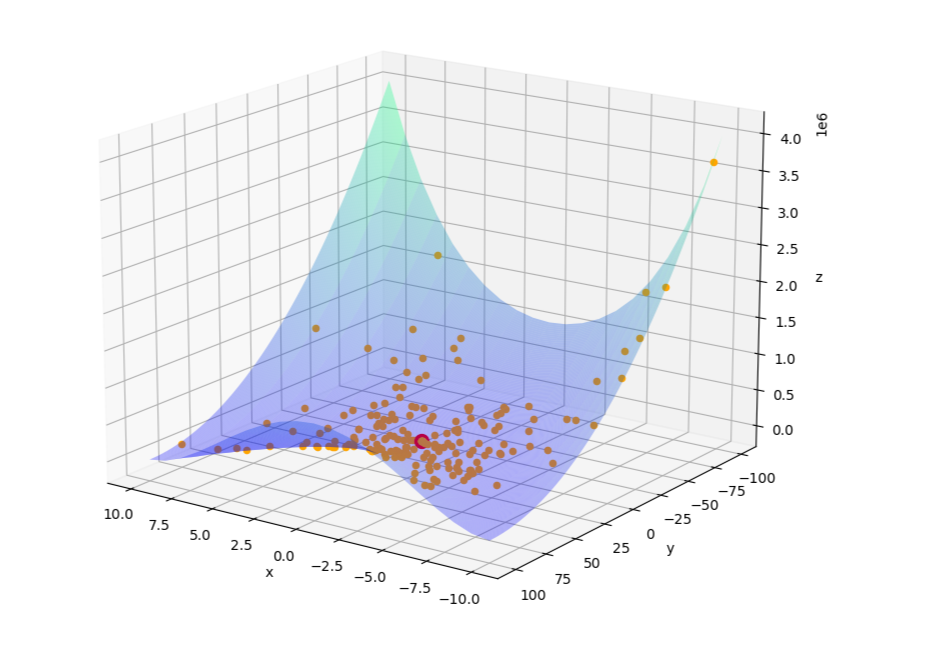

The example script tests the genetic algorithm on a popular optimization benchmark, the Rosenbrock function. It runs a basic optimization with a relatively small population and a small number of generations.
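For reference, the two-variable Rosenbrock function in its standard form (a = 1, b = 100) can be written as below. The `bounds` value is only an assumed layout, one `(low, high)` pair per argument; the format that `params_bounds` actually expects is not stated here, and the search range is chosen for illustration.

```python
A = 1.0
B = 100.0


def rosenbrock(x, y):
    """Two-variable Rosenbrock function with its global minimum of 0 at (A, A**2)."""
    return (A - x) ** 2 + B * (y - x**2) ** 2


# Assumed layout: one (low, high) pair per argument of the function.
bounds = [(-2.0, 2.0), (-1.0, 3.0)]

print(rosenbrock(1.0, 1.0))  # 0.0, the global minimum
print(rosenbrock(0.0, 0.0))  # 1.0
```

Because the goal is to find the minimum, this function corresponds to a run with `maximize=False`.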

Sample learning curve:

After the evolution is complete, the searched solutions are plotted on the function plane. The best solution is marked as a big red dot.