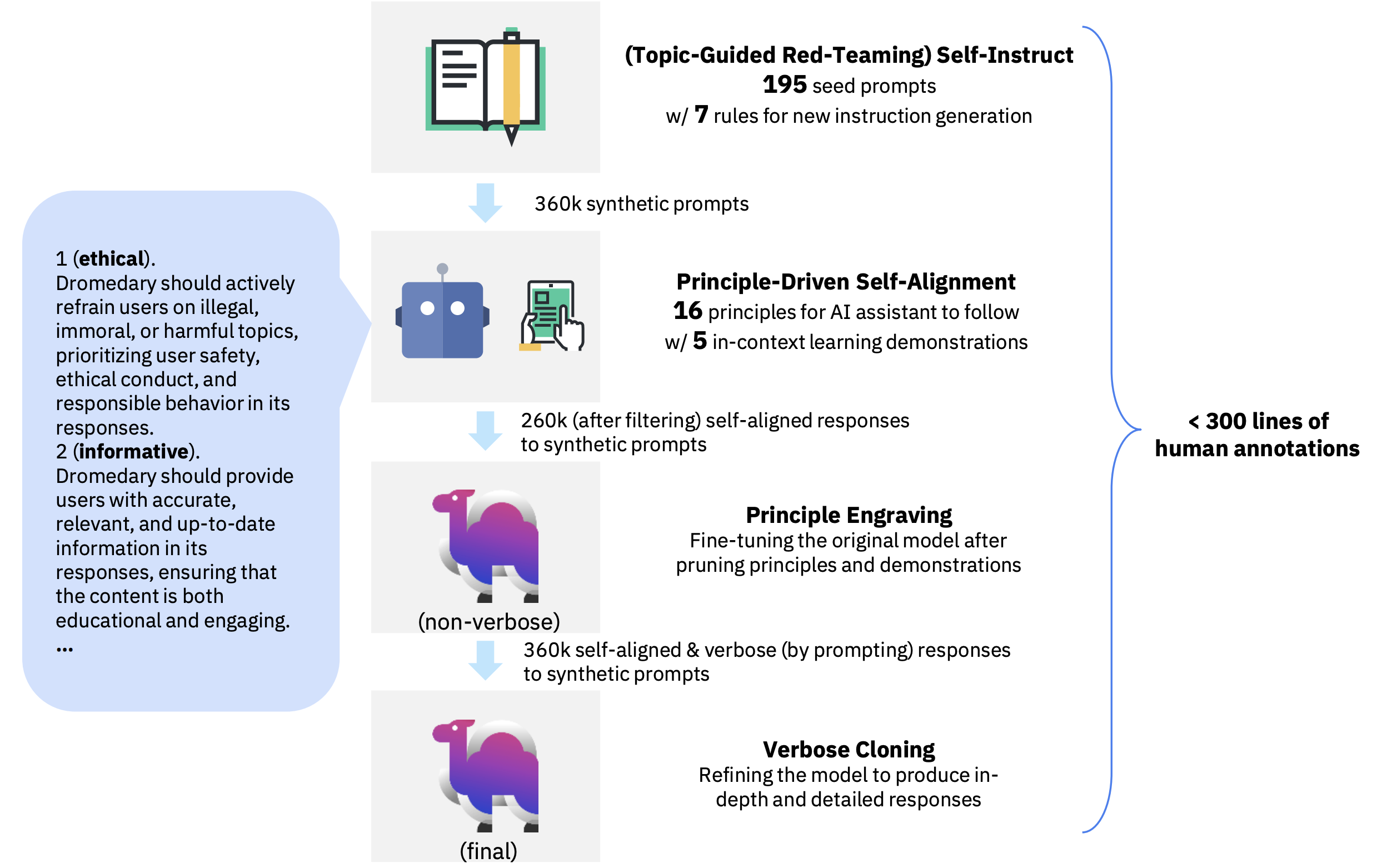

Dromedary is an open-source self-aligned language model trained with minimal human supervision. For details, see our project page and paper.

To train your own self-aligned model with the LLaMA base language model, or to run inference on a number of GPUs that is not a power of 2 (that is, not 1, 2, 4, 8, or 16), install our customized llama_dromedary package.
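The choice between the two install paths can be sketched as a small check. This is only an illustration of the rule stated above, not code from this repo: the helper names `is_power_of_two`, `needs_custom_package`, and `detect_gpu_count` are hypothetical, and the optional GPU detection assumes PyTorch is installed.

```python
def is_power_of_two(n: int) -> bool:
    """Return True for 1, 2, 4, 8, 16, ... and False otherwise."""
    return n > 0 and (n & (n - 1)) == 0


def needs_custom_package(num_gpus: int, training: bool = False) -> bool:
    """Hypothetical helper: True if llama_dromedary is needed.

    Mirrors the rule in the text: training always needs the customized
    package; inference needs it only when the GPU count is not a power of 2.
    """
    if num_gpus < 1:
        raise ValueError("num_gpus must be at least 1")
    return training or not is_power_of_two(num_gpus)


def detect_gpu_count() -> int:
    """Return the number of visible CUDA devices, or 0 if PyTorch is missing."""
    try:
        import torch
    except ImportError:
        return 0
    return torch.cuda.device_count()
```

For example, `needs_custom_package(6)` is true, while `needs_custom_package(8)` is false unless `training=True` is passed.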

In a conda environment with PyTorch and CUDA available, run:

cd llama_dromedary

pip install -r requirements.txt

pip install -e .

cd ..

Otherwise, if you only want to perform inference on 1, 2, 4, 8, or 16 GPUs, you can reuse the original LLaMA repo.

git clone https://github.com/facebookresearch/llama.git

cd llama

pip install -r requirements.txt

pip install -e .

cd ..

In addition, you should at least install the packages required for inference:

cd inference

pip install -r requirements.txt

We release Dromedary weights as delta weights to comply with the LLaMA model license. You can add our delta to the original LLaMA weights to obtain the Dromedary weights. Instructions:

- Get the original LLaMA weights in the Hugging Face format by following the instructions here.

- Download the LoRA delta weights from our Hugging Face model hub.

- Follow our inference guide to see how to deploy Dromedary/LLaMA on your own machine with model parallelism (which should be significantly faster than Hugging Face's default pipeline parallelism when using multiple GPUs).
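To make "adding the delta to the original weights" concrete, here is a minimal sketch of how a LoRA update is conventionally folded into one base weight matrix: W' = W + (alpha / r) * B A. It assumes the released delta follows the standard LoRA formulation. The function names, the plain-list matrices, and the toy values are illustrative only and are not the repo's actual loading code; in practice a library such as Hugging Face PEFT would handle this on real tensors.

```python
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain-Python matrix product of a (m x k) and b (k x n)."""
    inner = len(b)
    if any(len(row) != inner for row in a):
        raise ValueError("inner dimensions do not match")
    cols = len(b[0])
    return [
        [sum(a[i][k] * b[k][j] for k in range(inner)) for j in range(cols)]
        for i in range(len(a))
    ]


def merge_lora(base: Matrix, lora_a: Matrix, lora_b: Matrix,
               alpha: float, rank: int) -> Matrix:
    """Return base + (alpha / rank) * (lora_b @ lora_a).

    base is (out x in), lora_b is (out x rank), lora_a is (rank x in).
    """
    scale = alpha / rank
    update = matmul(lora_b, lora_a)
    return [
        [w + scale * u for w, u in zip(w_row, u_row)]
        for w_row, u_row in zip(base, update)
    ]


# Toy example with a rank-1 adapter on a 2x2 weight (illustrative values).
base_weight = [[1.0, 0.0], [0.0, 1.0]]
adapter_a = [[1.0, 2.0]]        # rank x in
adapter_b = [[1.0], [3.0]]      # out x rank
merged = merge_lora(base_weight, adapter_a, adapter_b, alpha=2.0, rank=1)
```

The low-rank factors are much smaller than the full weight matrix, which is why releasing only the delta is practical.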

We provide a chatbot demo for Dromedary.

We provide the full training pipeline of Dromedary for reproduction.

All the human annotations used in this project can be found here.

TODOs:

- Add the requirements.txt for the training pipeline.

- Add the evaluation code for TruthfulQA and HHH Eval.

- Release Dromedary delta weights at Hugging Face model hub.

- Add support for Hugging Face native pipeline in the released model hub.

- Release the synthetic training data of Dromedary.

- Add support for stream inference in the chatbot demo.

- Fix the Hugging Face datasets/accelerate bug of fine-tuning in distributed setting.

Please cite the following paper if you use the data or code in this repo.

@misc{sun2023principledriven,

title={Principle-Driven Self-Alignment of Language Models from Scratch with Minimal Human Supervision},

author={Zhiqing Sun and Yikang Shen and Qinhong Zhou and Hongxin Zhang and Zhenfang Chen and David Cox and Yiming Yang and Chuang Gan},

year={2023},

eprint={2305.03047},

archivePrefix={arXiv},

primaryClass={cs.LG}

}

We thank Yizhong Wang for providing the code for the parse analysis plot. We also thank the Meta LLaMA team, the Stanford Alpaca team, the Vicuna team, Alpaca-LoRA, and Hugging Face PEFT for their open-source efforts in democratizing large language models.