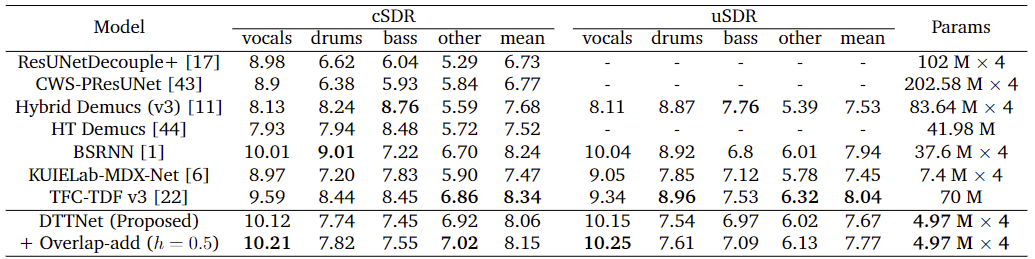

A PyTorch implementation of the ICASSP 2024 paper: Dual-Path TFC-TDF UNet for Music Source Separation. DTTNet achieves 10.12 dB cSDR on vocals with 86% fewer parameters than BSRNN (SOTA).

Links to our paper:

- arXiv (Accepted Version): https://arxiv.org/abs/2309.08684

- IEEE Xplore (Published Version): https://ieeexplore.ieee.org/document/10448020

- Overlap-add is switched on by default. Comment out the values of the key `overlap_add` in `configs\infer` and `configs\evaluation` to switch it off, and the inference time will be 4x faster.
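To illustrate the trade-off, here is a minimal, dependency-free sketch of overlap-add inference. It is not the repository's implementation: the function name `overlap_add_apply`, the Hann-shaped taper, and the hop of one quarter of the chunk are assumptions, chosen so that the overlapped run needs roughly four times as many model calls, which would be consistent with the 4x figure above.

```python
import math


def overlap_add_apply(mix, separate, chunk_size, hop_size):
    """Run `separate` on overlapping chunks and blend the outputs.

    `separate` stands in for the model: it takes a list of samples and
    returns a list of the same length. Returns (estimate, number_of_calls).
    """
    if not 0 < hop_size <= chunk_size:
        raise ValueError("hop_size must be in (0, chunk_size]")
    n = len(mix)
    # Number of chunks needed so that every input sample is covered.
    n_chunks = max(1, math.ceil(max(n - chunk_size, 0) / hop_size) + 1)
    padded_len = (n_chunks - 1) * hop_size + chunk_size
    padded = list(mix) + [0.0] * (padded_len - n)

    # Hann-shaped taper that never reaches zero, so the weights stay positive.
    window = [0.5 - 0.5 * math.cos(2 * math.pi * (k + 1) / (chunk_size + 1))
              for k in range(chunk_size)]

    out = [0.0] * padded_len
    weight = [0.0] * padded_len
    for i in range(n_chunks):
        start = i * hop_size
        est = separate(padded[start:start + chunk_size])
        for k in range(chunk_size):
            out[start + k] += est[k] * window[k]
            weight[start + k] += window[k]

    # Normalise by the accumulated window weight and drop the padding.
    return [o / w for o, w in zip(out[:n], weight[:n])], n_chunks


if __name__ == "__main__":
    mix = [math.sin(0.01 * t) for t in range(1000)]
    identity = lambda seg: seg  # placeholder for a real separation model
    _, calls_overlap = overlap_add_apply(mix, identity, 256, 64)
    _, calls_plain = overlap_add_apply(mix, identity, 256, 256)
    print(calls_overlap, calls_plain)
```

With `hop_size` equal to `chunk_size` the chunks no longer overlap, so the number of calls to `separate` drops by roughly the overlap factor, at the cost of possible discontinuities at chunk boundaries.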

- Download MUSDB18HQ from https://sigsep.github.io/datasets/musdb.html

- (Optional) Edit the validation_set in configs/datamodule/musdb_dev14.yaml

- Create Miniconda/Anaconda environment

conda env create -f conda_env_gpu.yaml -n DTT

source /root/miniconda3/etc/profile.d/conda.sh

conda activate DTT

pip install -r requirements.txt

export PYTHONPATH=$PYTHONPATH:$(pwd) # for Windows, replace the 'export' with 'set'

- Edit the .env file according to the instructions. It is recommended to use wandb to manage the logs.

cp .env.example .env

vim .env
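After editing, a quick sanity check can catch variables that were left empty. The sketch below is a hypothetical helper, not part of the repository. It assumes that `.env` uses plain `KEY=VALUE` lines and that `PROJECT_ROOT` and `LOG_DIR` (the names used in the output paths in this README) are among the variables it defines.

```python
def parse_env(text):
    """Parse plain KEY=VALUE lines, skipping blanks and # comments."""
    values = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Strip optional surrounding quotes from the value.
        values[key.strip()] = value.strip().strip("'\"")
    return values


def missing_keys(text, required=("PROJECT_ROOT", "LOG_DIR")):
    """Return the required keys that are absent or empty.

    The default key names are assumptions; adjust them to match .env.example.
    """
    values = parse_env(text)
    return [key for key in required if not values.get(key)]


if __name__ == "__main__":
    with open(".env", encoding="utf-8") as handle:
        print("missing:", missing_keys(handle.read()))
```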

Once these settings are configured, you only need to run the following commands in later sessions to set up the environment:

source /root/miniconda3/etc/profile.d/conda.sh

conda activate DTT

- Download checkpoints from either:

- Run code

python run_infer.py model=vocals ckpt_path=xxxxx mixture_path=xxxx

The files will be saved under the folder PROJECT_ROOT\infer\songname_suffix\

Parameter Options:

- model=vocals, model=bass, model=drums, model=other
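To separate all four stems of one mixture, the command above can be generated once per model. The following is a minimal sketch under stated assumptions: `build_infer_commands` is a hypothetical helper, and the checkpoint and mixture paths are placeholders that you supply. It only builds the argument lists and does not run anything.

```python
import sys

# The four model options listed above.
STEMS = ("vocals", "bass", "drums", "other")


def build_infer_commands(mixture_path, ckpt_paths):
    """Build one run_infer.py command per stem that has a checkpoint.

    `ckpt_paths` maps a stem name to the path of its checkpoint file.
    """
    unknown = set(ckpt_paths) - set(STEMS)
    if unknown:
        raise ValueError(f"unknown stems: {sorted(unknown)}")
    commands = []
    for stem in STEMS:
        if stem not in ckpt_paths:
            continue
        commands.append([
            sys.executable, "run_infer.py",
            f"model={stem}",
            f"ckpt_path={ckpt_paths[stem]}",
            f"mixture_path={mixture_path}",
        ])
    return commands
```

Each returned list can be passed to `subprocess.run(cmd, check=True)` from the project root, after the environment has been activated.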

Change pool_workers in configs\evaluation. You can set it to the number of cores in your CPU.
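If you are unsure how many cores the machine has, the Python standard library can report it. This is a small sketch; `suggested_pool_workers` is a hypothetical name, and the value it returns counts logical cores, which may be higher than the number of physical cores.

```python
import os


def suggested_pool_workers():
    """Return a worker count equal to the number of logical CPU cores."""
    # os.cpu_count() can return None when the count cannot be determined.
    return os.cpu_count() or 1


if __name__ == "__main__":
    print(f"pool_workers: {suggested_pool_workers()}")
```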

export ckpt_path=xxx # for Windows, replace the 'export' with 'set'

python run_eval.py model=vocals logger.wandb.name=xxxx

# or if you don't want to use logger

python run_eval.py model=vocals logger=[]

The result will be saved as eval.csv under the folder LOG_DIR\basename(ckpt_path)_suffix

Parameter Options:

- model=vocals, model=bass, model=drums, model=other
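For intuition about the chunk-level SDR (cSDR) quoted at the top of this README, here is a simplified sketch: the signal-to-distortion ratio is computed per chunk and the median is taken. It is an illustration only. It omits the distortion filtering used by BSS Eval, it is not necessarily what `run_eval.py` computes, and the function names and the silent-chunk rule are assumptions.

```python
import math
from statistics import median


def sdr_db(reference, estimate, eps=1e-10):
    """Plain SDR in dB: 10 * log10(signal energy / error energy)."""
    signal = sum(r * r for r in reference)
    error = sum((r - e) ** 2 for r, e in zip(reference, estimate))
    return 10.0 * math.log10((signal + eps) / (error + eps))


def chunked_sdr_db(reference, estimate, chunk_len):
    """Median SDR over non-overlapping chunks of `chunk_len` samples."""
    scores = []
    for start in range(0, len(reference) - chunk_len + 1, chunk_len):
        ref = reference[start:start + chunk_len]
        est = estimate[start:start + chunk_len]
        if any(ref):  # skip chunks where the reference is silent
            scores.append(sdr_db(ref, est))
    if not scores:
        raise ValueError("no non-silent chunks to score")
    return median(scores)
```

Taking the median rather than the mean keeps a few very bad or very good chunks from dominating the score.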

Note that you will need:

- 1 TB disk space for data augmentation.

  - Otherwise, edit `configs/datamodule/musdb18_hq.yaml` so that: `aug_params=[]`. This will train the model without data augmentation.

- 2 A40 (48 GB). Or equivalently, 4 RTX 3090 (24 GB).

  - Otherwise, edit `configs/experiment/vocals_dis.yaml` so that:

    - `datamodule.batch_size` is smaller

    - `trainer.devices: 1`

    - `model.bn_norm: BN`

    - delete `trainer.sync_batchnorm`
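Before starting data augmentation, you can check whether the target drive meets the disk requirement above. This is a minimal sketch: `has_free_space` is a hypothetical helper, and it assumes that 1 TB means 10^12 bytes.

```python
import shutil

ONE_TB = 10**12  # decimal terabyte; an assumption about the stated requirement


def has_free_space(path, required_bytes=ONE_TB):
    """Return True if the filesystem containing `path` has enough free space."""
    return shutil.disk_usage(path).free >= required_bytes


if __name__ == "__main__":
    # "." is a placeholder; point this at the drive that holds the dataset.
    if has_free_space("."):
        print("Enough free space for data augmentation.")
    else:
        print("Less than 1 TB free; consider training without augmentation.")
```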

python demos/split_dataset.py # data partition

# install aug tools

sudo apt-get update

sudo apt-get install soundstretch

mkdir /root/autodl-tmp/tmp

# perform augmentation

python src/utils/data_augmentation.py --data_dir /root/autodl-tmp/musdb18hq/

python train.py experiment=vocals_dis datamodule=musdb_dev14 trainer=default

# or if you don't want to use logger

python train.py experiment=vocals_dis datamodule=musdb_dev14 trainer=default logger=[]

The 5 best models will be saved under LOG_DIR\dtt_vocals_suffix\checkpoints

# edit api_key and path

python src/utils/pick_best.py

git checkout bespoke

- TFC-TDF UNet

- BandSplitRNN

- fast-reid (Sync BN)

- Zero_Shot_Audio_Source_Separation (overlap-add)

@INPROCEEDINGS{chen_dttnet_2024,

author={Chen, Junyu and Vekkot, Susmitha and Shukla, Pancham},

booktitle={ICASSP 2024 - 2024 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP)},

title={Music Source Separation Based on a Lightweight Deep Learning Framework (DTTNET: DUAL-PATH TFC-TDF UNET)},

year={2024},

volume={},

number={},

pages={656-660},

keywords={Deep learning;Time-frequency analysis;Source separation;Target tracking;Convolution;Market research;Acoustics;source separation;music;audio;dual-path;deep learning},

doi={10.1109/ICASSP48485.2024.10448020}}