📘Documentation | 🛠️Installation | 👀Model Zoo | 🚀Awesome DETR | 🆕News | 🤔Reporting Issues

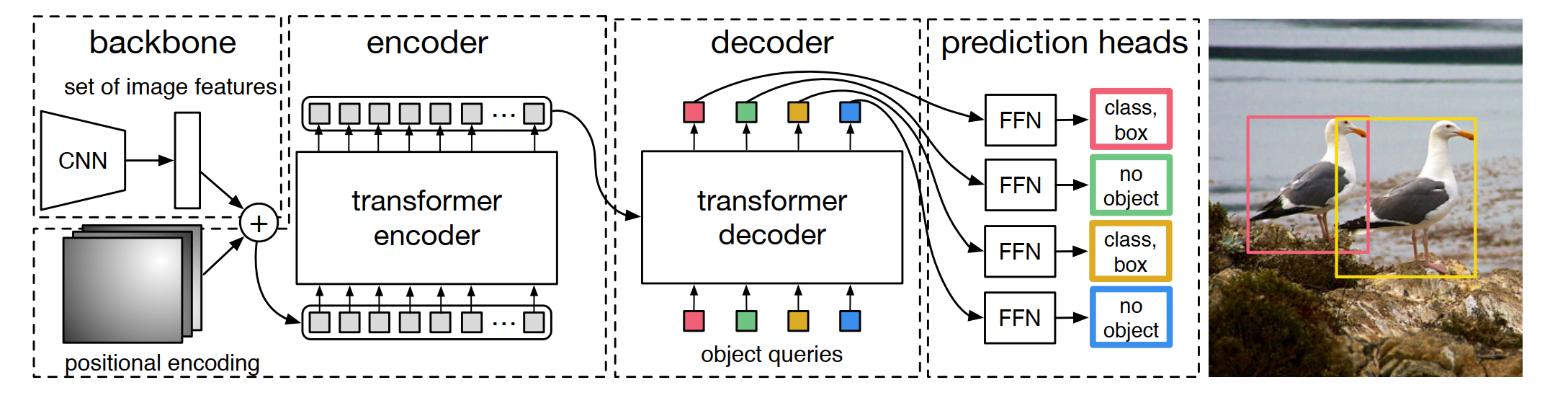

detrex is an open-source toolbox that provides state-of-the-art Transformer-based detection algorithms. It is built on top of Detectron2, and its module design is partially borrowed from MMDetection and DETR. Many thanks for their nicely organized code. The main branch works with PyTorch 1.10 or higher (we recommend PyTorch 1.12).
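As a rough illustration of the version requirement stated above, the sketch below checks a version string against the 1.10 minimum. The helper names `parse_major_minor` and `meets_minimum` are hypothetical and not part of detrex; the code only assumes that the version string starts with `major.minor`, as PyTorch version strings such as `1.12.1+cu113` do.

```python
import re
from typing import Tuple

MIN_VERSION = (1, 10)  # minimum PyTorch version stated in this README


def parse_major_minor(version: str) -> Tuple[int, int]:
    """Extract (major, minor) from a string such as '1.12.1+cu113'."""
    match = re.match(r"(\d+)\.(\d+)", version)
    if match is None:
        raise ValueError(f"unrecognized version string: {version!r}")
    return int(match.group(1)), int(match.group(2))


def meets_minimum(version: str, minimum: Tuple[int, int] = MIN_VERSION) -> bool:
    """Return True if the version is at least the given minimum.

    Comparing integer tuples avoids the string-comparison pitfall
    where '1.9' would sort after '1.10'.
    """
    return parse_major_minor(version) >= minimum
```

In an environment where PyTorch is installed, the check could be applied as `meets_minimum(torch.__version__)`.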

Major Features

- Modular Design. detrex decomposes the Transformer-based detection framework into various components which help users easily build their own customized models.

- State-of-the-art Methods. detrex provides a series of Transformer-based detection algorithms, including DINO which reached the SOTA of DETR-like models with 63.3 AP!

- Easy to Use. detrex is designed to be light-weight and easy for users to use:

  - LazyConfig System for more flexible syntax and cleaner config files.

  - Light-weight training engine modified from detectron2 lazyconfig_train_net.py
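To give a feel for why a lazy config system allows flexible syntax, here is a minimal, self-contained sketch of the underlying idea: a config records which object to build and with which arguments, and construction is deferred until requested. This is not the detectron2 or detrex implementation; `LazyNode`, `build`, `Backbone`, and `Detector` are hypothetical names used only for illustration (in detectron2, the corresponding tools are `LazyCall` and `instantiate` from `detectron2.config`).

```python
from dataclasses import dataclass


class LazyNode:
    """Records a callable and its keyword arguments without calling it."""

    def __init__(self, target, **kwargs):
        self.target = target
        self.kwargs = kwargs


def build(node):
    """Recursively construct the object described by a LazyNode."""
    if not isinstance(node, LazyNode):
        return node  # plain values pass through unchanged
    kwargs = {name: build(value) for name, value in node.kwargs.items()}
    return node.target(**kwargs)


@dataclass
class Backbone:  # hypothetical stand-in component
    depth: int


@dataclass
class Detector:  # hypothetical stand-in component
    backbone: Backbone
    num_queries: int


# The config is ordinary Python data: nothing is constructed yet.
model = LazyNode(Detector, backbone=LazyNode(Backbone, depth=50), num_queries=300)

# Override nested fields before anything is built.
model.kwargs["backbone"].kwargs["depth"] = 101
model.kwargs["num_queries"] = 900

detector = build(model)
print(detector)  # Detector(backbone=Backbone(depth=101), num_queries=900)
```

Because the config stays plain data until `build` is called, a derived config can change any nested argument without copying or rewriting the base definition.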

Apart from detrex, we also released a repo Awesome Detection Transformer to present papers about Transformer for detection and segmentation.

The repo name detrex has several interpretations:

- detr-ex : We take our hats off to DETR and regard this repo as an extension of Transformer-based detection algorithms.

- det-rex : rex literally means 'king' in Latin. We hope this repo can help advance the state of the art on object detection by providing the best Transformer-based detection algorithms from the research community.

- de-t.rex : de means 'the' in Dutch. T.rex, also called Tyrannosaurus Rex, means 'king of the tyrant lizards' and connects to our research work 'DINO', which is short for Dinosaur.

v0.2.1 was released on 01/02/2023:

- Support MaskDINO COCO instance segmentation.

- Support new DINO baselines: ViTDet-DINO, Focal-DINO.

- Support FocalNetBackbone.

- Add tutorial about downloading pretrained backbones, verify installation.

- Modified learning rate scheduler usage and add tutorial on customized scheduler.

- Add more readable logging information for criterion and matcher.

Please see changelog.md for details and release history.

Please refer to Installation Instructions for the details of installation.

Please refer to Getting Started with detrex for the basic usage of detrex. We also provide other tutorials for:

- Learn about the config system of detrex

- How to convert the pretrained weights from original detr repo into detrex format

- Visualize your training data and testing results on COCO dataset

- Analyze the model under detrex

- Download and initialize with the pretrained backbone weights

- Frequently asked questions

Please see documentation for full API documentation and tutorials.

Results and models are available in model zoo.

Supported methods

- DETR (ECCV'2020)

- Deformable-DETR (ICLR'2021 Oral)

- Conditional-DETR (ICCV'2021)

- DAB-DETR (ICLR'2022)

- DAB-Deformable-DETR (ICLR'2022)

- DN-DETR (CVPR'2022 Oral)

- DN-Deformable-DETR (CVPR'2022 Oral)

- DINO (ICLR'2023)

- Group-DETR (ArXiv'2022)

- H-Deformable-DETR (ArXiv'2022)

- MaskDINO (ArXiv'2022)

Please see projects for the details about projects that are built based on detrex.

This project is released under the Apache 2.0 license.

- detrex is an open-source toolbox for Transformer-based detection algorithms created by researchers of IDEACVR. We appreciate all contributions to detrex!

- detrex is built based on Detectron2 and part of its module design is borrowed from MMDetection, DETR, and Deformable-DETR.

If you use this toolbox in your research or wish to refer to the baseline results published here, please use the following BibTeX entries:

Citation List

detrex project:

@misc{ideacvr2022detrex,

author = {detrex contributors},

title = {detrex: An Research Platform for Transformer-based Object Detection Algorithms},

howpublished = {\url{https://github.com/IDEA-Research/detrex}},

year = {2022}

}

relevant publications:

@inproceedings{carion2020end,

title={End-to-end object detection with transformers},

author={Carion, Nicolas and Massa, Francisco and Synnaeve, Gabriel and Usunier, Nicolas and Kirillov, Alexander and Zagoruyko, Sergey},

booktitle={European conference on computer vision},

pages={213--229},

year={2020},

organization={Springer}

}

@article{zhu2020deformable,

title={Deformable DETR: Deformable Transformers for End-to-End Object Detection},

author={Zhu, Xizhou and Su, Weijie and Lu, Lewei and Li, Bin and Wang, Xiaogang and Dai, Jifeng},

journal={arXiv preprint arXiv:2010.04159},

year={2020}

}

@inproceedings{meng2021-CondDETR,

title = {Conditional DETR for Fast Training Convergence},

author = {Meng, Depu and Chen, Xiaokang and Fan, Zejia and Zeng, Gang and Li, Houqiang and Yuan, Yuhui and Sun, Lei and Wang, Jingdong},

booktitle = {Proceedings of the IEEE International Conference on Computer Vision (ICCV)},

year = {2021}

}

@inproceedings{

liu2022dabdetr,

title={{DAB}-{DETR}: Dynamic Anchor Boxes are Better Queries for {DETR}},

author={Shilong Liu and Feng Li and Hao Zhang and Xiao Yang and Xianbiao Qi and Hang Su and Jun Zhu and Lei Zhang},

booktitle={International Conference on Learning Representations},

year={2022},

url={https://openreview.net/forum?id=oMI9PjOb9Jl}

}

@inproceedings{li2022dn,

title={Dn-detr: Accelerate detr training by introducing query denoising},

author={Li, Feng and Zhang, Hao and Liu, Shilong and Guo, Jian and Ni, Lionel M and Zhang, Lei},

booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},

pages={13619--13627},

year={2022}

}

@misc{zhang2022dino,

title={DINO: DETR with Improved DeNoising Anchor Boxes for End-to-End Object Detection},

author={Hao Zhang and Feng Li and Shilong Liu and Lei Zhang and Hang Su and Jun Zhu and Lionel M. Ni and Heung-Yeung Shum},

year={2022},

eprint={2203.03605},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

@article{chen2022group,

title={Group DETR: Fast DETR Training with Group-Wise One-to-Many Assignment},

author={Chen, Qiang and Chen, Xiaokang and Wang, Jian and Feng, Haocheng and Han, Junyu and Ding, Errui and Zeng, Gang and Wang, Jingdong},

journal={arXiv preprint arXiv:2207.13085},

year={2022}

}

@article{jia2022detrs,

title={DETRs with Hybrid Matching},

author={Jia, Ding and Yuan, Yuhui and He, Haodi and Wu, Xiaopei and Yu, Haojun and Lin, Weihong and Sun, Lei and Zhang, Chao and Hu, Han},

journal={arXiv preprint arXiv:2207.13080},

year={2022}

}

@misc{li2022mask,

title={Mask DINO: Towards A Unified Transformer-based Framework for Object Detection and Segmentation},

author={Feng Li and Hao Zhang and Huaizhe Xu and Shilong Liu and Lei Zhang and Lionel M. Ni and Heung-Yeung Shum},

year={2022},

eprint={2206.02777},

archivePrefix={arXiv},

primaryClass={cs.CV}

}