continuous-eval is an open-source package created for granular and holistic evaluation of GenAI application pipelines.

-

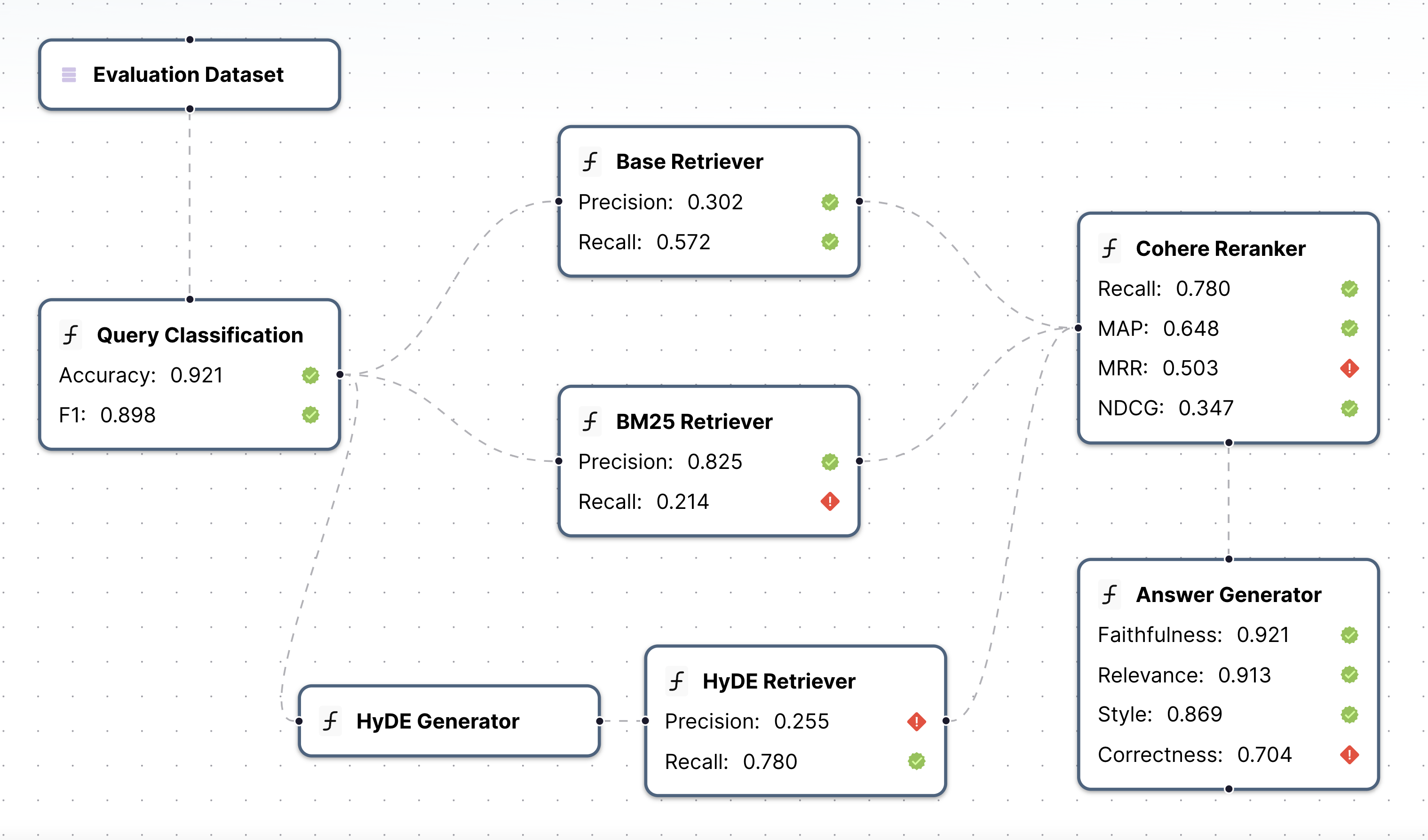

Modularized Evaluation: Measure each module in the pipeline with tailored metrics.

-

Comprehensive Metric Library: Covers Retrieval-Augmented Generation (RAG), Code Generation, Agent Tool Use, Classification and a variety of other LLM use cases. Mix and match Deterministic, Semantic and LLM-based metrics.

-

Leverage User Feedback in Evaluation: Easily build a close-to-human ensemble evaluation pipeline with mathematical guarantees.

-

Synthetic Dataset Generation: Generate large-scale synthetic dataset to test your pipeline.

This code is provided as a PyPI package. To install it, run the following command:

    python3 -m pip install continuous-eval

If you want to install from source:

    git clone https://github.com/relari-ai/continuous-eval.git && cd continuous-eval
    poetry install --all-extras

To run LLM-based metrics, the code requires at least one LLM API key in .env. Take a look at the example env file .env.example.
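A quick pre-flight check can catch a missing key before an LLM-based metric fails at call time. The sketch below parses `.env`-style text with the standard library and reports whether at least one key is set. The key names in `CANDIDATE_KEYS` are assumptions for illustration; `.env.example` is the authoritative list.

```python
from typing import Dict, Iterable

# Assumed key names for illustration only; check .env.example for the real ones.
CANDIDATE_KEYS = ("OPENAI_API_KEY", "ANTHROPIC_API_KEY")


def parse_env(text: str) -> Dict[str, str]:
    """Parse KEY=VALUE lines, skipping blank lines and # comments."""
    env: Dict[str, str] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        env[key.strip()] = value.strip().strip("'\"")
    return env


def has_any_key(env: Dict[str, str], candidates: Iterable[str] = CANDIDATE_KEYS) -> bool:
    """Return True if at least one candidate key has a non-empty value."""
    return any(env.get(name) for name in candidates)


# Inline sample standing in for the contents of a real .env file.
sample = """
# LLM provider keys
OPENAI_API_KEY="sk-placeholder"
ANTHROPIC_API_KEY=
"""
env = parse_env(sample)
print(has_any_key(env))
```

In practice you would read the text from your own `.env` file instead of the inline `sample` string.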

Here's how you run a single metric on a datum. Check all available metrics here: link

    from continuous_eval.metrics.retrieval import PrecisionRecallF1

    datum = {
        "question": "What is the capital of France?",
        "retrieved_context": [
            "Paris is the capital of France and its largest city.",
            "Lyon is a major city in France.",
        ],
        "ground_truth_context": ["Paris is the capital of France."],
        "answer": "Paris",
        "ground_truths": ["Paris"],
    }

    metric = PrecisionRecallF1()
    print(metric(**datum))

| Module | Category | Metrics |
|---|---|---|
| Retrieval | Deterministic | PrecisionRecallF1, RankedRetrievalMetrics |
| | LLM-based | LLMBasedContextPrecision, LLMBasedContextCoverage |
| Text Generation | Deterministic | DeterministicAnswerCorrectness, DeterministicFaithfulness, FleschKincaidReadability |
| | Semantic | DebertaAnswerScores, BertAnswerRelevance, BertAnswerSimilarity |
| | LLM-based | LLMBasedFaithfulness, LLMBasedAnswerCorrectness, LLMBasedAnswerRelevance, LLMBasedStyleConsistency |
| Classification | Deterministic | ClassificationAccuracy |
| Code Generation | Deterministic | CodeStringMatch, PythonASTSimilarity |
| | LLM-based | LLMBasedCodeGeneration |
| Agent Tools | Deterministic | ToolSelectionAccuracy |
| Custom | | Define your own metrics |

To define your own metrics, you only need to extend the Metric class and implement the __call__ method.

Optional methods are batch (to implement optimizations for batch processing, where possible) and aggregate (to aggregate metric results over multiple samples).
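As a rough illustration of that shape, the sketch below defines a hypothetical `ExactMatchPrecisionRecall` metric with `__call__`, `batch` and `aggregate`. The `Metric` class here is a local stand-in written so the example runs on its own; it is not the library's base class, and the default behaviors shown for `batch` and `aggregate` are assumptions. In real use you would subclass the library's `Metric` instead.

```python
from typing import Any, Dict, List


class Metric:
    """Local stand-in for the library's Metric base class (an assumption)."""

    def __call__(self, **kwargs: Any) -> Dict[str, float]:
        raise NotImplementedError

    def batch(self, samples: List[Dict[str, Any]]) -> List[Dict[str, float]]:
        # No batch optimization here: score the samples one by one.
        return [self(**sample) for sample in samples]

    def aggregate(self, results: List[Dict[str, float]]) -> Dict[str, float]:
        # Mean of each reported value across samples.
        if not results:
            return {}
        return {k: sum(r[k] for r in results) / len(results) for k in results[0]}


class ExactMatchPrecisionRecall(Metric):
    """Hypothetical metric: chunk-level precision/recall via exact string match."""

    def __call__(
        self,
        retrieved_context: List[str],
        ground_truth_context: List[str],
        **kwargs: Any,  # ignore other datum fields such as "question"
    ) -> Dict[str, float]:
        retrieved = set(retrieved_context)
        relevant = set(ground_truth_context)
        hits = len(retrieved & relevant)
        precision = hits / len(retrieved) if retrieved else 0.0
        recall = hits / len(relevant) if relevant else 0.0
        return {"precision": precision, "recall": recall}


metric = ExactMatchPrecisionRecall()
samples = [
    {"retrieved_context": ["a", "b"], "ground_truth_context": ["a"]},
    {"retrieved_context": ["c"], "ground_truth_context": ["c", "d"]},
]
results = metric.batch(samples)
summary = metric.aggregate(results)
print(results)
print(summary)
```

Exact string matching is deliberately strict and is used here only to keep the arithmetic easy to follow; the built-in retrieval metrics may match chunks differently.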

Define modules in your pipeline and select corresponding metrics.

    from continuous_eval.eval import Module, ModuleOutput, Pipeline, Dataset
    from continuous_eval.metrics.retrieval import PrecisionRecallF1, RankedRetrievalMetrics
    from continuous_eval.metrics.generation.text import (
        DeterministicAnswerCorrectness,
        FleschKincaidReadability,
    )
    from typing import List, Dict

    dataset = Dataset("dataset_folder")

    # Simple 3-step RAG pipeline with Retriever->Reranker->Generation
    retriever = Module(
        name="Retriever",
        input=dataset.question,
        output=List[str],
        eval=[
            PrecisionRecallF1().use(
                retrieved_context=ModuleOutput(),
                ground_truth_context=dataset.ground_truth_context,
            ),
        ],
    )

    reranker = Module(
        name="reranker",
        input=retriever,
        output=List[Dict[str, str]],
        eval=[
            RankedRetrievalMetrics().use(
                retrieved_context=ModuleOutput(),
                ground_truth_context=dataset.ground_truth_context,
            ),
        ],
    )

    llm = Module(
        name="answer_generator",
        input=reranker,
        output=str,
        eval=[
            FleschKincaidReadability().use(answer=ModuleOutput()),
            DeterministicAnswerCorrectness().use(
                answer=ModuleOutput(), ground_truth_answers=dataset.ground_truths
            ),
        ],
    )

    pipeline = Pipeline([retriever, reranker, llm], dataset=dataset)
    print(pipeline.graph_repr())  # optional: visualize the pipeline

Now you can run the evaluation on your pipeline:

    eval_manager.start_run()
    while eval_manager.is_running():
        if eval_manager.curr_sample is None:
            break
        q = eval_manager.curr_sample["question"]  # get the question or any other field
        # run your pipeline ...
        eval_manager.next_sample()

To log the results, call the eval_manager.log method with the module name and the output, for example:

    eval_manager.log("answer_generator", response)

The evaluator manager also offers:

- eval_manager.run_metrics() to run all the metrics defined in the pipeline

- eval_manager.run_tests() to run the tests defined in the pipeline (see the documentation for more details)
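To show where the `log` calls might sit inside the loop, here is a self-contained sketch that logs one output per module for every sample. `FakeEvalManager` is a hypothetical stand-in that mimics only the method names used above so the example runs without the package; `retrieve`, `rerank` and `generate` are placeholder functions for your own pipeline code, and their return types are simplified.

```python
from typing import Any, Dict, List, Optional


class FakeEvalManager:
    """Hypothetical stand-in exposing only the method names used above."""

    def __init__(self, samples: List[Dict[str, Any]]) -> None:
        self._samples = samples
        self._idx = 0
        self._running = False
        self.logs: List[Dict[str, Any]] = []

    def start_run(self) -> None:
        self._idx, self._running, self.logs = 0, True, []

    def is_running(self) -> bool:
        return self._running

    @property
    def curr_sample(self) -> Optional[Dict[str, Any]]:
        return self._samples[self._idx] if self._idx < len(self._samples) else None

    def next_sample(self) -> None:
        self._idx += 1
        if self._idx >= len(self._samples):
            self._running = False

    def log(self, module: str, output: Any) -> None:
        self.logs.append({"sample": self._idx, "module": module, "output": output})


# Placeholder pipeline stages; replace with your own retriever, reranker and LLM.
def retrieve(question: str) -> List[str]:
    return [f"doc about {question}", "unrelated doc"]


def rerank(docs: List[str]) -> List[str]:
    return sorted(docs, key=len)


def generate(question: str, docs: List[str]) -> str:
    return f"Answer to {question} based on: {docs[0]}"


eval_manager = FakeEvalManager([{"question": "q1"}, {"question": "q2"}])
eval_manager.start_run()
while eval_manager.is_running():
    if eval_manager.curr_sample is None:
        break
    q = eval_manager.curr_sample["question"]
    docs = retrieve(q)
    eval_manager.log("Retriever", docs)  # names match the Module definitions
    ranked = rerank(docs)
    eval_manager.log("reranker", ranked)
    answer = generate(q, ranked)
    eval_manager.log("answer_generator", answer)
    eval_manager.next_sample()

print(len(eval_manager.logs))
```

The module names passed to `log` match the `name` values given to each `Module`, which is presumably how logged outputs are tied back to the metrics defined for that module.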

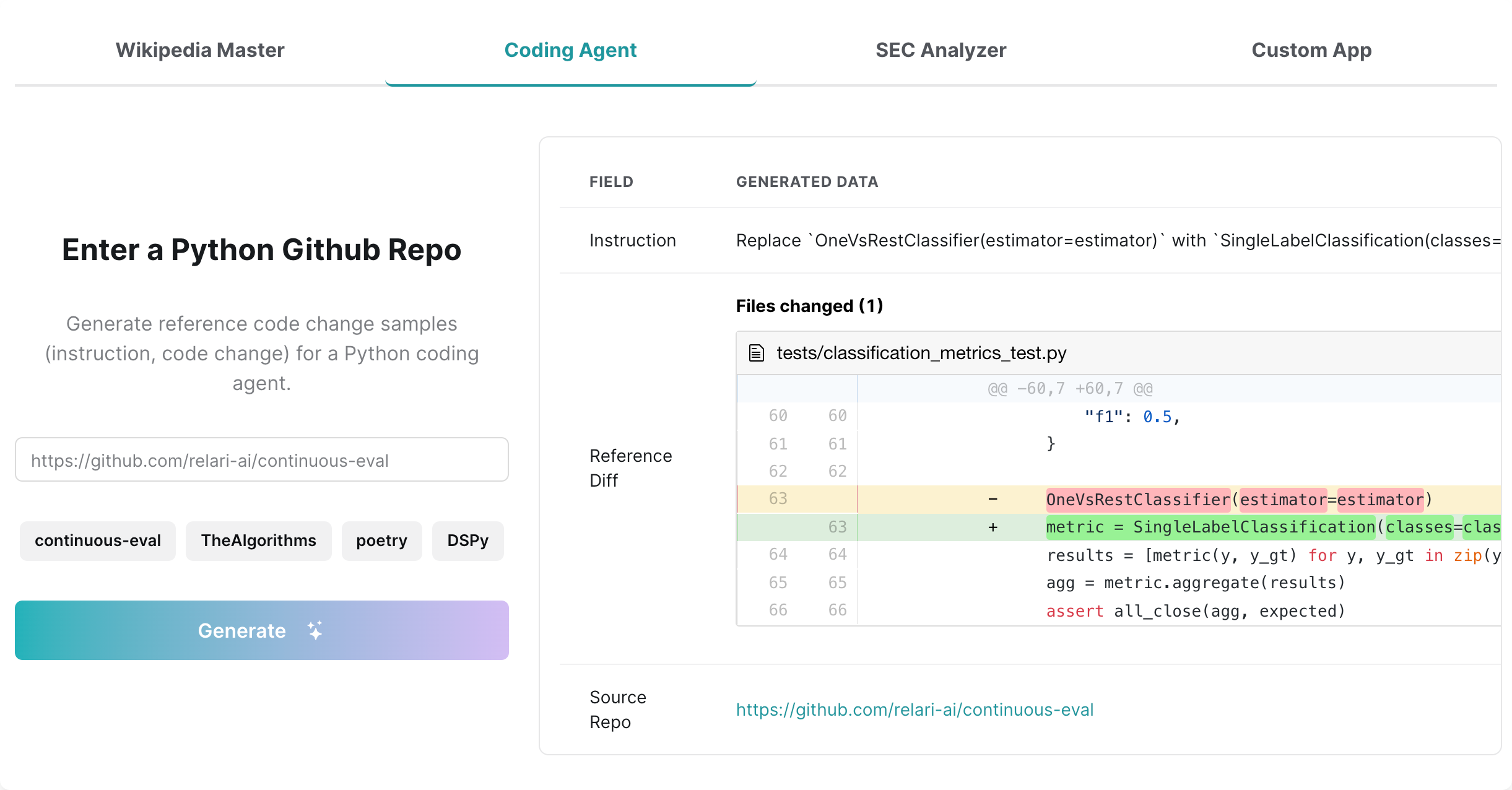

Ground truth data, or reference data, is important for evaluation as it can offer a comprehensive and consistent measurement of system performance. However, it is often costly and time-consuming to manually curate such a golden dataset. We have created a synthetic data pipeline that can generate custom user interaction data for a variety of use cases such as RAG, agents, and copilots. The generated data can serve as a starting point for a golden dataset for evaluation or for training purposes. Below is an example for Coding Agents. Try out this demo: Synthetic Data Demo

Interested in contributing? Contributions to the continuous-eval core, as well as integrations that build on the core, are both accepted and highly encouraged! See our Contribution Guide for more details.

- Docs: link

- Examples Repo: end-to-end example repo

- Blog Posts:

  - Practical Guide to RAG Pipeline Evaluation: Part 1: Retrieval, Part 2: Generation

  - How important is a Golden Dataset for LLM evaluation? (link)

  - How to evaluate complex GenAI Apps: a granular approach (link)

- Discord: Join our community of LLM developers Discord

- Reach out to founders: Email or Schedule a chat

This project is licensed under the Apache 2.0 License. See the LICENSE file for details.

We monitor basic anonymous usage statistics to understand our users' preferences, inform new features, and identify areas that might need improvement. You can take a look at exactly what we track in the telemetry code.

To disable usage tracking, set the CONTINUOUS_EVAL_DO_NOT_TRACK flag to true.
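One way to set the flag from Python is sketched below. It assumes the flag is read from the process environment, which this document does not state explicitly, so the variable should be set before the package is imported.

```python
import os

# Assumption: CONTINUOUS_EVAL_DO_NOT_TRACK is read as an environment variable.
# Set it before importing continuous_eval so the setting is visible at import time.
os.environ["CONTINUOUS_EVAL_DO_NOT_TRACK"] = "true"

print(os.environ["CONTINUOUS_EVAL_DO_NOT_TRACK"])
```

Under the same assumption, exporting the variable in your shell or adding it to `.env` should have the same effect.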