This project analyzes the effects of weather on customer reviews of restaurants using YELP and climate datasets. We utilize Snowflake, a cloud-native data warehouse system, to process and analyze the data. The project demonstrates the end-to-end process of building a data warehouse, from raw data ingestion to insightful analysis.

Our data pipeline consists of the following stages:

- Multiple data sources

- Staging

- Operational Data Store (ODS)

- Data Warehouse

- Reporting

- YELP Dataset (JSON format):

- Tip

- User

- Review

- Business

- Check-in

- COVID

- Climate Data (CSV format):

- Temperature

- Precipitation

- Created a staging schema in Snowflake

- Uploaded all Yelp and Climate data to staging tables:

- YELP_TIP_TEMP

- YELP_USER_TEMP

- YELP_REVIEW_TEMP

- YELP_BUSINESS_TEMP

- YELP_CHECKIN_TEMP

- YELP_COVID_TEMP

- TEMPERATURE_TEMP

- PRECIPITATION_TEMP

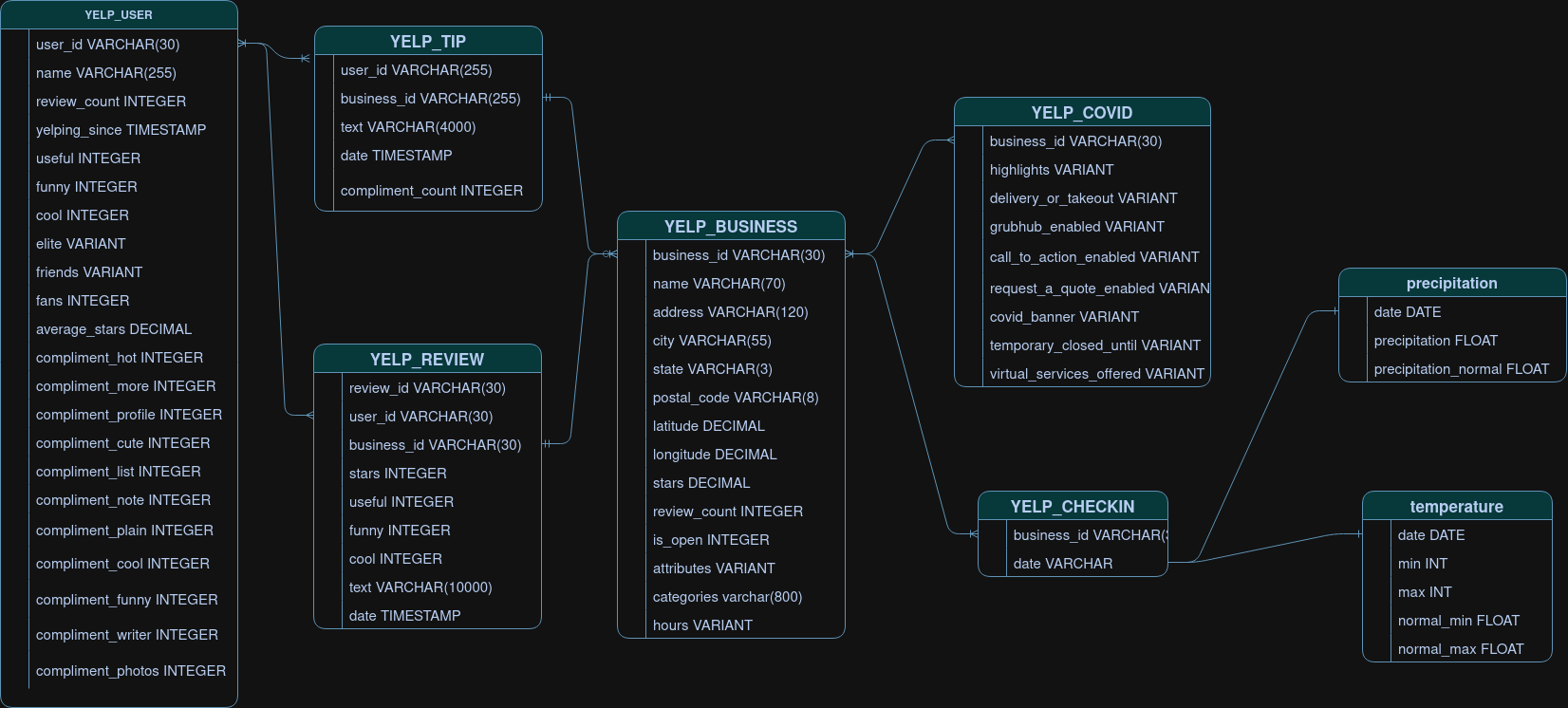

- Created an ODS schema with normalized tables

- Migrated data from staging to ODS

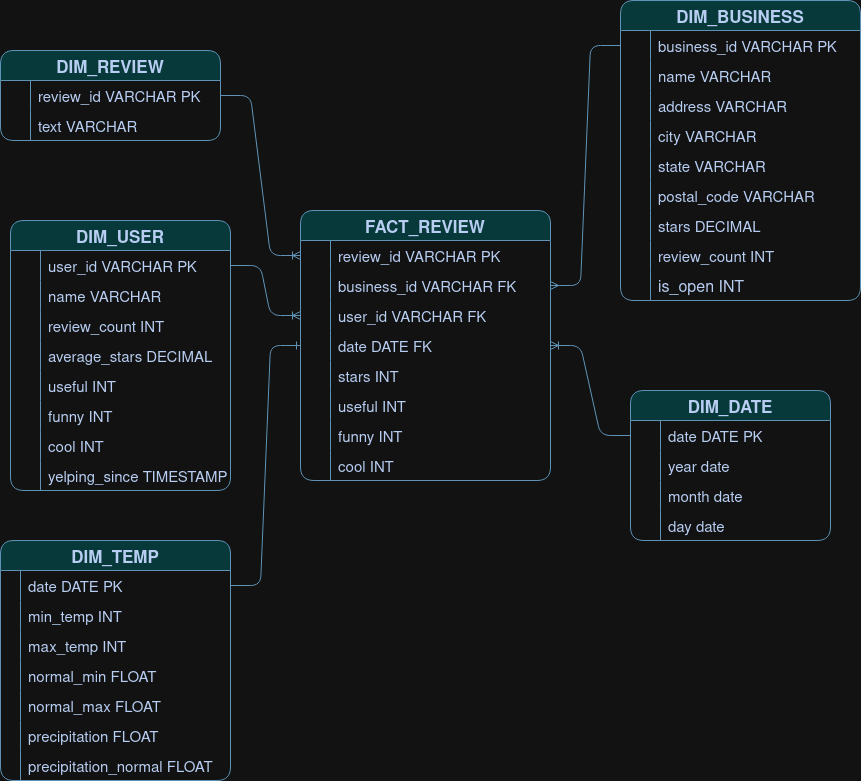

- Implemented a star schema design:

- Fact table: FACT_REVIEW

- Dimension tables: DIM_BUSINESS, DIM_USER, DIM_REVIEW, DIM_DATE, DIM_TEMP

- Migrated data from ODS to Data Warehouse

- Ensure you have at least 10GB of free disk space

- Download the YELP JSON dataset (4.04GB compressed) from the YELP Dataset

- Split large datasets into smaller files (<3 million records per file) using PineTools or 7zip

- Create Snowflake schemas for Staging, ODS, and Data Warehouse

- Upload data to Staging environment

- Migrate data through ODS to Data Warehouse

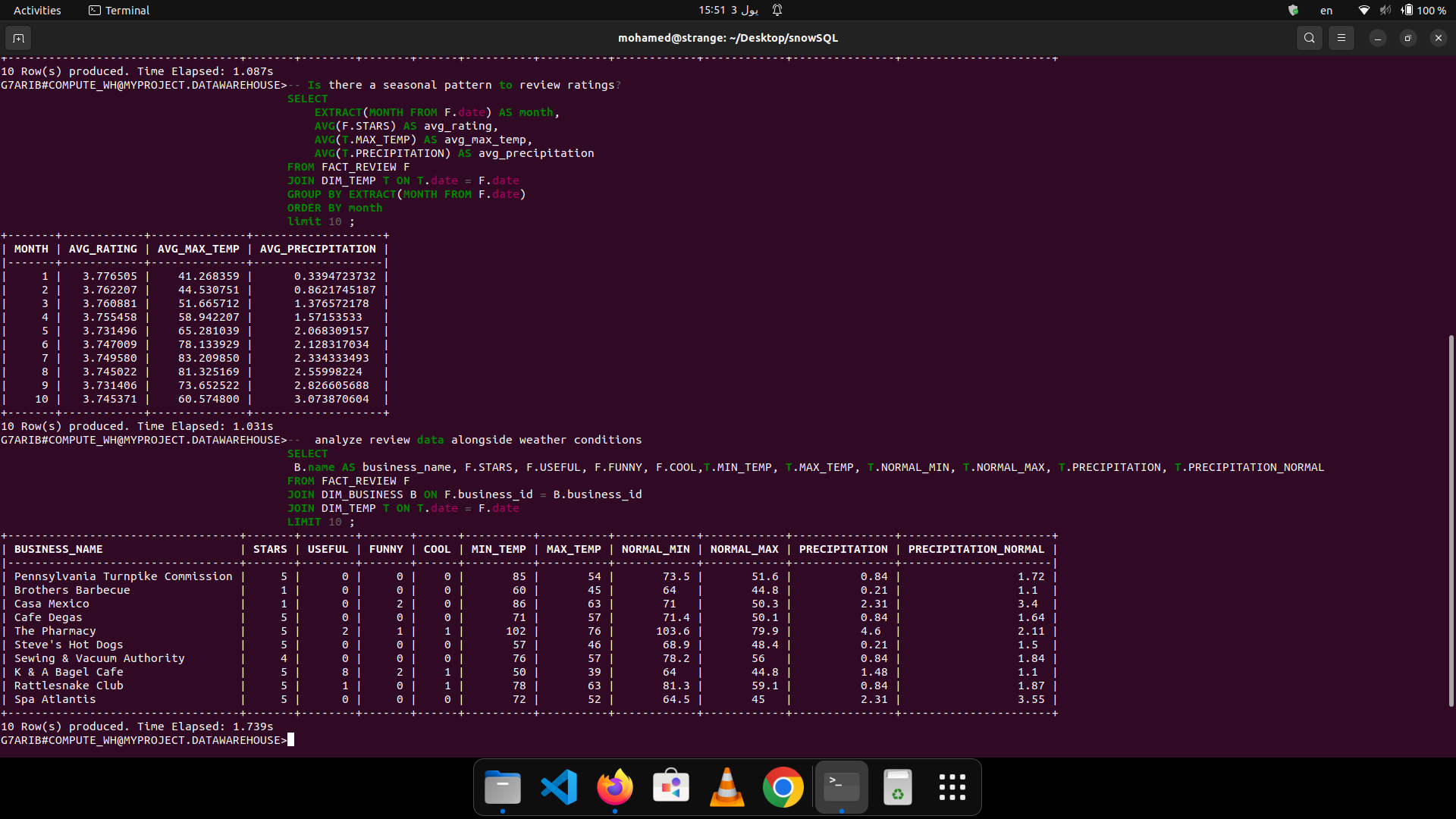

- Execute analysis queries

- Data architecture diagram

- Entity-Relationship (ER) diagram for ODS

- STAR schema diagram for Data Warehouse

- Screenshots of data upload and migration processes

- Final analysis queries and results

- Snowflake

- JSON and CSV data processing tools

- SQL for data transformation and analysis

- Large files may need to be split before uploading to Snowflake

- Verify split data to avoid parsing errors during upload

(Add any potential enhancements or additional analyses that could be performed)