Code for the Paper M4U: Evaluating Multilingual Understanding and Reasoning for Large Multimodal Models.

[Webpage] [Paper] [Huggingface Dataset] [Leaderboard]

M4U: Evaluating Multilingual Understanding and Reasoning for Large Multimodal Models

- 💥 News 💥

- 👀 About M4U

- 🏆 Leaderboard 🏆

- 📖 Dataset Usage

- 🔮 Evaluations on M4U

- 📊 Statistics

- ✅ Cite

- 🧠 Acknowledgments

- [2024.08.16] M4U-mini is publicly available, which is our first step toward extending M4U to more languages. M4U-mini is a tiny subset (5%) of M4U with support for Japanese, Arabic and Thai.

- [2024.05.23] Our paper and dataset are publicly available.

Multilingual multimodal reasoning is a core component of human-level intelligence. However, most existing benchmarks for multilingual multimodal reasoning struggle to differentiate between models of varying performance: even language models without visual capabilities can easily achieve high scores. This leaves a comprehensive evaluation of the leading multilingual multimodal models largely unexplored.

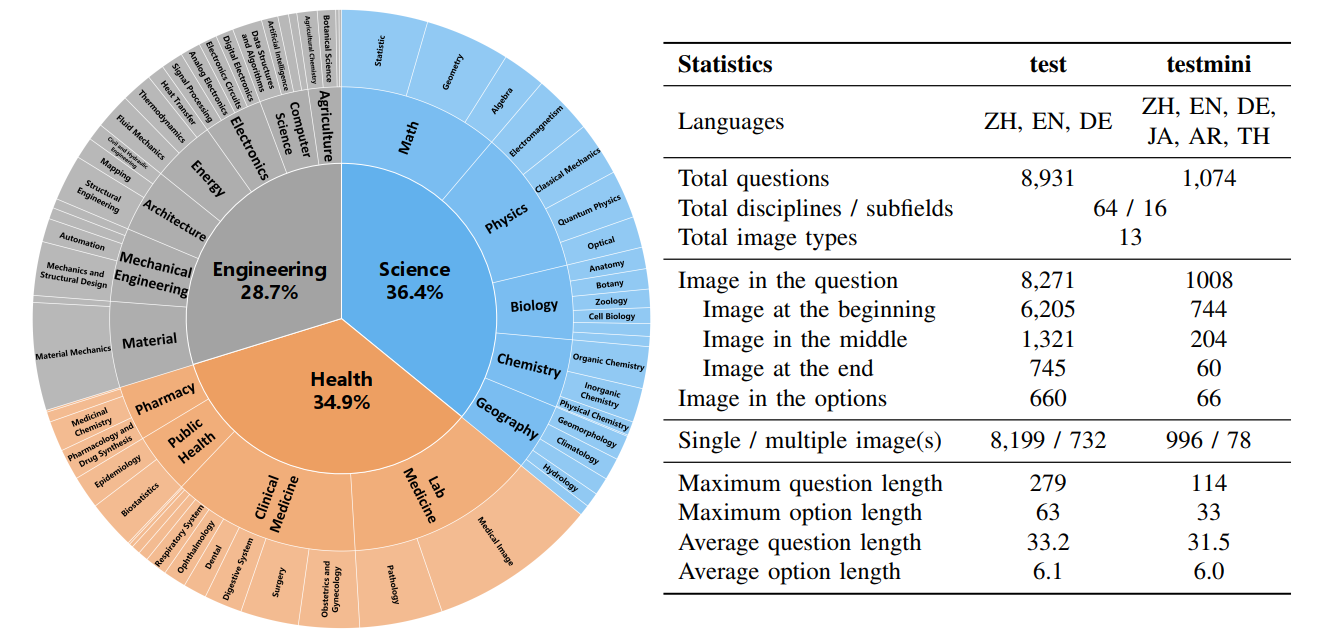

The detailed statistics of M4U dataset.

In this work, we introduce M4U, a novel and challenging benchmark for assessing multi-discipline multilingual multimodal understanding and reasoning. M4U contains 8,931 samples covering 64 disciplines across 16 subfields of Science, Engineering and Healthcare, in Chinese, English and German.

With M4U, we conduct extensive evaluations of 21 leading LMMs and LLMs with external tools. The results show that the state-of-the-art model, GPT-4o, achieves only 47.6% average accuracy on M4U. We also observe that the leading LMMs have significant language preferences.

Our in-depth analysis shows that the leading LMMs, including GPT-4o, suffer performance degradation when prompted with cross-lingual multimodal questions, e.g., when the images contain key textual information in Chinese while the question is in English or German.

We further analyze the impact of different types of visual content and image positions. The experimental results show that GPT-4o significantly outperforms the other models in medical images, and LLaVA-NeXT has difficulty answering questions where images are included in the options.

🚨🚨 The leaderboard is continuously being updated.

If you want to upload your model's results to the Leaderboard, please send an email to Hongyu Wang and Ruiping Wang.

The evaluation instructions are available at 🔮 Evaluations on M4U.

| # | Model | Method | Source | English | Chinese | German | Average |
|---|---|---|---|---|---|---|---|
| 1 | GPT-4o | LMM | gpt-4o | 47.8 | 49.4 | 45.6 | 47.6 |
| 2 | GPT-4V + CoT | LMM | gpt-4-vision-preview | 43.6 | 43.9 | 40.3 | 42.6 |
| 3 | GPT-4V | LMM | gpt-4-vision-preview | 39.4 | 39.7 | 37.3 | 38.8 |
| 4 | LLaVA-NeXT 34B | LMM | LINK | 36.2 | 38.5 | 35.2 | 36.6 |
| 5 | Gemini 1.0 Pro + CoT | LMM | gemini-pro-vision | 34.2 | 34.4 | 33.9 | 34.2 |
| 6 | Gemini 1.0 Pro | LMM | gemini-pro | 32.7 | 34.9 | 30.8 | 32.8 |
| 6 | Qwen-1.5 14B Chat(+Caption) | Tool | LINK | 32.0 | 32.7 | 33.8 | 32.8 |
| 8 | Yi-VL 34B | LMM | LINK | 33.3 | 33.5 | 30.5 | 32.4 |
| 9 | Yi-VL 6B | LMM | LINK | 31.4 | 33.4 | 29.7 | 31.5 |
| 10 | DeepSeek VL Chat | LMM | LINK | 32.8 | 30.4 | 30.8 | 31.3 |
| 11 | Qwen-1.5 7B Chat(+Caption) | Tool | LINK | 27.7 | 34.2 | 31.7 | 31.2 |
| 11 | Gemini 1.0 Pro(+Caption) | Tool | gemini-pro | 31.1 | 31.6 | 33.8 | 31.2 |
| 13 | InternLM-XComposer VL | LMM | LINK | 31.6 | 31.8 | 30.9 | 30.8 |
| 14 | LLaVA-NeXT Mistral-7B | LMM | LINK | 30.6 | 28.2 | 29.4 | 29.4 |
| 15 | CogVLM Chat | LMM | LINK | 30.2 | 28.9 | 28.5 | 29.2 |
| 16 | Qwen-VL Chat | LMM | LINK | 29.9 | 29.7 | 27.1 | 28.9 |
| 17 | LLaVA-NeXT Vicuna-13B | LMM | LINK | 30.9 | 21.9 | 29.3 | 27.4 |
| 18 | Mistral-Instruct-7B v0.2(+Caption) | Tool | LINK | 24.9 | 24.9 | 26.9 | 25.6 |
| 19 | LLaVA-NeXT Vicuna-7B | LMM | LINK | 29.8 | 11.8 | 28.2 | 23.3 |
| 20 | InstructBLIP Vicuna-7B | LMM | LINK | 28.1 | 13.7 | 19.7 | 20.5 |
| 21 | InstructBLIP Vicuna-13B | LMM | LINK | 23.4 | 10.5 | 18.6 | 17.5 |
| 22 | YingVLM | LMM | LINK | 11.2 | 22.3 | 15.6 | 16.4 |
| 23 | VisualGLM | LMM | LINK | 22.4 | 8.7 | 13.5 | 14.9 |

- Method types
  - LMM: Large Multimodal Model
  - Tool: Tool-augmented Large Language Model. The captions are generated by Gemini 1.0 Pro.
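The Average column in the leaderboard appears to be the unweighted mean of the three per-language accuracies, rounded to one decimal place. This is an inference from the table values rather than something the authors state, and not every row need match exactly. A minimal Python sketch (the helper name `average_accuracy` is hypothetical) reproduces the GPT-4o row:

```python
def average_accuracy(english: float, chinese: float, german: float) -> float:
    """Unweighted mean of the per-language accuracies, rounded to one decimal.

    Assumption: this is how the Average column is derived; the README does
    not state the formula explicitly.
    """
    return round((english + chinese + german) / 3, 1)


# GPT-4o row from the leaderboard: English 47.8, Chinese 49.4, German 45.6.
gpt4o_average = average_accuracy(47.8, 49.4, 45.6)
print(gpt4o_average)
```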

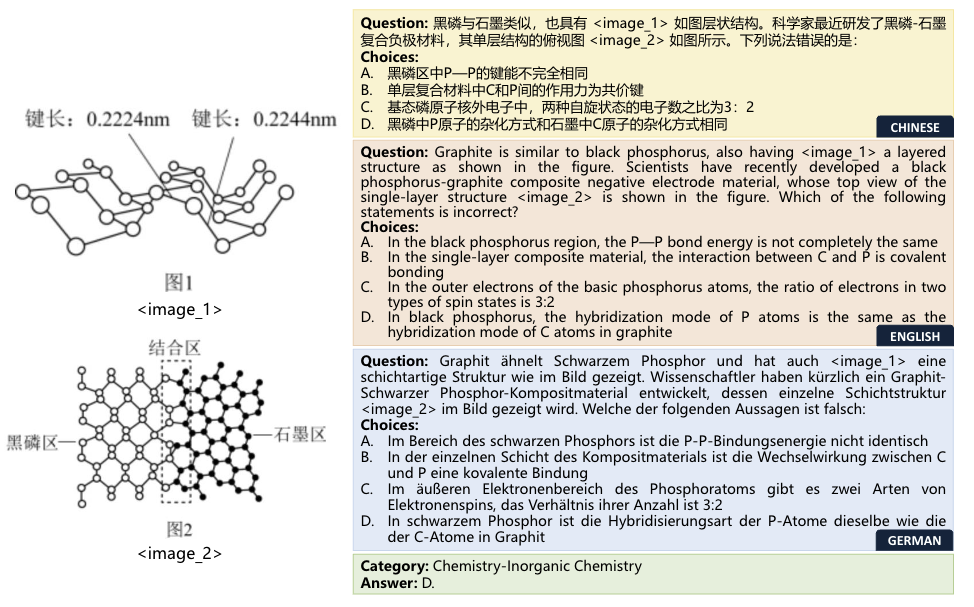

An example from the Chemistry-Inorganic discipline of the M4U dataset:

The dataset is in JSON format. The attributes are described below:

```
{
    "question": [string] The question text,
    "image_files": [list] The images stored in bytes,
    "image_caption": [list] The image captions generated by Gemini 1.0 Pro,
    "image_type": [list] The category of the images,
    "options": [list] Choices for multiple-choice problems,
    "answer": [string] The correct answer for the problem,
    "answer_index": [integer] The index of the correct answer in the options,
    "language": [string] Question language: "en", "zh", or "de",
    "discipline": [string] The discipline of the problem, e.g., "Geophysics" or "Optical",
    "subfield": [string] The subfield of the problem, e.g., "Math" or "Geography",
    "field": [string] The field of the problem: "science", "engineering" or "healthcare",
    "cross_lingual": [bool] Whether the visual content of the problem contains key information or concepts
}
```
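To illustrate how the attributes above fit together, here is a minimal sketch that renders a record as a lettered multiple-choice prompt. The helper names `format_question` and `answer_letter` are hypothetical, the toy record is invented for illustration (it is not taken from M4U), and the sketch assumes `answer_index` is zero-based, which the schema does not state. The repository's own evaluation scripts may build prompts differently.

```python
from string import ascii_uppercase


def format_question(record: dict) -> str:
    """Render the question followed by one lettered line per option."""
    lines = [record["question"]]
    for letter, option in zip(ascii_uppercase, record["options"]):
        lines.append(f"({letter}) {option}")
    return "\n".join(lines)


def answer_letter(record: dict) -> str:
    """Map answer_index to an option letter (assumes zero-based indexing)."""
    return ascii_uppercase[record["answer_index"]]


# Invented toy record that follows the attribute list; not real M4U data.
example = {
    "question": "Which compound is shown in the image?",
    "options": ["NaCl", "H2O", "CO2", "NH3"],
    "answer_index": 1,
    "language": "en",
}

prompt = format_question(example)
print(prompt)
print("Expected letter:", answer_letter(example))
```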

First, make sure that the `datasets` library is installed:

```sh
pip install datasets
```

Then you can easily download this dataset from Huggingface.

```python
from datasets import load_dataset

dataset = load_dataset("M4U-Benchmark/M4U")
```

Here are some examples of how to access the downloaded dataset:

```python
# print the first example on the science_en split
print(dataset["science_en"][0])
print(dataset["science_en"][0]['question']) # print the question
print(dataset["science_en"][0]['options']) # print the options
print(dataset["science_en"][0]['answer']) # print the answer
```

We provide evaluation pipelines for the open-source LLaVA and the closed-source GPT-4o. Both pipelines are simple to use.
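The dataset example accesses a split named `science_en`, which suggests that splits are keyed by field and language. Only `science_en` is confirmed by this README; the sketch below assumes the other splits follow the same `<field>_<lang>` pattern, using the field and language values listed in the attribute description. The helper `split_names` is hypothetical.

```python
from itertools import product

# Values taken from the "field" and "language" attribute descriptions.
FIELDS = ("science", "engineering", "healthcare")
LANGUAGES = ("en", "zh", "de")


def split_names(fields=FIELDS, languages=LANGUAGES) -> list[str]:
    """Build candidate split names, assuming a '<field>_<lang>' pattern."""
    return [f"{field}_{lang}" for field, lang in product(fields, languages)]


candidate_splits = split_names()
print(candidate_splits)
```

If the assumption holds, each name could be used as a key into the loaded dataset in the same way as `dataset["science_en"]`.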

First, follow the instructions to clone the LLaVA repository and install the Python dependencies.

```sh
git clone https://github.com/haotian-liu/LLaVA.git
pip install -e LLaVA/
```

Generate the responses of LLaVA-NeXT 34B:

```sh
python evaluate_llava.py \
  --model liuhaotian/llava-v1.6-34b \
  --conv_mode chatml_direct \
  --field all \
  --lang all \
  --result_folder ./llava_next_34b
```

Calculate the final scores:

```sh
python calculate_scores.py \
  --field all \
  --lang all \
  --result_folder ./llava_next_34b
```

Replace `"/your/api/key"` in `evaluate_gpt4o.py` with your personal key. Then generate the responses of GPT-4o:

```sh
python evaluate_gpt4o.py \
  --model gpt-4o \
  --field all \
  --lang all \
  --result_folder ./result/M4U/gpt4o
```

Calculate the final scores:

python calculate_scores.py \

--field all \

--lang all \

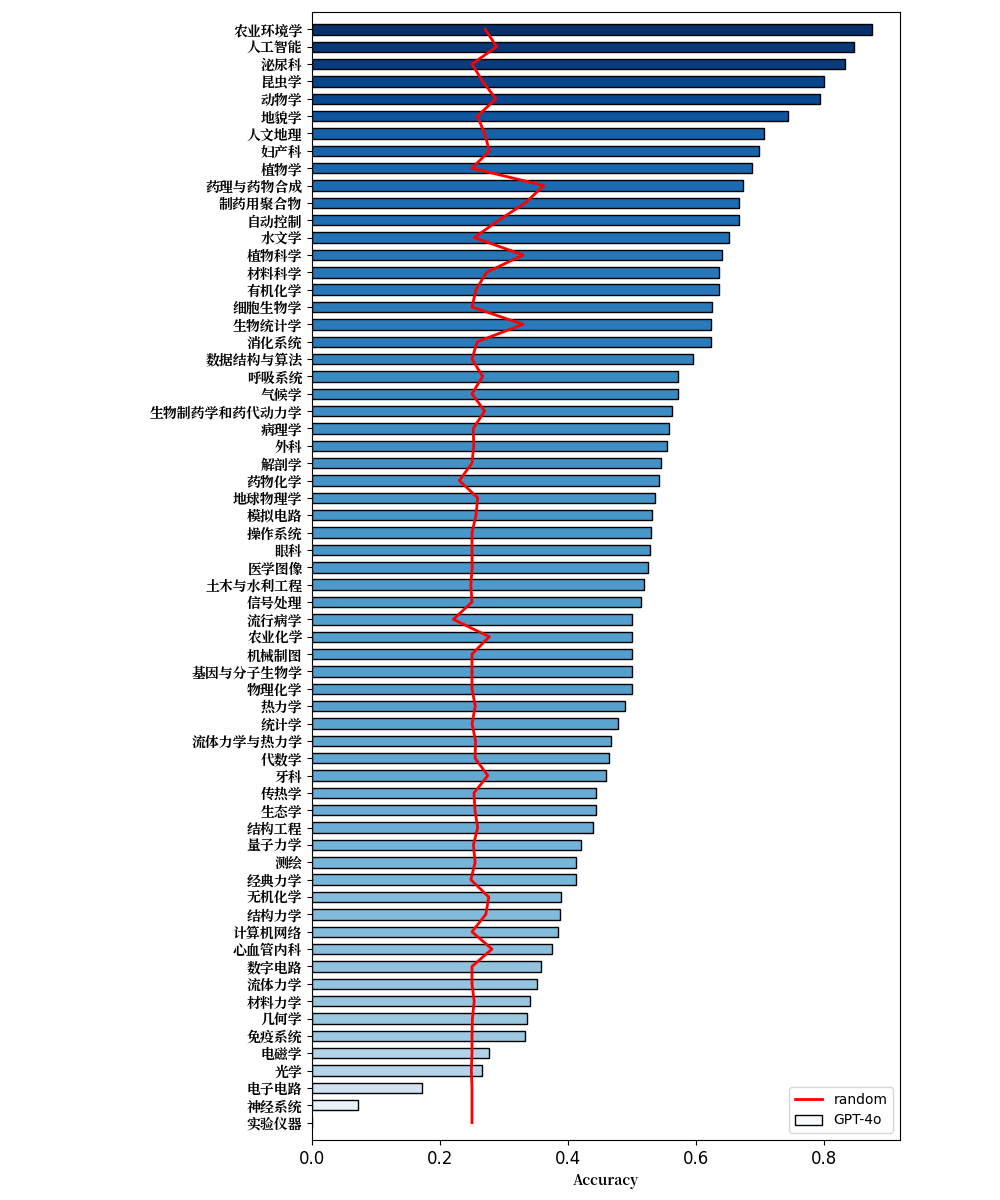

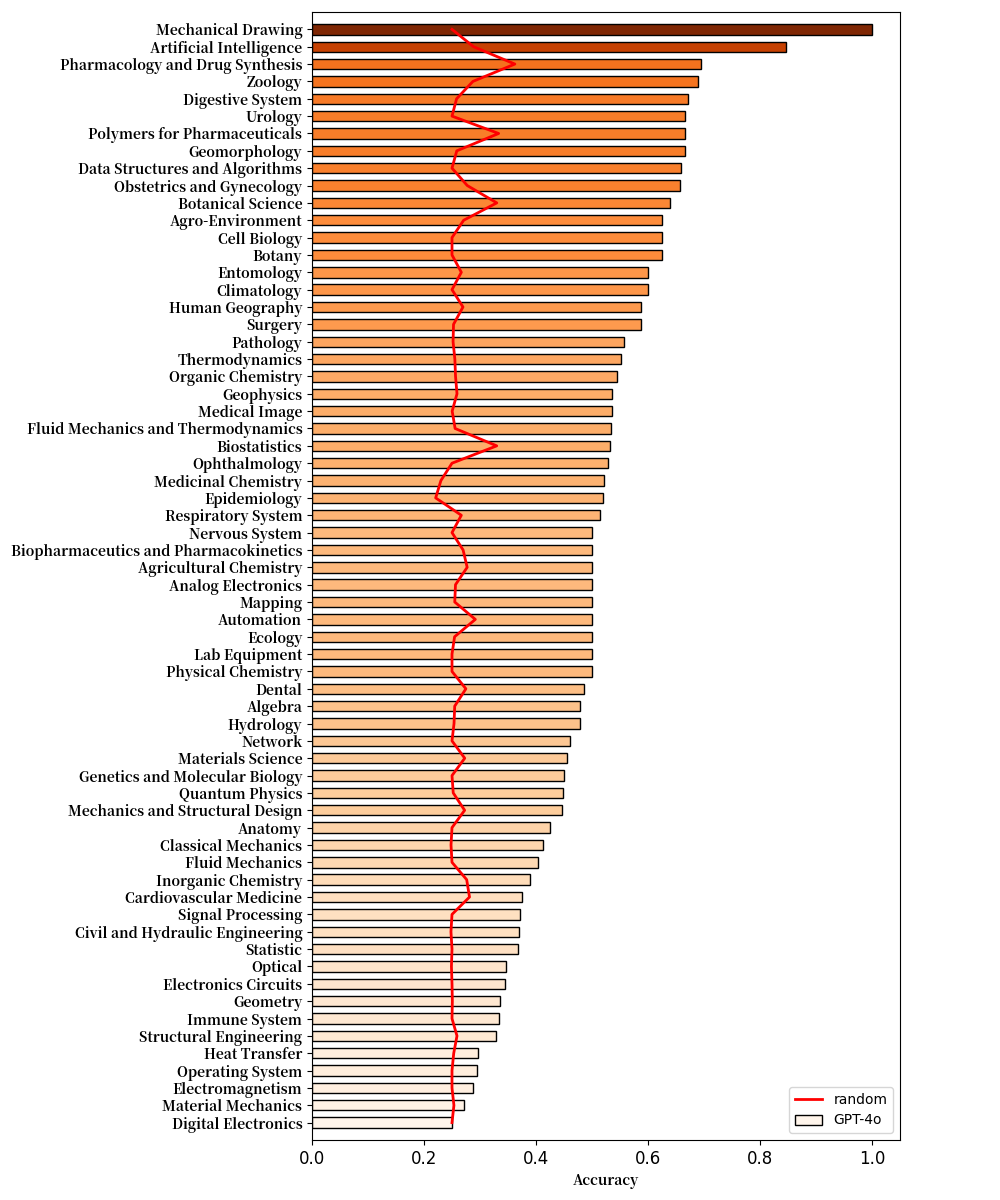

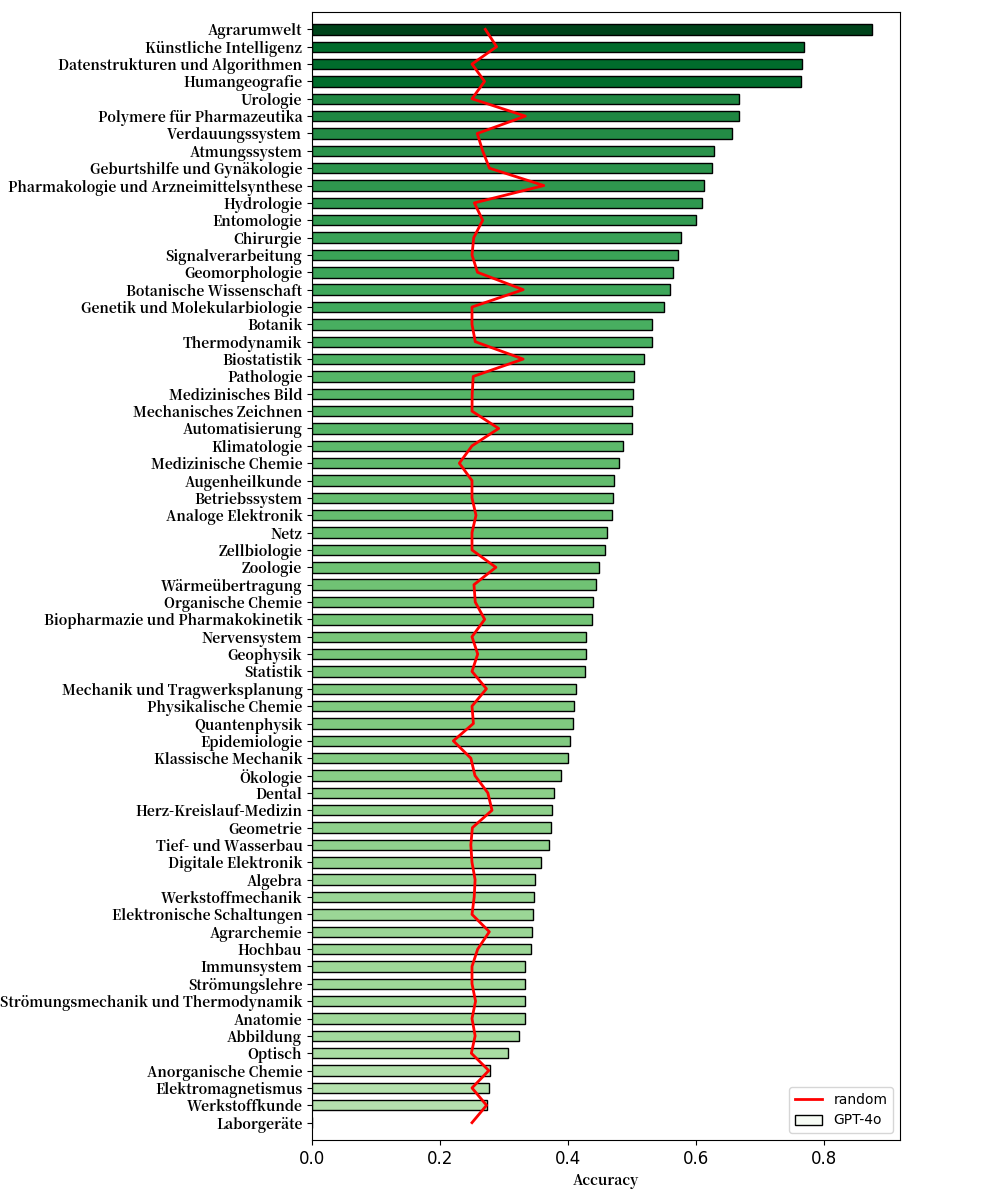

--result_folder ./result/M4U/gpt4oWe depict some critical statistical results on this section, including detailed statistics of GPT-4o's performance on different discipline and language, and visualization of image quality. These detailed statistics will also help understanding and further analysis for M4U.

To further analyze performance of MLLMs on different disciplines, we provided statistics of GPT-4o's performance on 64 different disciplines in M4U. These three figures shown results of GPT-4o on Chinese, English, and German. Disciplines on these images are shown in corresponding language.
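A per-discipline breakdown like the one described above can be sketched as a simple group-by over scored records. This is an illustrative sketch only: `accuracy_by_discipline` and the `prediction_index` key are hypothetical, the toy records are invented, and the actual logic and result format of `calculate_scores.py` are not documented here.

```python
from collections import defaultdict


def accuracy_by_discipline(records: list[dict]) -> dict[str, float]:
    """Return accuracy (in percent) per discipline.

    Each record needs "discipline", "answer_index", and a hypothetical
    "prediction_index" holding the option index chosen by the model.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for record in records:
        discipline = record["discipline"]
        total[discipline] += 1
        if record["prediction_index"] == record["answer_index"]:
            correct[discipline] += 1
    return {name: 100.0 * correct[name] / total[name] for name in total}


# Invented toy records; not real model outputs.
toy_records = [
    {"discipline": "Geophysics", "answer_index": 0, "prediction_index": 0},
    {"discipline": "Geophysics", "answer_index": 2, "prediction_index": 1},
    {"discipline": "Optical", "answer_index": 3, "prediction_index": 3},
]

per_discipline = accuracy_by_discipline(toy_records)
print(per_discipline)
```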

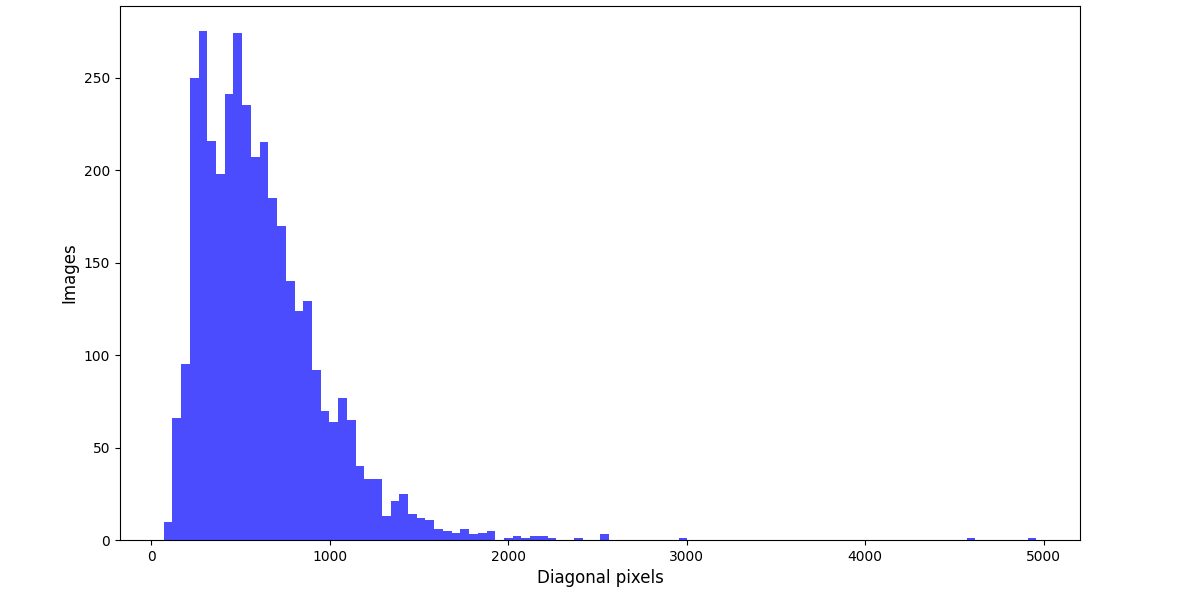

We visualized the distribution of diagonal pixels in the images for M4U. The median of diagonal pixels is 551, and the average is 616. The distribution is shown below.
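As a rough illustration of the statistic above, the sketch below assumes that "diagonal pixels" means the Euclidean length of an image's diagonal, computed from its width and height in pixels. The README does not define the term, so this reading is an assumption. The helper `diagonal_pixels` is hypothetical and the image sizes are invented.

```python
import math
import statistics


def diagonal_pixels(width: int, height: int) -> float:
    """Length of the image diagonal in pixels (assumed definition)."""
    return math.hypot(width, height)


# Invented (width, height) pairs; not measured from M4U images.
sizes = [(300, 400), (600, 800), (1200, 500)]

diagonals = [diagonal_pixels(w, h) for w, h in sizes]
median_diagonal = statistics.median(diagonals)
mean_diagonal = statistics.mean(diagonals)

print(f"median={median_diagonal:.0f}, mean={mean_diagonal:.0f}")
```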

If you find M4U useful for your research and applications, please kindly cite using this BibTeX:

```
@article{wang2024m4u,
  title={M4U: Evaluating Multilingual Understanding and Reasoning for Large Multimodal Models},
  author={Hongyu Wang and Jiayu Xu and Senwei Xie and Ruiping Wang and Jialin Li and Zhaojie Xie and Bin Zhang and Chuyan Xiong and Xilin Chen},
  month={May},
  year={2024}
}
```

Some implementations in M4U are either adapted from or inspired by the MMMU repository and the MathVista repository.