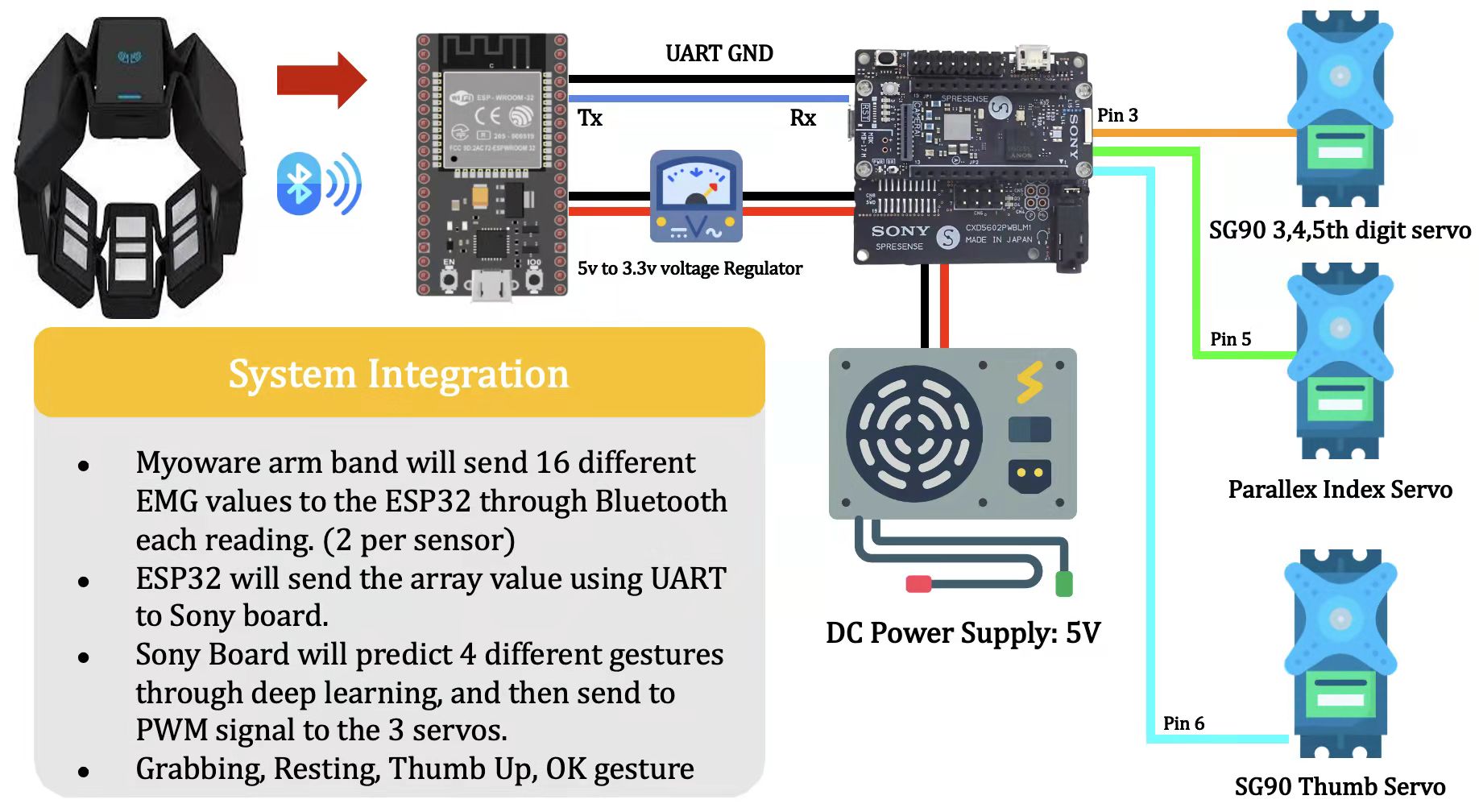

This project deploys a deep neural network on the Sony Spresense microcontroller for real-time bionic arm control. We use a 2D Convolutional Neural Network (CNN) as our EMG pattern recognition algorithm; the model was fine-tuned and compressed before being deployed to the Sony Spresense. sEMG data is collected with a Myo Armband and sent to the Sony Spresense via an intermediary board, the ESP32 Devkit v1.

https://www.hackster.io/emgarm/real-time-bionic-arm-control-via-cnn-based-emg-recognition-b013d3

NOTE: See Setup.md for the installation guides.

Sources:

1. For ESP32 Devkit v1:

- arduino-esp32: https://github.com/espressif/arduino-esp32

- sparthan-myo: https://github.com/project-sparthan/sparthan-myo

2. For Sony Spresense (Main + Extension):

- spresense-arduino-tensorflow: https://github.com/YoshinoTaro/spresense-arduino-tensorflow

1. Flash ESP32/ESP32.ino to the ESP32 Devkit v1 board by clicking the upload button in the Arduino IDE.

2. Flash Spresense/Spresense.ino to the Sony Spresense board by clicking the upload button in the Arduino IDE.

Folder ESP32 contains:

- ESP32.ino:
  - Connects to the Myo Armband via BLE and retrieves sEMG signals from it.
  - Sends the retrieved sEMG signals to the Sony Spresense over UART serial.
  - (Baud rate: 250000)
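
To illustrate the ESP32-to-Spresense serial link described above, here is a minimal sketch of how one 8-channel sEMG sample could be framed for UART transfer. The frame layout (a `0xAA` header byte, 8 signed samples, and an XOR checksum) and the names `encodeFrame`, `decodeFrame`, and `checksum` are assumptions for illustration only; the actual format used by ESP32.ino and Spresense.ino may differ.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>

// Hypothetical frame layout (an assumption, not taken from ESP32.ino):
//   [0xAA header][8 x int8 sEMG samples][XOR checksum of the 8 samples]
constexpr std::size_t kChannels = 8;       // the Myo Armband has 8 sEMG channels
constexpr std::uint8_t kHeader = 0xAA;     // hypothetical start-of-frame marker
constexpr std::size_t kFrameSize = 1 + kChannels + 1;

using Sample = std::array<std::int8_t, kChannels>;
using Frame = std::array<std::uint8_t, kFrameSize>;

// XOR of all payload bytes: a cheap integrity check for a serial link.
std::uint8_t checksum(const std::uint8_t* payload, std::size_t n) {
    assert(payload != nullptr);
    std::uint8_t c = 0;
    for (std::size_t i = 0; i < n; ++i) c ^= payload[i];
    return c;
}

// Sender side (ESP32): pack one 8-channel sample into a frame.
Frame encodeFrame(const Sample& s) {
    Frame f{};
    f[0] = kHeader;
    for (std::size_t i = 0; i < kChannels; ++i)
        f[1 + i] = static_cast<std::uint8_t>(s[i]);
    f[kFrameSize - 1] = checksum(f.data() + 1, kChannels);
    return f;
}

// Receiver side (Spresense): validate a frame and recover the sample.
// Returns false if the header or the checksum does not match.
bool decodeFrame(const Frame& f, Sample& out) {
    if (f[0] != kHeader) return false;
    if (checksum(f.data() + 1, kChannels) != f[kFrameSize - 1]) return false;
    for (std::size_t i = 0; i < kChannels; ++i)
        out[i] = static_cast<std::int8_t>(f[1 + i]);
    return true;
}
```

In an Arduino sketch, the sender would write the frame bytes to the serial port and the receiver would read them back before calling `decodeFrame`. Assuming the default 8N1 serial configuration, 250000 baud carries about 25,000 bytes per second, so a 10-byte frame like this one leaves ample headroom for the Myo's nominal 200 Hz raw EMG stream.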

Folder Spresense contains:

- Spresense.ino:
  - Decodes sEMG signals sent from the ESP32 Devkit v1 over UART serial.
  - Performs sEMG preprocessing and runs model inference.
  - Controls the bionic arm.
  - (Baud rate: 250000)
- arm_control.h:
  - Robotic arm control utility functions.
- model.h:
  - Fine-tuned model weights for TensorFlow Lite model inference.
  - Exported from: https://github.com/MIC-Laboratory/sEMG_Recognition
- std_and_mean.h:
  - Mean and standard deviation for each Myo channel, calculated from the sEMG of 7 gestures in NinaPro DB5.
  - Source: https://github.com/MIC-Laboratory/sEMG_Recognition
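
The per-channel mean and standard deviation in std_and_mean.h suggest that preprocessing includes standardizing each channel before inference. Below is a minimal sketch of that step, computing `z = (x - mean) / std` for each of the 8 Myo channels. The function name `standardize` and the `Channels` type are hypothetical, and any statistics passed in here are placeholders rather than the values shipped in std_and_mean.h; the actual preprocessing in Spresense.ino may include further steps.

```cpp
#include <array>
#include <cassert>
#include <cstddef>

constexpr std::size_t kChannels = 8;  // the Myo Armband has 8 sEMG channels

using Channels = std::array<float, kChannels>;

// Standardize one 8-channel sample: z[c] = (x[c] - mean[c]) / stddev[c].
// `mean` and `stddev` stand in for the per-channel statistics that
// std_and_mean.h provides (names and layout here are assumptions).
Channels standardize(const Channels& x,
                     const Channels& mean,
                     const Channels& stddev) {
    Channels z{};
    for (std::size_t c = 0; c < kChannels; ++c) {
        assert(stddev[c] > 0.0f);  // a zero deviation would divide by zero
        z[c] = (x[c] - mean[c]) / stddev[c];
    }
    return z;
}
```

Using the same statistics at inference time as were used during training keeps the inputs on the scale the model saw when it was fine-tuned.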

Please follow this GitHub repo: https://github.com/MIC-Laboratory/sEMG_Recognition