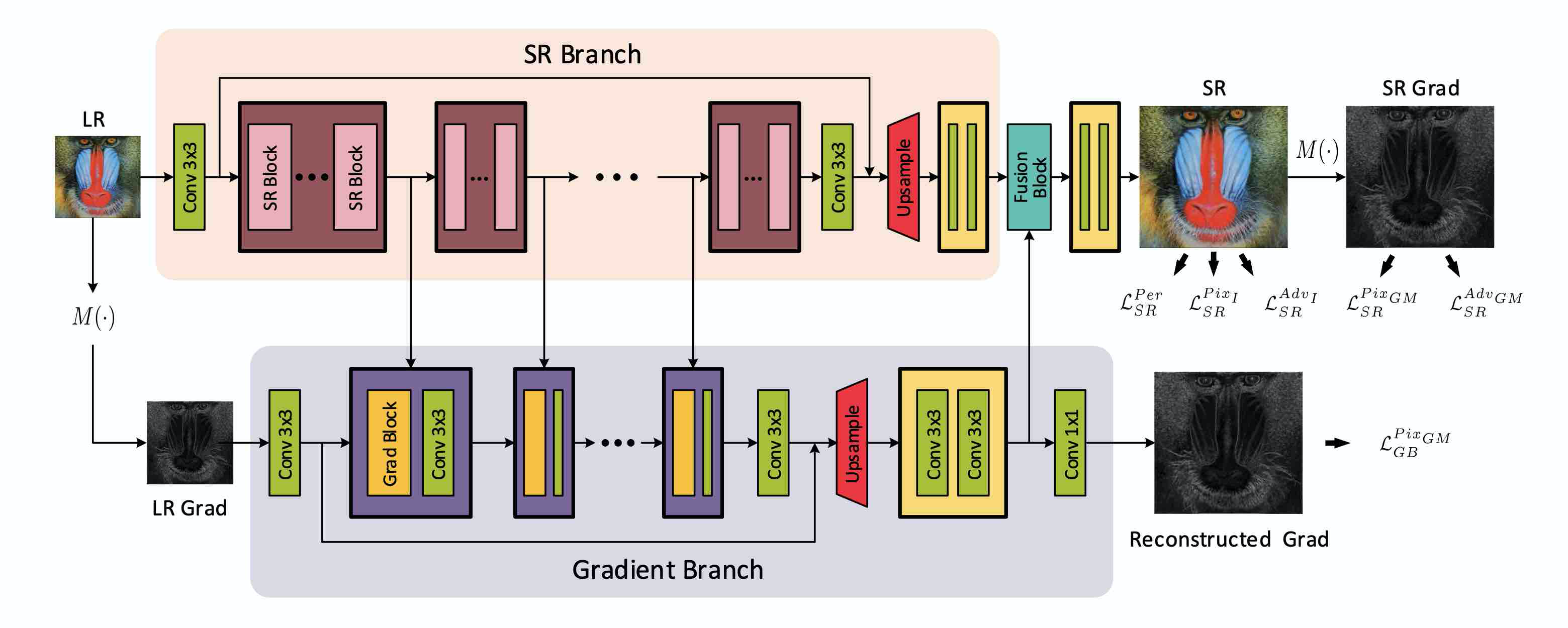

PyTorch implementation of Structure-Preserving Super Resolution with Gradient Guidance (CVPR 2020) [arXiv][CVF]

Extended version: Structure-Preserving Image Super-Resolution (TPAMI 2021) [arXiv]

If you find our work useful in your research, please consider citing:

    @ARTICLE{ma2021structure,
      author={Ma, Cheng and Rao, Yongming and Lu, Jiwen and Zhou, Jie},
      journal={IEEE Transactions on Pattern Analysis and Machine Intelligence},
      title={Structure-Preserving Image Super-Resolution},
      year={2021},
      volume={},
      number={},
      pages={1-1},
      doi={10.1109/TPAMI.2021.3114428}
    }

    @inproceedings{ma2020structure,
      title={Structure-Preserving Super Resolution with Gradient Guidance},
      author={Ma, Cheng and Rao, Yongming and Cheng, Yean and Chen, Ce and Lu, Jiwen and Zhou, Jie},
      booktitle={Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},
      year={2020}
    }

- Python 3 (Anaconda is recommended)
- PyTorch >= 1.0
- NVIDIA GPU + CUDA
- Python packages: `pip install numpy opencv-python lmdb pyyaml`
- TensorBoard:
  - PyTorch >= 1.1: `pip install tb-nightly future`
  - PyTorch == 1.0: `pip install tensorboardX`
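
The TensorBoard dependency differs by PyTorch version. The following is a minimal sketch of how a script might pick the matching `SummaryWriter` module from a version string. `parse_version` and `pick_tensorboard_module` are hypothetical helpers written for illustration; they are not part of this repository.

```python
def parse_version(version):
    """Return (major, minor) from a version string such as '1.1.0' or '1.4.0+cu92'."""
    core = version.split("+")[0]          # drop a local build suffix such as '+cu92'
    major, minor = core.split(".")[:2]
    return int(major), int(minor)


def pick_tensorboard_module(torch_version):
    """Name the module that provides SummaryWriter for a given PyTorch version."""
    if parse_version(torch_version) >= (1, 1):
        # PyTorch >= 1.1 ships its own writer (with tb-nightly and future installed).
        return "torch.utils.tensorboard"
    # PyTorch 1.0 relies on the separate tensorboardX package.
    return "tensorboardX"
```

With PyTorch installed, `importlib.import_module(pick_tensorboard_module(torch.__version__)).SummaryWriter` would then resolve to the appropriate writer class.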

Commonly used training and testing datasets can be downloaded here.

We also provide code to preprocess the datasets here.

- After downloading the original datasets, store them in a dedicated GT folder.
- Generate the LR, HR and bicubic-upsampled versions of the datasets.
- Extract sub-images with unified scales for training.
- Optionally, convert the training sets to LMDB format for faster IO.
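
To illustrate the sub-image extraction step, here is a minimal sketch of a sliding-window cropping scheme that computes where each crop would be taken. It assumes square crops taken at a fixed stride, with one extra crop at the border when enough pixels remain uncovered. The function names and the default values (`crop_size=480`, `step=240`, `thresh=48`) are assumptions chosen for illustration and may differ from the preprocessing code provided with this repository.

```python
def crop_starts(length, crop_size, step, thresh):
    """Start offsets of sliding crops along one image dimension."""
    if length < crop_size:
        return []  # image is too small for even one crop
    starts = list(range(0, length - crop_size + 1, step))
    # If the uncovered remainder is large enough, add a last crop flush with the border.
    if length - (starts[-1] + crop_size) > thresh:
        starts.append(length - crop_size)
    return starts


def crop_boxes(height, width, crop_size=480, step=240, thresh=48):
    """(top, left, bottom, right) boxes for every sub-image of a height x width image."""
    return [(top, left, top + crop_size, left + crop_size)
            for top in crop_starts(height, crop_size, step, thresh)
            for left in crop_starts(width, crop_size, step, thresh)]
```

Each box can then be used to slice the GT image, and the same box divided by the scale factor can slice the matching LR image so that the pairs stay aligned.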

To train an SPSR model:

    python train.py -opt options/train/train_spsr.json

- The JSON file is processed by `options/options.py`. Please refer to that file for more details.
- Before running this code, modify `train_spsr.json` to match your own configuration, including:
  - the proper `dataroot_HR` and `dataroot_LR` paths for the data loader (More details)
  - the saving frequency for models and states
  - whether to resume training from `.state` files
  - other hyperparameters
  - the loss function, etc.
- You can find your training results in `./experiments`.
- During training, you can use TensorBoard to monitor the losses with `tensorboard --logdir tb_logger/NAME_OF_YOUR_EXPERIMENT`.
- You can use a pretrained RRDB model for parameter initialization by setting the `pretrain_model_G` option in `options/train/train_spsr.json`. Please download the pretrained model from Google Drive or Baidu Drive (extraction code muw3) and place `RRDB_PSNR_x4.pth` into `./experiments/pretrain_models`.
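
The configuration changes described above can also be sketched programmatically. Only the key names `dataroot_HR`, `dataroot_LR` and `pretrain_model_G` come from this README. The nesting under `datasets.train` and `path`, the helper `customize_train_options`, and the placeholder data paths are assumptions, so check `train_spsr.json` and `options/options.py` for the actual layout.

```python
import copy
import json


def customize_train_options(options, hr_root, lr_root, pretrained_g=None):
    """Return a copy of the options with data paths and optional pretrained weights set."""
    updated = copy.deepcopy(options)  # leave the caller's dict untouched
    # Assumed nesting: datasets -> train -> dataroot_HR / dataroot_LR
    train_set = updated.setdefault("datasets", {}).setdefault("train", {})
    train_set["dataroot_HR"] = hr_root
    train_set["dataroot_LR"] = lr_root
    if pretrained_g is not None:
        # Assumed nesting: path -> pretrain_model_G
        updated.setdefault("path", {})["pretrain_model_G"] = pretrained_g
    return updated


# Hypothetical starting options; the real file contains many more settings.
example = {"datasets": {"train": {"dataroot_HR": None, "dataroot_LR": None}}, "path": {}}
custom = customize_train_options(
    example,
    "/path/to/HR",
    "/path/to/LR",
    "./experiments/pretrain_models/RRDB_PSNR_x4.pth",
)
print(json.dumps(custom, indent=2))
```

Editing the JSON file by hand achieves the same result; a helper like this is mainly useful when launching several experiments with different data roots.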

To generate SR images by an SPSR model:

    python test.py -opt options/test/test_spsr.json

- Similar to training, the configuration can be modified in the `test_spsr.json` file.
- You can find your results in `./results`.
- We provide the SPSR model used in our paper, which can be downloaded from Google Drive or Baidu Drive (extraction code muw3). Download `spsr.pth` and put it into `./experiments/pretrain_models`. Then set the path of the pretrained model in `test_spsr.json` and run `test.py`.
- To test your own LR images, put them in a folder, point the `dataroot_LR` setting in `test_spsr.json` to that folder, and run `test.py`.
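
Before pointing `dataroot_LR` at your own folder, it can help to check which files in it look like images. The sketch below is a standalone helper and not part of this repository; the function name `list_lr_images` and the extension list are assumptions and may not match what the data loader actually accepts.

```python
import os

# Assumed set of accepted image formats; adjust to match the data loader.
IMAGE_EXTENSIONS = (".png", ".jpg", ".jpeg", ".bmp")


def list_lr_images(folder):
    """Return sorted paths of image files located directly inside `folder`."""
    names = sorted(os.listdir(folder))
    return [os.path.join(folder, name) for name in names
            if name.lower().endswith(IMAGE_EXTENSIONS)]
```

An empty result usually means the path is wrong or the images sit in a subfolder.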

We provide an easy-to-use evaluation toolbox to simplify the evaluation of SR results. With this toolbox, you can compute the MA, NIQE, PI, PSNR, SSIM, MSE, RMSE, MAE and LPIPS values of any SR results you want to evaluate.
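
For reference, the simplest of these metrics follow directly from their textbook definitions. The sketch below computes MSE, RMSE, MAE and PSNR on flattened sequences of 8-bit pixel values. It is not the toolbox's implementation, which may differ in details such as the color channel used or border cropping, and the function names are chosen here for illustration.

```python
import math


def mse(a, b):
    """Mean squared error between two equal-length pixel sequences."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def rmse(a, b):
    """Root mean squared error."""
    return math.sqrt(mse(a, b))


def mae(a, b):
    """Mean absolute error."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def psnr(a, b, peak=255.0):
    """Peak signal-to-noise ratio in dB; infinite for identical inputs."""
    err = mse(a, b)
    if err == 0:
        return math.inf
    return 10 * math.log10(peak ** 2 / err)
```

MA, NIQE, PI, SSIM and LPIPS involve learned or statistical models and are not reproduced here.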

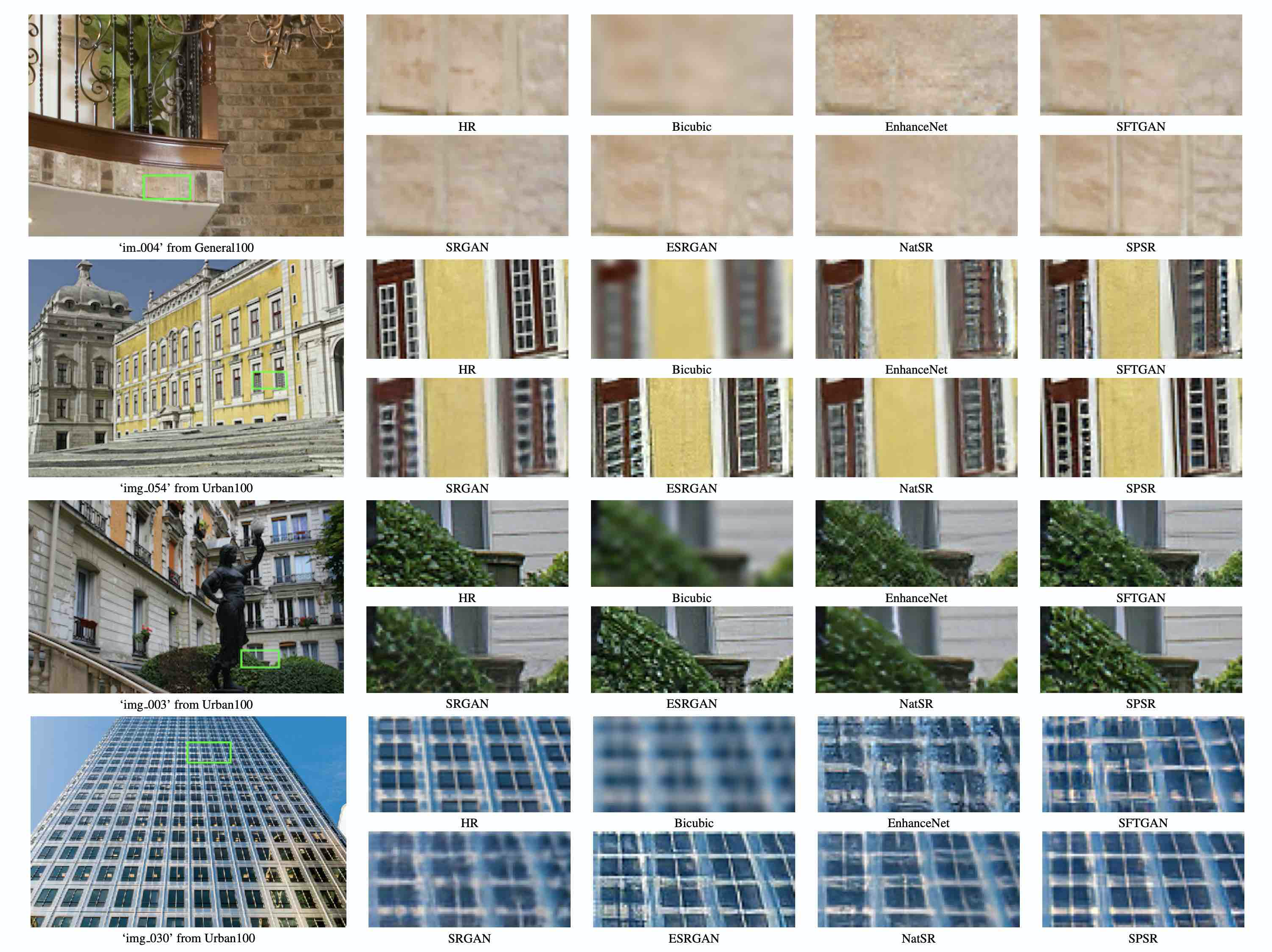

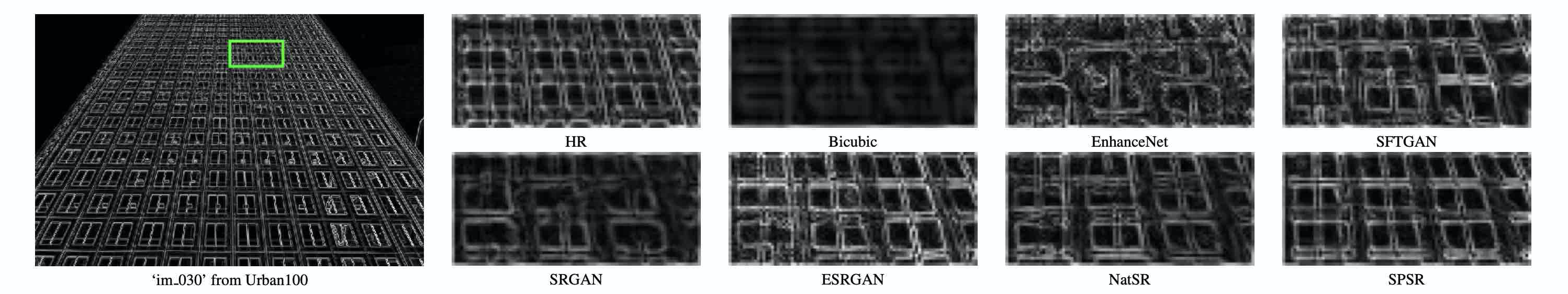

The two tables below compare SPSR with perceptual-driven SR methods. SPSR obtains the best PI and LPIPS scores (lower is better for both) while keeping PSNR and SSIM values comparable. The top 2 scores are highlighted.

PI/LPIPS comparison with perceptual-driven SR methods.

| Method | Set5 | Set14 | BSD100 | General100 | Urban100 |
|---|---|---|---|---|---|
| Bicubic | 7.3699/0.3407 | 7.0268/0.4393 | 7.0026/0.5249 | 7.9365/0.3528 | 6.9435/0.4726 |
| SFTGAN | 3.7587/0.0890 | 2.9063/0.1481 | 2.3774/0.1769 | 4.2878/0.1030 | 3.6136/0.1433 |
| SRGAN | 3.9820/0.0882 | 3.0851/0.1663 | 2.5459/0.1980 | 4.3757/0.1055 | 3.6980/0.1551 |
| ESRGAN | 3.7522/0.0748 | 2.9261/0.1329 | 2.4793/0.1614 | 4.3234/0.0879 | 3.7704/0.1229 |
| NatSR | 4.1648/0.0939 | 3.1094/0.1758 | 2.7801/0.2114 | 4.6262/0.1117 | 3.6523/0.1500 |
| SPSR | 3.2743/0.0644 | 2.9036/0.1318 | 2.3510/0.1611 | 4.0991/0.0863 | 3.5511/0.1184 |

PSNR/SSIM comparison with perceptual-driven SR methods.

| Method | Set5 | Set14 | BSD100 | General100 | Urban100 |
|---|---|---|---|---|---|
| Bicubic | 28.420/0.8245 | 26.100/0.7850 | 25.961/0.6675 | 28.018/0.8282 | 23.145/0.9011 |
| SFTGAN | 29.932/0.8665 | 26.223/0.7854 | 25.505/0.6549 | 29.026/0.8508 | 24.013/0.9364 |
| SRGAN | 29.168/0.8613 | 26.171/0.7841 | 25.459/0.6485 | 28.575/0.8541 | 24.397/0.9381 |
| ESRGAN | 30.454/0.8677 | 26.276/0.7783 | 25.317/0.6506 | 29.412/0.8546 | 24.360/0.9453 |
| NatSR | 30.991/0.8800 | 27.514/0.8140 | 26.445/0.6831 | 30.346/0.8721 | 25.464/0.9505 |
| SPSR | 30.400/0.8627 | 26.640/0.7930 | 25.505/0.6576 | 29.414/0.8537 | 24.799/0.9481 |

The code is based on BasicSR, MA, NIQE, PI, SSIM and LPIPS.

If you have any questions about our work, please contact macheng17@mails.tsinghua.edu.cn