A set of AWS services for downloading and ingesting Zoom meeting videos into Opencast

The Zoom Ingester (a.k.a., "Zoom Ingester Pipeline", a.k.a., "ZIP") is Harvard DCE's mechanism for moving Zoom recordings out of the Zoom service and into our own video management and delivery system, Opencast. It allows DCE to deliver recorded Zoom class meetings and lectures alongside our other, non-Zoom video content.

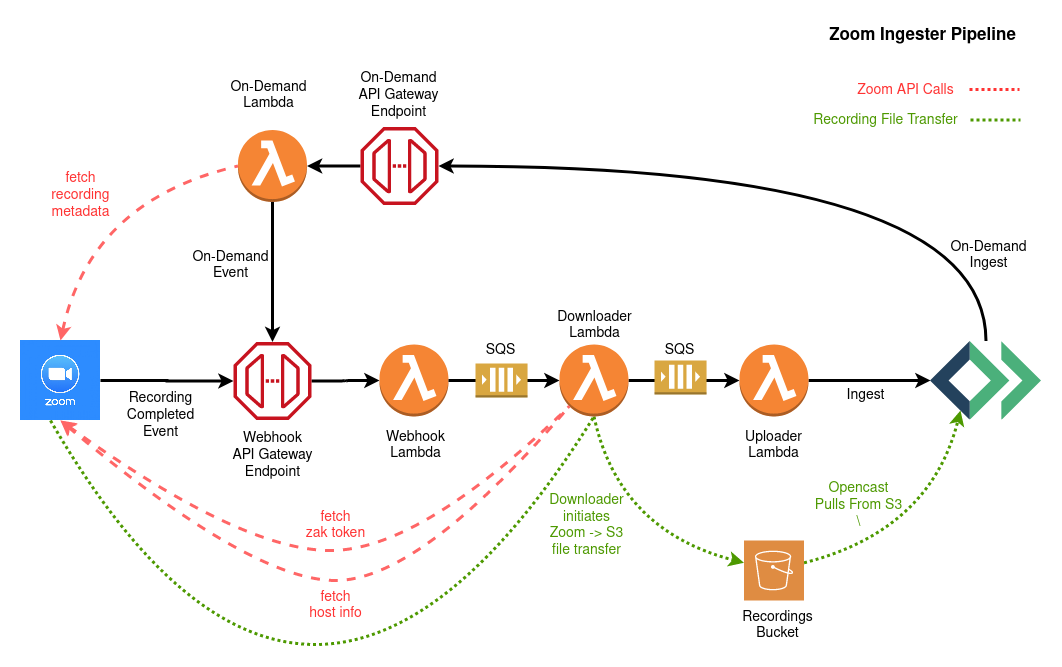

When deployed, the pipeline will have an API endpoint that must be registered in your Zoom account as a receiver of completed recording events. When Zoom has completed the processing of a recorded meeting video it will send a "webhook" notification to the pipeline's endpoint. From there the recording metadata will be passed along through a series of queues and Lambda functions before finally being ingested into Opencast. Along the way, the actual recording files will be fetched from Zoom and stored in S3. Alternatively, from the Opencast admin interface, a user can kick off an "on-demand" ingestion by entering the identifier of a Zoom recording and the corresponding Opencast series into which it should be ingested. The On-Demand ingest function then fetches the recording metadata from the Zoom API and emulates a standard webhook.
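As a rough illustration of the first step in that flow, the sketch below shows how a webhook receiver might pull the recording metadata out of a notification body before passing it down the pipeline. It is not the pipeline's actual code: the function name `extract_recording` and the shape of the returned dict are hypothetical, and the payload field names (`event`, `payload.object`, `recording_files`, `download_url`) reflect our reading of Zoom's "recording completed" event and should be checked against Zoom's documentation.

```python
import json

# Event name Zoom is assumed to use for "All recordings have completed".
RECORDING_COMPLETED = "recording.completed"


def extract_recording(event_body: str) -> dict:
    """Pull the fields a downloader would need out of a webhook body.

    Hypothetical helper: the field names below are assumptions about the
    Zoom payload, not taken from this project's source.
    """
    body = json.loads(event_body)
    if body.get("event") != RECORDING_COMPLETED:
        raise ValueError(f"unexpected event type: {body.get('event')!r}")

    obj = body["payload"]["object"]
    return {
        "uuid": obj["uuid"],            # unique id of this recording
        "zoom_series_id": obj["id"],    # the Zoom meeting number
        "topic": obj.get("topic", ""),
        "start_time": obj["start_time"],
        "files": [
            {"type": f.get("recording_type"), "url": f["download_url"]}
            for f in obj.get("recording_files", [])
        ],
    }
```

In a real deployment the returned dict would be serialized and placed on a queue for the next function to pick up; that part is omitted here because it depends on the stack's resources.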

Info on Zoom's API and webhook functionality can be found at:

- python 3.8+

- the python `virtualenv` package

- AWS CLI installed and configured https://docs.aws.amazon.com/cli/latest/userguide/cli-chap-install.html

- node.js 10.3 or higher

- the `aws-cdk` node.js toolkit installed

  - this is usually just `npm install -g aws-cdk`

  - it's best if the version matches the version of the `aws-cdk.core` package in `requirements.txt`

  - see https://docs.aws.amazon.com/cdk/latest/guide/getting_started.html for more info

- an Opsworks Opencast cluster, including:

  - the base url of the admin node

  - the user/pass combo of the Opencast API system account user

  - Opencast database password

- A Zoom account API key and secret

- Email of Zoom account with privileges to download recordings

- An email address to receive alerts and other notifications

- Make sure to have run `aws configure` at some point so that you have at least one set of credentials in an `~/.aws/credentials` file.

- Make a python virtualenv and activate it however you normally do those things, e.g.: `virtualenv venv && source venv/bin/activate`

- Python dependencies are handled via `pip-tools`, so you need to install that first: `pip install pip-tools`

- Install the dependencies by running `pip-sync`.

- Copy `example.env` to `.env` and update as necessary. See inline comments for an explanation of each setting.

- If you have more than one set of AWS credentials configured you can set `AWS_PROFILE` in your `.env` file. Otherwise you'll need to remember to set it in your shell session prior to any `invoke` commands.

- Run `invoke test` to confirm the installation.

- (Optional) run `invoke -l` to see a list of all available tasks + descriptions.

- Make sure your s3 bucket for packaged lambda code exists. The name of the bucket comes from `LAMBDA_CODE_BUCKET` in `.env`.

- Run `invoke stack.create` to build the CloudFormation stack.

- (Optional for dev) Populate the Zoom meeting schedule database. See the Schedule DB section below for more details.

  - Export the DCE Zoom schedule google spreadsheet to a CSV file.

  - Run `invoke schedule.import-csv [filepath]`.

That's it. Your Zoom Ingester is deployed and operational. To see a summary of the state of the CloudFormation stack and the Lambda functions, run `invoke stack.status`.

Once the Zoom Ingester pipeline is operational you can configure your Zoom account to send completed recording notifications to it via the Zoom Webhook settings.

- Get your ingester's webhook endpoint URL. You can find it in the `invoke stack.status` output or by browsing to the release stage of your API Gateway REST api.

- Go to marketplace.zoom.us and log in. Under "Develop" select "Build App."

- Give your app a name. Turn off "Intend to publish this app on Zoom Marketplace." Choose app type "Webhook only app."

- Click "Create." Fill out the rest of the required information, and enter the API endpoint under "Event Subscription."

- Make sure to subscribe to "All recordings have completed" events.

- Activate the app when desired. (For development it's recommended that you only leave the notifications active while you're actively testing.)

The easiest way to find the on-demand ingest endpoint is to run `invoke stack.status`.

The on-demand endpoint appears in the stack Outputs listing. Look for the row where ExportName is something like `my-zip-stack-ingest-url`.

Opencast can send on-demand ingest requests to this endpoint as POST requests.

The payload parameter `uuid`, a unique Zoom recording id, is required.

The payload parameters `oc_series_id`, the Opencast series id, and `allow_multiple_ingests`, whether to allow multiple ingests of the same Zoom recording, are optional.
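For anyone testing the endpoint outside of Opencast, a request along these lines could be assembled with the Python standard library. This is only a sketch: it assumes the payload is sent as a JSON body, and the helper name `build_ingest_request` and the example URL are hypothetical. Only the three parameter names come from the description above.

```python
import json
import urllib.request


def build_ingest_request(endpoint_url, uuid, oc_series_id=None,
                         allow_multiple_ingests=None):
    """Build (but do not send) a POST request for the on-demand endpoint.

    Assumption: the endpoint accepts a JSON body. Optional parameters are
    left out of the payload entirely when not provided.
    """
    payload = {"uuid": uuid}  # required
    if oc_series_id is not None:
        payload["oc_series_id"] = oc_series_id
    if allow_multiple_ingests is not None:
        payload["allow_multiple_ingests"] = allow_multiple_ingests

    return urllib.request.Request(
        endpoint_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Example usage (placeholder URL; substitute the value from the stack Outputs):
# req = build_ingest_request("https://example.invalid/ingest", "abc==",
#                            oc_series_id="20210112345")
# with urllib.request.urlopen(req) as resp:
#     print(resp.status, resp.read())
```

Building the request separately from sending it makes it easy to inspect the payload before anything reaches the pipeline.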

- Create a dev/test stack by setting your `.env` `STACK_NAME` to a unique value.

- Follow the usual stack creation steps outlined at the top.

- Make changes.

- Run `invoke deploy.all --do-release` to push changes to your Lambda functions. Alternatively, to save time, if you are only editing one function, run `invoke deploy.[function name] --do-release`.

- If you make changes to the provisioning code in `./cdk` you must also (or instead) run

  - `invoke stack.diff` to inspect the changes

  - `invoke stack.update` to apply the changes

- Run `invoke exec.webhook [options]` to initiate the pipeline. See below for options.

- Repeat.

Options: --oc-series-id=XX

This task will recreate the webhook notification for the recording identified by `uuid` and manually invoke the `/new_recording` api endpoint.

Options: --oc-series-id=XX

Similar to `exec.webhook` except that this also triggers the downloader and uploader functions to run and reports success or error for each.

Options: --oc-series-id=XXX --allow-multiple-ingests

This task will manually invoke the `/ingest` endpoint. This is the endpoint used by the Opencast "Zoom+" tool. Specify an Opencast series id with `--oc-series-id=XX`.

Allow multiple ingests of the same recording (for testing purposes) with `--allow-multiple-ingests`.

Incoming Zoom recordings are ingested to an Opencast series based on two pieces of information:

- The Zoom meeting number. AKA the Zoom series id.

- The time the recording was made

The Zoom Ingester pipeline includes a DynamoDB table that stores information about when Zoom classes are held. This is because the same Zoom series id can be used by different courses. To determine the correct Opencast series that the recording should be ingested to we need to also know what time the meeting occurred.

The current authority for Zoom meeting schedules is a Google spreadsheet. To populate our DynamoDB table from the spreadsheet data we have to export the spreadsheet to CSV and then import it to DynamoDB using the `invoke schedule.import-csv [filepath]` task.

If a lookup to the DynamoDB schedule data does not find a mapping, the uploader function will log a message to that effect and return. During testing/development, this can be overridden by setting `DEFAULT_SERIES_ID` in the lambda function's environment. Just set that to whatever test series you want to use and all unmapped meetings will be ingested to that series.
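The lookup described in this section can be sketched roughly as follows. Everything here is illustrative: the in-memory `SCHEDULE` dict stands in for the DynamoDB table, its record layout (`weekday`, `start`, `opencast_series_id`) is hypothetical, and the 30-minute tolerance is an arbitrary choice. Time zones are ignored for brevity.

```python
from datetime import datetime, timedelta

# Hypothetical schedule records keyed by Zoom series id (meeting number).
# The real DynamoDB item layout may differ.
SCHEDULE = {
    "123456789": [
        {"weekday": 0, "start": "09:00", "opencast_series_id": "20210112345"},
        {"weekday": 2, "start": "18:30", "opencast_series_id": "20210167890"},
    ],
}


def find_series(zoom_series_id, recorded_at, default_series_id=None,
                tolerance_minutes=30):
    """Return the Opencast series for a recording, or the default if unmapped.

    A schedule entry matches when it falls on the same weekday and its start
    time is within `tolerance_minutes` of when the recording was made.
    """
    for entry in SCHEDULE.get(zoom_series_id, []):
        if entry["weekday"] != recorded_at.weekday():
            continue
        hour, minute = map(int, entry["start"].split(":"))
        scheduled = recorded_at.replace(
            hour=hour, minute=minute, second=0, microsecond=0
        )
        if abs(recorded_at - scheduled) <= timedelta(minutes=tolerance_minutes):
            return entry["opencast_series_id"]
    # No mapping found: fall back to the default (None means "do not ingest").
    return default_series_id
```

Passing the value of `DEFAULT_SERIES_ID` as `default_series_id` mirrors the development override: any recording without a schedule match is routed to the test series instead of being skipped.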

This project uses the `invoke` python library to provide a simple task cli. Run `invoke -l` to see a list of available commands. The descriptions below are listed in the likely order you would run them and/or their importance.

Does the following:

- Packages each function and uploads the zip files to your s3 code bucket

- Builds all of the AWS resources as part of a CloudFormation stack using the AWS CDK tool

- Releases an initial version "1" of each Lambda function

Notes:

- When running this command (and `stack.update` as well) you will be presented with a confirmation prompt to approve some of the provisioning operations or changes, typically those related to security and/or permissions.

- The output from this command can be a bit verbose. You will see real-time updates from CloudFormation as resource creation is initiated and completed.

Use stack.update to modify an existing stack.

This will output some tables of information about the current state of the CloudFormation stack and the Lambda functions.

Execute the CodeBuild project. This is the command that should be used to deploy and release new versions of the pipeline functions in a production environment.

--revision is a required argument.

The build steps that CodeBuild will perform are defined in buildspec.yml.

View a diff of CloudFormation changes to the stack.

Apply changes to the CloudFormation stack.

Delete the stack.

Enable/disable debug logging in the Lambda functions. This task adds or modifies a `DEBUG` environment variable in the Lambda function(s) settings.
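On the function side, a variable like this is typically read at startup to choose a log level. The snippet below is a minimal sketch of that pattern, not the project's own code: the logger name `zoom_ingester` and the set of values treated as "on" are assumptions.

```python
import logging
import os


def configure_logging(env=None):
    """Return a logger whose level depends on a DEBUG environment variable.

    Assumption: "1", "true" and "yes" (case-insensitive) enable debug output;
    anything else, or an unset variable, leaves the level at INFO.
    """
    env = os.environ if env is None else env
    debug = env.get("DEBUG", "").strip().lower() in ("1", "true", "yes")
    logger = logging.getLogger("zoom_ingester")  # hypothetical logger name
    logger.setLevel(logging.DEBUG if debug else logging.INFO)
    return logger
```

Accepting the environment as an argument keeps the function easy to exercise in unit tests without touching the real process environment.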

Does a bulk pip-compile upgrade of all base and function requirements.

Dependencies for the project as a whole and the individual functions are managed using the `pip-tools` command, `pip-compile`. Top-level dependencies are listed in a `.in` file which is then compiled to a "locked" `.txt` version like so:

pip-compile -o requirements.txt requirements.in

Both the `.in` and `.txt` files are version-controlled, so the initial compile was only necessary once. Now we only have to run `pip-compile` in a couple of situations:

- when upgrading a particular package.

- to update the project's base requirements list if a dependency for a specific function is changed

In the first case you run `pip-compile -P [package-name] [source file]` where `[source file]` is the `.in` file getting the update.

Following that you must run `pip-compile` in the project root to pull the function-specific change(s) into the main project list.

Finally, run `pip-sync` to ensure the packages are updated in your virtualenv.

The lambda python functions each have associated unit tests. To run them manually, execute:

invoke test

Alternatively you can run tox.

Lambda functions employ the concepts of "versions" and "aliases". Each time you push new code to a Lambda function it updates a special version signifier, `$LATEST`. If you wish to assign an actual version number to what is referenced by `$LATEST` you "publish" a new version. Versions are immutable and version numbers always increment by 1.

Aliases allow us to control which versions of the Lambda functions are invoked by the system. The Zoom Ingester uses a single alias defined by the `.env` variable, `LAMBDA_RELEASE_ALIAS` (default "live"), to designate the currently released versions of each function. A new version of each function can be published independent of the alias as many times as you like. It is only when the release alias is updated to point to one of the new versions that the behavior of the ingester will change.
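To make the publish-then-repoint sequence concrete, here is a small sketch using the two relevant calls on a boto3 Lambda client, `publish_version` and `update_alias`. It is an illustration of the concept rather than this project's release code: the helper name `release` and the example function name are hypothetical, and the client is passed in so the snippet does not depend on AWS credentials to be read or tested.

```python
def release(lambda_client, function_name, alias="live"):
    """Publish whatever $LATEST currently holds, then point the alias at it.

    `lambda_client` is expected to behave like boto3.client("lambda").
    Returns the newly published version number as a string.
    """
    # Step 1: freeze the current $LATEST code as an immutable numbered version.
    published = lambda_client.publish_version(FunctionName=function_name)
    version = published["Version"]

    # Step 2: move the release alias. Until this call, callers that invoke
    # the alias keep running the previously released version.
    lambda_client.update_alias(
        FunctionName=function_name,
        Name=alias,
        FunctionVersion=version,
    )
    return version


# Example usage (requires boto3 and AWS credentials; function name is made up):
# import boto3
# release(boto3.client("lambda"), "my-zip-stack-zoom-downloader-function")
```

Keeping the two steps separate is what allows a version to be published and inspected without changing what the pipeline actually runs.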

When you first build the stack using the above "Initial Setup" steps, the version of the Lambda functions code will be whatever was current in your cloned repo. The Lambda function versions will be set to "1" and the release aliases ("live") will be pointing to this same version. At this point you may wish to re-release a specific tag or branch of the function code. In a production environment this should be done via the CodeBuild project, like so:

invoke codebuild -r release-v1.0.0

This command will trigger CodeBuild to package and release the function code from the GitHub repo identified by the "release-v1.0.0" tag. Each function will have a new Lambda version "2" published and the release alias will be updated to point to this version.

Merge all changes for release into master.

Checkout master branch, git pull.

First check that the codebuild runs with the new changes on a dev stack:

If there are new functions you must package and ensure the code is in s3:

invoke package -u

then

invoke stack.update

invoke codebuild --revision=master

Make sure codebuild completes successfully.

First update your tags:

git tag -l | xargs git tag -d

git fetch --tags

Then tag release:

git tag release-vX.X.X

git push --tags

Make sure you are working on the correct Zoom Ingester stack and double-check your environment variables. Then:

If there are new functions you must package and ensure the code is in s3:

invoke package -u

then

invoke stack.update

invoke codebuild --revision=release-vX.X.X