This repository is the official implementation of SAM2Long.

SAM2Long: Enhancing SAM 2 for Long Video Segmentation with a Training-Free Memory Tree

Shuangrui Ding, Rui Qian, Xiaoyi Dong, Pan Zhang

Yuhang Zang, Yuhang Cao, Yuwei Guo, Dahua Lin, Jiaqi Wang

CUHK, Shanghai AI Lab

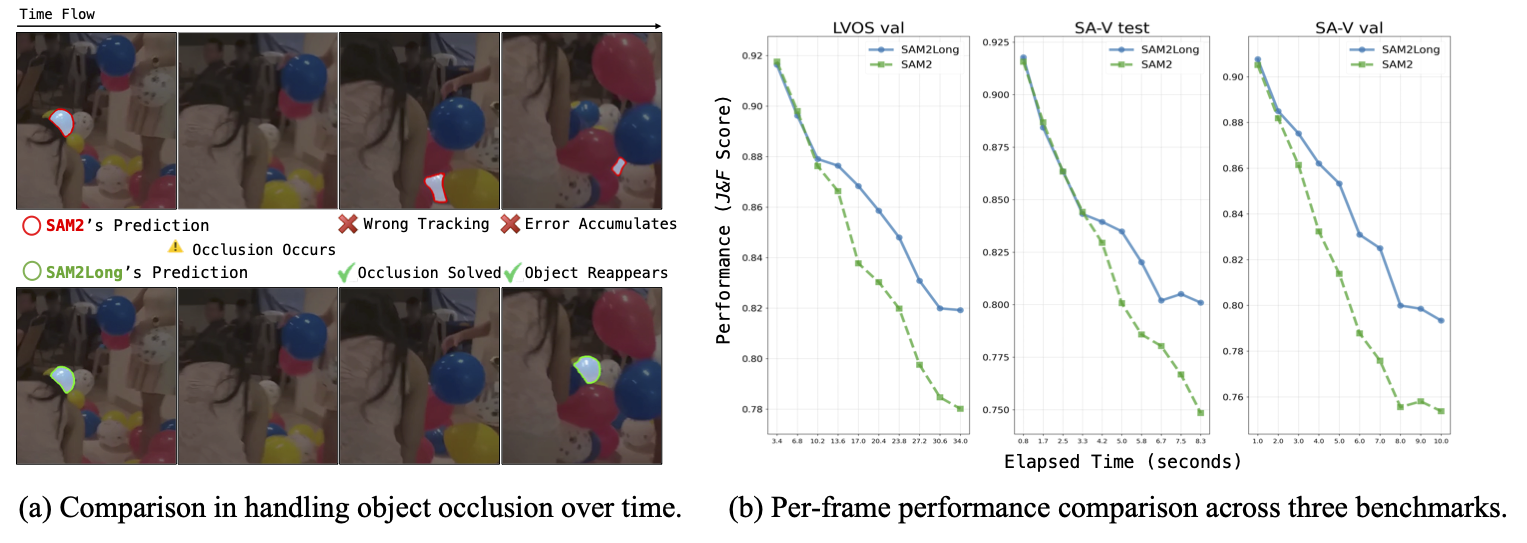

SAM2Long significantly improves upon SAM 2 by addressing the error accumulation issue, particularly in challenging long-term video scenarios involving object occlusion and reappearance. With SAM2Long, segmentation becomes more resilient and accurate over time, maintaining strong performance even as objects are occluded or reappear in the video stream.

SAM2Long introduces a training-free memory tree that effectively reduces the risk of error propagation over time. By maintaining diverse segmentation hypotheses and dynamically pruning less optimal paths as the video progresses, this approach enhances segmentation without the need for additional parameters or further training. It maximizes the potential of SAM 2 to deliver better results in complex video scenarios.
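The idea of keeping several hypotheses alive and pruning the weaker ones can be illustrated with a small toy sketch. This is not the SAM2Long implementation: the names `Pathway`, `track`, and `propose`, the string mask ids, and the rule of summing per-frame confidences are all assumptions made for illustration. The real method operates on SAM 2 masks and memory, and its exact scoring and selection rules are described in the paper.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

Candidate = Tuple[str, float]  # (hypothetical mask id, confidence score)


@dataclass
class Pathway:
    """One segmentation hypothesis: the mask chosen per frame and a running score."""
    masks: List[str] = field(default_factory=list)
    score: float = 0.0


def track(propose: Callable[[List[str], int], List[Candidate]],
          num_frames: int,
          max_pathways: int) -> Pathway:
    """Branch every pathway on its candidate masks, then keep the best few."""
    pathways = [Pathway()]
    for frame in range(num_frames):
        expanded = [
            Pathway(p.masks + [mask_id], p.score + confidence)
            for p in pathways
            for mask_id, confidence in propose(p.masks, frame)
        ]
        # Prune: keep only the highest-scoring hypotheses.
        expanded.sort(key=lambda p: p.score, reverse=True)
        pathways = expanded[:max_pathways]
    return pathways[0]


def propose(history: List[str], frame: int) -> List[Candidate]:
    """Toy candidate generator whose output depends on the pathway's history."""
    if not history:
        return [("A", 0.9), ("B", 0.8)]   # "A" looks slightly better at first
    if history[-1] == "A":
        return [("A", 0.2), ("B", 0.1)]   # ...but committing to "A" leads to drift
    return [("A", 0.3), ("B", 0.9)]       # the "B" hypothesis stays reliable


greedy = track(propose, num_frames=2, max_pathways=1)
tree = track(propose, num_frames=2, max_pathways=2)
print("single pathway:", greedy.masks)
print("two pathways:  ", tree.masks)
```

With a single pathway the early mistake is locked in, while keeping two pathways lets the initially lower-scoring hypothesis win once later frames favor it. No parameters are learned anywhere in this loop, which is the sense in which such a search is training-free.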

SAM2Long pushes the performance limits of SAM 2 further across various video object segmentation benchmarks, with an average improvement of 3 points in J & F across all 24 head-to-head comparisons on long-term video datasets such as SA-V and LVOS.

The table below provides a one-to-one comparison between SAM 2 and SAM2Long using the improved SAM 2.1 checkpoints.

| Method | Backbone | SA-V val (J & F) | SA-V test (J & F) | LVOS v2 (J & F) |
|---|---|---|---|---|
| SAM 2 | Tiny | 73.5 | 74.6 | 77.8 |
| SAM2Long | Tiny | 77.0 | 78.7 | 81.4 |
| SAM 2 | Small | 73.0 | 74.6 | 79.7 |
| SAM2Long | Small | 77.7 | 78.1 | 83.2 |
| SAM 2 | Base+ | 75.4 | 74.6 | 80.2 |
| SAM2Long | Base+ | 78.4 | 78.5 | 82.3 |
| SAM 2 | Large | 76.3 | 75.5 | 83.0 |
| SAM2Long | Large | 80.8 | 80.8 | 85.2 |

The table below provides a one-to-one comparison between SAM 2 and SAM2Long using the SAM 2 checkpoints.

| Method | Backbone | SA-V val (J & F) | SA-V test (J & F) | LVOS v2 (J & F) |
|---|---|---|---|---|
| SAM 2 | Tiny | 75.1 | 76.3 | 81.6 |
| SAM2Long | Tiny | 78.9 | 79.0 | 82.4 |
| SAM 2 | Small | 76.9 | 76.9 | 82.1 |
| SAM2Long | Small | 79.6 | 80.4 | 84.3 |
| SAM 2 | Base+ | 78.0 | 77.7 | 83.1 |
| SAM2Long | Base+ | 80.5 | 80.8 | 85.2 |
| SAM 2 | Large | 78.6 | 79.6 | 84.0 |
| SAM2Long | Large | 81.1 | 81.2 | 85.3 |
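The headline figure of an average gain of about 3 points over 24 comparisons can be checked directly from the two tables above. The snippet below is a small arithmetic check, not part of the repository; the variable names are illustrative, and the numbers are copied by hand from the tables (4 backbones × 3 benchmarks × 2 checkpoint families).

```python
from statistics import mean

# (SAM 2, SAM2Long) J & F pairs copied from the two tables above, ordered
# SA-V val, SA-V test, LVOS v2 for the Tiny, Small, Base+, and Large backbones.
FIRST_TABLE = [
    (73.5, 77.0), (74.6, 78.7), (77.8, 81.4),
    (73.0, 77.7), (74.6, 78.1), (79.7, 83.2),
    (75.4, 78.4), (74.6, 78.5), (80.2, 82.3),
    (76.3, 80.8), (75.5, 80.8), (83.0, 85.2),
]
SECOND_TABLE = [
    (75.1, 78.9), (76.3, 79.0), (81.6, 82.4),
    (76.9, 79.6), (76.9, 80.4), (82.1, 84.3),
    (78.0, 80.5), (77.7, 80.8), (83.1, 85.2),
    (78.6, 81.1), (79.6, 81.2), (84.0, 85.3),
]

# Per-comparison gain of SAM2Long over SAM 2.
gains = [ours - base for base, ours in FIRST_TABLE + SECOND_TABLE]
print(f"{len(gains)} comparisons, mean gain {mean(gains):.2f}, max gain {max(gains):.1f}")
```

Every one of the 24 gains is positive, and the mean rounds to 3.0.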

Please follow the installation instructions in the official SAM 2 repo. If you encounter issues running the code, we recommend creating a new environment specifically for SAM2Long instead of sharing one with SAM 2. For further details, please check this issue here.

All the model checkpoints can be downloaded by running:

```bash
cd checkpoints && \
./download_ckpts.sh && \
cd ..
```
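After the download finishes, it can be useful to confirm that the checkpoint files are actually in place before starting inference. The helper below is a hypothetical convenience, not part of this repository; it assumes the script saves its files into the `checkpoints` directory and that they carry a `.pt` suffix, so adjust both if your setup differs.

```python
from pathlib import Path
from typing import List


def list_checkpoints(checkpoint_dir: str = "checkpoints", suffix: str = ".pt") -> List[Path]:
    """Return the checkpoint files found in `checkpoint_dir`, sorted by name.

    The default directory and the `.pt` suffix are assumptions for illustration.
    """
    root = Path(checkpoint_dir)
    if not root.is_dir():
        raise FileNotFoundError(f"checkpoint directory not found: {root}")
    return sorted(p for p in root.iterdir() if p.is_file() and p.suffix == suffix)
```

Calling `list_checkpoints()` from the repository root returns the downloaded files; an empty list suggests the download did not complete.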

Inference instructions are in INFERENCE.md.

The evaluation code can be found here.

To evaluate performance on seen and unseen categories in the LVOS dataset, refer to the evaluation code available here.

Shuangrui Ding: mark12ding@gmail.com

The majority of this project is released under the CC-BY-NC 4.0 license as found in the LICENSE file. The original SAM 2 model checkpoints and SAM 2 training code are licensed under Apache 2.0.

I would like to thank Yixuan Wang for his assistance with dataset preparation and Haohang Xu for his insightful discussion.

This project is built upon SAM 2 and the format of this README is inspired by VideoMAE.

If you find our work helpful for your research, please consider giving a star ⭐ and citation 📝.

```bibtex
@article{ding2024sam2long,
  title={SAM2Long: Enhancing SAM 2 for Long Video Segmentation with a Training-Free Memory Tree},
  author={Ding, Shuangrui and Qian, Rui and Dong, Xiaoyi and Zhang, Pan and Zang, Yuhang and Cao, Yuhang and Guo, Yuwei and Lin, Dahua and Wang, Jiaqi},
  journal={arXiv preprint arXiv:2410.16268},
  year={2024}
}
```