This repository provides the official PyTorch implementation of the following paper:

Large Language Models as Zero-shot Dialogue State Tracker through Function Calling

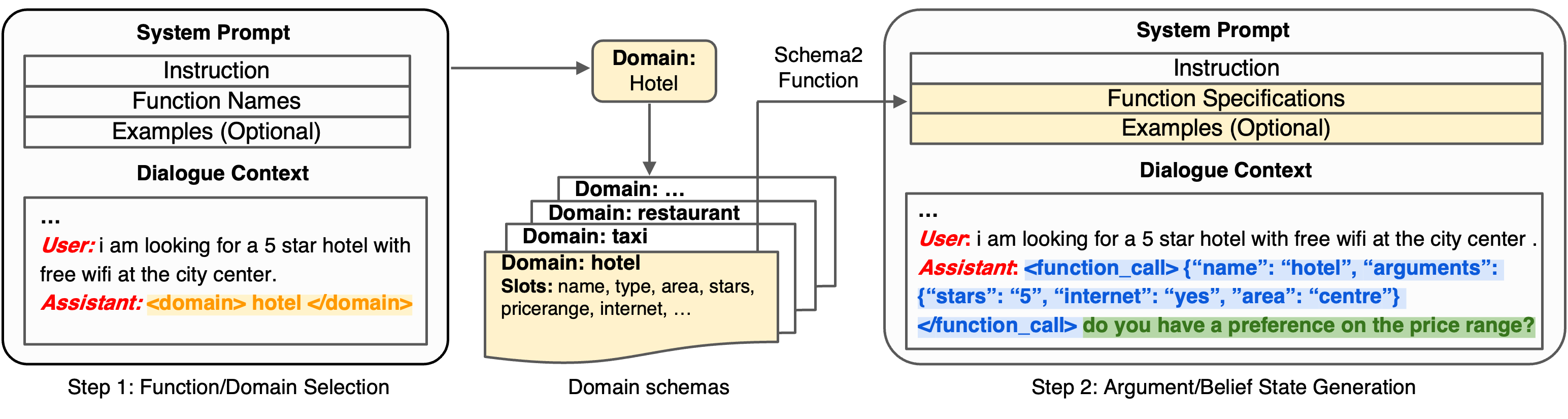

We introduce FnCTOD, a novel approach to zero-shot dialogue state tracking (DST) with large language models (LLMs). Our method seamlessly integrates DST into the assistant's output during chat completion. Specifically, we treat the schema of each task-oriented dialogue domain as a specific function, and DST for this domain as the process of "calling" the corresponding function. We thus instruct LLMs to generate function calls along with the response in the assistant's output. To achieve this, we convert the domain schema into function specifications, which include the function's description and required arguments, and incorporate them into the system prompt of the LLM. Additionally, we integrate these function calls into the assistant's output within the dialogue context.
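A minimal sketch of the idea above follows. It is not the repository's code. It assumes a hypothetical MultiWOZ-style schema dictionary (`HOTEL_SCHEMA`), a JSON-schema-style function specification similar to OpenAI function definitions, illustrative system-prompt wording, and a made-up `<fn_call>...</fn_call>` delimiter marking the call inside the assistant output. The prompt format and call syntax actually used by FnCTOD may differ.

```python
import json
import re

# Hypothetical MultiWOZ-style schema for one domain; the repository's own
# schema files may use different field names.
HOTEL_SCHEMA = {
    "domain": "hotel",
    "description": "search for a hotel to stay in",
    "slots": {
        "area": "area or place of the hotel",
        "pricerange": "price budget of the hotel",
        "stars": "star rating of the hotel",
    },
    "required": [],
}


def schema_to_function_spec(schema):
    """Turn one domain schema into a function specification (JSON-schema style)."""
    return {
        "name": schema["domain"],
        "description": schema["description"],
        "parameters": {
            "type": "object",
            "properties": {
                slot: {"type": "string", "description": desc}
                for slot, desc in schema["slots"].items()
            },
            "required": list(schema.get("required", [])),
        },
    }


def build_system_prompt(function_specs):
    """Place the function specifications in the system prompt (illustrative wording)."""
    header = "You are a helpful assistant. You may call the following functions:"
    return "\n".join([header] + [json.dumps(spec) for spec in function_specs])


# Hypothetical convention: the assistant emits <fn_call>{json}</fn_call>
# before its natural-language response.
FN_CALL = re.compile(r"<fn_call>(.*?)</fn_call>", re.DOTALL)


def split_assistant_output(text):
    """Return (function_call or None, response); the call's arguments are the dialogue state."""
    match = FN_CALL.search(text)
    if match is None:
        return None, text.strip()
    return json.loads(match.group(1)), text[match.end():].strip()


if __name__ == "__main__":
    print(build_system_prompt([schema_to_function_spec(HOTEL_SCHEMA)]))
    call, reply = split_assistant_output(
        '<fn_call>{"name": "hotel", "arguments": {"area": "north", "stars": "4"}}</fn_call>'
        " I found several 4-star hotels in the north."
    )
    print(call["arguments"])  # {'area': 'north', 'stars': '4'}
    print(reply)
```

Keeping the state as the arguments of a structured call, rather than as free text, means the dialogue state can be read back with an ordinary JSON parser once the call is located in the output.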

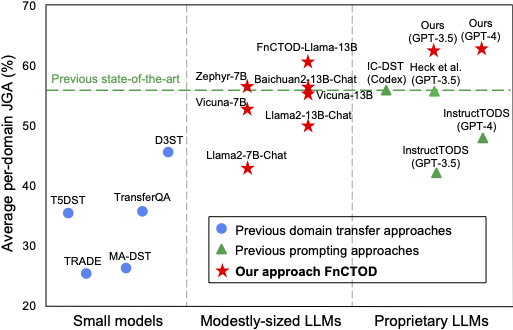

Zero-shot DST performance comparison among (1) previous domain transfer approaches using small models; (2) previous prompting approaches relying exclusively on advanced proprietary LLMs; and (3) our approach, which is compatible with various LLMs, enables various 7B and 13B models to achieve superior performance, and sets a new state of the art with GPT-4.

Detailed instructions for preparing the benchmark dataset MultiWOZ and the (optional) pre-training corpora are provided in the ./data folder.

- Requires Python 3.8 – 3.11

- Conda Environment Setup:

pip install -r requirements.txt

- Environment Variable Configuration: Set the following environment variables for local model inference in each evaluation script:

export TRANSFORMERS_CACHE='/HOME_PATH/.cache/huggingface/transformers'

export HF_HOME='/HOME_PATH/.cache/huggingface'

export OPENAI_API_KEY='XXXX'
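Before running any evaluation script, it can help to check the setup described above. The sketch below is a hypothetical helper, not part of the repository. It checks that the interpreter is within the stated 3.8 to 3.11 range and that the three variables above are set to something other than the `/HOME_PATH/` and `XXXX` placeholders.

```python
import os
import sys

# Variables named in this README; this checker is an illustrative helper,
# not part of the repository.
REQUIRED_VARS = ("TRANSFORMERS_CACHE", "HF_HOME", "OPENAI_API_KEY")
PLACEHOLDERS = ("/HOME_PATH/", "XXXX")


def python_version_supported(version_info=None):
    """True if the interpreter version falls within the supported 3.8-3.11 range."""
    major_minor = tuple((version_info or sys.version_info)[:2])
    return (3, 8) <= major_minor <= (3, 11)


def unset_or_placeholder(env=None):
    """Names of required variables that are empty or still contain a placeholder."""
    env = os.environ if env is None else env
    missing = []
    for name in REQUIRED_VARS:
        value = env.get(name, "")
        if not value or any(p in value for p in PLACEHOLDERS):
            missing.append(name)
    return missing


def preflight_problems():
    """Collect human-readable problems; an empty list means the setup looks ready."""
    problems = []
    if not python_version_supported():
        problems.append("Python %d.%d is outside 3.8-3.11" % sys.version_info[:2])
    problems += ["%s is not set" % name for name in unset_or_placeholder()]
    return problems
```

For example, a driver script could call `preflight_problems()` before loading any model and exit with the returned messages if the list is non-empty.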

Execute the following scripts located in the ./sh_folders/ directory to run inference with different models.

- inference_chatgpt.sh
- inference_fnctod-llama.sh
- inference_oss_models.sh
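If you prefer to launch these scripts from Python, a small wrapper can map a model family to one of the script names listed above. The sketch below is hypothetical. The short keys (`chatgpt`, `fnctod-llama`, `oss`) are made-up labels, and running from inside `sh_folders/` is an assumption carried over from the `cd sh_folders` steps used for training.

```python
import subprocess
from pathlib import Path

# Script names come from this README; the short keys are hypothetical labels.
INFERENCE_SCRIPTS = {
    "chatgpt": "inference_chatgpt.sh",
    "fnctod-llama": "inference_fnctod-llama.sh",
    "oss": "inference_oss_models.sh",
}


def inference_command(model_family):
    """Return the argv list that runs the inference script for a model family."""
    if model_family not in INFERENCE_SCRIPTS:
        raise ValueError(
            f"unknown model family {model_family!r}; choose from {sorted(INFERENCE_SCRIPTS)}"
        )
    return ["sh", INFERENCE_SCRIPTS[model_family]]


def run_inference(model_family, repo_root="."):
    """Run the chosen script from sh_folders/ (assumed working directory); raise on failure."""
    subprocess.run(inference_command(model_family), cwd=Path(repo_root) / "sh_folders", check=True)
```

For example, `run_inference("fnctod-llama")` from the repository root would execute `sh inference_fnctod-llama.sh` inside `sh_folders/`.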

- For each dataset used for training (CamRest676, MSE2E, SGD, Taskmaster, and WoZ), first process the data, then format it into our dialogue prompt for training. For example, for the SGD dataset:

cd sh_folders

sh processing-sgd.sh

sh prompting-sgd.sh

- Collect the data from the different datasets to create the fine-tuning data:

cd sh_folders

sh create_finetunedata.sh

- Fine-tune FnCTOD-Llama2:

cd sh_folders

sh finetune.sh
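The training steps above can be chained in order. The following sketch is not part of the repository; it builds the sequence of commands and runs them from `sh_folders/`. Only the SGD script names appear in this README, so the `processing-<dataset>.sh` and `prompting-<dataset>.sh` names and the lower-case dataset keys for CamRest676, MSE2E, Taskmaster, and WoZ are assumptions; check the actual file names in `sh_folders/` before use.

```python
import subprocess
from pathlib import Path

# Datasets named in this README. Only processing-sgd.sh and prompting-sgd.sh
# are confirmed; the names for the other datasets are guessed from that pattern.
TRAIN_DATASETS = ["camrest676", "mse2e", "sgd", "taskmaster", "woz"]


def training_commands(datasets=TRAIN_DATASETS):
    """Build the ordered list of shell commands for the fine-tuning pipeline."""
    commands = []
    for name in datasets:
        commands.append(["sh", f"processing-{name}.sh"])  # step 1: process the raw data
        commands.append(["sh", f"prompting-{name}.sh"])   # step 2: format as dialogue prompts
    commands.append(["sh", "create_finetunedata.sh"])      # step 3: collect all datasets
    commands.append(["sh", "finetune.sh"])                 # step 4: fine-tune FnCTOD-Llama2
    return commands


def run_pipeline(repo_root=".", datasets=TRAIN_DATASETS, dry_run=True):
    """Print each step; unless dry_run, also run it in sh_folders/ and stop on the first failure."""
    cwd = Path(repo_root) / "sh_folders"
    for cmd in training_commands(datasets):
        print("$", " ".join(cmd))
        if not dry_run:
            subprocess.run(cmd, cwd=cwd, check=True)
```

With `dry_run=True` (the default), the helper only prints the commands, which is a cheap way to confirm the file names. Pass `dry_run=False` to execute them; `check=True` makes the run stop at the first step that exits with an error.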

- UBAR: upon which our data processing code is built.

- PPTOD: upon which our evaluation code is built.

- FastChat: we borrowed the chat templates from this repository.

We thank the authors for their wonderful work.

See the LICENSE file for details about the license under which this code is made available.

If you find this work useful, please cite our paper:

@article{li2024large,
  title={Large Language Models as Zero-shot Dialogue State Tracker through Function Calling},
  author={Li, Zekun and Chen, Zhiyu Zoey and Ross, Mike and Huber, Patrick and Moon, Seungwhan and Lin, Zhaojiang and Dong, Xin Luna and Sagar, Adithya and Yan, Xifeng and Crook, Paul A},
  journal={arXiv preprint arXiv:2402.10466},
  year={2024}
}

In src/multiwoz/inference.py, change lines 21, 201, and 599 to your own locations.

Add your OpenAI API key in sh_folders/inference_chatgpt.sh.