This project was originally based on a-PyTorch-Tutorial-to-Transformers. I have since modified it heavily, reworking the hyperparameter configuration and implementing methods from other papers to improve the model's performance. The papers involved include:

- Attention Is All You Need

- Lessons on Parameter Sharing across Layers in Transformers

- RoFormer: Enhanced Transformer with Rotary Position Embedding

- ReZero is All You Need: Fast Convergence at Large Depth

- On Layer Normalization in the Transformer Architecture

- Understanding the Difficulty of Training Transformers

Results are documented in a spreadsheet here.

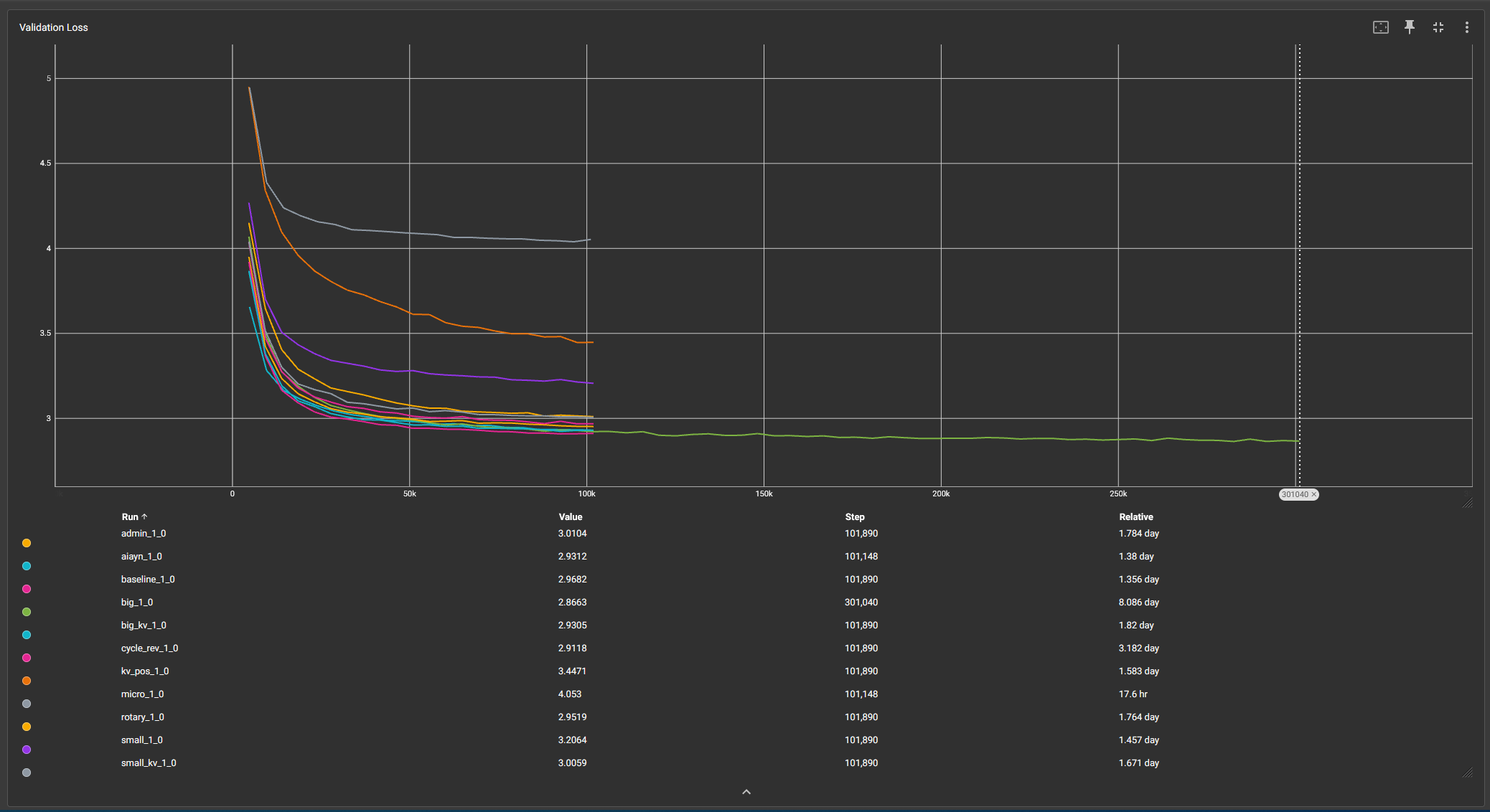

Example TensorBoard graph of validation loss across the different runs: