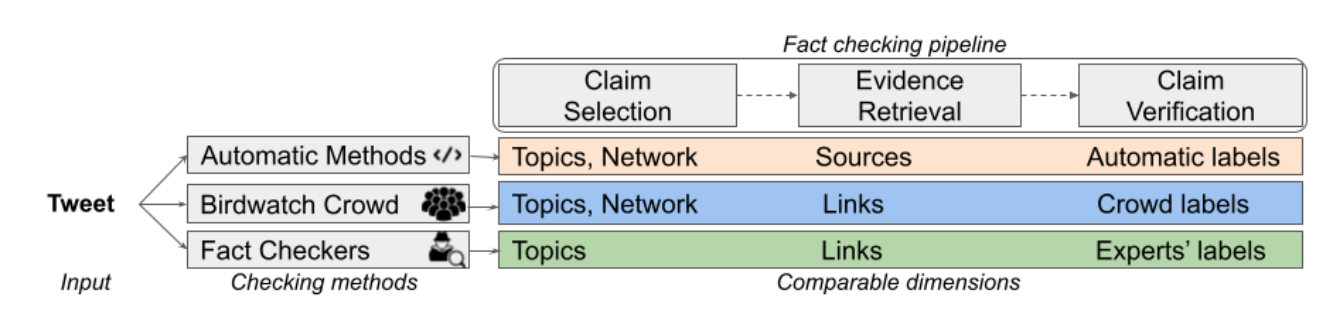

BirdWatch is a crowdsourcing-based approach for verifying misleading claims on Twitter. We analyze the initiative through the lens of the three main components of a fact-checking pipeline (claim detection based on check-worthiness, evidence retrieval, and claim verification) and compare it with expert fact-checkers and computational methods. This repository contains the code required to run the experiments of the associated paper.

Clone the repository and set up a virtual environment with a dedicated Jupyter kernel:

```bash
git clone https://github.com/MhmdSaiid/BirdWatch
cd BirdWatch
virtualenv birdwatch -p $(which python3)
source birdwatch/bin/activate
pip install -r requirements.txt
pip install ipykernel
python -m ipykernel install --user --name=birdwatch
```

Download the updated ClaimReview data into `data/`:

```bash
wget -O data/UpdatedClaimReview.zip https://nextcloud.eurecom.fr/s/8fJkTEQH9QeaaxQ/download/UpdatedClaimReview.zip
unzip data/UpdatedClaimReview.zip -d data/
rm -rf data/UpdatedClaimReview.zip
```

Download the `BW_BertTopic` model into `model/`:

```bash
wget -O BW_BertTopic.zip https://nextcloud.eurecom.fr/s/kqXfGg49iTEnJKR/download/BW_BertTopic.zip
unzip BW_BertTopic.zip -d model/
rm -rf BW_BertTopic.zip
```
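
If `wget` or `unzip` is not installed, the download steps above can be reproduced with Python's standard library. The following is a minimal sketch that is not part of the repository; the helper names `fetch_and_extract` and `download_all` are hypothetical, while the URLs and paths are copied from the shell commands.

```python
import os
import urllib.request
import zipfile

# (url, archive path, extraction directory), copied from the shell commands above
DOWNLOADS = [
    (
        "https://nextcloud.eurecom.fr/s/8fJkTEQH9QeaaxQ/download/UpdatedClaimReview.zip",
        "data/UpdatedClaimReview.zip",
        "data/",
    ),
    (
        "https://nextcloud.eurecom.fr/s/kqXfGg49iTEnJKR/download/BW_BertTopic.zip",
        "BW_BertTopic.zip",
        "model/",
    ),
]


def fetch_and_extract(url: str, archive_path: str, dest_dir: str) -> list[str]:
    """Download a zip archive, extract it into dest_dir, then delete the archive.

    Mirrors the wget / unzip / rm sequence; returns the names of the extracted members.
    """
    os.makedirs(os.path.dirname(archive_path) or ".", exist_ok=True)
    os.makedirs(dest_dir, exist_ok=True)
    # urlretrieve accepts http(s):// as well as file:// URLs
    urllib.request.urlretrieve(url, archive_path)
    try:
        with zipfile.ZipFile(archive_path) as zf:
            names = zf.namelist()
            zf.extractall(dest_dir)
    finally:
        # Like `rm -rf <archive>`: remove the zip even if extraction fails
        os.remove(archive_path)
    return names


def download_all() -> None:
    """Fetch every archive listed in DOWNLOADS (run from the repository root)."""
    for url, archive_path, dest_dir in DOWNLOADS:
        fetch_and_extract(url, archive_path, dest_dir)
```

Calling `download_all()` from the repository root should leave the extracted files in `data/` and `model/`, as the shell commands do. Note that `urlretrieve` shows no progress and overwrites any existing archive at the same path.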

The dataset `BW_CR.csv` contains the BirdWatch tweets matched with ClaimReview fact-checks. The BirdWatch dataset was obtained on 18th September 2021 05:02 PM (GMT+2), and the tweets were hydrated on 25th September 2021 09:26 AM (GMT+2). The columns of the dataset are:

- tweetId: ID of the Tweet

- Tweet: Tweet text

- noteId: ID of BirdWatch note

- summary: Written note by the BirdWatch user

- classification: Label given by the BirdWatch user

- CR Fact: Matched ClaimReview fact-check

- credibility: Label given by the expert

- full_text: Full tweet text, including characters (such as non-Unicode characters) that were removed from the Tweet column
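
As a minimal sketch (not the repository's own loading code, which may use pandas), `BW_CR.csv` can be read with Python's standard `csv` module, checking that the documented columns are present and grouping rows by tweet, since a single tweet can receive several BirdWatch notes. The helper name `load_bw_cr` and the file location are assumptions.

```python
import csv
from collections import defaultdict

# Column names as documented for BW_CR.csv
EXPECTED_COLUMNS = {
    "tweetId", "Tweet", "noteId", "summary",
    "classification", "CR Fact", "credibility", "full_text",
}


def load_bw_cr(path: str) -> dict[str, list[dict[str, str]]]:
    """Read BW_CR.csv (hypothetical helper) and group its rows by tweetId.

    Assumes a comma-separated file with a header row.
    """
    with open(path, newline="", encoding="utf-8") as f:
        reader = csv.DictReader(f)
        missing = EXPECTED_COLUMNS - set(reader.fieldnames or [])
        if missing:
            raise ValueError(f"missing columns: {sorted(missing)}")
        by_tweet: dict[str, list[dict[str, str]]] = defaultdict(list)
        for row in reader:
            # IDs stay strings (no numeric parsing), so large tweet IDs are never rounded
            by_tweet[row["tweetId"]].append(row)
    return dict(by_tweet)
```

For example, `groups = load_bw_cr("data/BW_CR.csv")` (path assumed) maps each `tweetId` to its rows, and `len(groups)` gives the number of distinct tweets.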

The analyses in this repository and the paper sections they correspond to are:

- Analyzing BirdWatch Data (Section 3.1)

- Analyzing BirdWatch Users (Section 3.1)

- Topic Modeling (Section 3.4)

- Matching BirdWatch & ClaimReview (Section 3.3)

- Claim Topic Analysis (Section 4.1.1)

- ClaimBuster API (Section 4.1.2)

- Tweet Popularity (Section 4.1.3)

- Time Analysis (Section 4.1.4)

- NewsGuard (Section 4.2)

- Internal Agreement (Section 4.3.1)

- External Agreement (Section 4.3.2)

- Note Helpfulness Score (Section 4.3.3)

- Computational Methods (Section 4.3.4)
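
The internal and external agreement analyses compare labels given to the same tweets. As a rough illustration only, and not the measures used in the paper, simple percentage agreement can be computed over rows shaped like `BW_CR.csv`: internally, the share of multi-note tweets whose notes all share one `classification`; externally, the share of rows whose `classification` equals the expert `credibility` label after mapping it through `expert_to_bw`, a hypothetical user-supplied dictionary.

```python
from collections import defaultdict
from typing import Iterable, Mapping, Optional


def internal_agreement(rows: Iterable[Mapping[str, str]]) -> Optional[float]:
    """Share of tweets with >= 2 notes whose notes all carry the same classification."""
    labels_by_tweet = defaultdict(list)
    for row in rows:
        labels_by_tweet[row["tweetId"]].append(row["classification"])
    multi = [labels for labels in labels_by_tweet.values() if len(labels) >= 2]
    if not multi:
        return None  # undefined when no tweet has several notes
    unanimous = sum(1 for labels in multi if len(set(labels)) == 1)
    return unanimous / len(multi)


def external_agreement(
    rows: Iterable[Mapping[str, str]], expert_to_bw: Mapping[str, str]
) -> Optional[float]:
    """Share of rows whose classification equals the mapped expert credibility label.

    expert_to_bw is a hypothetical mapping from credibility values to classification
    values; rows whose credibility is not in the mapping are skipped.
    """
    matched = total = 0
    for row in rows:
        expected = expert_to_bw.get(row["credibility"])
        if expected is None:
            continue
        total += 1
        if row["classification"] == expected:
            matched += 1
    return matched / total if total else None
```

Percentage agreement ignores agreement expected by chance; chance-corrected statistics such as Cohen's or Fleiss' kappa are common alternatives.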

For any inquiries, feel free to contact us or raise an issue on GitHub.

You can cite our work:

```bibtex
@inproceedings{saeed-etal-2022-birdwatch,
    title = "Crowdsourced Fact-Checking at Twitter: How Does the Crowd Compare With Experts?",
    author = "Saeed, Mohammed and
      Traub, Nicolas and
      Nicola, Maelle and
      Demartini, Gianluca and
      Papotti, Paolo",
    booktitle = "31st ACM International Conference on Information and Knowledge Management",
    month = oct,
    year = "2022",
    address = "Online and Atlanta, Georgia, USA",
    url = "https://arxiv.org/abs/2208.09214",
}
```