Each team member implemented a set of features selected from state-of-the-art academic papers. The implementations can be found in the Michał, kasper, karol and wiktor directories. The table below lists the implemented features together with the DOI of the paper each one comes from.

| File path | Feature name | DOI |
|---|---|---|
| ./user-features/features-sources/Michał/Characteristics.py | Kurtosis | 10.3390/s21030692 |
| | Zero crossing | 10.3390/s21030692 |
| | Correlation coefficient | 10.3390/s21030692 |
| | Entropy | 10.3390/s21030692 |
| | Cross correlation between axes | 10.1109/SMC.2015.263 |
| | Magnitude | 10.1186/s13673-017-0097-2 |
| | Median absolute deviation | 10.1007/978-0-387-32833-1_261 |
| ./user-features/features-sources/karol/feats.py | Mean value | 10.3390/s21030692 |
| | Standard deviation | 10.3390/s21030692 |
| | Root mean square value | 10.3390/s21030692 |
| | Interquartile range | 10.3390/s21030692 |
| | Energy | 10.3390/s21030692 |
| | Mean Power frequency | 10.3390/s21030692 |
| | One quarter of frequency | 10.3390/s21030692 |
| | Three quarters of frequency | 10.3390/s21030692 |
| ./user-features/features-sources/kasper/feats.py | Skewness | 10.3390/s21030692 |
| | Peak to peak | 10.3390/s21030692 |
| | Mean absolute value | 10.3390/s21030692 |
| | Waveform detector | 10.3390/s21030692 |
| | Jerk | 10.3390/s21030692 |
| | Haar filters | 10.1109/DSP.2009.4786008 |
| | FFT from jerk | 10.1186/s13673-017-0097-2 |
| ./user-features/features-sources/wiktor/feats.py | Slope sign change | 10.3390/s21030692 |
| | Wilson Amplitude | 10.3390/s21030692 |
| | 4th order largest value of DFT | 10.3390/s21030692 |
| | Energy wavelet coefficient | 10.3390/s21030692 |
| | FFT from Magnitude | 10.1186/s13673-017-0097-2 |
| | Auto-regression coefficients with Burg order equal to four correlation coefficients between two signals | 10.1186/s13673-017-0097-2 |
| | Signal lag | - |
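
To give a feel for what the feature files contain, here is a minimal NumPy sketch of a few of the simpler time-domain features from the table. The function names are hypothetical and the definitions are the common textbook ones; the cited papers (and our own implementations) may use slightly different variants, for example a threshold for zero crossings.

```python
import numpy as np


def zero_crossings(x):
    """Count sign changes between consecutive samples."""
    signs = np.signbit(np.asarray(x, dtype=float))
    return int(np.count_nonzero(signs[1:] != signs[:-1]))


def root_mean_square(x):
    """Square root of the mean of the squared samples."""
    x = np.asarray(x, dtype=float)
    return float(np.sqrt(np.mean(x ** 2)))


def peak_to_peak(x):
    """Difference between the largest and the smallest sample."""
    x = np.asarray(x, dtype=float)
    return float(np.max(x) - np.min(x))


def median_absolute_deviation(x):
    """Median of the absolute deviations from the median."""
    x = np.asarray(x, dtype=float)
    return float(np.median(np.abs(x - np.median(x))))


def magnitude(x, y, z):
    """Euclidean norm of a three-axis signal, sample by sample."""
    x, y, z = (np.asarray(a, dtype=float) for a in (x, y, z))
    return np.sqrt(x ** 2 + y ** 2 + z ** 2)


# Example on a tiny, made-up window of samples.
window = np.array([1.0, -1.0, 1.0, -1.0])
features = {
    "zero_crossings": zero_crossings(window),
    "rms": root_mean_square(window),
    "peak_to_peak": peak_to_peak(window),
    "mad": median_absolute_deviation(window),
}
```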

iOS and Android differ in the way they collect MARG sensor data. To process the data correctly, we needed to compare the signals gathered on both platforms. Our research and data are stored in the android-apple-comparison directory.

Additionally, to measure our mobile phones' orientation in space, we implemented a quaternion-based filter for the MARG data gathered during the performed activities. Our code follows the steps from "Keeping a Good Attitude: A Quaternion-Based Orientation Filter for IMUs and MARGs" (DOI: 10.3390/s150819302).
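
As a rough illustration of the kind of building block such a filter relies on, the sketch below integrates gyroscope readings into an orientation quaternion. It is not the filter from the paper and not our implementation: it shows only a generic gyroscope integration step, and the scalar-first quaternion layout, the body-frame angular rate and the function names are all assumptions made for this example.

```python
import numpy as np


def quat_multiply(p, q):
    """Hamilton product of two quaternions in (w, x, y, z) order."""
    pw, px, py, pz = p
    qw, qx, qy, qz = q
    return np.array([
        pw * qw - px * qx - py * qy - pz * qz,
        pw * qx + px * qw + py * qz - pz * qy,
        pw * qy - px * qz + py * qw + pz * qx,
        pw * qz + px * qy - py * qx + pz * qw,
    ])


def integrate_gyro(q, omega, dt):
    """Advance orientation q by one step of angular rate omega (rad/s)."""
    omega_quat = np.array([0.0, omega[0], omega[1], omega[2]])
    q_dot = 0.5 * quat_multiply(q, omega_quat)  # quaternion kinematics
    q_new = q + q_dot * dt                      # first-order (Euler) step
    return q_new / np.linalg.norm(q_new)        # keep it a unit quaternion


# Rotate about the z axis at pi/2 rad/s for one second in 1 ms steps.
q = np.array([1.0, 0.0, 0.0, 0.0])
for _ in range(1000):
    q = integrate_gyro(q, omega=(0.0, 0.0, np.pi / 2), dt=0.001)
```

A complete filter would additionally correct this gyroscope-only estimate with accelerometer and magnetometer readings, which is the part the paper describes in detail.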

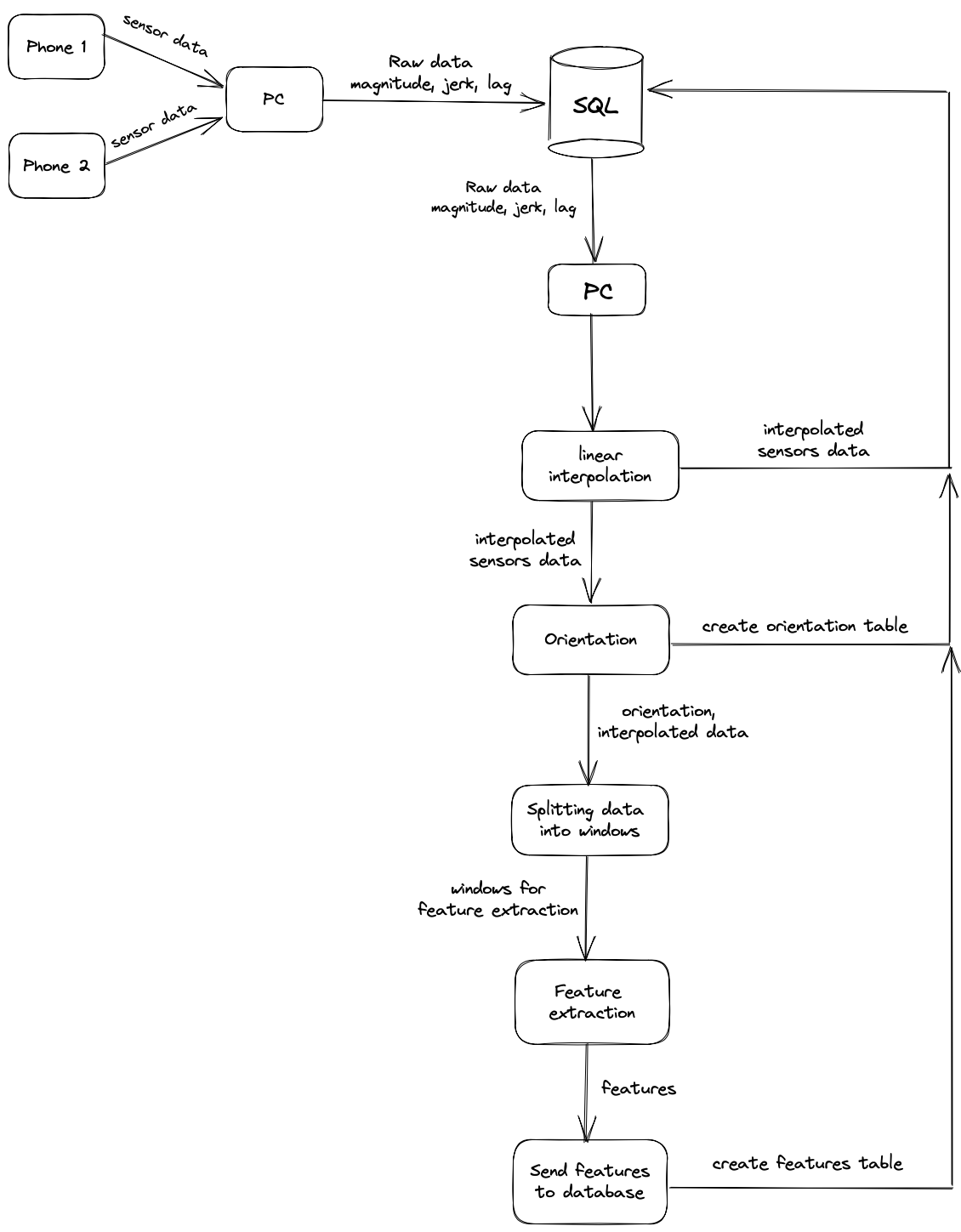

createTable.py is a Python script that pushes raw data from the MARG sensors to a SQL database. It uses pyodbc for the database connection and data manipulation. First, the script creates new tables with all raw data columns plus additional magnitude, lag and, for the accelerometer, jerk columns. Once the tables have been created, it uses the executemany method to quickly insert all the data from the .csv files. The steps of the process are listed below:

- Create a separate directory for each performed test. The names of the files stored in it will be used to create the `.csv` files' tables.
- Read the env variables necessary to open the DB connection.
- Loop through each directory in `streaming-work-dir` and create lists with its contents.
- Open a connection to the database: for each file create a new table. If the name of the read file has the `ccel` string in it, add additional columns to store jerk data.
- Compute magnitude, lag and, if necessary, jerk data. Start inserting data into the table.
- Repeat until all the data has been pushed to the database.
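
The table creation and bulk insert steps above can be sketched roughly as follows. To keep the example runnable without a database server, it uses an in-memory sqlite3 connection as a stand-in for pyodbc; both follow the Python DB-API and accept `?` placeholders in executemany. The column names, the single jerk column, the file names and the sample values are hypothetical.

```python
import sqlite3  # stand-in for pyodbc in this sketch

# Hypothetical column layout: raw columns plus magnitude and lag.
BASE_COLUMNS = ["time", "x", "y", "z", "magnitude", "lag"]


def table_columns(file_name):
    """Accelerometer files (name contains "ccel") get an extra jerk column."""
    columns = list(BASE_COLUMNS)
    if "ccel" in file_name:
        columns.append("jerk")
    return columns


def create_and_fill(conn, table_name, columns, rows):
    """Create one table and insert all rows with a single executemany call."""
    cur = conn.cursor()
    col_defs = ", ".join(f'"{c}" REAL' for c in columns)
    cur.execute(f'CREATE TABLE "{table_name}" ({col_defs})')
    placeholders = ", ".join("?" for _ in columns)
    cur.executemany(
        f'INSERT INTO "{table_name}" VALUES ({placeholders})', rows
    )
    conn.commit()


conn = sqlite3.connect(":memory:")
cols = table_columns("walk_Accelerometer.csv")
rows = [
    (0.00, 0.0, 0.0, 1.0, 1.0, 0.0, 0.0),  # made-up sample values
    (0.01, 0.0, 0.0, 1.0, 1.0, 0.0, 0.0),
]
create_and_fill(conn, "walk_Accelerometer", cols, rows)
```

Passing the whole list of rows to executemany avoids issuing one INSERT call per sample from Python, which is the reason the script relies on it.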

During our tests, the data gathered from the accelerometer, gyroscope and magnetometer turned out not to be perfectly synchronized. To solve this problem we wrote a short function that uses PchipInterpolator from the scipy.interpolate module. The function takes three arguments (accelerometer, gyroscope and magnetometer dataframes with raw sensor data) and returns a dataframe with the data interpolated at 0.01 s intervals, with magnitude, lag and, for the accelerometer, jerk columns.
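
A minimal sketch of such a resampling function is shown below. It assumes each dataframe has a `time` column in seconds with strictly increasing values and `x`, `y`, `z` columns; these names, the output column names and the per-axis jerk are assumptions for illustration. The lag column is left out because its definition is not given here.

```python
import numpy as np
import pandas as pd
from scipy.interpolate import PchipInterpolator

AXES = ("x", "y", "z")  # hypothetical column names


def synchronize(accel, gyro, mag, step=0.01):
    """Resample three sensor dataframes onto one shared time grid."""
    frames = {"acc": accel, "gyr": gyro, "mag": mag}

    # Use only the time span covered by all three sensors.
    start = max(df["time"].iloc[0] for df in frames.values())
    stop = min(df["time"].iloc[-1] for df in frames.values())
    n_samples = int(np.floor((stop - start) / step + 1e-9)) + 1
    grid = start + step * np.arange(n_samples)

    out = pd.DataFrame({"time": grid})
    for name, df in frames.items():
        t = df["time"].to_numpy()
        for axis in AXES:
            interpolator = PchipInterpolator(t, df[axis].to_numpy())
            out[f"{name}_{axis}"] = interpolator(grid)
        squares = sum(out[f"{name}_{axis}"] ** 2 for axis in AXES)
        out[f"{name}_magnitude"] = np.sqrt(squares)

    # Jerk: time derivative of acceleration, accelerometer only.
    for axis in AXES:
        out[f"acc_jerk_{axis}"] = np.gradient(
            out[f"acc_{axis}"].to_numpy(), step
        )
    return out


def make_frame(period):
    """Build a made-up sensor recording sampled every `period` seconds."""
    t = np.arange(0.0, 1.0, period)
    return pd.DataFrame({"time": t, "x": 2.0 * t, "y": 0.0 * t, "z": 1.0 + 0.0 * t})


synced = synchronize(make_frame(0.013), make_frame(0.011), make_frame(0.017))
```

PCHIP is a shape-preserving cubic interpolant, so it does not overshoot between samples the way an unconstrained cubic spline can, which is convenient for noisy sensor readings.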

Script used to process data gathered by Android users so that it matches iPhone MARG readings. Values from certain axes are multiplied by -1, and all values are divided by a close approximation of Earth's gravitational acceleration.
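
The conversion can be sketched as below. Which axes are flipped and the exact gravity constant are not stated here, so the flipped axes are a required argument and 9.81 m/s^2 is an assumed value; the function and column names are hypothetical.

```python
import pandas as pd

GRAVITY = 9.81  # assumed approximation of Earth's gravity in m/s^2


def android_to_ios(df, flip_axes, axes=("x", "y", "z"), gravity=GRAVITY):
    """Return a copy with the chosen axes negated and all axes divided by gravity."""
    out = df.copy()
    for axis in axes:
        sign = -1.0 if axis in flip_axes else 1.0
        out[axis] = sign * out[axis] / gravity
    return out


# Made-up Android sample; the flipped axes are chosen only for illustration.
android = pd.DataFrame({"x": [9.81], "y": [0.0], "z": [-9.81]})
converted = android_to_ios(android, flip_axes=("x", "z"))
```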

Script used to preprocess data and compute jerk, magnitude and lag.

Collection of all features from the features-sources directory. Uses global variables so that the size of the feature dataframe can change dynamically.

Returns names of all tables in database to be processed.

Feature extraction pipeline. Composed of all scripts listed above.