This is the official PyTorch implementation of QLLM: Accurate and Efficient Low-Bitwidth Quantization for Large Language Models.

By Jing Liu, Ruihao Gong, Xiuying Wei, Zhiwei Dong, Jianfei Cai, and Bohan Zhuang.

We propose QLLM, an accurate and efficient low-bitwidth post-training quantization method designed for LLMs.

- [10-03-2024] Release the code!🌟

- [17-01-2024] QLLM is accepted by ICLR 2024! 👏

conda create -n qllm python=3.10 -y

conda activate qllm

git clone https://github.com/ModelTC/QLLM

cd QLLM

pip install --upgrade pip

pip install -e .

We provide the training scripts in the scripts folder. For example, to perform W4A4 quantization for LLaMA-7B, run:

sh scripts/llama-7b/w4a4.sh

Remember to change the model path `model` and the output path `output_dir`.
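In labels such as W4A4 or W4A8, the number after W is the weight bit-width and the number after A is the activation bit-width. As a rough illustration of what a given bit-width implies, here is a minimal sketch of generic symmetric uniform quantization in plain Python. It is not the QLLM algorithm and does not use this repository's code; the function name `fake_quantize` and the sample values are hypothetical.

```python
# Illustrative only: generic symmetric uniform "fake" quantization.
# This is NOT the QLLM method; all names here are hypothetical.

def fake_quantize(values, n_bits):
    """Round values to a signed n_bits integer grid, then map back to floats."""
    qmax = 2 ** (n_bits - 1) - 1      # e.g. 7 for 4 bits, 127 for 8 bits
    qmin = -(2 ** (n_bits - 1))       # e.g. -8 for 4 bits, -128 for 8 bits
    max_abs = max(abs(v) for v in values)
    if max_abs == 0:
        return list(values)           # all zeros: nothing to quantize
    scale = max_abs / qmax            # one scale shared by the whole list
    out = []
    for v in values:
        q = round(v / scale)          # nearest integer level
        q = max(qmin, min(qmax, q))   # clamp to the representable range
        out.append(q * scale)         # dequantize back to a float
    return out


weights = [0.7, -0.35, 0.1, 0.0]
w4 = fake_quantize(weights, n_bits=4)  # coarse grid, step = 0.7 / 7
w8 = fake_quantize(weights, n_bits=8)  # finer grid, step = 0.7 / 127

err4 = max(abs(a - b) for a, b in zip(weights, w4))
err8 = max(abs(a - b) for a, b in zip(weights, w8))
print(f"max error at 4 bits: {err4:.4f}, at 8 bits: {err8:.4f}")
```

With this simple scheme the rounding error of each value is bounded by half a quantization step, so it shrinks as the bit-width grows. Low-bitwidth settings are harder to make accurate for that reason.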

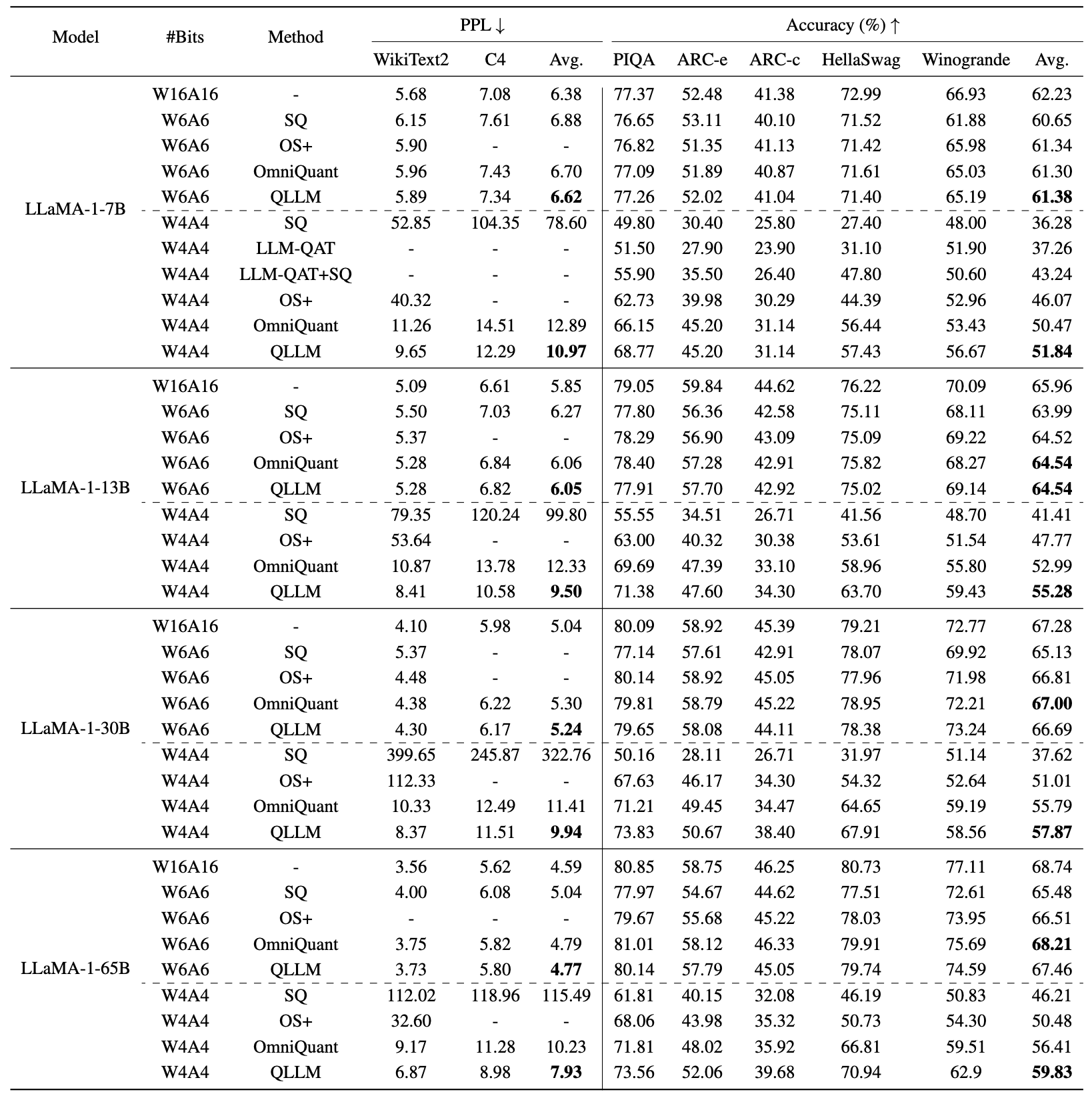

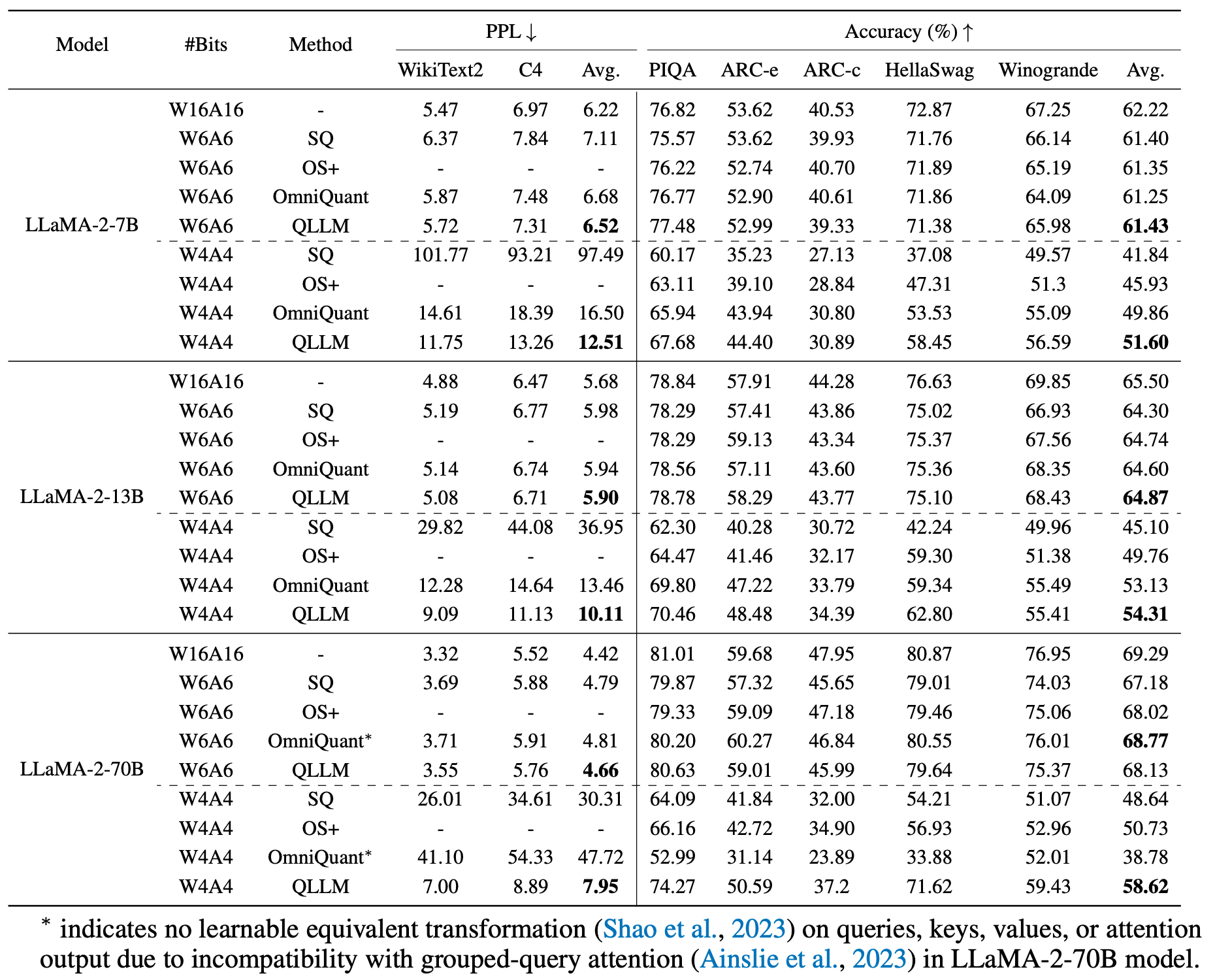

- QLLM achieves SoTA performance in weight-activation quantization.

If you find QLLM useful in your research, please consider citing the following paper:

@inproceedings{liu2024qllm,

title = {{QLLM}: Accurate and Efficient Low-Bitwidth Quantization for Large Language Models},

author = {Liu, Jing and Gong, Ruihao and Wei, Xiuying and Dong, Zhiwei and Cai, Jianfei and Zhuang, Bohan},

booktitle = {International Conference on Learning Representations (ICLR)},

year = {2024},

}

This repository is released under the Apache 2.0 license as found in the LICENSE file.

This repository is built upon OmniQuant. We thank the authors for their open-sourced code.