This open-source project demonstrates Retrieval Augmented Generation (RAG) using Large Language Models (LLMs).

siosio-rag uses the following technologies:

- Orchestration: LangChain.

- Embedding Vectorstore: Weaviate.

- Text Embeddings: FlagEmbedding.

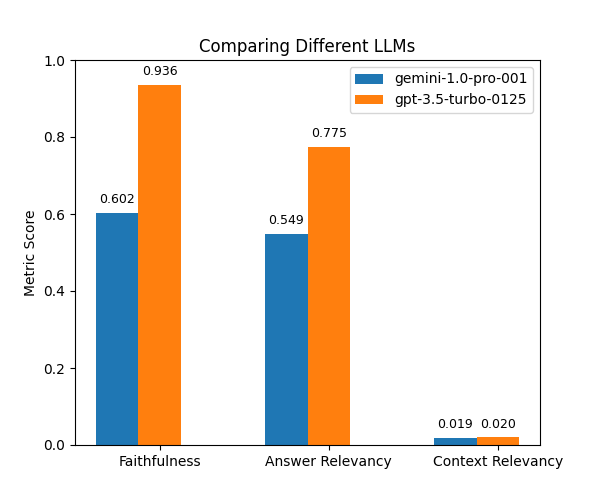

- LLMs: OpenAI ChatGPT and Google Gemini.

- Evaluation: Ragas.

Reference: chat-langchain
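To make the roles of the components above concrete, here is a minimal, dependency-free sketch of the retrieve-then-generate flow. It is not this project's implementation: the bag-of-words `embed` function stands in for a real embedding model such as FlagEmbedding, the in-memory list stands in for a Weaviate index, and the final prompt would normally be sent to an LLM rather than printed. All function names and the sample documents are hypothetical and chosen only for illustration.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy bag-of-words 'embedding' (a stand-in for a real embedding model)."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, docs: list[str], k: int = 1) -> list[str]:
    """Return the k documents most similar to the query (stand-in for a vector store)."""
    q = embed(query)
    ranked = sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, contexts: list[str]) -> str:
    """Assemble the prompt that would be passed to the LLM."""
    context_block = "\n".join(f"- {c}" for c in contexts)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context_block}\n\n"
        f"Question: {query}"
    )


# Hypothetical sample corpus, for illustration only.
DOCS = [
    "Weaviate stores embedding vectors and serves similarity search.",
    "Ragas scores the answers produced by a RAG pipeline.",
    "LangChain orchestrates retrieval and generation steps.",
]

QUESTION = "Which tool stores embedding vectors?"
top = retrieve(QUESTION, DOCS, k=1)
prompt = build_prompt(QUESTION, top)
print(prompt)
```

In a real pipeline, the retrieval step would query the vector store with a dense embedding of the question, and an evaluation step would then score the generated answers.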

- Python (version 3.10 or higher)

- PyTorch (version 2.1.2 or higher)

Once you have these dependencies installed, clone this repository and set up the environment:

    git clone https://github.com/your-username/siosio-rag.git
    pip install -r requirements.txt

Run prepare_data.py to preprocess and save the data:

    python prepare_data.py --out_path out_directory_path

Run build_index.py to convert data into embeddings and build an index:

    python build_index.py --input_path data_directory_path --out_path out_directory_path --index_name name_for_index

Run rag.py to execute RAG:

    python rag.py \
        --input_path csv_path \
        --output_path out_directory_path \
        --index_path index_path \
        --index_name index_name \
        --model_name llm_model_name \
        --api_key api_key_for_llm

Run evaluate.py to evaluate the RAG results:

    python evaluate.py \
        --input_path result_csv_path \
        --output_path out_directory_path \
        --api_key api_key_for_llm

Note:

- Data used in the experiment can be found here.

- The results were obtained with Ragas v1.7.

- For information about the metrics, please refer to this doc.
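The four commands above can also be chained from a small driver script. The sketch below is a hypothetical helper, not part of this repository: it only reuses the script names and flags shown above, and it assumes that the output directory of prepare_data.py is the input of build_index.py and that the output directory of build_index.py is the `--index_path` of rag.py. The path of the result CSV written by rag.py is passed in explicitly because its file name is not documented here. By default the script only prints the commands (a dry run).

```python
import shlex
import subprocess


def build_commands(data_dir, index_dir, index_name, questions_csv,
                   results_dir, result_csv, eval_dir, model_name, api_key):
    """Return the four pipeline commands as argument lists, in execution order."""
    return [
        ["python", "prepare_data.py", "--out_path", data_dir],
        ["python", "build_index.py",
         "--input_path", data_dir,      # assumption: reuse prepare_data.py output
         "--out_path", index_dir,
         "--index_name", index_name],
        ["python", "rag.py",
         "--input_path", questions_csv,
         "--output_path", results_dir,
         "--index_path", index_dir,     # assumption: reuse build_index.py output
         "--index_name", index_name,
         "--model_name", model_name,
         "--api_key", api_key],
        ["python", "evaluate.py",
         "--input_path", result_csv,
         "--output_path", eval_dir,
         "--api_key", api_key],
    ]


def run_pipeline(commands, dry_run=True):
    """Print each command; execute it only when dry_run is False."""
    for cmd in commands:
        print(shlex.join(cmd))
        if not dry_run:
            # check=True stops the pipeline at the first failing step.
            subprocess.run(cmd, check=True)


# Placeholder values; replace them with real paths, model name, and key.
commands = build_commands(
    data_dir="data_directory_path",
    index_dir="index_path",
    index_name="index_name",
    questions_csv="csv_path",
    results_dir="out_directory_path",
    result_csv="result_csv_path",
    eval_dir="eval_directory_path",
    model_name="llm_model_name",
    api_key="api_key_for_llm",
)
run_pipeline(commands)  # dry run: prints the commands without executing them
```

Calling `run_pipeline(commands, dry_run=False)` from the repository root would execute the steps in order.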

This project is licensed under the MIT license. Please refer to the LICENSE file for details.