- `conda create -n varuna python=3.8; conda activate varuna`

- `conda install -c conda-forge geopandas`

- `pip install rasterio`

- `conda install pytorch torchvision torchaudio cudatoolkit=11.3 -c pytorch`

- `pip install tensorboard`

- `pip install -U albumentations`

- `pip install tqdm`
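To confirm the environment is complete before running the scripts, a small check can try to locate each package installed above. This is a minimal sketch using only the standard library; the helper name `missing_packages` is hypothetical, and the list uses import names (for example, conda's `pytorch` package is imported as `torch`).

```python
import importlib.util

# Import names of the packages installed above (conda "pytorch" imports as "torch").
REQUIRED = ["geopandas", "rasterio", "torch", "torchvision", "torchaudio",
            "tensorboard", "albumentations", "tqdm"]


def missing_packages(names=REQUIRED):
    """Return the subset of `names` that cannot be found in this environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_packages()
    if missing:
        print("Missing packages:", ", ".join(missing))
    else:
        print("All required packages found.")
```

Run it inside the activated `varuna` environment; any name it prints still needs to be installed.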

- put the raw data into the ./data_raw/ folder

- Run

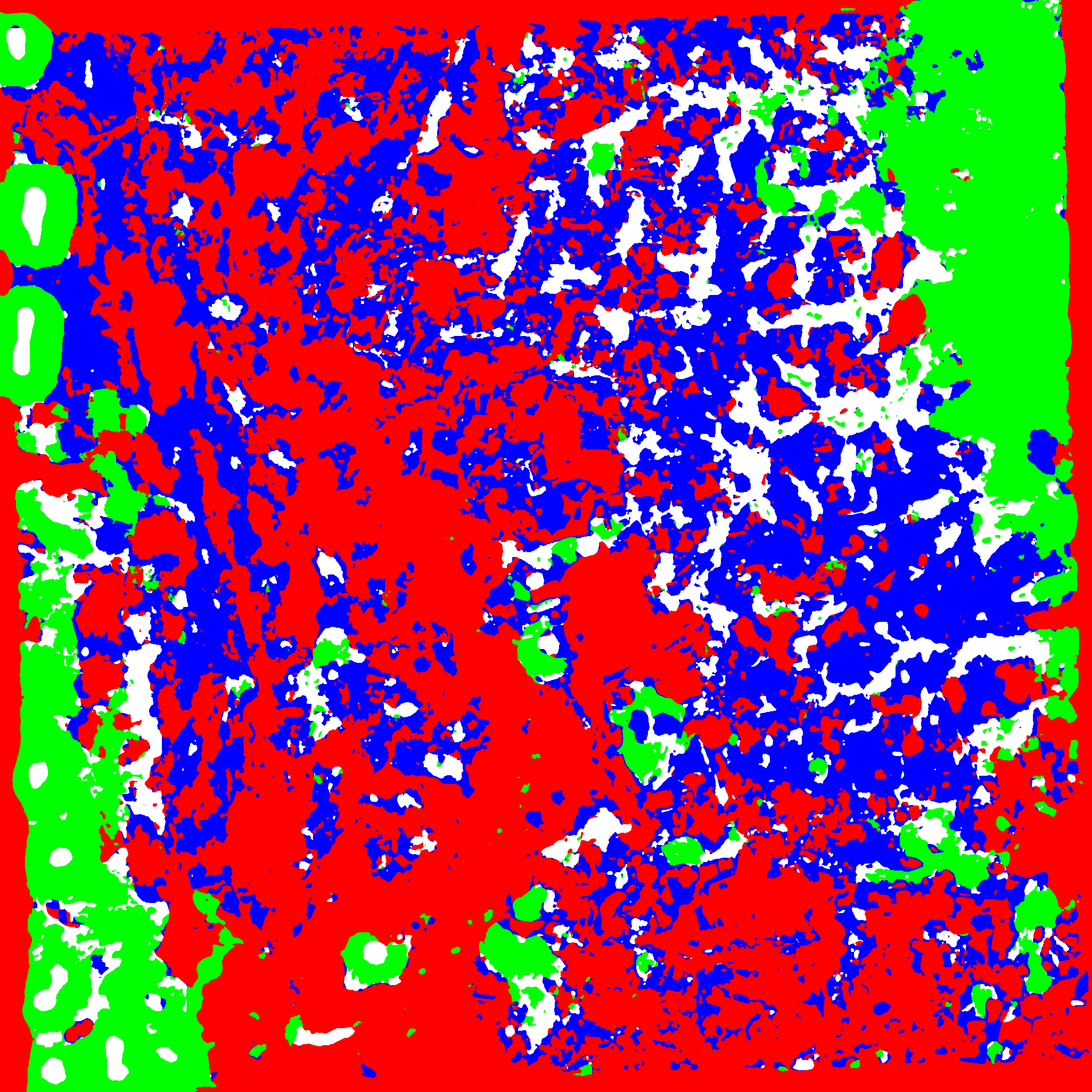

python generate_mask.pyto generate raster masks from polygons - Run

python generate_data.pyto process the raw data into numpy arrays. The process involved date selection, band selections and random crop the big image into multiple smaller data samples. - Run

python train.py --name "my_model" --saveto train and save your model. - Run

tensorbaord --logdir=runsto visualize the training.
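The random-crop step of `generate_data.py` is not shown here. The sketch below illustrates the general idea under stated assumptions: each image is a `(bands, height, width)` NumPy array (after date and band selection), its mask is `(height, width)`, and the same window is cut from both so labels stay aligned. The function name `random_crops` and its parameters are hypothetical, not the repository's API.

```python
import numpy as np


def random_crops(image, mask, crop_size, n_crops, rng=None):
    """Cut `n_crops` random square patches from a (C, H, W) image and its (H, W) mask.

    The same window is applied to image and mask so the labels stay aligned.
    """
    rng = np.random.default_rng() if rng is None else rng
    _, height, width = image.shape
    if crop_size > height or crop_size > width:
        raise ValueError("crop_size is larger than the image")
    images, masks = [], []
    for _ in range(n_crops):
        # Top-left corner drawn uniformly so the window always fits inside the image.
        top = int(rng.integers(0, height - crop_size + 1))
        left = int(rng.integers(0, width - crop_size + 1))
        images.append(image[:, top:top + crop_size, left:left + crop_size])
        masks.append(mask[top:top + crop_size, left:left + crop_size])
    return np.stack(images), np.stack(masks)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    image = rng.random((4, 512, 512), dtype=np.float32)  # e.g. 4 selected bands
    mask = (image[0] > 0.5).astype(np.uint8)              # stand-in for a rasterized mask
    x, y = random_crops(image, mask, crop_size=128, n_crops=16, rng=rng)
    print(x.shape, y.shape)  # (16, 4, 128, 128) (16, 128, 128)
```

Passing an explicit `rng` makes the crops reproducible across runs, which helps when regenerating the same training set.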

- Prepare your data in the appropriate folders.

- Run `python test_v2.py`.

- Our pretrained model can be downloaded here: https://drive.google.com/drive/folders/1drKdEeyS_zlUwh0ncXA4hyCx7ZHXhVx7?usp=sharing

- Run `python reproducing_first_submission.py` to reproduce the first submission.

- Run `python reproducing_second_submission.py` to reproduce the second submission.

- A standard U-Net segmentation model

- Standardise inputs with per-sample statistics to avoid losing the relative magnitudes between bands

- Extensive augmentations

- Substantial hyperparameter tuning

- Reduce the training image size to stop the model from remembering exact locations
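One reading of the standardisation bullet is that each sample is normalised with a single mean and standard deviation pooled over all of its bands, rather than per band, so a band that is brighter than another stays brighter after normalisation. The sketch below assumes that interpretation and a `(bands, height, width)` sample layout; the function name is hypothetical.

```python
import numpy as np


def standardise_per_sample(x, eps=1e-6):
    """Standardise one (C, H, W) sample with a single mean/std pooled over all bands.

    A shared mean and std (instead of per-band statistics) keeps the relative
    magnitudes between bands intact; `eps` guards against a zero std.
    """
    x = x.astype(np.float32)
    mean = x.mean()
    std = x.std()
    return (x - mean) / (std + eps)
```

By contrast, per-band standardisation would shift every band to zero mean, erasing the between-band differences the bullet aims to keep.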

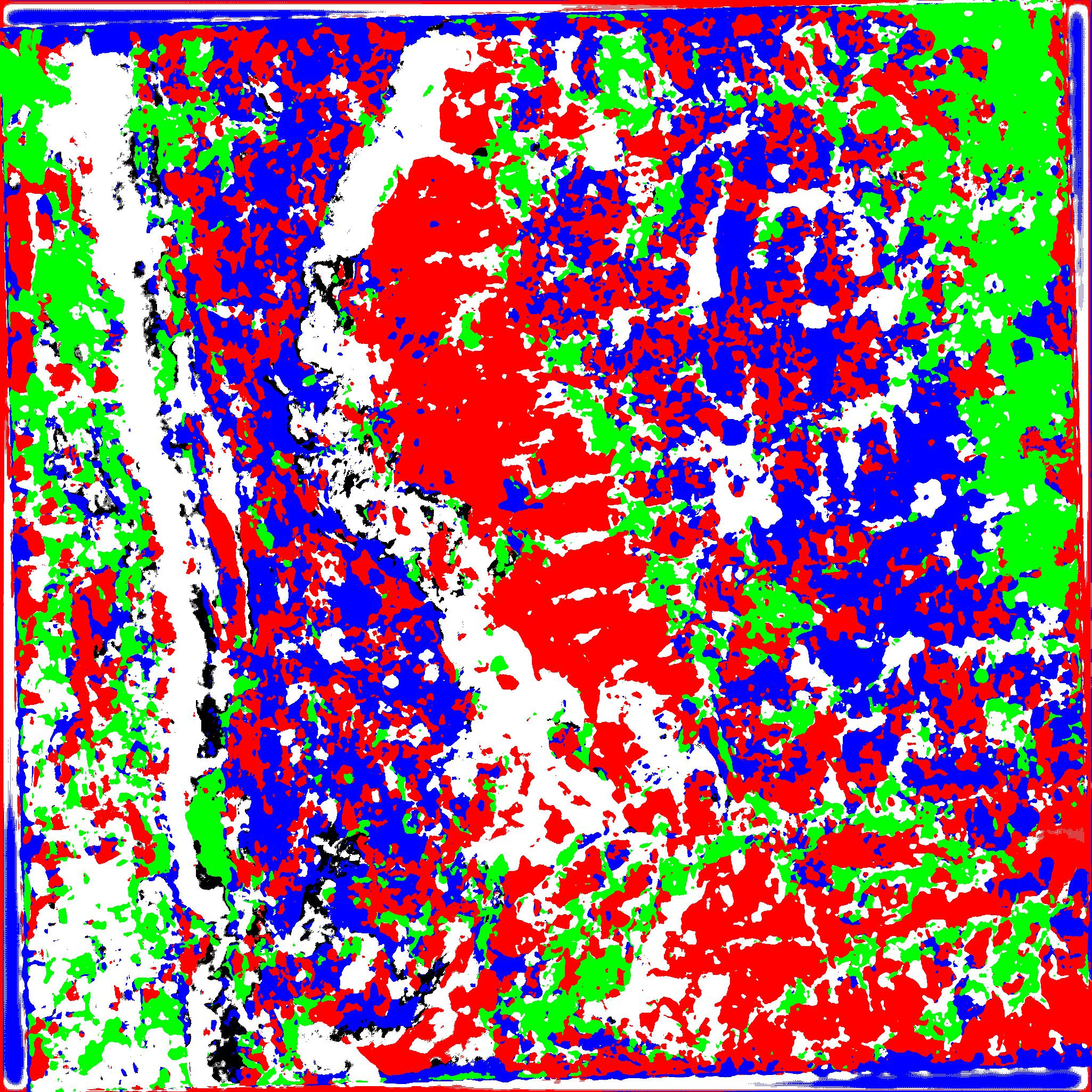

- Incorporate temporal information by computing the per-pixel maximum NDVI and EVI across time (using 2021 acquisitions only) and adding these as extra input channels.

- BatchNorm between convolutions

- Extensive augmentations
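The temporal features can be sketched with the standard index definitions, NDVI = (NIR − Red) / (NIR + Red) and EVI = 2.5 (NIR − Red) / (NIR + 6 Red − 7.5 Blue + 1), which assume surface reflectance scaled to [0, 1]. This is a minimal sketch, not the repository's code: the `(time, bands, height, width)` layout, the band positions `BLUE`, `RED`, `NIR`, and the helper names are all hypothetical and would need to match the arrays actually produced.

```python
import datetime as dt

import numpy as np

# Hypothetical band positions in the (T, C, H, W) series; adjust to the real band order.
BLUE, RED, NIR = 0, 1, 2


def max_ndvi_evi(series, dates, year=2021, eps=1e-6):
    """Return a (2, H, W) array with the per-pixel maximum NDVI and EVI.

    `series` is a (T, C, H, W) reflectance array and `dates` holds the T
    acquisition dates; only acquisitions from `year` are used.
    """
    keep = np.array([d.year == year for d in dates])
    if not keep.any():
        raise ValueError(f"no acquisitions from {year}")
    s = series[keep].astype(np.float32)
    blue, red, nir = s[:, BLUE], s[:, RED], s[:, NIR]
    ndvi = (nir - red) / (nir + red + eps)
    # Standard EVI coefficients: G=2.5, C1=6, C2=7.5, L=1.
    evi = 2.5 * (nir - red) / (nir + 6.0 * red - 7.5 * blue + 1.0 + eps)
    return np.stack([ndvi.max(axis=0), evi.max(axis=0)])


def add_index_channels(image, series, dates):
    """Append the max-NDVI and max-EVI channels to a (C, H, W) input image."""
    return np.concatenate([image, max_ndvi_evi(series, dates)], axis=0)


if __name__ == "__main__":
    dates = [dt.date(2020, 6, 1), dt.date(2021, 6, 1), dt.date(2021, 7, 1)]
    series = np.random.default_rng(0).random((3, 4, 64, 64), dtype=np.float32)
    image = series[-1]  # e.g. the latest acquisition as the base input
    print(add_index_channels(image, series, dates).shape)  # (6, 64, 64)
```

Taking the maximum over time summarises the peak vegetation signal of each pixel in a single channel, so the network receives temporal information without a recurrent or 3D architecture.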

We use the second model for the final submission. Given more time, training multiple models and ensembling their predictions might further improve results.
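One common form of ensembling for segmentation is soft voting: average the per-pixel probabilities predicted by several models, then threshold the mean. The sketch below assumes each model's output has already been converted to a probability map of the same shape; the function name and the 0.5 default threshold are illustrative assumptions, not part of this repository.

```python
import numpy as np


def ensemble_mask(prob_maps, threshold=0.5):
    """Soft-voting ensemble: average per-pixel probabilities, then threshold the mean."""
    mean_prob = np.mean(np.stack(prob_maps), axis=0)
    return mean_prob >= threshold
```

Averaging probabilities rather than hard masks lets a confident model outweigh uncertain ones at each pixel.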