Qihang Ma1* · Xin Tan1,2* · Yanyun Qu3 · Lizhuang Ma1 · Zhizhong Zhang1+ · Yuan Xie1,2

1East China Normal University · 2Chongqing Institute of ECNU · 3Xiamen University

*equal contribution, +corresponding authors

CVPR 2024

- 2024.04.01 Code released.

- 2024.02.27 🌟 COTR is accepted by CVPR 2024.

- 2023.12.04 arXiv preprint released.

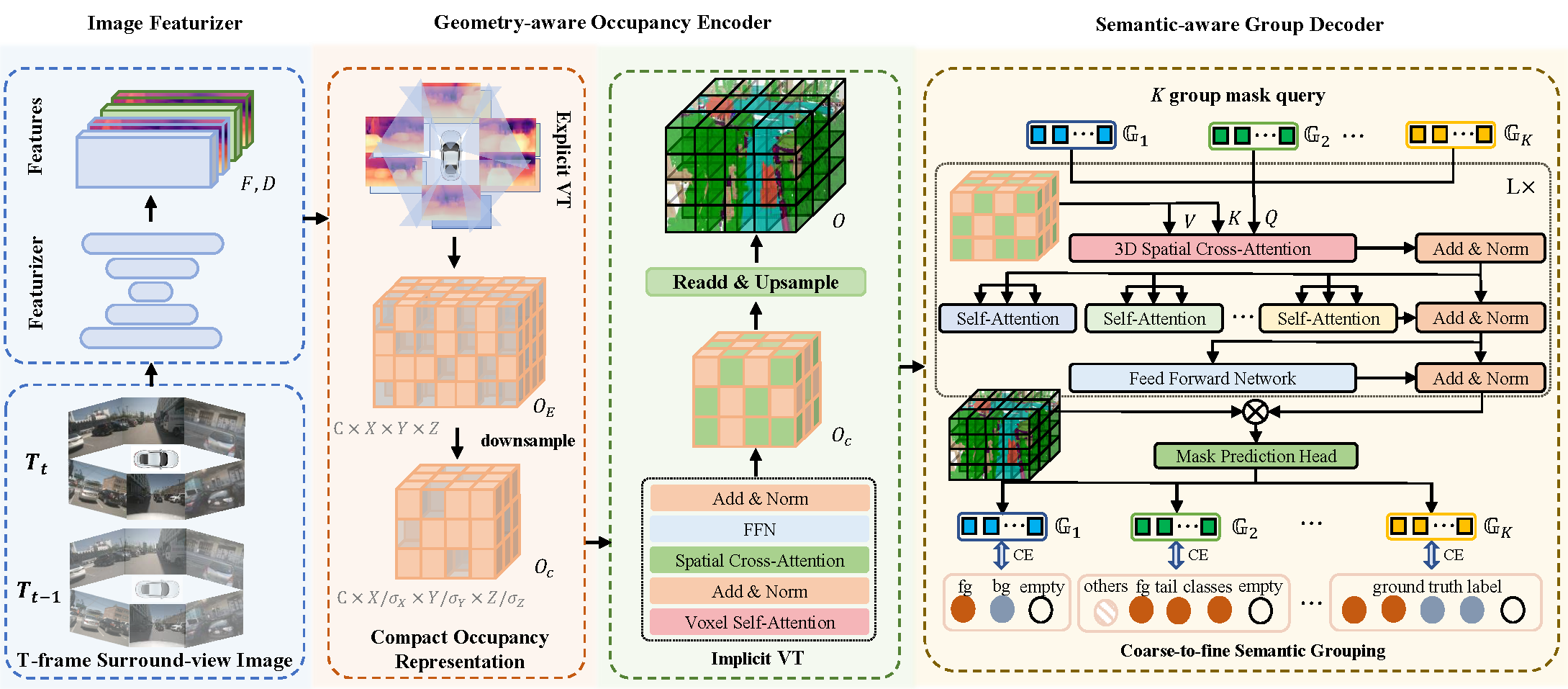

The autonomous driving community has shown significant interest in 3D occupancy prediction, driven by its exceptional geometric perception and general object recognition capabilities. To achieve this, current works try to construct a Tri-Perspective View (TPV) or Occupancy (OCC) representation extending from the Bird-Eye-View perception. However, compressed views like TPV representation lose 3D geometry information while raw and sparse OCC representation requires heavy but redundant computational costs. To address the above limitations, we propose Compact Occupancy TRansformer (COTR), with a geometry-aware occupancy encoder and a semantic-aware group decoder to reconstruct a compact 3D OCC representation. The occupancy encoder first generates a compact geometrical OCC feature through efficient explicit-implicit view transformation. Then, the occupancy decoder further enhances the semantic discriminability of the compact OCC representation by a coarse-to-fine semantic grouping strategy. Empirical experiments show that there are evident performance gains across multiple baselines, e.g., COTR outperforms baselines with a relative improvement of 8%-15%, demonstrating the superiority of our method.
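The trade-off described above (compressed TPV planes versus a dense OCC grid versus a compact OCC grid) can be made concrete with a little arithmetic. The sketch below only counts cells. The grid size `X, Y, Z` and the downsampling factor `RATIO` are illustrative assumptions, not values taken from the COTR paper or its configs.

```python
# Illustrative sizes only: X, Y, Z and RATIO are assumptions for this sketch,
# not values taken from the COTR paper or configs.
X, Y, Z = 200, 200, 16   # hypothetical voxel grid (length, width, height)
RATIO = 2                # hypothetical per-axis downsampling for a compact grid


def occ_cells(x, y, z):
    """Number of cells in a dense 3D occupancy grid."""
    return x * y * z


def tpv_cells(x, y, z):
    """Number of cells in three orthogonal planes (top, front, side)."""
    return x * y + y * z + x * z


full = occ_cells(X, Y, Z)                                 # dense OCC grid
tpv = tpv_cells(X, Y, Z)                                  # three 2D planes
compact = occ_cells(X // RATIO, Y // RATIO, Z // RATIO)   # downsampled 3D grid

print(full, tpv, compact)  # 640000 46400 80000
```

With these assumed sizes, the three planes hold far fewer cells than the dense grid but no longer store a full 3D volume, while the downsampled grid keeps all three axes at one eighth of the dense cell count.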

The overall architecture of COTR. T-frame surround-view images are first fed into the image featurizers to obtain image features and depth distributions. Taking the image features and depth estimates as input, the geometry-aware occupancy encoder constructs a compact occupancy representation through efficient explicit-implicit view transformation. The semantic-aware group decoder then combines a coarse-to-fine semantic grouping strategy with Transformer-based mask classification to strengthen the semantic discriminability of the compact occupancy representation.

step 1. Prepare the environment as described in Install.

step 2. Prepare the nuScenes dataset as described in nuscenes_det.md and create the pkl files for BEVDet by running:

    python tools/create_data_bevdet.py

step 3. For the occupancy prediction task, download (only) the 'gts' from CVPR2023-3D-Occupancy-Prediction and arrange the folder as:

    └── nuscenes
        ├── v1.0-trainval (existing)
        ├── sweeps (existing)
        ├── samples (existing)
        └── gts (new)

Train the model:

    # single gpu
    python tools/train_occ.py $config

    # multiple gpu
    ./tools/dist_train_occ.sh $config num_gpu

Test the model:

    # single gpu
    python tools/test_occ.py $config $checkpoint --eval mIoU

    # multiple gpu
    ./tools/dist_test_occ.sh $config $checkpoint num_gpu --eval mIoU

Train and test the model:

    # multiple gpu
    ./train_eval_occ.sh $config num_gpu

Visualize the predicted results:

    python tools/dist_test.sh $config $checkpoint --out $savepath
    python tools/analysis_tools/vis_frame.py $savepath $config --save-path $scenedir --scene-idx $sceneidx --vis-gt
    python tools/analysis_tools/generate_gifs.py --scene-dir $scenedir

This project would not be possible without multiple great open-source codebases. We list some notable examples below.

If this work is helpful for your research, please consider citing the following BibTeX entry.

    @article{ma2023cotr,
      title={COTR: Compact Occupancy TRansformer for Vision-based 3D Occupancy Prediction},
      author={Ma, Qihang and Tan, Xin and Qu, Yanyun and Ma, Lizhuang and Zhang, Zhizhong and Xie, Yuan},
      journal={arXiv preprint arXiv:2312.01919},
      year={2023}
    }