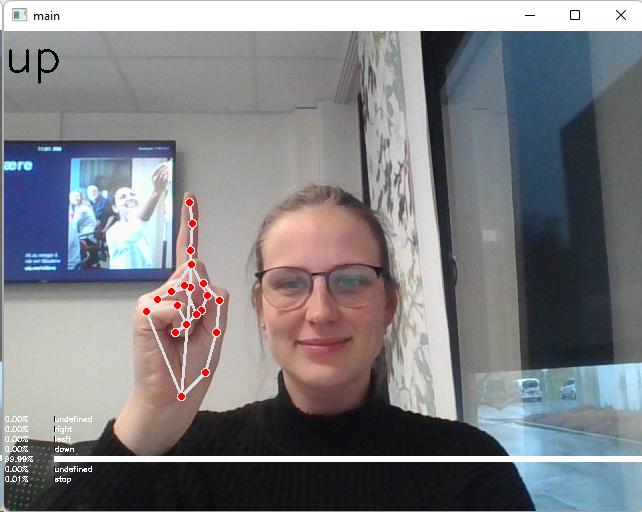

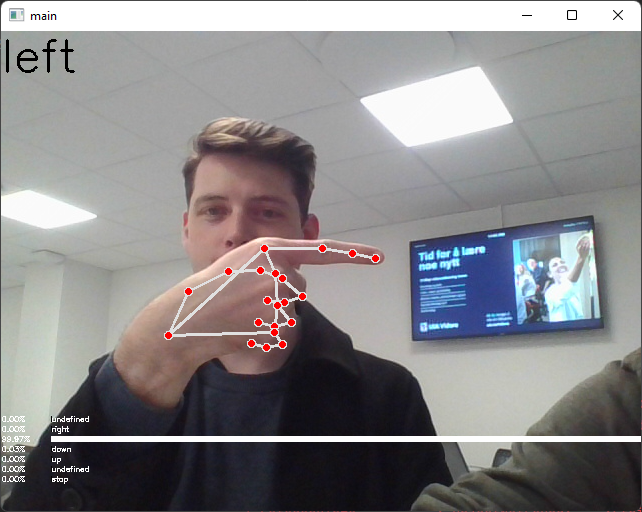

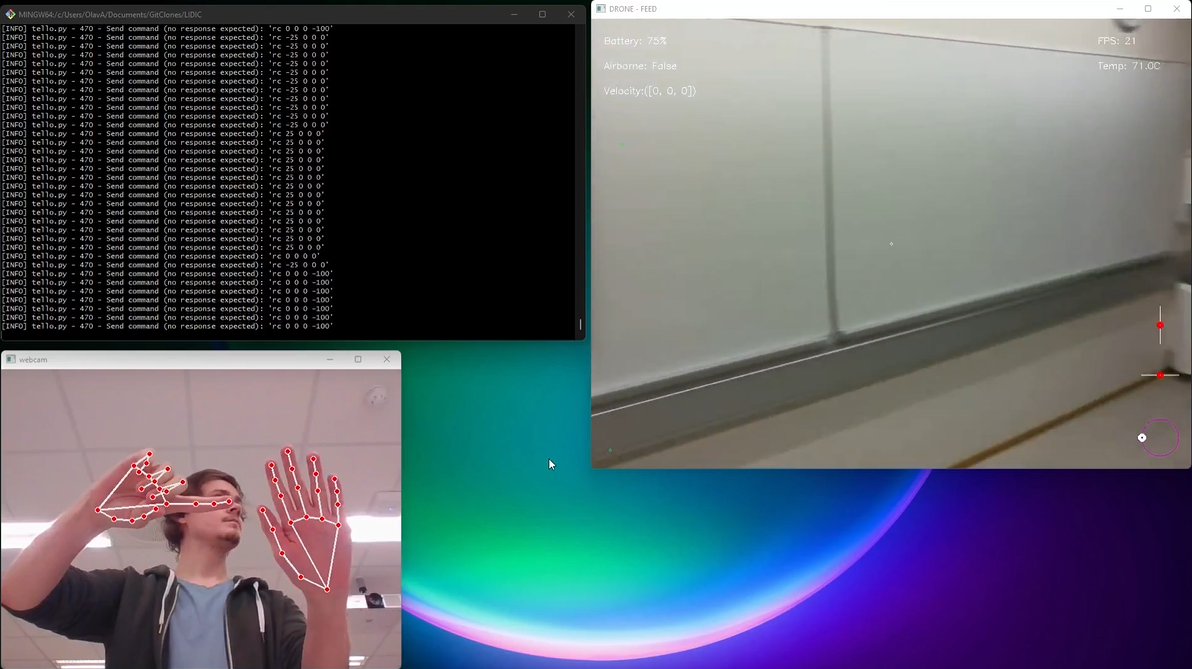

This repository uses machine vision and deep neural networks to recognize hand gestures and control a Tello EDU drone.

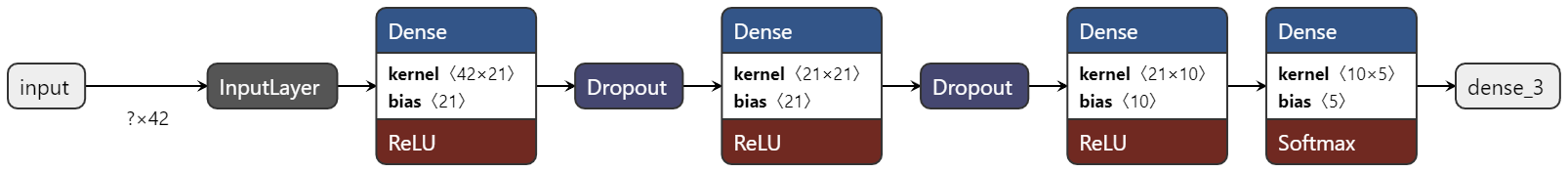

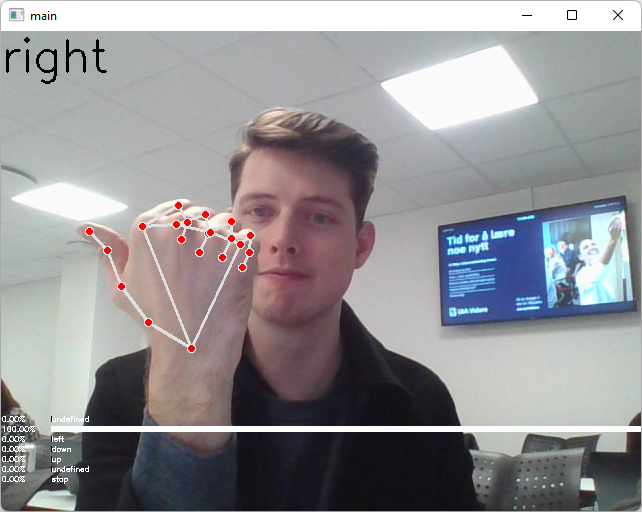

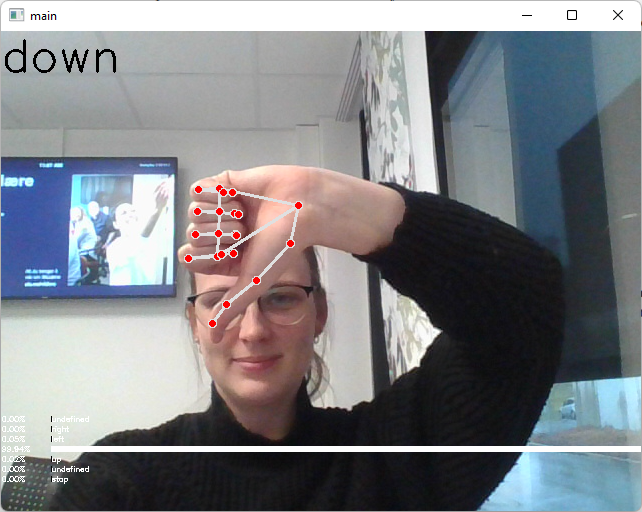

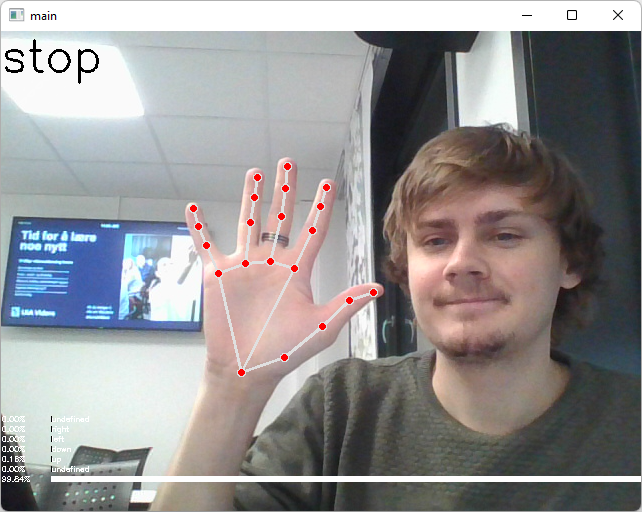

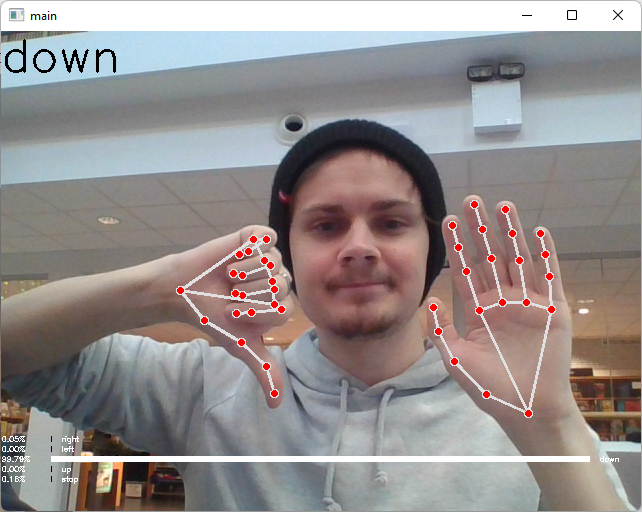

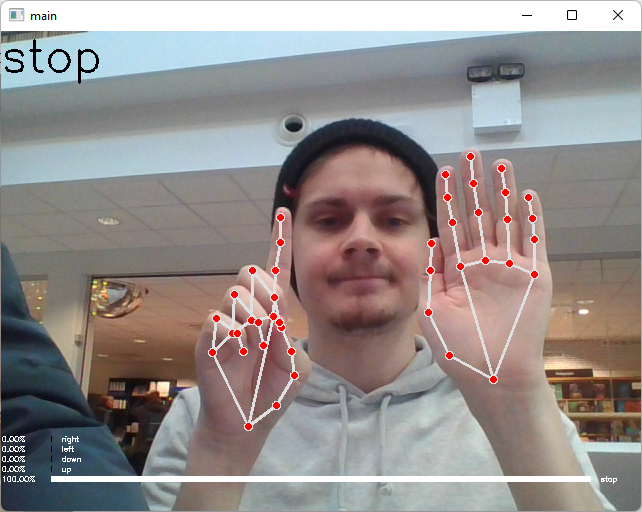

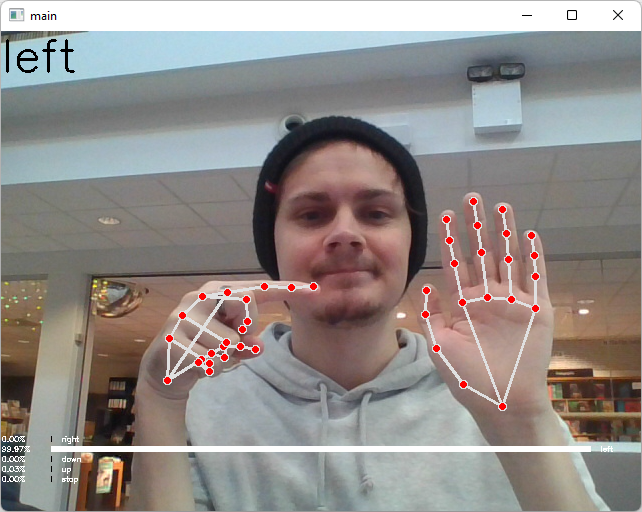

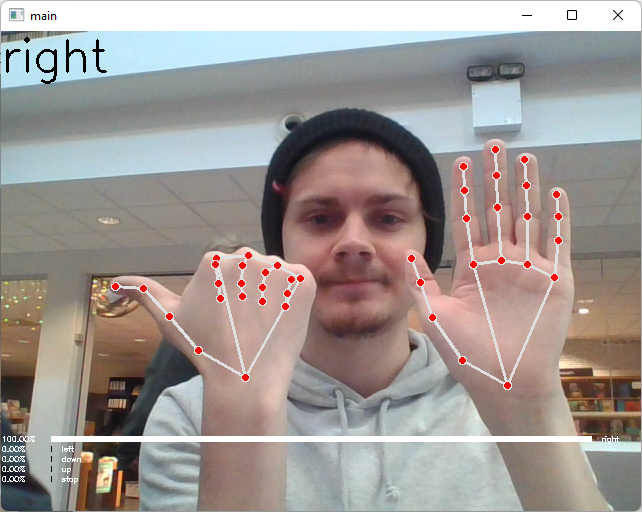

By default, the repository recognizes five gestures: stop, up, down, left, and right. The default model has an input shape of (42,) and an output shape of (5,), with one output per gesture.
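As a rough illustration of those shapes, here is a minimal sketch of how a (42,) input could be built and a (5,) output decoded. It assumes the 42 inputs are 21 hand landmarks with (x, y) coordinates each, which this README does not state. The label order, the `flatten_landmarks` and `decode_prediction` helpers, and the 0.6 confidence threshold are all hypothetical.

```python
import math

# Assumed label order; the repository's actual class order may differ.
GESTURES = ["stop", "up", "down", "left", "right"]


def flatten_landmarks(points):
    """Flatten 21 (x, y) pairs into a 42-value feature vector (assumed layout)."""
    if len(points) != 21:
        raise ValueError("expected 21 (x, y) landmark pairs")
    return [coord for point in points for coord in point]


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def decode_prediction(scores, threshold=0.6):
    """Map a 5-value score vector to a gesture name, or None if unsure."""
    if len(scores) != len(GESTURES):
        raise ValueError("expected one score per gesture")
    probs = softmax(scores)
    best = max(range(len(probs)), key=probs.__getitem__)
    return GESTURES[best] if probs[best] >= threshold else None
```

Returning `None` below the threshold is one way to avoid sending a command to the drone when no gesture is clearly recognized.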

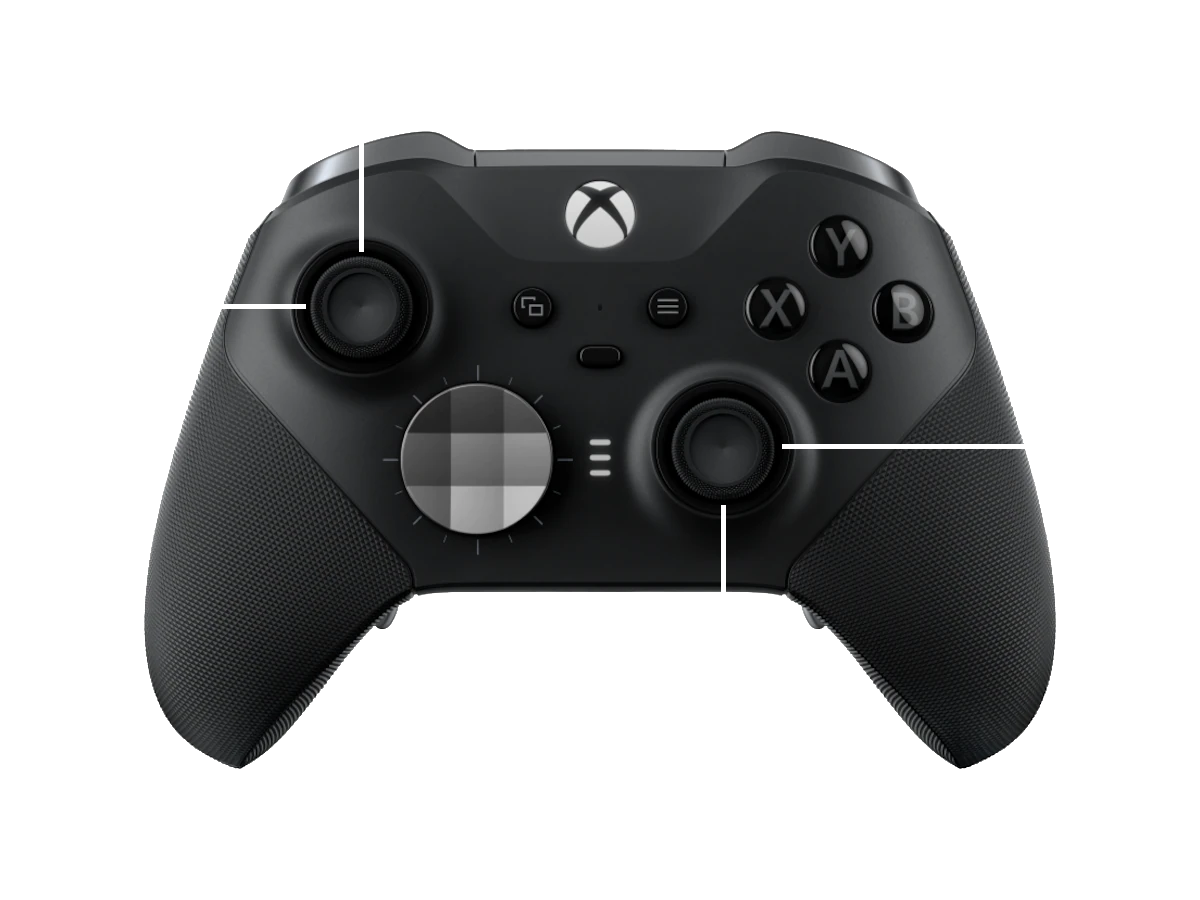

This repository also lets you control the drone with your keyboard or an Xbox controller, as well as by gestures. Gesture control is the focus of this repository.

Install the requirements needed to run the program. I am using a conda environment for easy setup.

`pip install -r requirements.txt`

Connect your computer to the drone's Wi-Fi access point.
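Once connected, you can check that the drone is reachable. The sketch below assumes the standard Tello SDK behavior, where the drone listens for UDP text commands at 192.168.10.1 on port 8889 and answers `ok` to `command`. The `enter_sdk_mode` helper is hypothetical, and this repository may use a library for this step instead.

```python
import socket

# Default address of the drone on its own Wi-Fi network (Tello SDK).
TELLO_ADDRESS = ("192.168.10.1", 8889)


def enter_sdk_mode(timeout=5.0):
    """Send "command" to the drone and report whether it answered "ok"."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.settimeout(timeout)
    try:
        sock.sendto(b"command", TELLO_ADDRESS)
        reply, _ = sock.recvfrom(1024)
        return reply.decode("utf-8", errors="replace").strip() == "ok"
    except OSError:
        # Covers timeouts and an unreachable network (not on the drone's Wi-Fi).
        return False
    finally:
        sock.close()
```

Calling `enter_sdk_mode()` returns `False` if the computer is not on the drone's network or the drone does not reply in time.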

Run the command below to start the program, choosing one of the controller options listed in the square brackets.

`python tello.py -c [keyboard, gesture, xbox_controller]`
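For readers curious how a flag like `-c` can be restricted to these three values, here is a minimal sketch using `argparse`. It is not taken from `tello.py`. The long option `--controller` and the helper names are hypothetical.

```python
import argparse

# The three controller options listed in the command above.
CONTROLLERS = ("keyboard", "gesture", "xbox_controller")


def build_parser():
    """Build a parser that accepts only the known controller types."""
    parser = argparse.ArgumentParser(description="Control a Tello drone.")
    parser.add_argument(
        "-c",
        "--controller",  # hypothetical long form of the -c flag
        choices=CONTROLLERS,
        required=True,
        help="input method used to fly the drone",
    )
    return parser


def parse_controller(argv=None):
    """Return the selected controller name from a list of arguments."""
    return build_parser().parse_args(argv).controller
```

With this sketch, `parse_controller(["-c", "gesture"])` returns `"gesture"`, and any value outside the three options makes `argparse` exit with a usage error.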

Controller Types:

- Xbox Controller:

- Keyboard:

A - Left

D - Right

W - Forward

S - Backwards

X - Takeoff / Land

R - Reset RC (sets the RC values to zero; use it when you want the drone to stay still)

- Gesture:

To land the drone and finish the program, press Q.
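The keyboard controls above can be sketched as a lookup from keys to Tello SDK text commands. This assumes the standard SDK commands `takeoff`, `land`, and `rc a b c d` (left/right, forward/backward, up/down, yaw, each from -100 to 100). The speed of 50 and the `command_for_key` helper are hypothetical and not taken from this repository.

```python
SPEED = 50  # hypothetical stick value; the SDK accepts -100 to 100

# Key -> (left/right, forward/backward, up/down, yaw)
KEY_TO_RC = {
    "a": (-SPEED, 0, 0, 0),  # left
    "d": (SPEED, 0, 0, 0),   # right
    "w": (0, SPEED, 0, 0),   # forward
    "s": (0, -SPEED, 0, 0),  # backwards
    "r": (0, 0, 0, 0),       # reset RC: all zeros, drone stays still
}


def command_for_key(key, flying):
    """Return the SDK command for a key press, or None for unmapped keys."""
    key = key.lower()
    if key == "q":
        return "land"  # the caller would also end the program here
    if key == "x":
        return "land" if flying else "takeoff"
    if key in KEY_TO_RC:
        return "rc {} {} {} {}".format(*KEY_TO_RC[key])
    return None
```

Keeping the mapping in a dictionary makes it easy to add keys for the up/down and yaw channels later.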

- File for actual drone control. Use this file when you want to connect to and control the drone.

- File for training and creating the gesture recognizer.

- File for classes and functions frequently used in the program.

- Graphics used across files and functions to draw information about the Tello drone and display it on a cv2 image feed.

- Different ways to control the drone, e.g. xbox_controller, gestures, and keyboard.

- Files that were used earlier in development to test different algorithms, e.g. for detecting shapes and isolating the hand.

- Different training sets to train the models on.

- Folder containing the different neural network models.