Chi Zhang*†, Zhao Yang*, Jiaxuan Liu*, Yucheng Han, Xin Chen, Zebiao Huang, Bin Fu, Gang Yu✦

(* equal contribution, † Project Leader, ✦ Corresponding Author)

ℹ️Should you encounter any issues

- [2024.2.8]: Added qwen-vl-max (通义千问-VL) as an alternative multi-modal model. The model is currently free to use but performs relatively poorly compared with GPT-4V.

- [2024.1.31]: Released the evaluation benchmark used during our testing of AppAgent.

- [2024.1.2]: 🔥Added an optional method for the agent to bring up a grid overlay on the screen to tap/swipe anywhere on the screen.

- [2023.12.26]: Added a Tips section for a better user experience; added instructions for using the Android Studio emulator for users who do not have Android devices.

- [2023.12.21]: 🔥🔥 Open-sourced the git repository, including the detailed configuration steps to implement our AppAgent!

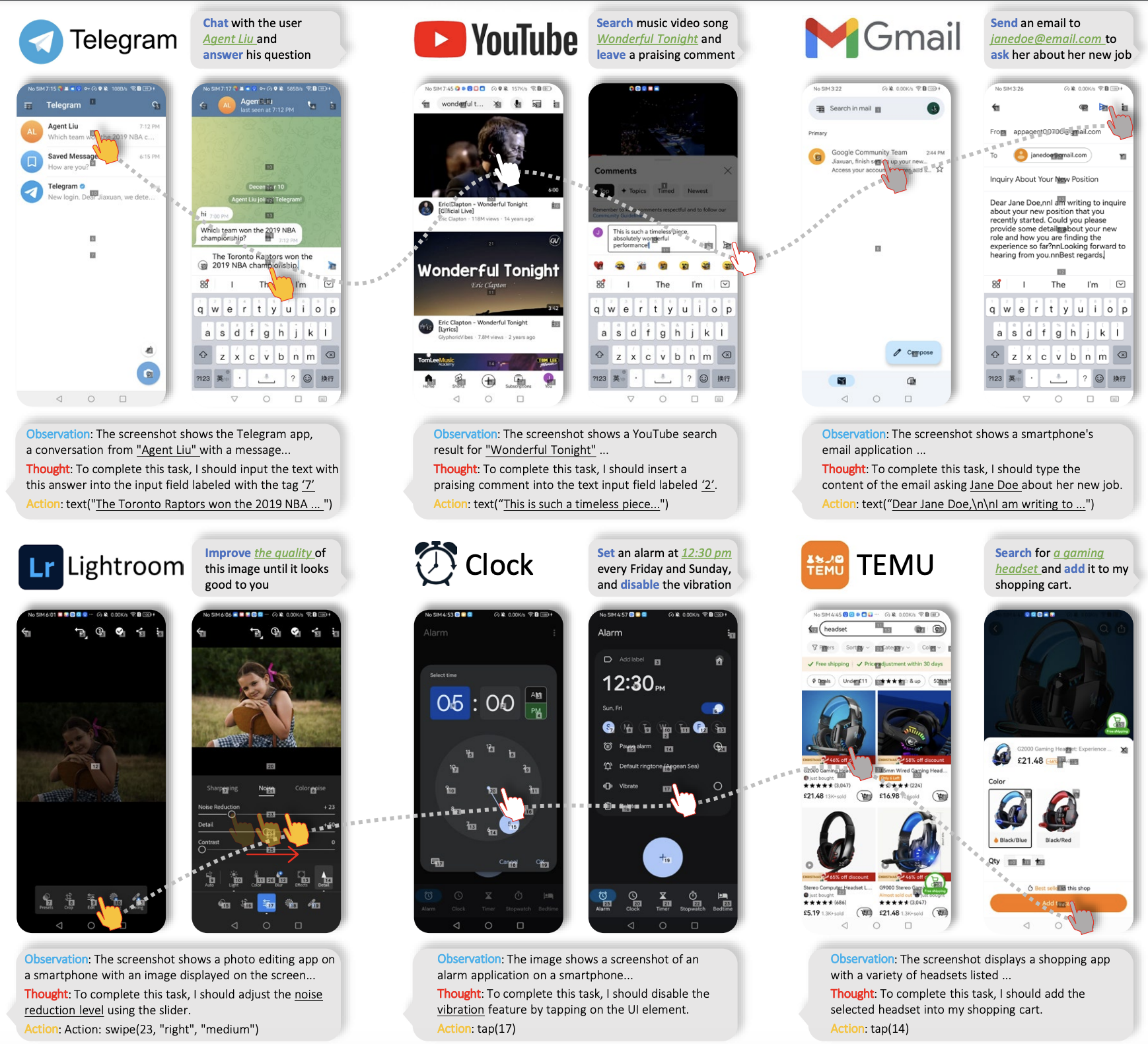

We introduce a novel LLM-based multimodal agent framework designed to operate smartphone applications.

Our framework enables the agent to operate smartphone applications through a simplified action space, mimicking human-like interactions such as tapping and swiping. This novel approach bypasses the need for system back-end access, thereby broadening its applicability across diverse apps.

Central to our agent's functionality is its innovative learning method. The agent learns to navigate and use new apps either through autonomous exploration or by observing human demonstrations. This process generates a knowledge base that the agent refers to for executing complex tasks across different applications.

The demo video shows the process of using AppAgent to follow a user on X (Twitter) in the deployment phase.

x_deploy_720p_1to1.mp4

An interesting experiment showing AppAgent's ability to pass CAPTCHA.

X_authenticate.mp4

An example of using the grid overlay to locate a UI element that is not labeled with a numeric tag.

grid_example.mp4

This section will guide you on how to quickly use gpt-4-vision-preview (or qwen-vl-max) as an agent to complete specific tasks for you on your Android app.

- On your PC, download and install Android Debug Bridge (adb), a command-line tool that lets you communicate with your Android device from the PC.

- Get an Android device and enable USB debugging, which can be found in Developer Options in Settings.

- Connect your device to your PC using a USB cable.

- (Optional) If you do not have an Android device but still want to try AppAgent, we recommend downloading Android Studio and using the emulator that comes with it. The emulator can be found in the device manager of Android Studio. You can install apps on an emulator by downloading APK files from the internet and dragging them onto the emulator. AppAgent can detect the emulated device and operate apps on it just as it would on a real device.

- Clone this repo and install the dependencies. All scripts in this project are written in Python 3, so make sure you have it installed.

cd AppAgent

pip install -r requirements.txt

AppAgent needs to be powered by a multi-modal model that can receive both text and visual inputs. During our experiments, we used gpt-4-vision-preview as the model to decide which actions to take to complete a task on the smartphone.
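With the prerequisites above in place, it can help to confirm that adb actually sees a device before starting the agent. The following is a minimal sketch, not part of AppAgent: the function names `parse_adb_devices` and `list_ready_devices` are hypothetical, and it assumes the `adb` executable is on your PATH.

```python
import subprocess
from typing import List


def parse_adb_devices(output: str) -> List[str]:
    """Return the serials that `adb devices` reports in the `device` state."""
    serials = []
    for line in output.splitlines():
        parts = line.split()
        # A ready device is listed as "<serial>  device". Entries such as
        # "unauthorized" or "offline" are not usable yet, and the header
        # line ("List of devices attached") never has "device" as its
        # second field, so it is skipped naturally.
        if len(parts) >= 2 and parts[1] == "device":
            serials.append(parts[0])
    return serials


def list_ready_devices() -> List[str]:
    """Run `adb devices` and return the serials that are ready to use."""
    result = subprocess.run(
        ["adb", "devices"], capture_output=True, text=True, check=True
    )
    return parse_adb_devices(result.stdout)
```

An empty list from `list_ready_devices()` usually means the cable, the USB debugging setting, or the emulator needs another look. A device shown as `unauthorized` typically needs the debugging prompt accepted on the phone.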

To configure your requests to GPT-4V, you should modify config.yaml in the root directory.

There are two key parameters that must be configured to try AppAgent:

- OpenAI API key: you must purchase an eligible API key from OpenAI so that you can have access to GPT-4V.

- Request interval: this is the time interval in seconds between consecutive GPT-4V requests to control the frequency of your requests to GPT-4V. Adjust this value according to the status of your account.

Other parameters in config.yaml are well commented. Modify them as you need.
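To illustrate what a request interval does, here is a minimal sketch of spacing out consecutive model requests. It is not the project's implementation: the class name `RequestThrottle` and its parameters are hypothetical, and the injectable `clock` and `sleep` arguments exist only to make the behavior easy to check.

```python
import time


class RequestThrottle:
    """Enforce a minimum gap, in seconds, between consecutive requests."""

    def __init__(self, interval_seconds, clock=time.monotonic, sleep=time.sleep):
        self.interval = interval_seconds
        self._clock = clock
        self._sleep = sleep
        self._last = None  # time of the previous request, if any

    def wait(self):
        """Sleep until the interval has elapsed; return the seconds slept."""
        waited = 0.0
        if self._last is not None:
            remaining = self.interval - (self._clock() - self._last)
            if remaining > 0:
                self._sleep(remaining)
                waited = remaining
        # Record the moment the next request is allowed to go out.
        self._last = self._clock()
        return waited
```

Calling `wait()` immediately before each request keeps the request rate at or below one per interval, while requests that are already far enough apart are not delayed.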

Be aware that GPT-4V is not free. Each request/response pair involved in this project costs around $0.03. Use it wisely.
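As a rough budgeting aid, the per-request figure above can be turned into an estimate for a whole run. This is only a sketch: the helper names are hypothetical, the number of requests a task needs is something you have to estimate yourself, and the price is the approximate figure quoted above, kept in whole cents to avoid floating-point rounding.

```python
COST_PER_REQUEST_CENTS = 3  # roughly $0.03 per request/response pair


def estimate_cost_cents(num_requests: int) -> int:
    """Approximate cost in cents for a given number of request/response pairs."""
    if num_requests < 0:
        raise ValueError("num_requests must be non-negative")
    return num_requests * COST_PER_REQUEST_CENTS


def format_dollars(cents: int) -> str:
    """Render a non-negative cent amount as a dollar string."""
    return f"${cents // 100}.{cents % 100:02d}"
```

For example, `format_dollars(estimate_cost_cents(20))` gives `$0.60` for a run that issues 20 requests; the 20 here is an arbitrary illustrative number, not a measured one.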

You can also try qwen-vl-max (通义千问-VL) as an alternative multi-modal model to power AppAgent. The model is currently free to use, but its performance in the context of AppAgent is poorer than that of GPT-4V.

To use it, create an Alibaba Cloud account and a Dashscope API key, then fill in the DASHSCOPE_API_KEY field in the config.yaml file. Change the MODEL field from OpenAI to Qwen as well.

If you want to test AppAgent using your own models, you should write a new model class in scripts/model.py accordingly.
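As a starting point for a custom model, the sketch below shows one plausible shape for such a class: a text prompt and screenshot paths go in, and a success flag plus text come out. The names `MultiModalModel`, `CannedModel`, and `get_response` are assumptions made for illustration; check scripts/model.py for the interface the project actually expects before adapting this.

```python
from abc import ABC, abstractmethod
from typing import List, Tuple


class MultiModalModel(ABC):
    """Hypothetical interface: prompt and screenshot paths in, text out."""

    @abstractmethod
    def get_response(self, prompt: str, image_paths: List[str]) -> Tuple[bool, str]:
        """Return (success, text); on failure, text carries the error message."""


class CannedModel(MultiModalModel):
    """Stand-in that returns a fixed reply, for dry runs without API calls."""

    def __init__(self, reply: str):
        self.reply = reply

    def get_response(self, prompt: str, image_paths: List[str]) -> Tuple[bool, str]:
        if not prompt:
            return False, "empty prompt"
        # A real model class would send the prompt and the images to its
        # backend here and return the backend's text response.
        return True, self.reply
```

A stand-in like `CannedModel` lets you exercise the surrounding plumbing before wiring in a real backend, since it costs nothing to call.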

Our paper proposes a novel solution that involves two phases, exploration and deployment, to turn GPT-4V into a capable agent that can help users operate their Android phones when given a task. The exploration phase starts with a task given by you, and you can choose to let the agent either explore the app on its own or learn from your demonstration. In both cases, the agent generates documentation for the elements it interacted with during the exploration or demonstration and saves it for use in the deployment phase.
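The hand-off between the two phases can be pictured as a small documentation store: the exploration phase writes one entry per UI element, and the deployment phase reads the entries back. The sketch below is an illustration under assumptions, not AppAgent's actual storage format: the JSON layout and the function names `save_element_doc` and `load_docs` are hypothetical, and element ids are assumed to be safe to use as file names.

```python
import json
from pathlib import Path
from typing import Dict


def save_element_doc(docs_dir: Path, element_id: str, description: str) -> Path:
    """Exploration side: persist the documentation for one UI element."""
    docs_dir.mkdir(parents=True, exist_ok=True)
    path = docs_dir / f"{element_id}.json"
    entry = {"element": element_id, "description": description}
    path.write_text(json.dumps(entry), encoding="utf-8")
    return path


def load_docs(docs_dir: Path) -> Dict[str, str]:
    """Deployment side: load all saved entries as {element_id: description}."""
    docs: Dict[str, str] = {}
    if not docs_dir.is_dir():
        return docs  # nothing was generated for this app yet
    for path in sorted(docs_dir.glob("*.json")):
        entry = json.loads(path.read_text(encoding="utf-8"))
        docs[entry["element"]] = entry["description"]
    return docs
```

Keeping one small file per element makes individual entries easy to inspect and revise by hand.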

This solution features fully autonomous exploration, which allows the agent to explore the use of the app by attempting the given task without any human intervention.

To start, run learn.py in the root directory. Follow the prompted instructions to select autonomous exploration as the operating mode and provide the app name and task description. Then, your agent will do the job for you. In this mode, AppAgent reflects on its previous action to make sure it adheres to the given task, and generates documentation for the elements explored.

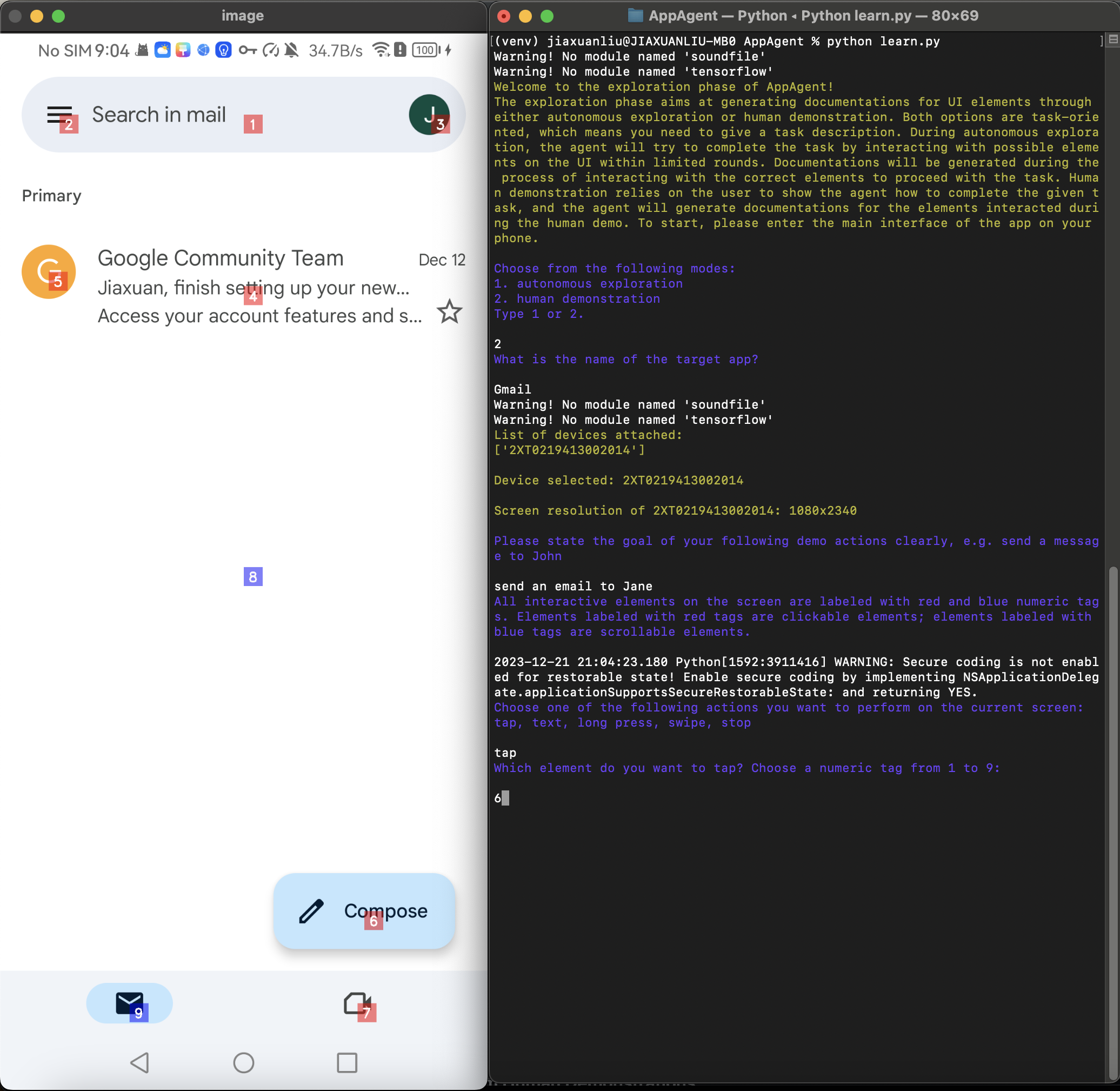

python learn.py

This solution requires users to demonstrate a similar task first. AppAgent will learn from the demo and generate documentation for the UI elements seen during the demo.

To start human demonstration, run learn.py in the root directory. Follow the prompted instructions to select human demonstration as the operating mode and provide the app name and task description. A screenshot of your phone will be captured, and all interactive elements shown on the screen will be labeled with numeric tags. Follow the prompts to determine your next action and the target of the action. When you believe the demonstration is finished, type stop to end the demo.

python learn.py

After the exploration phase finishes, you can run run.py in the root directory. Follow the prompted instructions to enter the name of the app, select the documentation base you want the agent to use, and provide the task description. Then, your agent will do the job for you. The agent automatically detects whether a documentation base has been generated for the app; if no documentation is found, you can still choose to run the agent without any documentation (success rate not guaranteed).

python run.py

- For an improved experience, you can let AppAgent undertake a broader range of tasks through autonomous exploration, or directly demonstrate more app functions to enhance the app documentation. Generally, the more extensive the documentation provided to the agent, the higher the likelihood of successful task completion.

- It is always good practice to inspect the documentation generated by the agent. If you find documentation that does not accurately describe the function of an element, you can revise it manually.
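Before running the deployment phase or reviewing documentation by hand, it can be useful to see which documentation bases exist for an app. The sketch below assumes a hypothetical layout in which each app has a directory whose sub-directories are documentation bases; the directory names used in the usage note and the functions `find_doc_bases` and `choose_doc_base` are illustrative, not taken from the project.

```python
from pathlib import Path
from typing import List, Optional


def find_doc_bases(app_dir: Path) -> List[str]:
    """Names of sub-directories of app_dir that contain at least one file."""
    if not app_dir.is_dir():
        return []
    return sorted(
        child.name
        for child in app_dir.iterdir()
        if child.is_dir() and any(p.is_file() for p in child.iterdir())
    )


def choose_doc_base(app_dir: Path, preferred: str) -> Optional[str]:
    """Pick the preferred base if present, else the first one found.

    Returns None when no documentation exists, in which case the caller
    can still decide to run without documentation.
    """
    bases = find_doc_bases(app_dir)
    if not bases:
        return None
    return preferred if preferred in bases else bases[0]
```

For instance, if an app directory holds a populated `auto_docs` folder and an empty `demo_docs` folder (both names made up here), `find_doc_bases` reports only `auto_docs`, which is also the place to look when inspecting or revising entries.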

Please refer to the evaluation benchmark.

- Incorporate more LLM APIs into the project.

- Open source the Benchmark.

- Open source the configuration.

@misc{yang2023appagent,
      title={AppAgent: Multimodal Agents as Smartphone Users},
      author={Chi Zhang and Zhao Yang and Jiaxuan Liu and Yucheng Han and Xin Chen and Zebiao Huang and Bin Fu and Gang Yu},
      year={2023},
      eprint={2312.13771},
      archivePrefix={arXiv},
      primaryClass={cs.CV}
}

The MIT license.